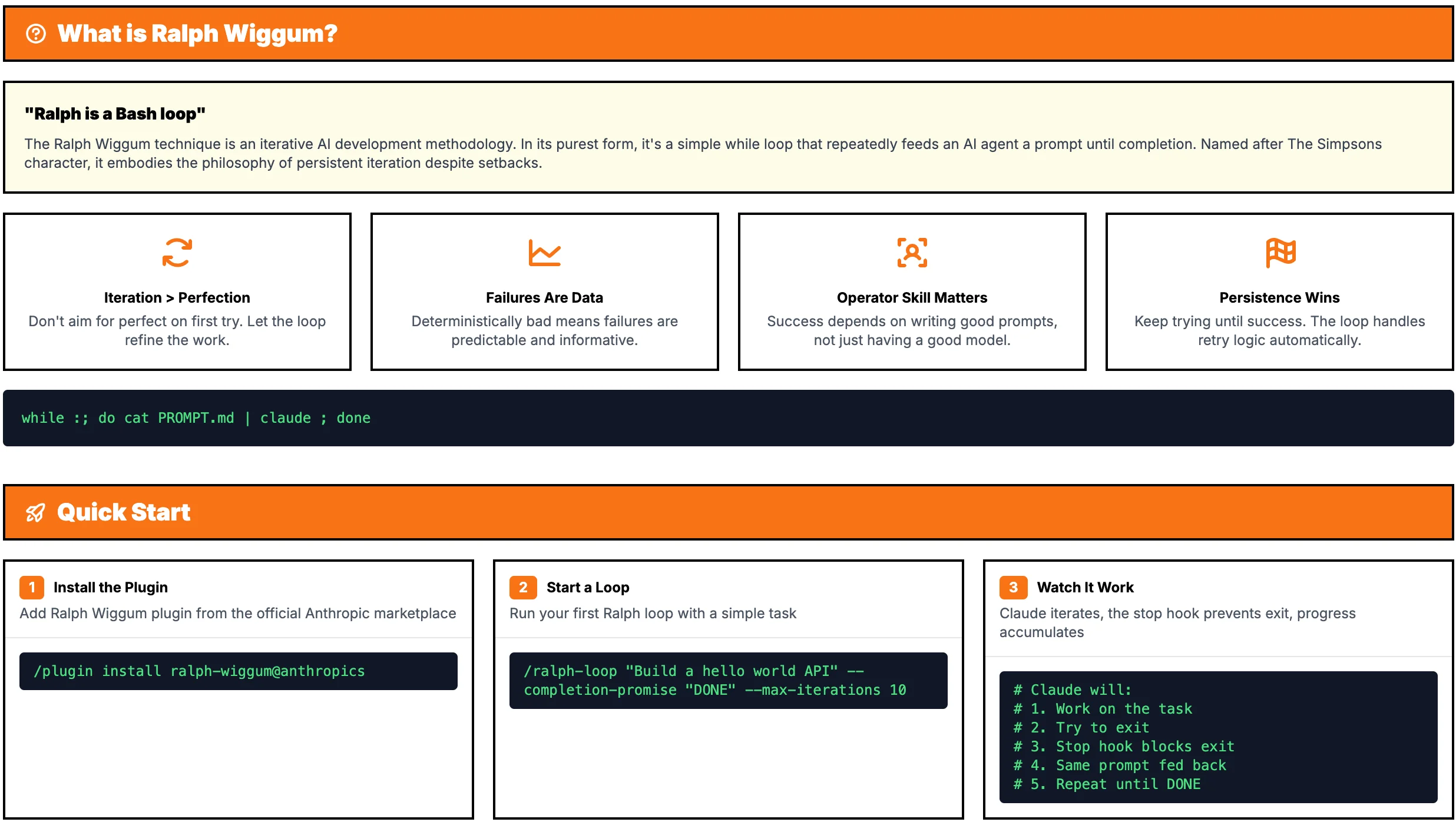

A lot of people encountering the Ralph Wiggum technique ask the wrong question. They want to know whether it’s a clever prompt, a hidden Claude Code feature, or some weird self-improvement trick. The Reddit confusion is useful because it exposes the real mistake: people are reviewing the wrapper when the real story is the control loop. The Ralph Wiggum technique matters because it externalizes progress into machine-checkable feedback. This is not a new model feature; it’s an Anthropic Claude Code plugin pattern implemented via a stop hook.

That’s the whole game. Not “make Claude reason better.” Not “find the magic incantation.” Ralph shows that agentic coding progress comes more from better verifiers than better prompts. You keep the objective stable, let the repo state change, and force the agent to face test results, file diffs, and an exact stop condition until it either succeeds or times out. Ordinary prompting asks the operator to keep restating the task more clearly; Ralph asks the operator to define “done” so clearly that the machine can’t bluff.

What the Ralph Wiggum technique actually changes

In plain English, the Ralph Wiggum technique turns Claude Code into an iterative coding agent with a gate on the exit. The prompt stays the same. The workspace does not.

A completion promise is just an exact string the loop looks for to decide whether to stop, and the stop hook is the part that intercepts exit until the real condition has been met. Claude keeps working against the same task while seeing the consequences of its earlier attempts in the files, test output, and git history. So the “improvement” is not hidden inside the model. It comes from environmental feedback.
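A minimal sketch of the exact-match idea, assuming the promise is wrapped in a <promise> tag as in the FIXED example used in this article. The function name and regex are illustrative assumptions, not the plugin’s actual implementation.

```python
import re

# Assumed tag format, modeled on <promise>FIXED</promise>; not taken from the plugin source.
PROMISE_PATTERN = re.compile(r"<promise>(.*?)</promise>", re.DOTALL)


def promise_matches(output: str, expected: str) -> bool:
    """Return True only if some promise tag in `output` contains exactly `expected`."""
    return any(text == expected for text in PROMISE_PATTERN.findall(output))
```

The comparison is deliberately strict: a lowercase “fixed”, or prose that merely claims the work is done, does not count as the stop signal.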

The surprising part is how little of this depends on Claude being especially clever. Give the same model and the same prompt two different verifiers and you get two different systems. In one repo, “fix auth refresh and emit <promise>FIXED</promise> when 12 tests pass” produces convergence because every failure says something specific. In another, “make auth more robust” plus a vague smoke test produces flailing because the loop has nothing sharp to optimize against.

That difference is the real mechanism. Ralph relocates operator skill from prompt wording to verification design. If your tests are sharp, the loop looks smart. If your tests are mushy, the loop turns into expensive improvisation.

Here’s the comparison people usually miss:

| Approach | Main input | Feedback source | Stop condition | Common failure mode |
|---|---|---|---|---|
| Prompting harder | Better wording from the operator | Human judgment in chat | “Looks done” | Endless clarification |
| Tightening verification | Stable task plus machine checks | Tests, linters, exact diffs, thresholds | Completion promise plus passing checks | Stops only when verifier is weak |

That table is why Ralph matters beyond Ralph. It gives you a clean A/B test of where capability is actually coming from.

How the loop works inside Claude Code

The official plugin runs the loop inside the current Claude Code session. That detail matters because it explains why this feels different from manually retrying a prompt in chat.

Operationally, it’s pretty small:

- Claude gets a fixed objective. For example: fix a bug, run tests, output <promise>FIXED</promise> only when the relevant checks pass.

- Claude edits files and runs commands. It changes code, maybe adds a test, then executes the test suite or linter.

- Claude tries to stop. Maybe it thinks the task is done. Maybe it just wants to exit after making progress.

- The stop hook checks reality. If the completion promise hasn’t appeared under the right conditions, or the checks still fail, the session does not actually end.

- The next iteration starts with new evidence. Claude now sees modified files, fresh errors, and what it already tried.

- The loop ends only on a hard condition. Either Claude emits the exact completion promise and satisfies the verifier, or the max-iterations cap fires.
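The steps above can be sketched as a small control loop. This is an illustration under stated assumptions, not the plugin’s code: `run_agent` and `checks_pass` are hypothetical callables standing in for one agent turn and for the verifier, and the default promise string and iteration cap are placeholders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoopResult:
    succeeded: bool
    iterations: int


def ralph_loop(
    prompt: str,
    run_agent: Callable[[str], str],   # hypothetical: runs one agent turn, returns its output
    checks_pass: Callable[[], bool],   # hypothetical: runs the verifier, e.g. the test suite
    promise: str = "<promise>FIXED</promise>",
    max_iterations: int = 10,
) -> LoopResult:
    """Re-run the same prompt until the promise appears AND the checks pass, or the cap fires."""
    for iteration in range(1, max_iterations + 1):
        # The prompt never changes; only the workspace the agent sees does.
        output = run_agent(prompt)
        # A promise alone is not enough: the verifier has to agree.
        if promise in output and checks_pass():
            return LoopResult(succeeded=True, iterations=iteration)
    return LoopResult(succeeded=False, iterations=max_iterations)
```

Requiring both the promise and a passing verifier is the design choice that matters here: an agent that announces success early simply gets sent back around the loop.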

Why does this matter? Because Ralph converts hidden failure into observable state transitions. Instead of “the model seems confused,” you get a sequence you can inspect: failing test, code change, narrower failure, passing test, stop. Once failure is visible in the environment, it becomes legible, reproducible, and eventually automatable.

The obvious reader question is: wait, if the prompt never changes, why doesn’t Claude just repeat the same bad move forever? Good question. Sometimes it does. That’s not a bug in the explanation. It’s the point of the pattern. The Claude Code loop only works when the environment supplies useful error signals. Specific failing tests, exact assertion messages, and narrow diffs push the agent toward convergence. Vague feedback gives you a self-referential loop with nicer branding.

And that leads to the non-obvious implication: teams trying to get more out of coding agents should spend less time tuning tone and more time redesigning tasks so failure can be checked by a script. If a task cannot produce a crisp red/green signal, the agent is being asked to improvise in the dark.

Why it works for tests, and why it fails for judgment

Ralph is strongest when a shell command can grade the result.

That sounds almost too simple, but it’s the practical dividing line between “surprisingly effective” and “why is this thing burning tokens while making everything worse?” The docs and community guides point to the same pattern: the Ralph Wiggum technique shines when success is machine-checkable and bounded.
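As a rough sketch of what “a shell command can grade the result” means in practice, the helper below treats exit code 0 as a pass and anything else as a failure. The function name and the timeout default are assumptions for illustration; the command you pass in would be your project’s own test or lint command.

```python
import subprocess
import sys


def run_verifier(command: list[str], timeout_s: float = 600.0) -> tuple[bool, str]:
    """Run a grading command. Exit code 0 means pass; the combined output is the feedback."""
    try:
        proc = subprocess.run(command, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        return False, f"verifier timed out after {timeout_s} seconds"
    return proc.returncode == 0, proc.stdout + proc.stderr


if __name__ == "__main__":
    # Stand-in for a real test command; this one prints a message and exits non-zero.
    passed, output = run_verifier(
        [sys.executable, "-c", "import sys; print('1 test failed'); sys.exit(1)"]
    )
    print(passed, output.strip())
```

The returned output matters as much as the boolean: it is the evidence the next iteration gets to work from.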

A good Ralph task looks like this:

- Good: “Fix token refresh in auth.ts and pass 12 auth tests.”

- Stable verifier: yes

- Bounded stop condition: yes

- Overbuilding risk: low

A bad Ralph task looks like this:

- Bad: “Make the onboarding flow delightful.”

- Stable verifier: no

- Bounded stop condition: no

- Overbuilding risk: high

What breaks in the second case is not mysterious.

First, there’s no stable verifier. You can test whether a route returns 200. You can’t directly test whether a signup flow feels “delightful” unless you’ve translated taste into proxies.

Second, there’s no clean stop condition. A completion promise needs a crisp end state. FIXED works when tests pass. It does not work when the task is “be more elegant.”

Third, open-ended loops tend to overbuild. Give an agent an aesthetic or product task without a boundary and it will keep “improving” things because nothing in the environment tells it to stop.

The boundary gets clearer with a UI example. “Make the checkout page feel cleaner” is a terrible Ralph task. But “reduce CLS below 0.1, keep Lighthouse accessibility above 95, and do not change the number of checkout steps” is suddenly plausible. Same product area. Totally different verifier quality.
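To make the checkout example concrete, here is a hedged sketch of a gate over those three thresholds. The metric keys (`cls`, `accessibility`, `checkout_steps`) are hypothetical names; how the numbers are collected, for instance from a Lighthouse run, is outside the sketch.

```python
def checkout_gate(metrics: dict[str, float], baseline_steps: int) -> list[str]:
    """Return the list of failed checks; an empty list means the gate is green."""
    failures = []
    if metrics["cls"] >= 0.1:
        failures.append(f"CLS is {metrics['cls']}, must be below 0.1")
    if metrics["accessibility"] <= 95:
        failures.append(f"accessibility is {metrics['accessibility']}, must be above 95")
    if metrics["checkout_steps"] != baseline_steps:
        failures.append(
            f"checkout has {metrics['checkout_steps']} steps, expected {baseline_steps}"
        )
    return failures
```

Each failure message names the metric and the threshold it missed, which is the kind of specific signal a loop can act on.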

The same trick works for tasks that look subjective at first glance. “Rewrite this onboarding copy to sound better” is not Ralph-compatible. But “rewrite the error messages, keep each under 80 characters, preserve the existing variables and placeholders, and pass snapshot tests for the 14 fixture states” is. You haven’t solved taste. You’ve converted part of the task into something the loop can actually judge.
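A sketch of the mechanical half of that error-message task, assuming messages are stored as a key-to-string mapping and use {name}-style placeholders (both assumptions for illustration). Snapshot tests would run separately.

```python
import re

# Assumes {name}-style placeholders; adjust the pattern for other template syntaxes.
PLACEHOLDER = re.compile(r"\{[A-Za-z_][A-Za-z0-9_]*\}")


def check_rewrite(original: dict[str, str], rewritten: dict[str, str], limit: int = 80) -> list[str]:
    """Return problems found in the rewritten messages; an empty list means all checks pass."""
    problems = []
    for key, old in original.items():
        new = rewritten.get(key)
        if new is None:
            problems.append(f"{key}: missing from rewrite")
            continue
        if len(new) >= limit:
            problems.append(f"{key}: {len(new)} chars, must be under {limit}")
        # Compare placeholders as sorted lists so reordering is allowed but loss is not.
        if sorted(PLACEHOLDER.findall(old)) != sorted(PLACEHOLDER.findall(new)):
            problems.append(f"{key}: placeholders changed")
    return problems
```

None of this judges whether the copy reads better. It only pins down the parts of the task that a script can grade.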

Refactoring is another good example. “Clean up this module” is agent catnip in the worst way. It sprawls. But “split billing_service.rb into two files, preserve public method signatures, and pass the existing contract tests plus snapshot diffs on generated invoices” is suddenly bounded enough to loop on.

Here’s the mini-checklist that actually matters:

- If you cannot express done as a command, a threshold, or an exact diff expectation, don’t use Ralph.

- If failure messages are too vague to suggest a next move, don’t use Ralph.

- If the task can expand into “while we’re here, let’s also…”, don’t use Ralph without a hard cap.

This is why the Ralph Wiggum technique tends to do well on:

- bug fixes

- test-driven development

- refactors with strong coverage

- linting and formatting cleanup

- small greenfield tasks with exact acceptance tests

And poorly on:

- product strategy

- naming and copy choices without fixtures

- UI feel without measurable proxies

- architecture debates with multiple valid answers

- anything requiring stakeholder judgment

The interesting part is bigger than Ralph itself. A lot of what people call agent intelligence is really verification quality in disguise. Tighten the checks and the same model suddenly looks capable. Remove the checks and it looks flaky, verbose, and weirdly sure of itself.

When to use the Ralph Wiggum technique instead of a normal prompt

You do not need a philosophy of autonomous agents here. You need a decision rule.

| Question | If yes | If no |
|---|---|---|
| Can a script grade success? | Ralph is plausible | Use a normal prompt or human review |
| Is the scope bounded? | Ralph can converge | The loop may sprawl |
| Do you have a hard max-iterations cap? | Run the loop | Don’t automate retries |
| Can you write an exact completion promise? | The stop condition is real | The loop will blur “done” |

That rule is grounded enough to be useful: require a scriptable verifier, a bounded scope, and a hard max-iterations cap before you start. Without those, the Ralph Wiggum technique is mostly a way to spend more tokens on a vague task.
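The decision rule is simple enough to write down as a pre-flight check. The sketch below is one possible encoding of the four questions; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskProfile:
    script_can_grade: bool
    scope_is_bounded: bool
    has_max_iterations: bool
    has_exact_promise: bool


def ralph_blockers(task: TaskProfile) -> list[str]:
    """Return the reasons not to loop on this task; an empty list means Ralph is plausible."""
    blockers = []
    if not task.script_can_grade:
        blockers.append("no scriptable verifier: use a normal prompt or human review")
    if not task.scope_is_bounded:
        blockers.append("unbounded scope: the loop may sprawl")
    if not task.has_max_iterations:
        blockers.append("no max-iterations cap: don't automate retries")
    if not task.has_exact_promise:
        blockers.append("no exact completion promise: the loop will blur 'done'")
    return blockers
```

For the earlier examples, the auth-refresh bug fix would come back with no blockers, while “make the onboarding flow delightful” would fail at least the first two questions.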

The trade-offs are real, and they’re not side notes:

- Token use: higher, because repeated attempts are the whole point

- Context retention: can degrade over long loops, especially when failures generate huge noisy outputs

- Operator skill: matters more than people think, because a bad verifier produces a bad loop

- Automation upside: high, because once failure is legible, it becomes reproducible and schedulable

That last point is the part people underrate. Ralph doesn’t just help the model iterate. It helps the operator build a task that can be handed off at all. A weak prompt can be rescued by a patient human. A weak verifier can’t. The moment you formalize a completion promise, a stop hook, and a machine-checked definition of done, you’ve turned vague supervision into something closer to software.

You can already see this idea escaping the original plugin. Projects like SimpleLLMs – Simple LLM Suite explicitly extend the Ralph pattern into more specialized looped agents. That’s useful evidence. The durable idea was never the name or the persona. It was the control architecture: fixed objective, environmental feedback, hard stop condition.

That also explains why the pattern connects cleanly to other Claude Code failure stories. “Claude Code lost its thinking budget” is really about what happens when the loop’s effective reasoning budget gets squeezed, and “AI agent hack” is about what happens when the loop around the model is the weak point. Different incidents, same lesson: the wrapper logic is not secondary. It is the system.

Key Takeaways

- The Ralph Wiggum technique is a control loop, not a prompt trick. The prompt stays constant; the repo state and test evidence change.

- It is not a model feature. It’s an Anthropic Claude Code plugin pattern built around a stop hook and a completion promise.

- Ralph shows that agentic coding progress comes more from better verifiers than better prompts.

- A completion promise is just an exact-match stop signal. Write it so the loop can end cleanly when the machine-verifiable goal is met.

- This pattern is strongest when a script can grade success. That makes it ideal for TDD, bug fixes, and bounded refactors.

Further Reading

- Ralph Wiggum Plugin: Official Claude Code plugin README describing the loop, stop hook, completion promise, and practical constraints.

- Ralph Wiggum – AI Loop Technique for Claude Code: Community guide explaining Ralph as a Bash-style loop and where it tends to work best.

- SimpleLLMs – Simple LLM Suite: Open-source project extending the Ralph pattern into more specialized agent workflows.

- Reddit discussion context for Ralph Wiggum: Useful mostly because the confusion and criticism reveal what people misunderstand about the technique’s real value.

The mistake is thinking Ralph makes the model more clever. If your agent only looks smart when a human keeps re-explaining the task, your real bottleneck is not prompting. It’s the missing verifier.