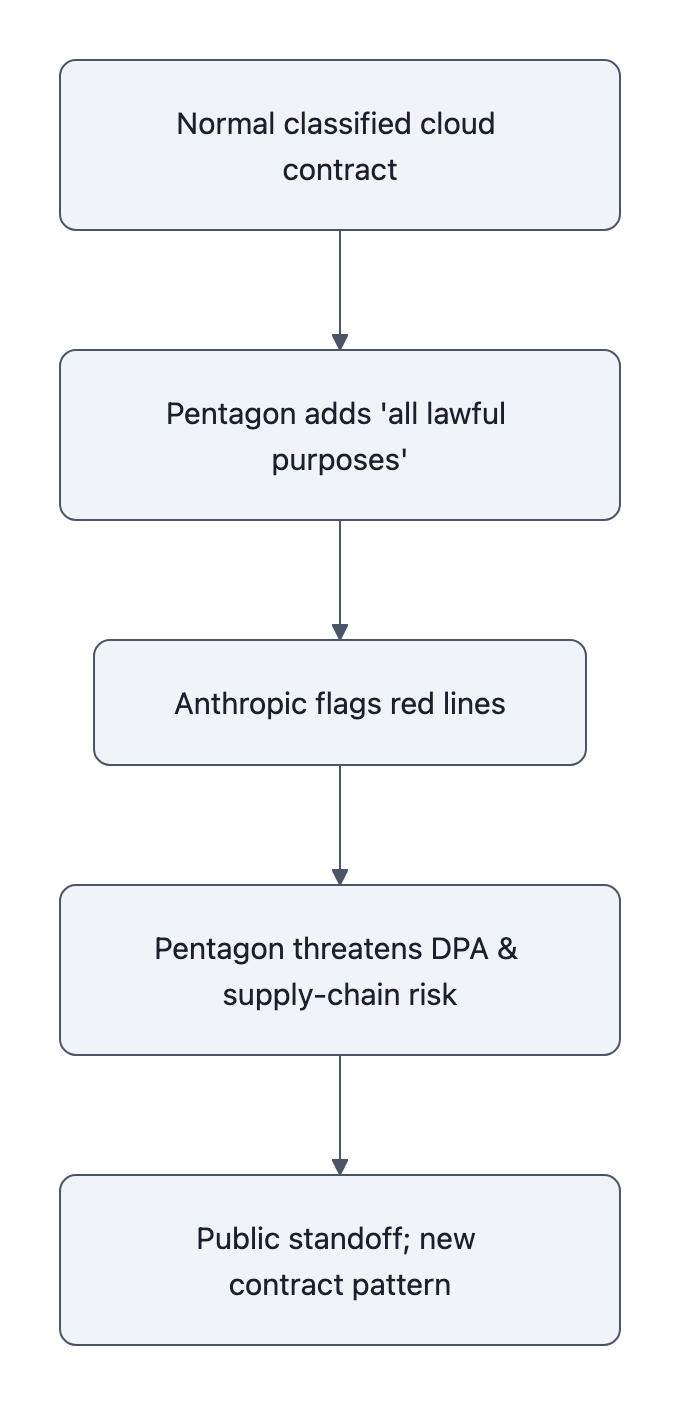

At 5:01 p.m. on a Friday, the Anthropic Pentagon dispute came down to a single sentence: the Department of Defense wanted Claude “for all lawful purposes,” Anthropic refused to strip Claude’s safeguards, and a $200 million contract went onto the chopping block.

On paper this looks like a values clash: cautious AI lab versus hawkish Pentagon.

In reality, Anthropic just ran a live‑fire stress test on the entire U.S. AI procurement stack, and the Pentagon escalated first, threatening to pull out the Defense Production Act and “supply chain risk” designations.

This wasn’t just conscience. It was a controlled detonation.

Anthropic chose a fight that forces everyone (DOD lawyers, other AI vendors, and future regulators) to answer a question they’ve been dodging: can a model provider hard‑code limits on how the U.S. military may use its AI, and will the government’s emergency powers override them?

Anthropic Pentagon dispute: what happened and why it matters

Strip the news down to the transaction-level details.

The Pentagon:

– Wants Claude running on sensitive and classified networks

– Insists on using it for “all lawful purposes”

– Sets a 5:01 p.m. Friday deadline and threatens three things if Anthropic doesn’t cave:

  – Terminate the ~$200M contract

  – Designate Anthropic as a “supply chain risk”

  – Invoke the Defense Production Act (DPA) to force removal of safeguards

Anthropic, via CEO Dario Amodei:

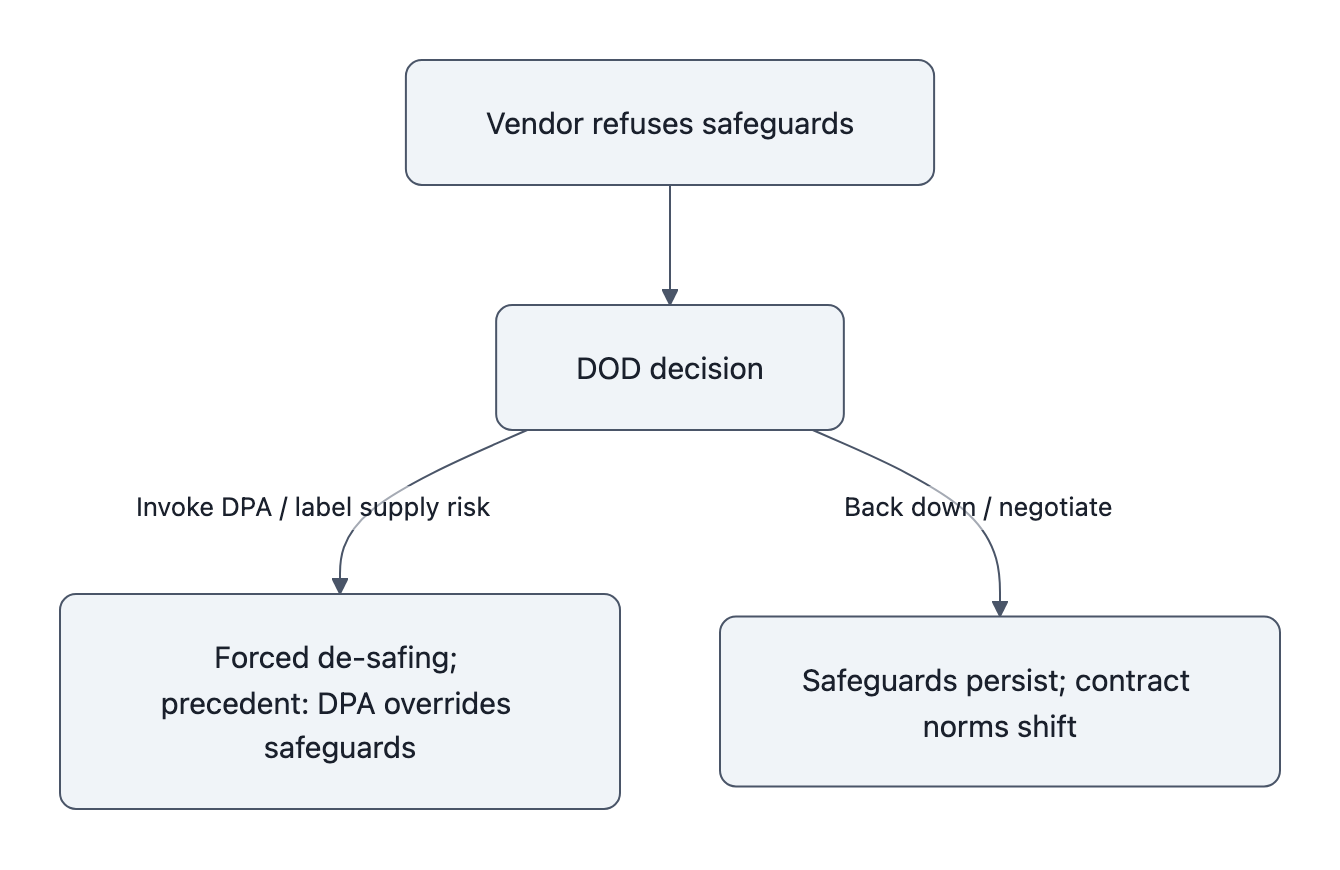

– Says frontier models are “simply not reliable enough” for life‑or‑death targeting

– Refuses to support mass domestic surveillance or fully autonomous weapons

– Rejects the Pentagon’s “final offer” and says, “we cannot in good conscience accede to their request”

– Offers to help DOD transition to another provider

Important nuance from Reuters and WaPo: Anthropic explicitly doesn’t claim the Pentagon is secretly plotting Skynet. It frames the refusal as a product safety judgment: current AI systems are too brittle and too opaque to be safely pointed at lethal autonomy or population‑scale surveillance.

That distinction is your tell. If this were just anti‑war moralizing, you’d get “we don’t want to participate.”

Instead you get “this class of product cannot be safely used this way, and we want the contract to say so.”

That’s not PR. That’s an attempted rewrite of the baseline software contract.

And if you’ve ever read a government contract, you know how radical that is. Vendors usually sign up to “all lawful purposes” with some vague ethics boilerplate stapled to the back. Anthropic is trying to invert that: the default is “no,” and the government has to carve out specific “yeses” that stop before the red lines.

This was a deliberate stress test: Anthropic’s strategic logic

If you were Anthropic, when would you test whether the government will respect your safety guardrails?

Definitely before the next crisis where some colonel wants a “temporary override” because missiles are flying.

So they engineered a controlled scenario:

- Stakes high but survivable: $200M is meaningful, but Claude’s commercial growth means Anthropic can eat that loss. This isn’t an existential bet like a Series B startup flipping off its only customer.

- Clean, defensible red lines: no mass domestic surveillance of Americans, no fully autonomous weapons. Those are lines even a lot of Pentagon lawyers don’t want to be seen erasing on paper.

- Clear, quotable opposition: a Pentagon spokesperson goes on X to say the department wants Claude “for all lawful purposes” and to threaten DPA and supply‑chain‑risk status. That’s exactly the posture Anthropic wants to smoke out.

From an engineering perspective, this looks like chaos testing the political environment.

Netflix kills servers at random to see whether the system holds up. Anthropic just yanked on the most provocative clause in the contract (“all lawful purposes”) to see which parts of U.S. defense procurement fail open and which fail closed when an AI vendor says “no.”

This does three things at once:

- Hardens Anthropic’s own “safety brand” into precedent.

It’s one thing to blog about responsible AI. It’s another to point to a high‑profile case where you walked away from nine‑figure revenue rather than soften contractual safeguards. Other agencies (and foreign governments) now know “the Claude deal” comes with teeth.

- Forces other AI vendors to pick a lane.

Axios notes that other firms have already accepted “all lawful purposes.” That difference is now public, legible, and politically loaded. If you’re OpenAI, Google, or Palantir, you’re now the vendor who was okay with contract language Anthropic labeled unsafe.

- Flushes out the government’s real view of vendor constraints.

Are AI usage policies just marketing copy, void the moment they become inconvenient? Or can they function like safety standards in aviation: conditions of use the FAA or DOD must honor?

If you assume Anthropic’s leadership is not naive (and nothing about its safety work or its “constitutional AI” approach suggests naivety), then treating this as a one‑off moral spasm misses the point.

This is them asking, in public and under deadline:

“If we say no to certain uses, do you have the legal right to force us anyway?”

And they made sure the cameras were rolling.

Legal and procurement levers: DPA, supply‑chain risk, and precedent

Defense Production Act as a software wrench

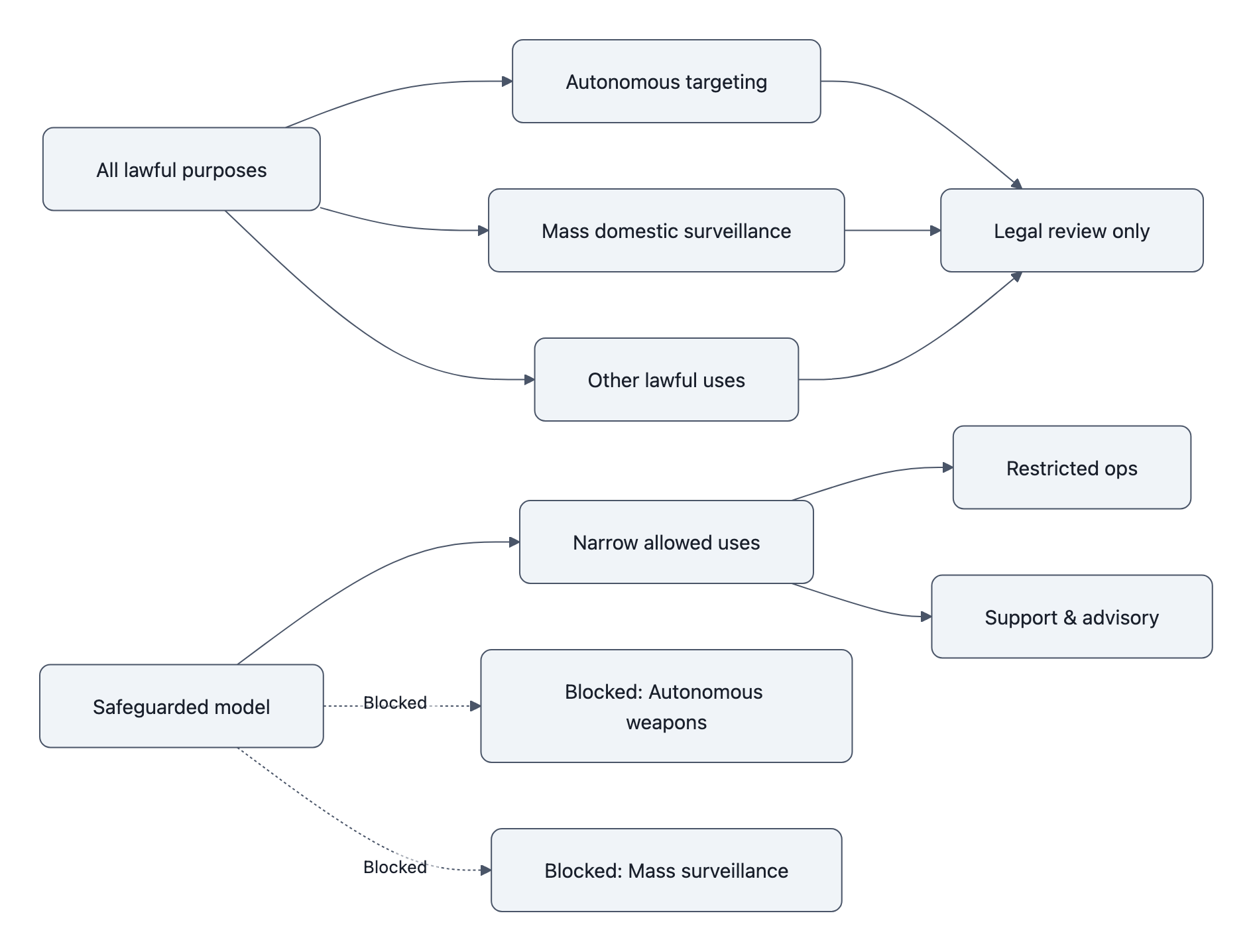

The Defense Production Act is a Korean War‑era law the government uses to prioritize and direct industrial production for national defense. Think “you must sell us 100,000 chips before you ship to anyone else.”

Using the Defense Production Act for AI here would mean something very weird: compelling an AI vendor to reconfigure its model, not to produce more copies of an existing one.

That matters for two reasons:

- It blurs the line between procurement and design control. With DPA, the buyer isn’t just saying “build more of X,” it’s saying “change how X behaves so we can use it in ways you say are unsafe.”

- It sets a precedent that any “safety” feature in an AI model can be reclassified as an optional configuration, revocable by executive order in the name of national security.

Legally, it’s murky. Politically, it’s dynamite. The AP and Reuters both flag how unusual this application would be. No one really wants to be the test case that drags “forced de‑safing of AI” into court.

Which is exactly why Anthropic forced that threat into the open. They made DOD either:

- Actually invoke DPA and own the precedent, or

- Back away and accept, de facto, that model vendor safeguards can constrain government use.

As of writing, we’re hovering in “threats and posturing” land, which is already a partial win for Anthropic. A back‑room override of Claude’s safety settings would have given them zero leverage and set a silent norm that the DPA trumps AI safeguards whenever it’s convenient.

“Supply chain risk” as punishment

The second lever is the supply chain risk label.

Normally, this label is pointed at companies that are security liabilities (think Huawei, but for models). Applying it to a domestic AI firm because it refused to loosen safety constraints is bizarre.

It turns supply‑chain risk into a loyalty test: are you risky because your software can be exploited, or because you won’t bend your usage rules far enough?

Anthropic’s move forces DOD to clarify that in public. If they actually blacklist Anthropic over “overly strict safeguards,” that’s a signal to every other AI vendor: don’t bake in ethics you’re not prepared to remove on command.

Again, that’s exactly the tension Anthropic wanted to surface.

Contract law: where this actually lands

Realistically, the near‑term battlefield isn’t DPA or supply chains. It’s contract language.

The Pentagon’s preferred baseline is simple:

We buy a tool. If something is legal, we reserve the right to do it.

Anthropic is pushing a different template:

You’re not buying a general tool. You’re buying this specific system, whose safety envelope is part of the product. These safeguards stay, even if the law would allow more.

If you’re building or buying AI for business, this should sound familiar. We’ve already asked whether large language models are reliable for business use when they hallucinate and misbehave on edge cases. Safety envelopes and clear contractual limits are the enterprise answer.

The Pentagon wants to pretend military AI is different. Anthropic’s argument is: no, it’s the same, just with a bigger blast radius.

And if you’re a future contracting officer, this dispute just did you a favor: you now know this is contested ground, and that “all lawful purposes” is no longer guaranteed boilerplate for frontier AI.

What this changes for AI safety, buyers, and policymakers

For AI labs and vendors

The message is: you can say no, if you pick your battlefield.

Anthropic tested this with:

- A contract large enough to matter but not company‑killing

- Red lines average citizens instinctively get

- A very visible, very quotable posture (“cannot in good conscience accede”)

Other vendors now have a live example of AI vendor safety clauses surviving contact with the Pentagon, at least long enough to become a public controversy. Expect:

- More providers shipping productized safeguards (e.g., no‑autonomy modes, geofenced surveillance restrictions) as standard SKUs, not bespoke options.

- Internal debate at labs: do we follow Anthropic and enforce these in contracts, or do we stay “flexible” and risk being the go‑to vendor for sketchy uses?

In our piece on AI nuclear strike simulations, we argued that the failure mode isn’t just “rogue AI”; it’s humans offloading more and more of the escalation logic to brittle systems. Anthropic is drawing a bright line before that becomes standard practice.

For government buyers

If you’re in government IT or acquisitions, this is your wake‑up call that AI is not “just another SaaS deal.”

You now have to:

- Negotiate usage envelopes, not just SLAs and uptime.

- Decide which model‑coded safeguards you’re willing to treat as non‑negotiable characteristics of the product, like the maximum operating temperature of a reactor.

There’s also a buried message for other agencies: if you want access to top‑tier models like Claude, you may have to live inside the vendor’s safety box.

Given how fragile models get when they’re trained on their own outputs (as we discussed in Model Collapse: Can AI Eat Itself?), there’s a selfish reason for agencies to care too. A race to strip out every safeguard encourages perverse training and deployment behaviors that make the tools less reliable for everyone, including the government.

For policymakers and future regulation

The big shift is conceptual: safety isn’t just what’s legal; it’s what’s contractually and technically possible.

Anthropic is effectively lobbying for:

- Codified bans or strict constraints on fully autonomous weapons that rely on frontier AI

- Stronger legal limits on mass domestic surveillance at the inference level (what conclusions can be drawn), not just at the data-collection level

- Recognition that vendors can and should ship safeguards like Claude’s, which law and policy then treat as baseline, not optional frosting

If regulators are smart, they’ll treat this as free adversarial testing of their frameworks.

- Can the DPA really be used to compel model de‑safing? If not, close that loophole explicitly.

- Should “supply chain risk” ever be tied to a firm refusing to support ethically dubious uses? If not, cabin that definition.

And crucially, when the next Anthropic‑style dispute happens (maybe with a smaller vendor, or over a less popular red line), policymakers will have a template.

For everyone else actually building with AI

There’s also a quiet norm being set for commercial buyers: if your vendor is willing to cave on safety for the Pentagon, what makes you think your use case will be different when there’s money on the table?

If you’re deploying LLMs into financial systems, medical tools, or safety‑critical software, you want a vendor that treats some behaviors as unshippable, even if the law is silent.

You already worry about hallucinations, skewed outputs, and model brittleness in day‑to‑day business (we covered that in Are Large Language Models Reliable for Business Use?). Anthropic is arguing that, in the military context, unreliability isn’t a nuisance cost; it’s a potential casus belli.

The difference between “annoying support ticket” and “unintended escalation” is mostly the domain.

Key Takeaways

- Anthropic’s refusal wasn’t just ethical posturing; it was a deliberate stress test of how far Pentagon legal tools (DPA, supply‑chain risk) can reach into model design and safeguards.

- The core fight is over who controls the AI safety envelope: the buyer, via “all lawful purposes,” or the vendor, via contractual and technical constraints that persist even when the law would allow more.

- Threatening to use the Defense Production Act and supply‑chain risk labels against a safety‑conscious U.S. AI firm is highly unusual and forces policymakers to clarify how these powers apply to software.

- The Anthropic Pentagon dispute creates a template for AI vendor safety clauses in high‑stakes contracts, pressuring other labs to either follow suit or be branded as the flexible ones for more dubious uses.

- For governments and enterprises alike, this standoff signals that AI procurement now requires negotiating usage limits as first‑class terms, not treating safety as an afterthought glued on with policy PDFs.

Further Reading

- AP, “Anthropic CEO says it ‘cannot in good conscience accede’ to Pentagon’s demands”: a straight account of the dispute, with quotes from Anthropic and the Pentagon and initial political reaction.

- Washington Post, “Anthropic rejects Pentagon terms for lethal use of its chatbot Claude”: explains the contract context, classified‑network usage, and Anthropic’s red lines.

- Axios, “Anthropic says Pentagon’s ‘final offer’ is unacceptable”: focuses on the “final offer” framing, the deadline drama, and how other firms have responded to similar Pentagon requirements.

- Reuters (republished), “Anthropic cannot accede to Pentagon’s request in AI safeguards dispute, CEO says”: adds detail on the safety rationale, contract size, and the DPA / supply‑chain threats.

- TechCrunch, “Anthropic CEO stands firm as Pentagon deadline looms”: situates the standoff in the broader industry and looks at how the Pentagon might transition to other vendors.

In a decade, when we’re arguing over whether some future model should sit in the nuclear chain of command, lawyers will dig up this case and call it “the Anthropic clause.” They’ll either thank Anthropic for forcing clarity early, or wish more vendors had been willing to run the stress test before the shooting started.