On a Friday afternoon, your agency’s AI dashboard looks normal. Dozens of internal tools quietly call one provider, Anthropic, through a GSA‑blessed contract. By Monday, a presidential post drops, the Pentagon labels Anthropic a “supply chain risk,” GSA starts pulling listings, and suddenly that core provider is politically radioactive.

That’s the Anthropic ban in practical terms: not a think‑tank hypothetical, but a live demo of how one bad weekend can amputate a critical AI dependency by presidential fiat.

The argument I want you to hold onto is this: the Anthropic ban is less about Anthropic and more about a new playbook. The White House just showed that the real battlefield for AI isn’t regulation or standards; it’s procurement. And if you’re building anything on top of AI, your architecture and contracts need to assume that a key provider can be switched off for reasons that have nothing to do with uptime or accuracy.

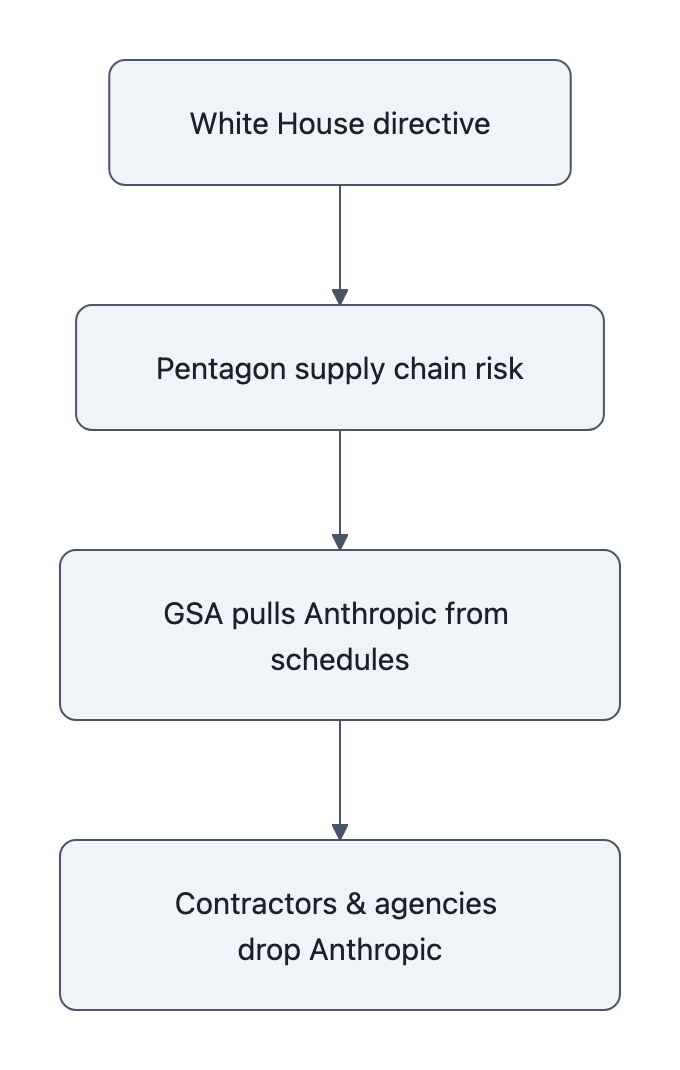

Quick timeline: what the Anthropic ban actually changed

Let’s compress the facts to the stuff that matters for engineering and procurement, not politics.

Talks between Anthropic and the Defense Department broke down over two red lines: Anthropic refused to allow its models to be used for mass domestic surveillance or for fully autonomous weapons. The next moves came fast:

- President Trump publicly directed “all U.S. agencies to stop using Anthropic’s artificial intelligence technology,” with a six‑month phase‑out window for defense uses, according to the Associated Press.

- Defense Secretary Pete Hegseth said he would deem Anthropic a “supply chain risk,” language usually reserved for foreign adversaries. In his words: “Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.”

- TechCrunch and Axios report that GSA moved to remove Anthropic from government procurement options. The GSA channel that made buying Claude easy and boring suddenly became… not that.

- Anthropic responded that such a supply chain risk designation would be “an unprecedented action, one historically reserved for US adversaries, never before publicly applied to an American company,” and promised to challenge it in court while helping customers transition if they’re forced off.

Notice what’s missing: a clean, public, standalone Treasury press release saying “we are terminating all use of Anthropic.” Some aggregators and non‑US outlets repeat claims that Treasury and the FHFA are cutting ties, likely based on wire snippets. The moves that are consistently verifiable are the presidential directive, the Pentagon designation, GSA’s action, and contractors being warned off.

So in practice, the Anthropic ban means:

- agencies are under political instructions to stop using Anthropic;

- contractors who want DoD work are warned not to touch Anthropic;

- the easiest federal procurement on‑ramp has been yanked.

That’s enough to functionally erase Anthropic from large chunks of the federal market, and to scare any risk‑averse enterprise CIO watching from the sidelines.

Why the supply chain risk designation is a governance inflection point

“Supply chain risk designation” sounds like compliance wallpaper. It is not.

In the defense world, designating a company as a supply‑chain risk is the bureaucratic equivalent of putting a skull‑and‑crossbones on their logo. It tells every prime contractor and subcontractor: if you touch this vendor, you might lose your government work.

Historically, this tool was aimed outward: at Huawei‑style foreign vendors or companies tied to adversary governments. The Anthropic Pentagon dispute took that same weapon and pointed it at a domestic company over a policy disagreement about AI safety.

That’s the governance turning point.

Once you accept that “supply chain risk” can include “CEO declined to sign off on fully autonomous weapons,” you’ve expanded the category from security posture to ideological alignment. The bar for “national security threat” just slid from “can China exfiltrate our data through this router?” to “does this firm comply with the current administration’s doctrine on how AI should be weaponized?”

This matters for three reasons:

- Precedent travels. If DoD can do this to Anthropic, other agencies now have a blueprint for punishing domestic vendors that don’t play ball, on AI or elsewhere. You don’t have to pass a new law; you just whisper “supply chain risk” and let procurement do the rest.

- The chill spreads faster than the memo. Most companies won’t wait for a formal designation letter. Hegseth posts, AP and the Washington Post call it unprecedented, GSA starts scrubbing listings, and a dozen GCs tell their CTOs to freeze new Anthropic work “pending review.”

- The signal goes to investors. Axios framed it bluntly: Anthropic vs. the White House put tens of billions at risk. Political risk just got upgraded from a footnote in the S‑1 to a first‑order factor in AI valuations.

You can think of regulation as the official road system: hearings, rulemaking, comment periods. What the Anthropic ban exposes are the service roads that really move traffic: procurement, designations, and eligibility lists.

The politics of AI just switched highways.

Practical fallout: vendor lock‑in, procurement, and startups

Look, if you’re an engineer or founder, you can roll your eyes at DC drama. But this part isn’t optional: the Anthropic ban is a worked example of why “we’re all‑in on Provider X” is now a governance failure, not just a tech‑stack quirk.

A top Reddit comment nailed it in one line: “this is why vendor lock‑in with any single AI provider is a terrible strategy right now… the entire landscape can shift overnight based on political decisions that have nothing to do with the technology.”

Concretely, three things change for anyone building on AI:

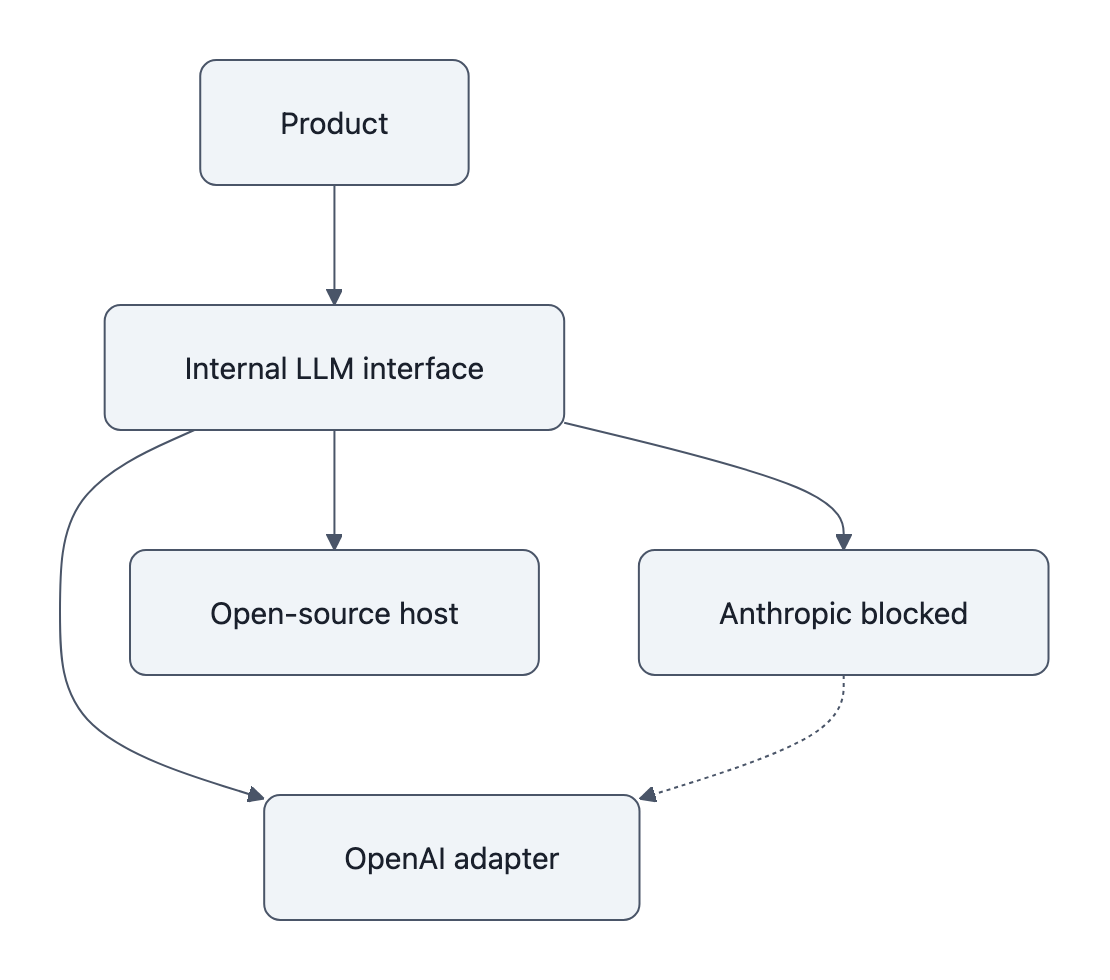

1. Vendor‑agnostic engineering becomes a survival trait

If your app talks directly to api.anthropic.com everywhere, you’ve essentially hard‑coded political risk into your codebase.

Better pattern: treat the AI provider like a database driver, not like app logic.

- Define your own internal “LLM interface”: a small set of request/response shapes that your product depends on.

- Put provider‑specific hacks (system prompts, tools, safety settings) in adapters behind that interface.

- Maintain at least two real integrations (say Anthropic + OpenAI or Anthropic + open‑source via an inference host) and auto‑failover when one returns sustained errors or breaches an SLO.
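Here is a minimal Python sketch of that pattern, built on a deliberately tiny interface. `LLMRequest`, `LLMResponse`, `StubAdapter`, and `FailoverClient` are hypothetical names and do not come from any vendor’s SDK. The stub stands in for a real adapter, which would translate the request into a provider’s API call. Failover here counts consecutive errors only. An SLO‑based version would also track latency.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol, Sequence


@dataclass(frozen=True)
class LLMRequest:
    """Internal request shape the product depends on (hypothetical)."""
    system: str
    prompt: str
    max_tokens: int = 512


@dataclass(frozen=True)
class LLMResponse:
    """Internal response shape; records which provider answered."""
    text: str
    provider: str


class ProviderError(Exception):
    """Raised by an adapter when its provider errors or is unavailable."""


class LLMProvider(Protocol):
    """What every adapter must implement; app code sees only this."""
    name: str

    def complete(self, req: LLMRequest) -> LLMResponse: ...


class StubAdapter:
    """Stand-in for a vendor adapter. A real one would map LLMRequest onto
    the vendor SDK and keep prompt, tool, and safety quirks in here."""

    def __init__(self, name: str, healthy: bool = True) -> None:
        self.name = name
        self.healthy = healthy

    def complete(self, req: LLMRequest) -> LLMResponse:
        if not self.healthy:
            raise ProviderError(f"{self.name} unavailable")
        return LLMResponse(text=f"[{self.name}] {req.prompt}", provider=self.name)


class FailoverClient:
    """Tries providers in priority order and stops calling a provider
    after `max_failures` consecutive errors (a simple circuit breaker)."""

    def __init__(self, providers: Sequence[LLMProvider], max_failures: int = 3) -> None:
        self.providers = list(providers)
        self.max_failures = max_failures
        self.failures: Dict[str, int] = {p.name: 0 for p in self.providers}

    def complete(self, req: LLMRequest) -> LLMResponse:
        errors: List[str] = []
        for provider in self.providers:
            if self.failures[provider.name] >= self.max_failures:
                continue  # circuit open: sustained errors, skip this provider
            try:
                resp = provider.complete(req)
            except ProviderError as exc:
                self.failures[provider.name] += 1
                errors.append(str(exc))
                continue
            self.failures[provider.name] = 0  # a success resets the count
            return resp
        raise ProviderError("all providers failed: " + "; ".join(errors))


# Usage: the primary is down, so the call falls through to the backup.
client = FailoverClient([StubAdapter("anthropic", healthy=False), StubAdapter("openai")])
print(client.complete(LLMRequest(system="", prompt="hello")).provider)  # openai
```

Once a provider trips the breaker, this sketch skips it for the rest of the client’s life. A production version would add a cooldown and a half‑open retry. The key design point is that app code only ever imports `LLMRequest` and the client, never a vendor SDK.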

This isn’t abstract. If you’d had that abstraction layer before the Anthropic ban, a forced migration from Claude to another model would still hurt. But it would be a week of painful prompt and eval work, not a quarter‑long rewrite.
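Much of that week of eval work means running the same test set against the incumbent model and the candidate before you cut over. A minimal harness could look like this. It assumes each model is wrapped as a plain `prompt -> text` function and each test case carries its own pass/fail check. `pass_rates` and the toy models are hypothetical. Real evals would use far more cases and fuzzier scoring.

```python
from typing import Callable, Dict, List, Tuple

# A test case pairs a prompt with a checker that decides pass/fail.
EvalCase = Tuple[str, Callable[[str], bool]]
Model = Callable[[str], str]


def pass_rates(models: Dict[str, Model], cases: List[EvalCase]) -> Dict[str, float]:
    """Run every case against every model; return the fraction each passes."""
    if not cases:
        raise ValueError("need at least one eval case")
    rates: Dict[str, float] = {}
    for name, generate in models.items():
        passed = sum(1 for prompt, check in cases if check(generate(prompt)))
        rates[name] = passed / len(cases)
    return rates


# Toy stand-ins for real adapters: canned answers keyed by prompt.
cases: List[EvalCase] = [
    ("2+2", lambda out: "4" in out),
    ("capital of France", lambda out: "Paris" in out),
]
models: Dict[str, Model] = {
    "incumbent": lambda p: {"2+2": "4", "capital of France": "Paris"}[p],
    "candidate": lambda p: {"2+2": "4", "capital of France": "Lyon"}[p],
}
print(pass_rates(models, cases))  # {'incumbent': 1.0, 'candidate': 0.5}
```

If the `models` dict is built from the same adapters your app uses, you get the side‑by‑side comparison you need for a forced switch almost for free.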

2. Procurement has to price in “political outage” alongside technical outage

For government buyers, the lesson is brutal: your risk register can’t stop at “what if the API goes down?” or “what if the model hallucinates?” You now have to ask:

- What if this provider is suddenly tagged as a supply chain risk?

- How fast can we switch to an alternative that’s already security‑cleared and on contract?

- Do our contracts permit us to dual‑source, or did we sign exclusivity because it was cheaper?
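Those questions can live in the risk register as data rather than as a slide. The sketch below uses a hypothetical `ProviderContract` record per vendor and answers one question: if every provider on a designation list were suddenly off‑limits, who could you switch to today? It treats any exclusivity clause as blocking a second source. That is a deliberately conservative simplification, because real contract terms need legal review.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class ProviderContract:
    """One row of a hypothetical AI risk register."""
    name: str
    on_contract: bool        # is there a live contract vehicle today?
    security_cleared: bool   # has it passed our security authorization?
    exclusive: bool = False  # did we sign an exclusivity clause?


def political_outage_fallbacks(register: List[ProviderContract],
                               designated: Set[str]) -> List[str]:
    """Providers we could switch to immediately if everything in
    `designated` became off-limits. Any exclusivity clause is treated
    as blocking dual-sourcing, so no fallback is reported."""
    if any(c.exclusive for c in register):
        return []
    return [c.name for c in register
            if c.name not in designated and c.on_contract and c.security_cleared]


register = [
    ProviderContract("anthropic", on_contract=True, security_cleared=True),
    ProviderContract("openai", on_contract=True, security_cleared=False),
    ProviderContract("open-weights-host", on_contract=False, security_cleared=True),
]
# An empty result is the red flag: no cleared, contracted alternative exists.
print(political_outage_fallbacks(register, {"anthropic"}))  # []
```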

For private companies, the same logic applies, just with different politics:

- If your AI provider becomes a domestic political punching bag, does that spook your regulators, your board, or your customers?

- If a future EU or UK regulator mirrors this move in reverse (say, by targeting a U.S. cloud giant), how trapped are you?

The organizations that glide through this will be the ones whose procurement teams insisted on:

- Multi‑year, multi‑vendor frameworks instead of single‑vendor, all‑you‑can‑eat deals.

- Explicit exit clauses that talk about political or regulatory designation as a trigger for accelerated termination and migration support.

- Data and prompt portability commitments: exportable logs, model‑agnostic fine‑tuning formats, and clear IP rights over your system prompts and tools.

3. Startups must sell optionality, not just “Claude but we wrapped it nicer”

If your startup is “Anthropic, but with a nicer UI for finance teams,” you just discovered what platform risk feels like.

The silver lining: the Anthropic ban makes vendor‑agnostic products more valuable. There’s suddenly a premium on:

- brokers that can dynamically route to Anthropic, OpenAI, open‑weights models, etc.;

- eval frameworks that help teams compare performance across models quickly when they’re forced to switch;

- “compliance shields”: layers that can be reconfigured to satisfy new safety or use‑case restrictions without rewriting the app.
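A compliance shield can be as plain as routing rules kept in configuration. The sketch below uses a hypothetical `Policy` object that holds a provider blocklist and a per‑use‑case allowlist. The router filters its ranked provider list through the policy, so a new designation or use‑case restriction becomes a config edit rather than a code change. Unknown use cases are denied by default. That is one reasonable choice, not the only one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Policy:
    """Hypothetical compliance-shield config, editable without code changes."""
    blocked_providers: Set[str] = field(default_factory=set)
    # use case -> providers permitted to serve it
    allowed_by_use_case: Dict[str, Set[str]] = field(default_factory=dict)


def eligible_providers(policy: Policy, use_case: str, ranked: List[str]) -> List[str]:
    """Filter a preference-ordered provider list through the policy.
    Use cases missing from the config get no providers (default-deny)."""
    allowed = policy.allowed_by_use_case.get(use_case, set())
    return [p for p in ranked if p in allowed and p not in policy.blocked_providers]


ranked = ["anthropic", "openai", "llama-host"]
policy = Policy(allowed_by_use_case={"summarize": set(ranked)})
print(eligible_providers(policy, "summarize", ranked))  # ['anthropic', 'openai', 'llama-host']
policy.blocked_providers.add("anthropic")  # a new designation is one config edit
print(eligible_providers(policy, "summarize", ranked))  # ['openai', 'llama-host']
print(eligible_providers(policy, "unreviewed-use-case", ranked))  # []
```

Feeding the filtered list into a failover router keeps policy decisions and reliability decisions in separate, independently testable layers.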

In other words, the governance shock that hurts Anthropic directly will reward anyone who’s been quietly building AI abstraction the way boring enterprise SaaS abstracts databases, clouds, and SSO providers.

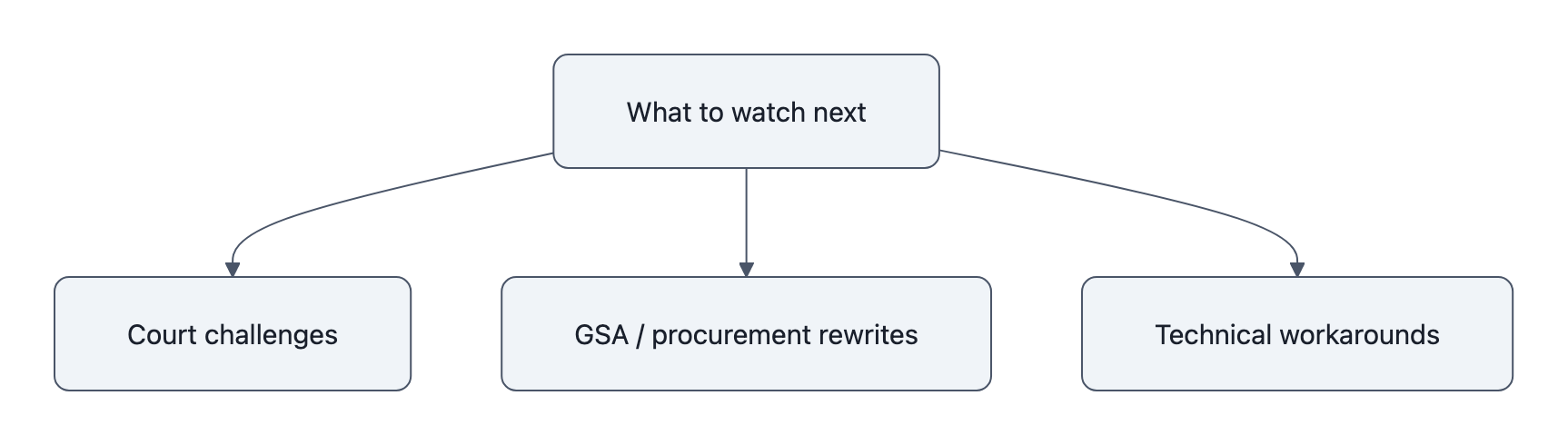

Anthropic ban as turning point: what to watch next

If this is the moment AI politics moved from regulation to procurement power, where does it go?

Three fronts to watch, and to prepare for.

1. Lawfare over the supply chain risk designation

Anthropic has already said it will “challenge any supply chain risk designation in court.” That’s not bluster; it’s existential.

If courts bless the Pentagon’s move, you’ve effectively constitutionalized the idea that the executive can weaponize supply‑chain rhetoric against domestic firms over policy disagreements. Expect:

- more aggressive use of similar designations in future disputes;

- copycat moves by other departments under the same logic;

- pressure on allies to adopt similar lists, fracturing the AI provider map by bloc.

If courts push back, especially if they stress how unprecedented it is to treat a domestic AI lab like a foreign adversary, you get a partial guardrail. The playbook still exists, but it’s riskier to run.

2. Quiet GSA and procurement rewrites

The loud fight is over Hegseth’s “national security” rhetoric. The quiet, arguably more important fight will happen in GSA boilerplate and contract templates.

Watch for:

- new standard clauses that bake in compliance with evolving DoD “risk lists”;

- pressure to standardize “acceptable use” definitions that mirror Pentagon preferences;

- de facto blacklists encoded in who’s allowed onto new AI‑specific schedules.

This is how a one‑off Anthropic ban becomes background radiation in procurement: not via a big “AI Law,” but via a thousand small requirements that conveniently filter out the next Anthropic‑style holdout.

3. Technical workarounds and pressure valves

On the technical side, expect a rush of:

- open‑weight investments: more money into Llama‑style models and secure inference hosts that can be rebranded as “domestic, controllable, less politically risky” alternatives;

- model compatibility efforts: libraries that normalize prompts and tool calls across providers so you can swap Anthropic out with fewer code changes;

- on‑prem and air‑gapped deployments: especially for defense and regulated sectors, as a way to keep using certain tech while insulating the vendor branding from the political fight.
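Much of that normalization is mechanical reshaping. As an illustration, this sketch keeps one provider‑neutral tool definition (the hypothetical `ToolSpec`) and renders it into the tool formats of Anthropic’s Messages API and OpenAI’s Chat Completions API. The field layouts follow those APIs’ published shapes as I understand them, so check the current docs before relying on them. Tool‑call responses also differ between providers and would need their own adapters.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class ToolSpec:
    """Provider-neutral tool definition (hypothetical internal type)."""
    name: str
    description: str
    parameters: Dict[str, Any]  # a JSON Schema object


def to_anthropic(tool: ToolSpec) -> Dict[str, Any]:
    # Anthropic Messages API: the JSON Schema sits under "input_schema".
    return {"name": tool.name, "description": tool.description,
            "input_schema": tool.parameters}


def to_openai(tool: ToolSpec) -> Dict[str, Any]:
    # OpenAI Chat Completions: a "function" wrapper, schema under "parameters".
    return {"type": "function",
            "function": {"name": tool.name, "description": tool.description,
                         "parameters": tool.parameters}}


RENDERERS: Dict[str, Callable[[ToolSpec], Dict[str, Any]]] = {
    "anthropic": to_anthropic,
    "openai": to_openai,
}

weather = ToolSpec(
    name="get_weather",
    description="Current weather for a city",
    parameters={"type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"]},
)
for provider, render in RENDERERS.items():
    print(provider, sorted(render(weather)))
```

Adding a third provider means writing one more renderer and registering it. The tool definitions your app owns stay untouched.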

Ironically, the Anthropic ban may slow down responsible AI safety work while accelerating crude “just run it ourselves” deployments on models with fewer guardrails. If refusing mass domestic surveillance gets you treated like Huawei, the incentive is to be less fussy next time.

That’s the part almost nobody is talking about: punitive use of procurement against safety‑oriented firms will, over time, select for the opposite behavior.

Key Takeaways

- The Anthropic ban is not just a contract dispute; it’s the first major use of “supply chain risk” tools against a domestic AI firm over safety terms.

- Once procurement becomes a political weapon, any AI provider, and anyone building on them, inherits real political outage risk.

- Vendor‑agnostic engineering (internal LLM interface, multiple adapters, clear evals) just graduated from “nice architecture” to “governance requirement.”

- Procurement teams need explicit exit rights, multi‑vendor frameworks, and data portability baked into every AI contract.

- How courts and GSA codify this episode will shape whether future Anthropic‑style standoffs are rare shocks or standard operating procedure.

Further Reading

- AP, “Trump orders US agencies to stop using Anthropic technology in clash over AI safety”: a straight‑news rundown of the directive, Pentagon rhetoric, and Anthropic’s response.

- Washington Post, “Pentagon declares Anthropic a threat to national security”: how the “supply chain risk” framing breaks precedent.

- Anthropic, “Statement on the comments from Secretary of War Pete Hegseth”: the company’s own account of the dispute and its legal posture.

- TechCrunch, “Pentagon Moves To Designate Anthropic As A Supply-Chain Risk”: the designation mechanics and procurement impact.

- Axios, “Anthropic vs. White House puts $60 billion at risk”: investor and GSA fallout from the Anthropic ban.

The next time you hear about an “Anthropic ban”-style fight, don’t just ask what the model can do. Ask how fast you could rip it out of your stack without begging a president, a procurement officer, or a general for permission.