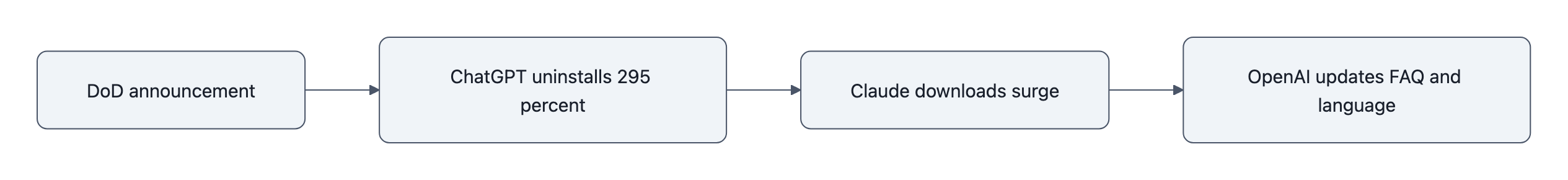

On Saturday, February 28, ChatGPT uninstalls in the U.S. jumped 295% day‑over‑day, right after OpenAI announced its deal with the newly‑renamed Department of War. Sensor Tower says the normal baseline is about 9% day‑over‑day change; this wasn’t background noise, it was a spike.

You can shrug that off as a Twitter tantrum made visible in the App Store charts.

Or you can see what it really is: the first clean example of a real‑time political → market feedback loop for consumer AI.

A government contract got announced, and by the same weekend:

- ChatGPT’s uninstalls roughly quadrupled in a single day

- Its new downloads dropped by a double‑digit percentage

- Anthropic’s Claude jumped to #1 in the U.S. App Store, with daily U.S. downloads surpassing ChatGPT’s for the first time

That’s not vibes. That’s telemetry.

This isn’t a story about one bad PR week. It’s a story about how trust just became a quantifiable asset, and a new risk line on every AI company’s cap table.

Why the 295% ChatGPT uninstalls spike matters (and how to read the numbers)

Let’s de‑mystify the 295% first.

TechCrunch, citing Sensor Tower, reports:

- Typical day‑over‑day ChatGPT uninstall change: ~9%

- February 28 after the OpenAI DoD announcement: +295% day‑over‑day uninstalls

- At the same time, 1‑star reviews spiked 775% and 5‑star reviews were cut in half

Think of “295%” as: roughly 4x the previous day’s level of people bailing (a +295% change means 3.95 times the prior level), plus a matching wave of angry reviews.

Is it millions of people? Probably not. App‑analytics firms don’t share raw counts publicly; they share direction and magnitude. But in growth land, a sudden 4x in churn and a matching ratings collapse is a fire alarm, not a rounding error.
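
To make the percent-versus-multiple arithmetic concrete, here is a minimal Python sketch. The function names are illustrative, and the raw counts in the usage note are hypothetical, since the analytics firms publish only percentages:

```python
def multiplier_from_pct_increase(pct_increase: float) -> float:
    """Convert a percent increase (295 for +295%) into a multiple of the prior level."""
    return 1.0 + pct_increase / 100.0


def pct_increase_from_counts(previous: float, current: float) -> float:
    """Day-over-day percent change between two raw counts."""
    if previous <= 0:
        raise ValueError("previous must be positive")
    return (current - previous) / previous * 100.0


# Reported figures: +295% on the spike day versus a typical ~9% daily change.
spike_multiple = multiplier_from_pct_increase(295)
typical_multiple = multiplier_from_pct_increase(9)
print(f"spike day:   {spike_multiple:.2f}x the previous day")
print(f"typical day: {typical_multiple:.2f}x the previous day")
```

With a hypothetical 1,000 uninstalls on the prior day, `pct_increase_from_counts(1000, 3950)` gives back the reported +295%, which is why the spike reads as roughly 4x rather than 3x.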

The better question isn’t “is that a lot?” It’s “did behavior change in a coordinated way, traceable to one decision?”

Yes:

- OpenAI’s government deal lands

- Users immediately leave 1‑star reviews complaining about that exact thing

- ChatGPT uninstalls spike at the same time downloads of the main rival (Claude) surge, right after Anthropic publicly says “no” to that same kind of deal

That’s not background random walk. That’s event‑driven switching.

If you’re building or funding AI, that’s the trendline you care about: user trust flipping from “latent grumbling” to “measured churn” inside a 48‑hour window.

What the surge reveals about trust, politics, and AI market risk

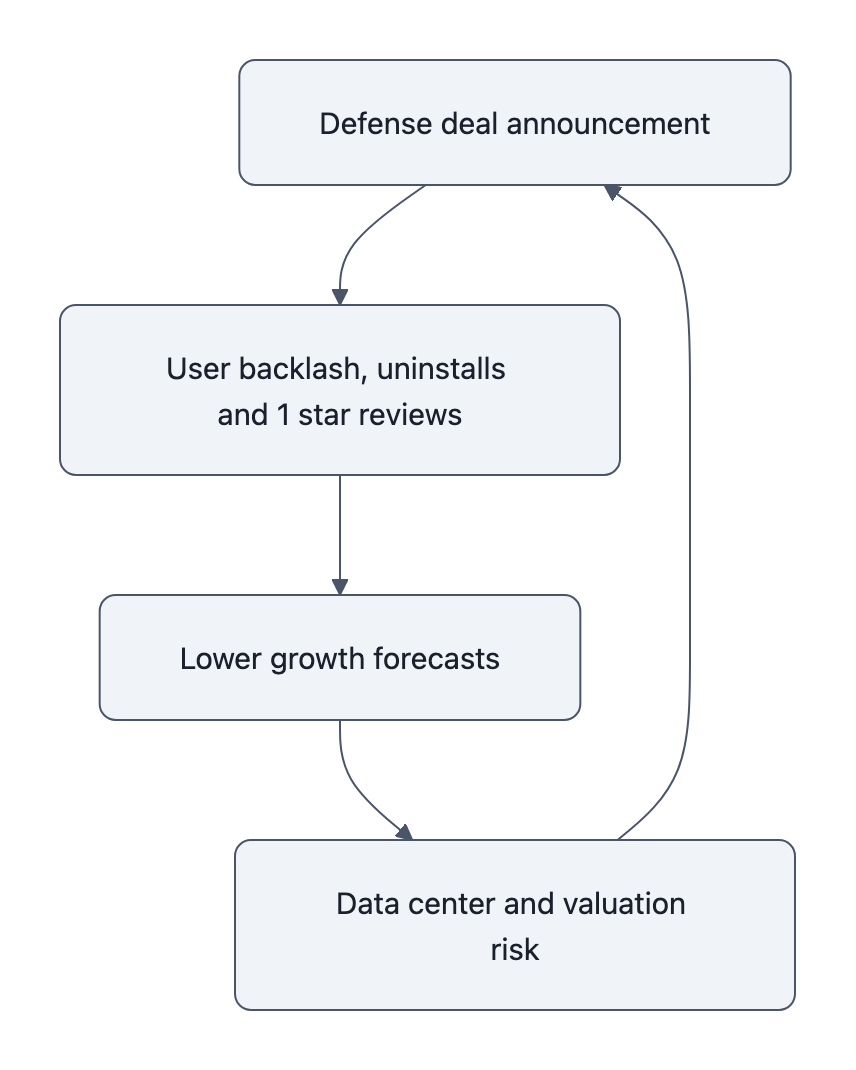

We just watched reputational risk get priced in, in real time, via app stores.

The old model:

- Political controversy → op‑eds → maybe some brand surveys next quarter → someone hand‑waves “trust” in a deck.

The new model:

- Blog post with “Department of War” in the title goes up.

- App‑analytics dashboards light up the same day.

- Investors can literally overlay political decisions with uninstall curves and ratings distributions.

Sensor Tower and Appfigures didn’t become more accurate last week. What changed is how clean the cause‑and‑effect is:

- OpenAI publishes “Our agreement with the Department of War,” promising red lines: no mass domestic surveillance, no fully autonomous weapons, no social‑credit‑style systems.

- Backlash is instant enough that OpenAI is editing contract language and FAQs within days to say, in effect, “no, seriously, we mean it about not spying on Americans.”

- Over the same days, Sensor Tower uninstall data and ratings data move sharply, and Appfigures sees Claude’s U.S. downloads jump past ChatGPT’s.

That’s not a PR problem. It’s a control‑systems problem.

You’re watching a closed loop:

Policy choice → public perception → measurable user behavior → revenue and valuation assumptions.

And because AI is now in a full‑blown data‑center bubble, with trillions in capex justified by rosy “every human will use this every day” curves, even small trust‑driven kinks in those curves matter.

If persistent backlash knocks, say, 10-15% off long‑term consumer adoption for one of the flagship players, you don’t just lose some subscription revenue. You potentially blow up:

- The ROI model on GPU and data‑center build‑outs

- The “winner‑take‑most” valuation story for that specific company

- The circular equity deals between chipmakers, cloud providers, and AI labs that all assume “this line always goes up”

One Reddit comment that went viral put it this way: “If OpenAI is worth 40% or 25% of current valuation, the math on new data center builds collapses.” That’s hyperbolic, but it’s directionally right.

If user trust can knock 20-30% off your realistic user TAM, a lot of those power‑hungry data centers start penciling out as stranded assets, not money printers.
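
A toy sensitivity check shows why a haircut of that size matters. This is a sketch under loud assumptions: the capex and profit figures are made-up units, not estimates for any company, and it assumes gross profit scales linearly with adoption:

```python
def payback_years(capex: float, annual_gross_profit: float) -> float:
    """Simple payback period: years of gross profit needed to recover capex."""
    if annual_gross_profit <= 0:
        raise ValueError("annual_gross_profit must be positive")
    return capex / annual_gross_profit


def payback_after_haircut(capex: float, annual_gross_profit: float,
                          adoption_haircut: float) -> float:
    """Payback period if adoption (and, by assumption, profit) falls by a fraction."""
    if not 0 <= adoption_haircut < 1:
        raise ValueError("adoption_haircut must be in [0, 1)")
    return payback_years(capex, annual_gross_profit * (1 - adoption_haircut))


# Hypothetical units only: 100 of capex against 20 of annual gross profit.
CAPEX = 100.0
BASE_PROFIT = 20.0

for haircut in (0.0, 0.10, 0.15, 0.20, 0.30):
    years = payback_after_haircut(CAPEX, BASE_PROFIT, haircut)
    print(f"{haircut:.0%} adoption haircut -> payback in {years:.2f} years")
```

Whatever the real inputs are, under the linear assumption the payback period stretches by a factor of 1 / (1 - haircut): a 20% haircut lengthens it by 1.25x, and a 30% haircut by about 1.43x.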

Why Anthropic’s Claude benefited, and whether those users stick

Appfigures and Similarweb both saw it: while ChatGPT uninstalls and 1‑star reviews spiked, Claude’s daily U.S. downloads:

- Jumped 37% on Friday, then 51% on Saturday (Sensor Tower via TechCrunch)

- Surpassed ChatGPT’s U.S. daily installs for the first time, per Appfigures

- Hit #1 free iPhone app in the U.S. and several other countries

Anthropic didn’t just avoid a land mine. They stepped on a growth pedal.

They explicitly walked away from a Pentagon deal over concerns about domestic surveillance and fully autonomous weapons. Then the market, for once, had a live A/B test:

- Variant A: “We’ll work with DoD but promise red lines.”

- Variant B: “We won’t sign this because of those exact red lines.”

And for at least one crucial weekend, Variant B won.

Do those users stay?

Some will bounce. Some are just protest‑switchers who installed Claude, typed “hi”, then went back to their default habits in a week.

But two things matter:

- You only need a fraction to stick. If even 10-20% of that protest cohort becomes paying Claude subscribers, Anthropic just bought themselves the equivalent of years of traditional marketing spend, with one well‑timed “no.”

- Switching costs are low but onboarding friction is real. Every time a user moves their workflows, documents, and mental models from ChatGPT to Claude, the next switch gets harder. Stickiness compounds.

If you’re an investor, this is the nightmare and the opportunity:

- Nightmare: Trust shocks can reshuffle market share instantly, making any “this moat is permanent” pitch look silly.

- Opportunity: Competitors can weaponize restraint. Saying “no” to high‑margin government money becomes a user‑acquisition lever.

In other words: the upside to not taking the OpenAI DoD deal isn’t just sleeping better at night. It’s line items in Appfigures charts.

How reliable are these app‑analytics signals, really?

You should always treat these dashboards as proxies, not ground truth.

Sensor Tower, Appfigures, and Similarweb are all building models off:

- App Store / Play Store public charts

- Panel data from instrumented devices

- Developer‑side reporting where available

- Statistical sampling and extrapolation

They are not reading Apple’s raw install logs.

So yes, the exact numbers can be off. Maybe “295%” is really 260% or 330%. Maybe Claude’s installs that day were a little higher or lower than estimated.

But three things make this episode unusually trustworthy:

- Directionally consistent across vendors. Independent firms all saw the same thing: ChatGPT dips, Claude rips.

- Timing is tightly coupled to a single event. The curves move right after the DoD announcement, not randomly two weeks later.

- Qualitative signals match the quantitative ones. 1‑star reviews referencing the Pentagon deal, social media hashtags, and Reddit threads like the one quoted above all tell the same story as the charts.

In growth engineering, this is good enough to ship or roll back a feature.

If you wait for audited, GAAP‑compliant user sentiment numbers, you’ve already lost the cohort that uninstalled you and paid your competitor.

What companies and everyday users should do next

If you’re building or funding AI, you now have no excuse to treat “trust” as fluff.

You have the data to instrument it the same way you instrument latency or conversion:

- Define trust events. Government or defense deals. Policy changes. Big model‑behavior shifts. Announce → watch uninstalls, MAU, and 1‑star reviews on a 24-72 hour window.

- Set guardrails. Decide in advance: “If uninstalls jump >X% and 1‑stars >Y% on a trust event, we revisit or roll back.” Make that as binding as your SLOs.

- Price trust into your infra plans. Don’t model data‑center ROI on naive “everyone uses this every day forever.” Build in scenarios where a political decision lops off 20% of consumer use and pushes more usage to competitors like Claude.
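
The guardrail idea can be sketched in a few lines of Python. Everything here is illustrative: the class names, the fields, and the example thresholds standing in for X and Y are assumptions, and a real setup would read the counts from your own analytics rather than hard-coding them:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustGuardrail:
    """Thresholds agreed in advance (the X and Y in the rule above)."""
    max_uninstall_increase_pct: float
    max_one_star_increase_pct: float


@dataclass(frozen=True)
class WindowMetrics:
    """Counts from the baseline period and from the 24-72 hour window after the event."""
    baseline_uninstalls: float
    window_uninstalls: float
    baseline_one_star: float
    window_one_star: float


def pct_increase(baseline: float, observed: float) -> float:
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (observed - baseline) / baseline * 100.0


def guardrail_breached(metrics: WindowMetrics, guardrail: TrustGuardrail) -> bool:
    """True when BOTH uninstalls and 1-star reviews exceed their thresholds."""
    uninstall_jump = pct_increase(metrics.baseline_uninstalls, metrics.window_uninstalls)
    one_star_jump = pct_increase(metrics.baseline_one_star, metrics.window_one_star)
    return (uninstall_jump > guardrail.max_uninstall_increase_pct
            and one_star_jump > guardrail.max_one_star_increase_pct)


# Hypothetical thresholds and counts, scaled to mirror the reported +295% and +775%.
guardrail = TrustGuardrail(max_uninstall_increase_pct=100.0, max_one_star_increase_pct=200.0)
shock = WindowMetrics(1000, 3950, 100, 875)
quiet = WindowMetrics(1000, 1090, 100, 105)
print("shock window breached:", guardrail_breached(shock, guardrail))
print("quiet window breached:", guardrail_breached(quiet, guardrail))
```

The check requires both signals to cross their thresholds, matching the "uninstalls and 1‑stars" wording of the rule. A stricter team could switch the `and` to `or` so that either signal alone triggers a review.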

And if you’re a user?

Treat your installs and subscriptions as votes with billing info attached.

- If you hate the OpenAI DoD deal, uninstalling isn’t symbolic: Sensor Tower literally turns that into charts that investors and PMs stare at.

- If you like Anthropic’s stance, Claude downloads aren’t just convenience; they’re a growth signal that says “this alternative is real.”

Then go one level deeper:

When the next “we’ve partnered with [government agency]” post drops, don’t just skim the blog. Read the FAQ. OpenAI added explicit “no domestic surveillance” language only after public pressure and bad optics. That tells you something about default incentives.

And, importantly: this isn’t just OpenAI. If Anthropic blinks in two years and signs a surveillance‑adjacent deal, the same feedback loop applies. Same for Google, Meta, whoever.

Trust is now visible on the dashboard. Use it.

The next time a lab signs a defense contract, don’t just argue about ethics on social media.

Open the charts, watch ChatGPT uninstalls or their equivalent, and decide whether you’re looking at a rounding error, or the moment somebody’s data‑center business quietly went off‑slope.

Key Takeaways

- ChatGPT uninstalls spiking 295% after the DoD deal isn’t a rounding error; it’s a live, measurable trust shock tied to a single political decision.

- App‑analytics firms like Sensor Tower and Appfigures just gave us proof that reputational risk now shows up as uninstall curves and 1‑star floods, not just angry tweets.

- Anthropic’s refusal to sign a Pentagon deal turned into a user‑acquisition lever: Claude downloads surged, App Store rank hit #1, and some of those protest installs will calcify into a durable user base.

- For OpenAI and peers, political partnerships now create not just PR headaches but valuation and data‑center ROI risk, because small adoption hits break fragile growth assumptions.

- Builders, investors, and users should treat installs, uninstalls, and ratings as trust telemetry: instrument it, set guardrails around it, and be willing to walk away from “easy” government money when the curves say it’s poison.

Further Reading

- ChatGPT uninstalls surged by 295% after DoD deal (TechCrunch): Original reporting on the 295% uninstall spike, 1‑star review surge, and Claude’s App Store rise, citing Sensor Tower and Appfigures.

- Our agreement with the Department of War (OpenAI): OpenAI’s announcement of the DoD deal, including stated “red lines” and later contract‑language clarifications under backlash.

- Claude Super Bowl mobile growth (Appfigures): Appfigures’ analysis of Claude’s mobile growth, showing how earlier momentum plus the Pentagon stance pushed it past ChatGPT in U.S. downloads.

- OpenAI, Anthropic and the Pentagon: Red Lines And Backlash (Forbes): Context on industry reaction, red‑line politics, and OpenAI’s contract adjustments.

- Sensor Tower: Market‑intelligence provider whose uninstall and ratings data underpins the reported 295% spike.