OpenAI's 2026 revenue is supposedly a $25B rocket ship; Anthropic's is rumored to be chasing hard at ~$19B. The hot take is “wow, Anthropic is catching up.”

Wrong argument. The interesting bit is how they’re making that money.

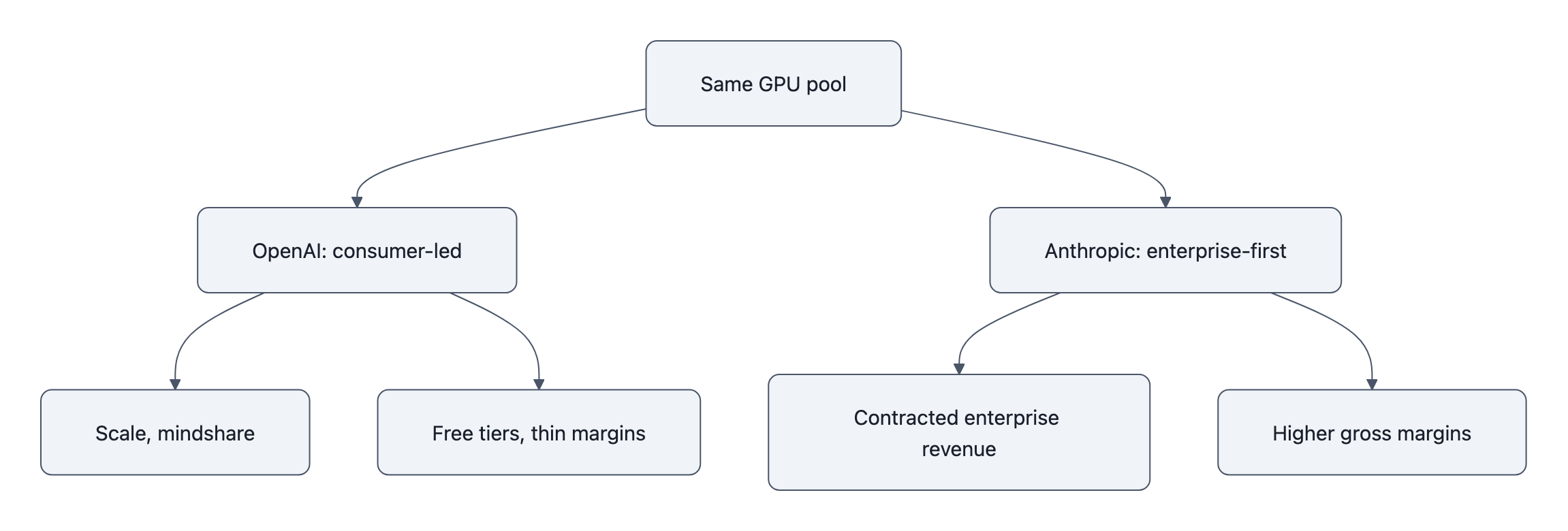

Those run‑rate numbers describe two completely different businesses sharing the same GPU pile. One is still half consumer app, half platform. The other is basically a SaaS enterprise vendor with a giant language model in the basement.

Once you see that, you start making different bets.

OpenAI revenue 2026: what the $25B run‑rate actually means

The $25B figure comes from The Information’s sourcing; Reuters repeated it and explicitly said they couldn’t verify it. OpenAI’s only on‑record number is CFO Sarah Friar’s “$20B+ annualized in 2025” line in a January post.

So treat $25B as: “last month’s revenue × 12 in a very fast‑moving market,” not “GAAP revenue locked in stone.”

Run‑rate is a velocity, not a balance. If you’re on a sugar high of one‑off enterprise deals or bursty GPU‑reselling, the extrapolation will look heroic for a quarter and then… not.
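To make the "velocity, not a balance" point concrete, here is a minimal sketch using made-up monthly revenue figures (in $M; none of these numbers come from either company). It compares an annualized run-rate, computed as the latest month times 12, with what the same twelve months actually added up to. The function names are illustrative only.

```python
def annualized_run_rate(monthly_revenue):
    """Latest month's revenue multiplied by 12."""
    return monthly_revenue[-1] * 12


def trailing_twelve_months(monthly_revenue):
    """Sum of the most recent 12 months of revenue."""
    return sum(monthly_revenue[-12:])


# Hypothetical monthly revenue in $M: steady growth for eleven months,
# then a spike in the final month (say, a one-off enterprise deal).
monthly = [1000, 1050, 1100, 1150, 1200, 1250,
           1300, 1350, 1400, 1450, 1500, 2100]

run_rate = annualized_run_rate(monthly)
ttm = trailing_twelve_months(monthly)

print(f"annualized run-rate: ${run_rate / 1000:.1f}B")
print(f"trailing 12 months:  ${ttm / 1000:.1f}B")
```

With these invented inputs the run-rate lands well above what was actually collected over the year, which is the gap to keep in mind when a headline quotes a run-rate.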

The other hidden part of “OpenAI revenue 2026” is mix:

- ChatGPT subscriptions and usage‑capped free tiers that cost real compute

- API revenue that includes a ton of experimentation

- Strategic deals where credits are bundled, discounted, or bartered against GPU access and partnership

All of that sits on an insanely expensive cost base. Training, inference, support, safety teams, trust & safety overhead, plus the cost of being everyone’s default “free” AI.

If you were building this business from scratch, you’d never start with “give a billion people near‑frontier inference for free and pray the ones who pay offset the ones who don’t.” That’s a brand moat play, not a unit economics play.

It does buy them something: scale data, developer mindshare, and default integrations. But it also embeds structural obligations: you can’t just pull the ladder up on free users without detonating your reputation and your moat.

That’s the tension baked into the $25B number.

Why Anthropic’s enterprise‑first play explains the surge

Anthropic’s ~$19B run‑rate is just as squishy: Bloomberg via PYMNTS, unnamed sources, extrapolated from a recent revenue spike. AP’s piece on their funding round has the only on‑record‑ish baseline: Anthropic said it was on track for ~$14B over the next year when it closed a round at a $380B valuation.

So how do you go from “on track for $14B” to insiders whispering “actually we’re at ~$19B run‑rate” in a short window?

You don’t do that selling $20/mo subs. You do that by:

- Selling enterprise‑grade products, which Anthropic’s CFO literally says is the plan

- Landing big cloud distribution (AWS, Google) where your model is the premium checkbox

- Pricing like an enterprise vendor: volume commitments, minimums, support, compliance, SLAs

In other words: this looks a lot closer to Salesforce than to “another API.”

And unlike OpenAI, Anthropic never promised the world a free, magic talking box. Claude has been positioned from day one as the premium, “we know you’re going to wire this into critical workflows” tool.

That has three brutal but effective consequences:

- They can starve the free tier. Restrict models, cap usage, gate features. Fewer tire‑kickers hammering GPUs for zero revenue.

- Revenue is more contractable. CIOs signing multi‑year deals for agents that handle support or coding aren’t churn‑in‑a‑month hobbyists. Enterprise AI adoption is sticky once security, procurement, and change‑management have taken their pound of flesh.

- They can say no. The same company that just rejected a Pentagon program is picking its battles. That’s not just ethics branding; it’s pricing power. “We don’t have to chase every dollar” is catnip to risk‑averse enterprises trying to avoid the next PR firestorm.

It’s not that Anthropic is “catching up” in the same race. They joined a slightly different sport: high‑margin, slower‑scale B2B, with models and tooling tuned for “my boss will scream if this goes wrong.”

Scale vs. focus: how OpenAI and Anthropic are making different bets

Here’s the core contrast:

- OpenAI: maximize surface area. Consumer app, enterprise ChatGPT, API, voice, video, agents, education, creative tools.

- Anthropic: maximize contract value. Fewer surfaces, denser revenue: coding copilots, agentic workflows, high‑reliability Claude deployments.

One Reddit commenter nailed the vibe: OpenAI “focused on the people first,” Anthropic “on companies first.” That’s not just vibes; it maps to cost structure.

Imagine two dashboards in January 2027:

- OpenAI: 600M monthly actives, endless brand awareness, $40B run‑rate, thin gross margins because you’re serving massive free demand and subsidizing countless near‑break‑even accounts.

- Anthropic: 15M humans have ever touched Claude directly, $30B run‑rate, but 80-85% gross margins because almost everything is enterprise‑paying or cloud‑resold at a markup.

Who’s “winning” that game? Depends whether the market still rewards “eyeballs” like it did in 2010, or whether the next funding crunch demands durable, contract‑backed cash.

There’s also a product‑architecture angle nobody in the headline math talks about.

Enterprise buyers don’t want a model. They want:

- Reliability characteristics they can put in a risk memo (see: LLM reliability for business use)

- Tooling and logging that slot into their existing stacks

- Clear knobs for governance, red‑teaming, region‑locking, data‑retention

Anthropic has built a reputation around “Constitutional AI” and safety constraints basically as a product feature. They’ve tuned their research portfolio toward agents, code, RAG, and structured outputs that look like obvious enterprise use cases.

OpenAI meanwhile chased maximal frontier flash: Sora, lifelike voice agents, multimodal everything. Great for brand and for future platform bets. Less directly monetizable today than “the best code copilot your CFO can safely approve.”

Those are coherent but incompatible strategies. You don’t get to optimize for both short‑term enterprise margin and maximal global foothold without eating a lot of AI deflation risk along the way.

(If you haven’t thought through AI deflation risk, the TL;DR is: when everyone can do the same thing cheaper, prices collapse faster than demand grows. Consumer‑led AI makes that worse.)

So what now? Pricing, integrations, and where to place your bets

If you’re an enterprise, startup, or product team, these run‑rate stories should change how you buy and build, not because $25B vs $19B matters, but because of what’s underneath.

1. Assume enterprise pricing will harden.

Those “insanely costly per‑seat” plans people mention on Reddit? They exist because buyers are still in land‑grab mode and vendors are testing the ceiling.

As Anthropic proves that enterprise‑first is a viable path to $10B+ in revenue, everyone else will follow:

- Per‑seat and per‑function pricing

- Minimum monthly commits

- Premium SLAs, security, on‑prem/VPC options as separate SKUs

If you’re deploying agents across your org, bake in the assumption that your effective cost per employee is going to rise over the next 2-3 years as discounts evaporate and experiments turn into “business critical” line items.
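As a rough illustration of how that plays out, here is a small sketch of a per-seat contract with a minimum monthly commit. The list price, discount schedule, and commit amount are all invented for the example and do not reflect any vendor's actual pricing.

```python
def monthly_bill(seats, list_price, discount_pct, minimum_commit):
    """Discounted per-seat spend in dollars, floored at the minimum commit."""
    discounted = seats * list_price * (100 - discount_pct) // 100
    return max(discounted, minimum_commit)


def cost_per_seat(seats, list_price, discount_pct, minimum_commit):
    """Effective monthly cost per seat after discounts and the commit floor."""
    return monthly_bill(seats, list_price, discount_pct, minimum_commit) / seats


# Hypothetical contract terms: $60 list price per seat, $5,000 monthly minimum.
LIST_PRICE = 60
MINIMUM_COMMIT = 5_000

# Same 200 seats each year, with the launch discount shrinking over time.
for year, discount_pct in [(1, 40), (2, 20), (3, 0)]:
    per_seat = cost_per_seat(200, LIST_PRICE, discount_pct, MINIMUM_COMMIT)
    print(f"year {year}: ${per_seat:.2f} per seat per month")

# A small 50-seat rollout at full price is billed at the minimum commit instead.
small = cost_per_seat(50, LIST_PRICE, 0, MINIMUM_COMMIT)
print(f"50 seats: ${small:.2f} per seat per month")
```

Nothing about usage changes between the three years in this sketch; the effective cost per seat rises purely because the discount disappears, and the small rollout pays more per seat because the commit floor applies.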

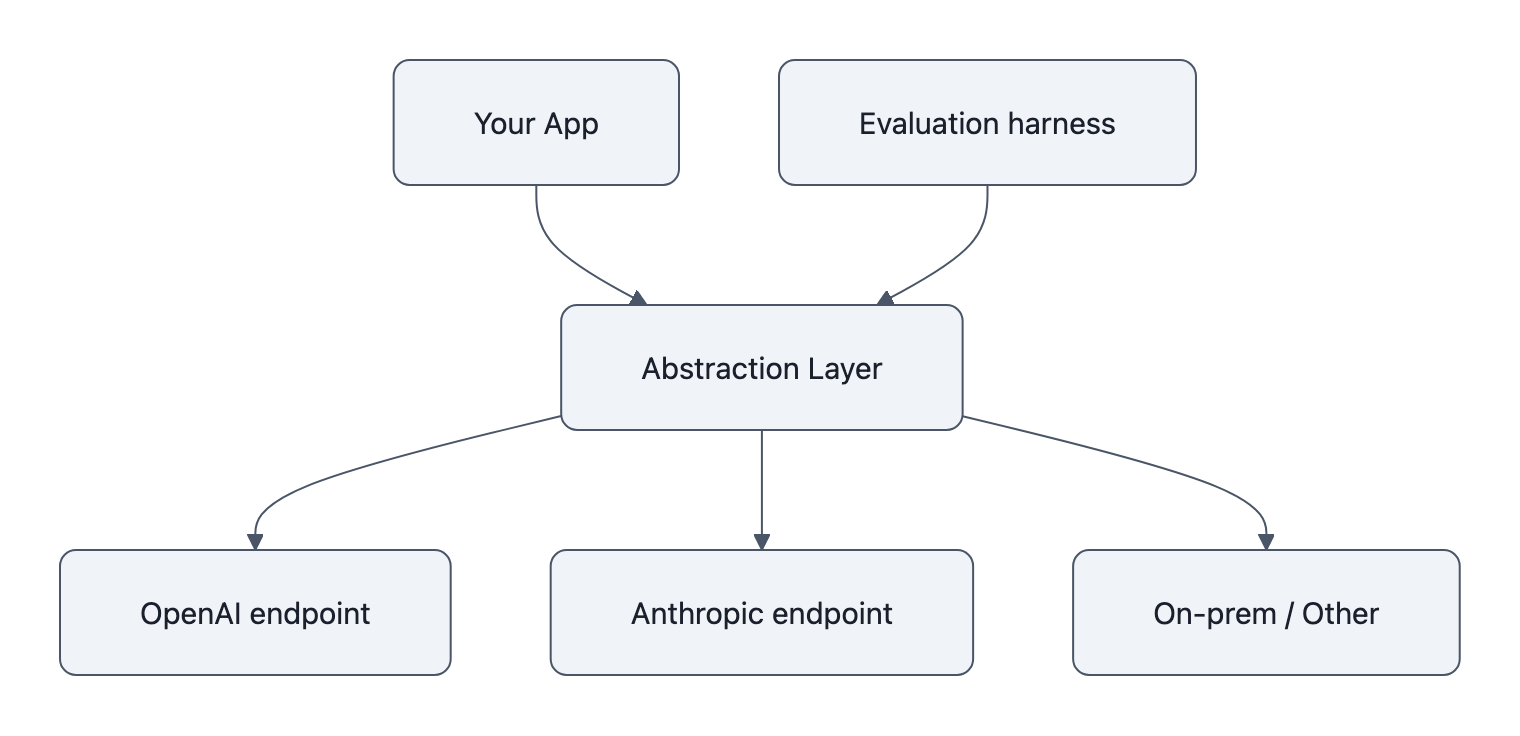

2. Don’t build anything where switching models is hard.

The rational response to “OpenAI vs Anthropic” becoming a pricing and margin war is: never let your app be hostage to one of them.

Architect like this:

- Put an abstraction layer in front of models

- Normalize prompts, tools, and outputs so you can hot‑swap backends

- Keep evaluation harnesses to compare cost/quality between providers on your own data

Today, OpenAI’s scale looks like a moat. In three years, the winning builder is the one who can switch between whichever model gives the best cost × reliability × latency on a per‑use‑case basis.
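One way to sketch that architecture, under loud assumptions: the `ModelBackend` interface, the `StubBackend` class, and the scoring formula below are all hypothetical and do not correspond to any provider's SDK. A real backend would wrap an actual API client behind the same `complete` method; the stubs here return canned answers so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass
class Completion:
    text: str
    cost_usd: float   # what this call cost
    latency_s: float  # how long it took


class ModelBackend(Protocol):
    """The only interface application code is allowed to depend on."""
    name: str

    def complete(self, prompt: str) -> Completion: ...


@dataclass
class StubBackend:
    """Stand-in for a real provider client; returns canned answers."""
    name: str
    answers: dict[str, str]
    cost_usd: float
    latency_s: float

    def complete(self, prompt: str) -> Completion:
        return Completion(self.answers.get(prompt, ""), self.cost_usd, self.latency_s)


EvalCase = tuple[str, Callable[[str], bool]]  # (prompt, pass/fail check)


def evaluate(backend: ModelBackend, cases: list[EvalCase]) -> float:
    """Assumed score: pass rate / (avg cost * avg latency). Higher is better."""
    results = [(backend.complete(prompt), check) for prompt, check in cases]
    pass_rate = sum(check(c.text) for c, check in results) / len(results)
    avg_cost = sum(c.cost_usd for c, _ in results) / len(results)
    avg_latency = sum(c.latency_s for c, _ in results) / len(results)
    return pass_rate / (avg_cost * avg_latency)


def pick_backend(backends: list[ModelBackend], cases: list[EvalCase]) -> ModelBackend:
    """Run the same eval cases against every backend and keep the best scorer."""
    return max(backends, key=lambda b: evaluate(b, cases))


# Your own data: prompts plus a check for what counts as a good answer.
cases = [
    ("2+2?", lambda text: "4" in text),
    ("Capital of France?", lambda text: "Paris" in text),
]

provider_a = StubBackend("provider_a", {"2+2?": "4", "Capital of France?": "Paris"},
                         cost_usd=0.004, latency_s=1.0)
provider_b = StubBackend("provider_b", {"2+2?": "4"},
                         cost_usd=0.003, latency_s=1.0)

best = pick_backend([provider_a, provider_b], cases)
print(f"route this use case to: {best.name}")
```

The design choice that matters is that application code only ever sees `ModelBackend`. Adding or dropping a provider means writing or deleting one adapter class and re-running the eval cases, not rewriting call sites. The scoring formula is just one plausible weighting; a real harness would likely set a minimum pass rate before cost is considered at all.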

3. Expect “free” to get worse.

OpenAI can only subsidize the world for so long. As cost pressure mounts and enterprise revenue becomes the only thing that matters for valuation, the obvious levers are:

- Fewer free calls

- Weaker models on free tiers

- More aggressive upsells

Anthropic already lives in the world where free is thin and paid is premium. They don’t have to retreat from a promise.

If you’re building consumer‑facing stuff on top of “free ChatGPT,” you’re stacking your product on sand.

4. Stop reading run‑rate charts like league tables.

These numbers are not a Google‑vs‑Meta style ad‑revenue scoreboard. They’re blurry snapshots of two companies transitioning from “burn GPU, win mindshare” to “please god show a path to profit.”

Ask instead:

- How much of that revenue is recurring, contract‑bound, and attached to specific SLAs?

- What’s the gross margin on incremental usage?

- How painful is it for a typical customer to switch?

On those questions, Anthropic’s strategy looks saner for Phase 2 of AI adoption. OpenAI still has massive advantages: data, dev mindshare, brand, and the ability to push new primitives (like their bidirectional audio model) into the broader platform stack.

But the match is no longer “who has the biggest model.” It’s “who can turn GPUs into predictable enterprise cash without racing to the bottom.”

Key Takeaways

- “OpenAI revenue 2026” at $25B and Anthropic’s ~$19B are run‑rate guesses, not audited facts: useful directionally, misleading as a scoreboard.

- OpenAI’s growth is built on a consumer‑heavy, free‑tier‑subsidized model that buys scale and mindshare at the cost of messy unit economics.

- Anthropic’s enterprise‑first, premium positioning produces smaller surface area but denser, more contractable revenue that actually lets investors sleep at night.

- Enterprise AI adoption is shifting power from “who has the flashiest demo” to “who sells the safest, most reliable, least‑swappable integration.”

- Builders should assume pricing will tighten, free access will erode, and the only sane move is architecting for multi‑model, easy‑switch deployments.

Further Reading

- OpenAI develops bidirectional audio model, boosts revenue run‑rate (The Information): Original report that pegs OpenAI at a $25B annualized run‑rate and notes Anthropic narrowing the gap.

- OpenAI tops $25 billion annualized revenue (Reuters via Yahoo Finance): Syndicated summary that repeats The Information’s figures and notes they’re unverified.

- Enterprises drive Anthropic run‑rate revenue to $19 billion (PYMNTS): Coverage citing Bloomberg on Anthropic’s rapid enterprise‑driven run‑rate growth.

- Anthropic raises funding, eyes enterprise sales (AP News): AP writeup on Anthropic’s funding, valuation, and explicit enterprise‑grade product focus.

- OpenAI CFO teases new money‑making plans (ITPro): Coverage of OpenAI’s confirmed $20B+ 2025 run‑rate and hints about their monetization strategy.

The scoreboard isn’t who can shout the biggest run‑rate; it’s who can survive when the hype cycle ends and AI has to pay its own GPU bill. Anthropic is playing that game on purpose. OpenAI is being dragged into it.