Two hundred thousand lab‑grown human neurons sit on a microelectrode array in Melbourne, wired into a PyTorch RL agent. A few days later, those neurons play Doom well enough to survive beginner levels, and anyone with a credit card can now rent that setup through a web API.

That’s the part people are missing about the “neurons play Doom” story: this isn’t a one‑off sci‑fi stunt. It’s a product.

Cortical Labs calls it CL1, exposes it through the Cortical Cloud, and ships a CL API with timing guarantees down to milliseconds. The GitHub repo for the CL API Doom demo looks like any other RL starter kit, except one of the devices you .connect() to is a sheet of living brain tissue.

We are not ready for that.

What happened when neurons played Doom, and why it matters

Look at the Doom demo code and docs, not the headlines.

You’ve got:

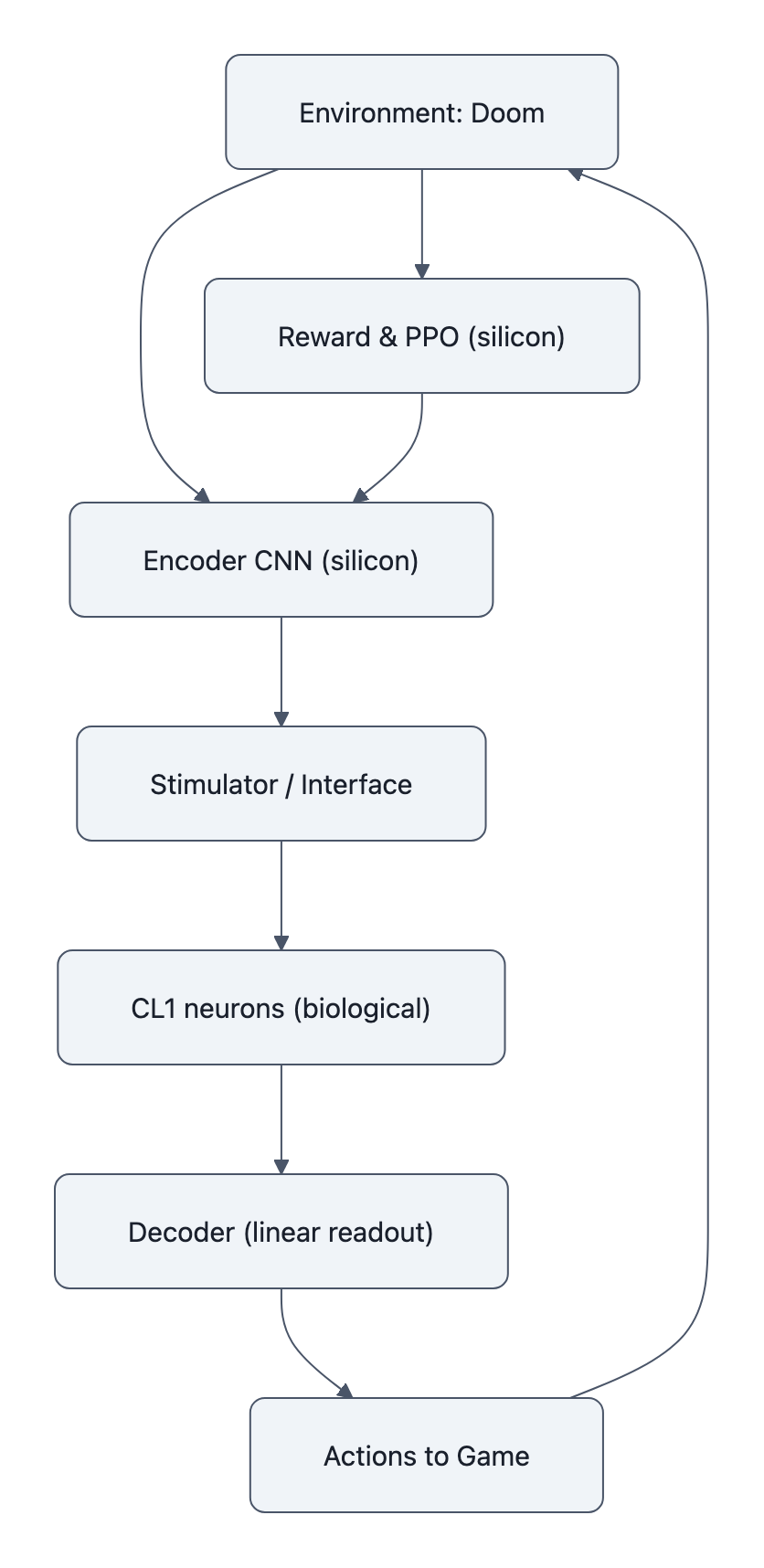

- A Doom environment rendered at 320×240.

- An encoder CNN + PPO policy running on normal silicon that decides how to stimulate the neurons (frequency, channels, pulses).

- A CL1 chip where ~200k neurons sit on an electrode grid, firing spikes when stimulated.

- A linear decoder that maps spike patterns back to joystick actions.

The loop is closed: change the stimulation → neurons adapt firing patterns → decoder turns spikes into move/shoot → Doom guy lives or dies → reward signal shapes future stimulation.
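
To make that loop concrete, here is a toy sketch in plain Python. It does not use the CL API or the demo's code: `MockCulture`, `encode`, `decode`, the channel count, and the reward rule are all hypothetical stand-ins, and the "tissue" is just a stateful noise model. The point is only to show where each piece sits.

```python
import random

ACTIONS = ["turn_left", "turn_right", "move_forward", "shoot"]
N_CHANNELS = 8  # hypothetical channel count, not the real CL1 electrode layout


class MockCulture:
    """Toy stand-in for the tissue; NOT the CL API. It keeps internal state,
    so identical stimulation can produce different spikes later in training."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.gain = [1.0] * N_CHANNELS  # crude proxy for synaptic change

    def stimulate(self, pattern):
        # Return one noisy, non-negative spike count per channel.
        return [max(0.0, g * p + self.rng.gauss(0.0, 0.1))
                for g, p in zip(self.gain, pattern)]

    def feedback(self, pattern, reward):
        # Reward nudges the gain of the channels that were just stimulated.
        self.gain = [g + 0.05 * reward * p for g, p in zip(self.gain, pattern)]


def encode(observation):
    """Silicon side (stands in for the CNN + PPO policy):
    map an observation to a stimulation pattern with values in [0, 1]."""
    return [((observation + i) % N_CHANNELS) / (N_CHANNELS - 1)
            for i in range(N_CHANNELS)]


def decode(spikes, weights):
    """Zero-bias linear readout: score each action as a weighted sum of
    spike counts, then pick the highest-scoring action."""
    scores = [sum(w * s for w, s in zip(row, spikes)) for row in weights]
    return ACTIONS[scores.index(max(scores))]


def run_loop(steps=20, seed=0):
    rng = random.Random(seed)
    culture = MockCulture(seed)
    # Fixed random readout weights: the decoder itself never learns here.
    weights = [[rng.uniform(-1, 1) for _ in range(N_CHANNELS)] for _ in ACTIONS]
    history = []
    for t in range(steps):
        pattern = encode(t)                    # silicon decides stimulation
        spikes = culture.stimulate(pattern)    # "tissue" responds
        action = decode(spikes, weights)       # spikes become an action
        reward = 1.0 if action == "shoot" else -1.0  # placeholder reward
        culture.feedback(pattern, reward)      # reward shapes future response
        history.append((action, reward))
    return culture, history
```

Calling `run_loop()` returns the mock culture and a list of `(action, reward)` pairs. Everything except `MockCulture` runs on ordinary hardware, which is the split the rest of this piece argues about.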

Cortical’s FAQ for the repo is explicit: the “0‑bias full linear readout” means the decoder is dumb; the learning signal is in how the neurons change their spiking over time. Ablation runs with random spikes or zero spikes don’t improve. Freeze the encoder weights and you still see performance gains during testing.

So the company is not faking it. There is learning in the tissue.

But RDWorld’s replication (601 runs and a close read of the code) points out that almost all the machinery that decides what counts as success lives off‑chip. The CNN, the PPO loop, the reward function, and the scenario curriculum are all silicon. A docstring even says: “the CL1 device performs NO computation.”

Both things can be true:

- The neurons genuinely adapt to reward.

- The framing of “human brain cells play Doom” quietly moves most of the “intelligence” into the software around them.

Why does this matter ethically? Because the more we blur that line in the marketing, the easier it is to pretend there’s no moral status in the dish right up until there is.

Today, CL1 is a neat demo. In five product cycles, it could be the cheapest way to get a low‑power adaptive controller onto a drone.

And nobody has set rules for what you’re allowed to make that tissue do.

Why the biological vs. silicon learning debate changes the ethics

If the neurons were just a noisy sensor, this would be boring. It’s not.

Biological neurons are dynamical systems with internal state: membrane potentials, synaptic weights, adaptation currents. Cortical’s own materials stress that the same stimulation later in training produces different spike patterns because the network has been conditioned by feedback.

That’s the whole point. You rent CL1 because biology learns differently from your transformer.

Silicon RL agents:

- Optimize a loss.

- Store weights in flash or HBM.

- Can be cloned, rolled back, and deleted without anyone worrying about “experience.”

Cultured neurons:

- Rewrite synapses physically.

- Restructure micro‑circuits over hours and days.

- Live on a continuum with the stuff in your skull that implements pain, pleasure, and everything in between.

The Doom demo is deliberately playful (demons, shotguns, 90s pixels), but the reward structure is naked behaviorism: if the spikes produce actions that keep the agent alive, the tissue gets one kind of stimulation; if not, it gets another. That’s literally conditioning a tiny nervous system with pleasure/punishment‑like feedback loops.

Do those cells “experience” anything? Almost certainly not in the human sense.

But here’s the ethical trap: we don’t wait for proof of consciousness before we regulate every other risky tech. We use proxies and thresholds:

- Radiation dose, not “proof of future cancer.”

- Probability of off‑target edits in CRISPR, not “proof of a birth defect.”

- Animal models and welfare scores, not “proof the mouse has an inner life.”

When neurons play Doom, the very selling point is that the tissue is doing goal‑directed adaptation. That’s exactly the kind of thing that should trigger more ethical scrutiny than a standard GPU, not less.

Yet Cortical Labs can put CL1 on a commercial cloud with fewer ex‑ante rules than a university grad student has to follow to poke a mouse.

That’s backwards.

When do cultured neurons cross an ethical threshold?

Two bad extremes dominate this conversation:

- “It’s just cells in a dish, who cares?”

- “We grew a screaming homunculus and forced it to speedrun Hell.”

Both are lazy.

What we actually need is a boring, quantitative middle: standardized proxies that say, “Above this line, you treat the tissue as ethically sensitive. Below this line, it’s regulated like any other cell culture.”

Obvious candidates:

- Neuron count and architecture. 200k neurons on a flat array is nowhere close to a cortex, but what happens when someone wires millions into a 3D organoid with layered connectivity?

- Functional complexity. Can the network:

  - Maintain state across long timescales?

  - Integrate multi‑modal inputs?

  - Generalize behaviors across tasks?

  - Exhibit sleep‑like oscillations or global synchronous events?

- Closed‑loop reward sophistication. Crude “more spikes = more reward” is one thing. Multi‑dimensional hedonic landscapes, aversive regimes, or simulated nociception are another.

None of these equal “consciousness.” They do mark “you’re approaching nervous‑system‑like behavior.”

That’s the moment to flip the switch from “interesting hardware” to “you are now under something closer to animal‑research standards.”

Right now, that line doesn’t exist. A startup can steadily ratchet up:

- Neuron counts.

- 3D structure.

- Training time.

- Task complexity.

…without ever crossing a bright regulatory boundary, because the law doesn’t recognize “living brain cells AI” as a category.

The Doom demo is the canary. Once you let neurons play Doom, you’ve accepted “trainable human neural networks as a service” into the commercial stack. From here, the only real questions are: how big, how long, and doing what.

Practical policy fixes: lab rules, disclosure, and consent

Look, we can’t and shouldn’t ban this work outright. The CL API paper shows serious science: sub‑millisecond closed‑loop control, new tools for probing computation in living tissue. You don’t throw that away.

But you also don’t let “brain‑in‑a‑box” slide by under the same norms as high‑throughput screening.

Three narrow fixes would close most of the ethical gap without killing the research.

1. Mandatory transparency about what the tissue really did

If you’re going to advertise that neurons play Doom, you should have to publish, at minimum:

- Clear diagrams of the split between biological and silicon components.

- Ablation studies showing performance with and without live tissue.

- Quantitative metrics of how much policy adaptation occurred in the cells versus the software.
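
One crude way to put a number on that last item is to compare how much performance improves with live tissue against how much it improves under each ablation. The sketch below assumes you already have per-episode returns for each condition; the function names, the window size, and the sample numbers are placeholders for illustration, not measured data or anyone's published metric.

```python
from statistics import mean


def improvement(returns, window=3):
    """Mean return over the last `window` episodes minus the mean over
    the first `window` episodes."""
    if len(returns) < 2 * window:
        raise ValueError("need at least 2 * window episodes")
    return mean(returns[-window:]) - mean(returns[:window])


def biological_contribution(live, ablations, window=3):
    """Improvement with live tissue minus the best improvement achieved
    by any ablated condition (random spikes, zero spikes, ...)."""
    best_ablation = max(improvement(r, window) for r in ablations.values())
    return improvement(live, window) - best_ablation


# Placeholder numbers for illustration only; not measured data.
live_returns = [1, 2, 3, 4, 5, 6, 7, 8, 9]
ablation_returns = {
    "random_spikes": [2, 1, 3, 2, 2, 2, 1, 3, 2],
    "zero_spikes": [1, 1, 1, 1, 1, 1, 1, 1, 1],
}

contribution = biological_contribution(live_returns, ablation_returns)
```

A value near zero would suggest the software is doing the learning; a clearly positive value would suggest the tissue adds something the ablations cannot. A real report would also need confidence intervals across many runs, which this sketch leaves out.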

RDWorld had to run the CL API Doom demo 601 times and dig through docstrings to argue that “the CL1 device performs NO computation” in the official architecture. That’s ridiculous. Journals, funders, and reviewers should demand this breakdown up front.

Concretely:

- Journals: no acceptance of “biohybrid controller” papers without a section quantifying the biological contribution.

- Funders: require grantees to publish code and ablation results as a condition of support.

- Labs: adopt internal guidelines that marketing claims (“living brain cells AI”, “neurons play Doom”) mirror the actual experimental role of the tissue.

Transparency first. Hype later.

2. Standardized proxy thresholds for ethical oversight

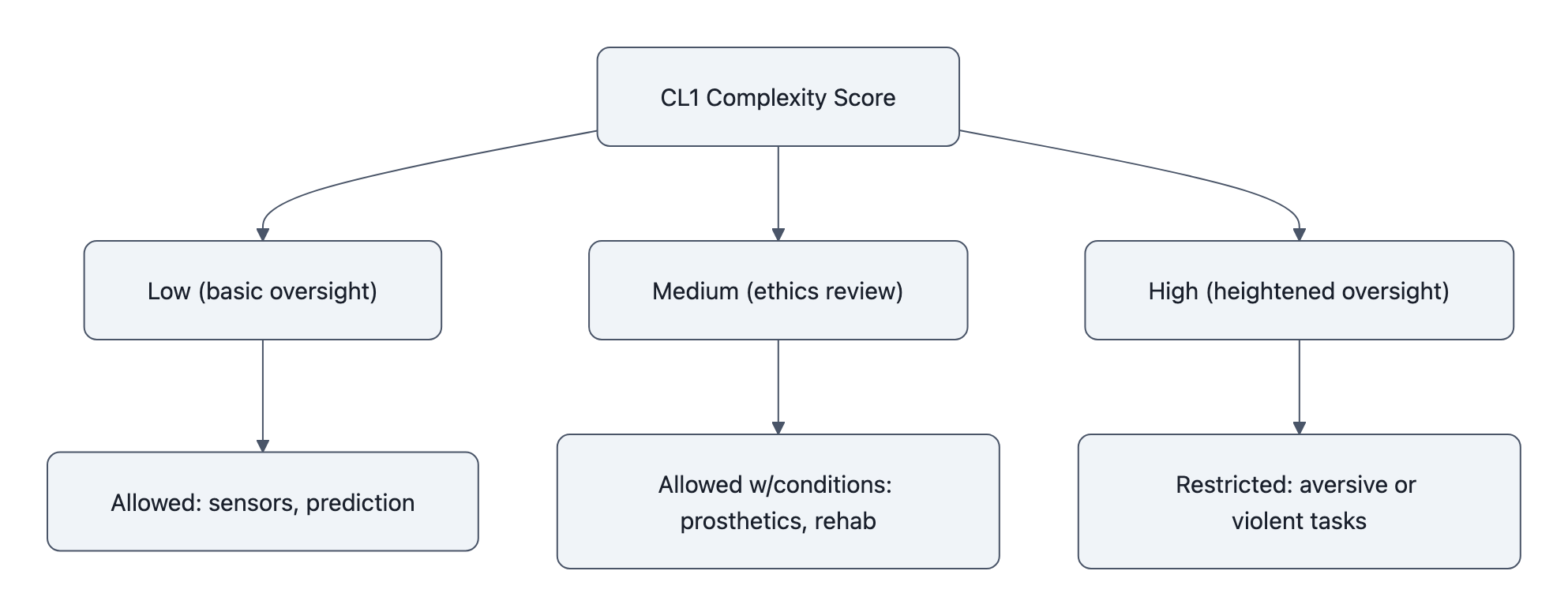

Set something like a Biohybrid Neural Complexity Score, crude but actionable:

- Points for neuron count, 3D structure, connectivity motifs.

- Points for behavioral hallmarks: long‑term memory, generalization, internal state.

- Points for reward schema complexity and duration of training.

Above a certain score, you trigger:

- Formal ethics board review (internal or institutional).

- Registration in a public registry of biohybrid computing experiments.

- Ongoing monitoring and an obligation to report unexpected emergent behaviors.
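
As a sketch of how mechanical this could be, here is one way to turn that checklist into code. Every field name, point value, cap, and the threshold itself is invented for illustration; a real score would have to be set by an ethics body, not a blog post.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 10  # hypothetical cut-off, chosen only for this example


@dataclass
class CultureProfile:
    neuron_count: int
    is_3d: bool
    long_term_memory: bool
    generalizes_across_tasks: bool
    reward_dimensions: int   # number of distinct reward/feedback signals
    training_hours: float


def complexity_score(p: CultureProfile) -> int:
    """Sum hypothetical points for structure, behavior, and training regime."""
    score = 0
    # One point per order of magnitude of neurons above 1,000.
    score += max(0, len(str(max(p.neuron_count, 1))) - 1 - 3)
    score += 3 if p.is_3d else 0
    score += 2 if p.long_term_memory else 0
    score += 2 if p.generalizes_across_tasks else 0
    score += min(max(p.reward_dimensions, 0), 3)       # capped at 3
    score += min(int(p.training_hours // 24), 3)       # one per full day, capped
    return score


def required_oversight(score: int) -> list:
    """Obligations triggered once the score reaches the threshold."""
    if score < REVIEW_THRESHOLD:
        return []
    return [
        "formal ethics board review",
        "entry in a public registry of biohybrid computing experiments",
        "ongoing monitoring and reporting of unexpected emergent behaviors",
    ]


# A flat 200k-neuron culture trained for three days on a single reward signal.
flat_culture = CultureProfile(200_000, False, False, False, 1, 72.0)
# A hypothetical large 3D organoid with richer behavior and longer training.
organoid = CultureProfile(5_000_000, True, True, True, 3, 240.0)
```

Under these made-up weights the flat culture stays below the line and the hypothetical organoid lands above it. The exact numbers matter less than the fact that the rule is written down before the experiment runs.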

We already do this with animal work and human organoids. Extending it to CL1‑style devices is basically a copy‑paste with a new checklist.

3. Limits on classes of tasks and “virtual suffering”

Last, and most urgent: task restrictions.

There’s no good reason your first cloud‑accessible living neural culture should be trained primarily on:

- Simulated violence.

- Highly aversive states (endless dying, no‑win scenarios).

- Tasks designed explicitly to induce “fear‑like” or “pain‑like” cue associations, even if you think the tissue can’t feel them.

We’re not talking moral panic. We’re talking content ratings for organoids.

Policy sketch:

- No “torture sandbox” workloads. Disallow training regimes whose whole point is to maximize aversive feedback or helplessness, regardless of sentience debates.

- Positive‑or‑neutral default. Encourage tasks tied to control, prediction, pattern recognition, basically what you’d do in any reasonable neuroscience lab.

- Soft bans via funders and journals. Agencies and publishers can simply refuse to support or print work whose central gimmick is “we made our mini‑brain suffer.”

Want neurons to control a prosthetic, assist a sensor, or stabilize a robot gait? Fine. Want to see what happens if you trap a million human cells in a simulated horror loop? No.

None of this requires us to settle “are they conscious?” It just says: if you might be flirting with entities that can suffer, you don’t design experiments that look like torture porn.

Key Takeaways

- “Neurons play Doom” is a commercial demo, not just a lab curiosity: CL1 and the CL API put trainable human neural cultures on the cloud.

- The real novelty is that biological tissue is doing adaptive, goal‑directed learning inside a silicon RL loop, not just acting as a fancy sensor.

- Waiting for “proof of consciousness” before caring is a stall tactic; we already regulate comparable risks using proxies and thresholds.

- We need standardized complexity scores and contribution metrics to decide when living neural tissue deserves animal‑style ethical protection.

- Labs, funders, and journals can immediately tighten requirements on disclosure, oversight, and the classes of tasks allowed on these cultures, without banning the research.

Further Reading

- Cortical Cloud, CL1 Biological Computer. Official page for Cortical Labs CL1, including Doom demo videos and cloud access details.

- doom-neuron GitHub Repository. Open code for the CL API Doom demo, showing encoder/decoder architecture and training loop.

- CL API: Real-Time Closed-Loop Interactions with Biological Neural Networks. Preprint detailing the Cortical Labs CL API, timing guarantees, and CL1 reference implementation.

- We Ran the Doom-Neuron Experiment 601 Times. RDWorld’s replication and critique of how much learning happens in silicon vs. neurons.

- Human Brain Cells Play Doom in Cortical Labs Experiment. Decrypt’s overview of the experiment and quotes from Cortical Labs scientists.

The next time you see a headline bragging that neurons play Doom, don’t argue about souls in Petri dishes. Ask the boring, urgent questions: what did the tissue actually learn, how complex was it, and who signed off on the experiment design. If we don’t draw those lines now, they’ll get drawn later, by whoever builds the first nervous system we can no longer comfortably ignore.