A Tennessee grandmother spent nearly six months in jail for crimes committed in Fargo, North Dakota, a state she’s never visited. It was a facial recognition wrongful arrest, built on an algorithmic match and some lazy copy‑paste detective work.

If your first reaction is “wow, that AI system was bad,” you’re already falling for the trick.

TL;DR

- “AI error” is being used as accountability laundering, a way for cops, prosecutors, and vendors to dodge blame for basic investigative failures.

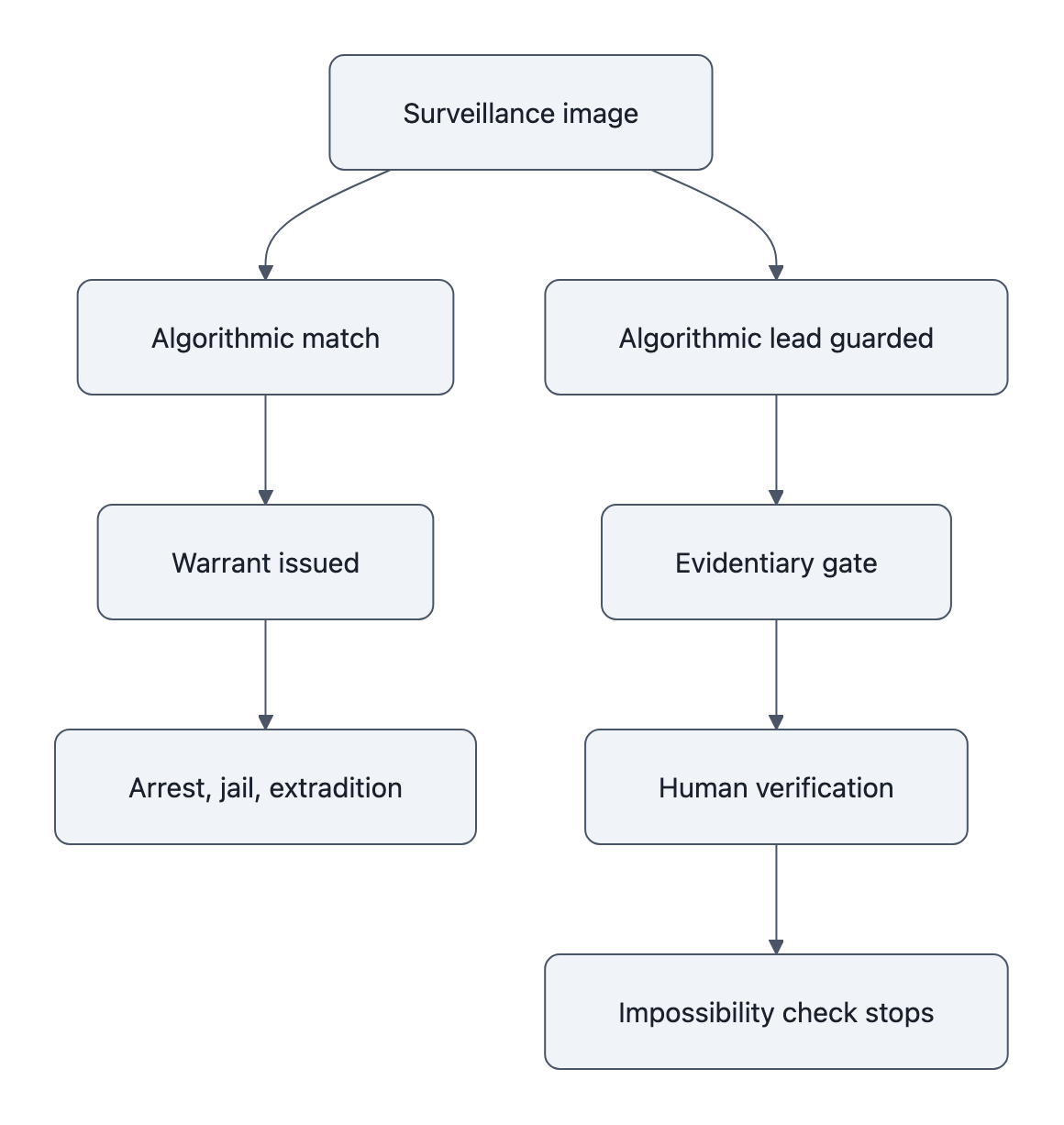

- Fixing this isn’t about waiting for better models; it’s about procedural guardrails: evidentiary gates, verification steps, extradition checks, and mandatory disclosure.

- You can push on this locally: ask your city what facial recognition they use, what safeguards exist, and who pays when it’s wrong.

Why the facial recognition wrongful arrest in Fargo matters now

Here’s the short version of the InForum/WDAY reporting:

- Fargo police ran surveillance footage from a bank fraud case through facial recognition.

- The system spit out Angela Lipps, a 50‑year‑old grandmother in rural Tennessee.

- Detectives checked her license and social media photos, wrote her into the warrant, and U.S. Marshals arrested her at home.

- She sat in a Tennessee jail for ~108 days as a “fugitive,” then was extradited to North Dakota, and was finally released when her lawyer pulled bank records proving she was in Tennessee during the Fargo crimes.

While she was locked up, she says she lost her home, her car, and even her dog. When North Dakota finally dropped the case, they left her stranded in Fargo with no money and no way home.

This is not “AI gone wrong” in the abstract.

This is what happens when institutions treat a probabilistic match as a golden ticket and turn off every other part of the justice system’s brain.

And this is about to be your town’s problem if your local police already use face search (Georgetown’s Perpetual Line‑Up report found that most major U.S. departments do).

This was human and institutional failure, not just an AI bug

If you were building a police facial recognition system, you’d do something like:

- Return a ranked list of possible matches, not a yes/no.

- Stamp every result with “INVESTIGATIVE LEAD ONLY, NOT PROBABLE CAUSE.”

- Require a human to confirm with additional evidence before doing anything drastic.
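
As a rough illustration, those three design rules could look something like the sketch below. Everything in it (`Candidate`, `SearchResult`, `build_result`, `may_act_on`, the score scale) is a hypothetical name made up for this post, not any vendor's actual API.

```python
from dataclasses import dataclass

LEAD_ONLY_NOTICE = "INVESTIGATIVE LEAD ONLY, NOT PROBABLE CAUSE"


@dataclass(frozen=True)
class Candidate:
    person_id: str     # hypothetical gallery identifier
    similarity: float  # hypothetical vendor score, assumed to be in [0, 1]


@dataclass(frozen=True)
class SearchResult:
    candidates: tuple  # ranked best-first; never a single yes/no answer
    notice: str = LEAD_ONLY_NOTICE  # stamped on every result


def build_result(scores: dict, top_k: int = 5) -> SearchResult:
    """Rank gallery scores and return the top_k candidates with the notice attached."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return SearchResult(tuple(Candidate(pid, s) for pid, s in ranked[:top_k]))


def may_act_on(result: SearchResult, human_confirmed: bool,
               corroborating_evidence: list) -> bool:
    """A lead is actionable only with human confirmation plus other evidence."""
    return bool(result.candidates) and human_confirmed and bool(corroborating_evidence)
```

The point of the shape: the output is always a list plus a warning, and the only function that says "go" takes arguments the face search can't supply by itself.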

All the decent policies say some version of this. NIST’s FRVT work shows even top‑tier systems have non‑trivial error rates and demographic biases. The ACLU’s facial recognition cases (e.g., Robert Williams in Detroit) show what happens when cops treat matches as fact.

Yet in the Lipps case, the detective reportedly:

- Took the match.

- Glanced at her license and Facebook photos.

- Filed charges and an extradition request.

No travel records check. No cell‑site data. No bank transactions. No “has this person ever left Tennessee?” sanity check.

Calling this an “AI misidentification” suggests the key failure was in the confusion matrix.

The real failure was:

- A detective who treated a vendor score as truth.

- A prosecutor who signed a charging document built on that.

- Tennessee and North Dakota judges who green‑lit months of detention and extradition on that paper.

- A jail system that kept her locked up while a trivial check (her out‑of‑state bank transactions) sat undone.

If an eyewitness had misidentified her, we’d be talking about investigative malpractice and due‑diligence failures. Because the source is an algorithm, everyone shrugs and points at “AI.”

That’s accountability laundering: move the blame from specific humans with names and jobs to a foggy “technology error” that can be “fixed” with an upgrade.

We’ve seen this story before in other domains, like AI accountability after the Iran strike, where “algorithmic targeting” conveniently spread responsibility across a blurry system chart.

Same pattern. Different stakes. Same dodge.

Three practical fixes that would have prevented months of wrongful detention

If you strip away the AI branding and look at this like an engineering problem, you get a simple requirement:

“No one may be arrested, jailed, or extradited solely because a computer says two faces look alike.”

Let’s turn that into guardrails that would have stopped the Lipps case early.

1. Evidentiary gates: a facial match can’t cross probable cause alone

Implementation rule: Facial recognition hits are “tip‑level” only. To move from tip to warrant, you need at least one independent corroborating source.

Concrete examples of “independent” in a case like Fargo:

- Travel or location data showing the suspect in the right city (flight, bus, toll, phone).

- Financial activity near the crime (local purchases, ATM use).

- Witness description matching non‑facial attributes (tattoos, height, accent).

- Connection between the suspect and the victim or bank (employment, account, shared address).

In Lipps’ case, her attorney later pulled her bank records and immediately disproved the allegation. That same check is what police should have been required to do before requesting extradition.
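
One way to picture the gate is as a small predicate over the evidence in the file. This is a minimal sketch under assumed names (`Source`, `Evidence`, `clears_evidentiary_gate` are invented for illustration), and the extra rule that contradicting evidence blocks the request is this sketch's own assumption about how a department might encode it.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    FACIAL_MATCH = "facial_match"
    LOCATION = "travel_or_location"
    FINANCIAL = "financial_activity"
    WITNESS_NONFACIAL = "witness_nonfacial"
    VICTIM_CONNECTION = "victim_connection"


@dataclass(frozen=True)
class Evidence:
    source: Source
    supports_suspect: bool  # False if the evidence points away from the suspect
    note: str = ""


def clears_evidentiary_gate(items: list) -> bool:
    """True only if something other than the face match supports the suspect
    and no independent source contradicts it."""
    independent = [e for e in items if e.source is not Source.FACIAL_MATCH]
    if any(not e.supports_suspect for e in independent):
        return False  # contradicting evidence blocks the warrant request
    return len(independent) > 0
```

A face match alone returns `False`. So does a face match plus bank records showing transactions in another state, which is roughly the check that freed Lipps months too late.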

The tradeoff: this slows some investigations down. You can’t go from video → match → warrant in one afternoon.

But that’s the cost of not turning your face into an all‑purpose arrest coupon.

2. Human‑verification protocols: “show your work” on every match

Verification today is often vibes‑based: a detective stares at side‑by‑side photos and says “looks good.”

Two problems:

- Humans are way too confident in their ability to tell similar faces apart (see our piece on AI‑generated faces: people routinely rate fake faces as more real than real ones).

- Once you know the algorithm’s answer, you’re anchored. You see similarity even where it’s weak.

A better protocol looks like this:

- Mask the candidate’s identity (no name, no social feed, just the image).

- Use two independent reviewers, trained in facial comparison, who don’t see the algorithm’s confidence score.

- Force them to document specific feature matches (“ear shape,” “nose bridge,” “scar under left eye”) and specific differences.

- Store that worksheet as part of the case file.
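
The protocol above could be sketched roughly like this. The field names, the `blind_packet` helper, and the rule that both reviewers must independently conclude "same" are assumptions made for illustration, not a published standard.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewWorksheet:
    reviewer_id: str
    matching_features: list = field(default_factory=list)   # e.g. "ear shape"
    differing_features: list = field(default_factory=list)  # e.g. "chin"
    conclusion: str = "inconclusive"  # "same", "different", or "inconclusive"


def blind_packet(candidate: dict) -> dict:
    """Strip identity and the algorithm's score; reviewers see only the images."""
    return {
        "probe_image": candidate["probe_image"],
        "candidate_image": candidate["candidate_image"],
    }


def lead_survives(worksheets: list) -> bool:
    """Require two distinct reviewers who both conclude 'same' and who each
    documented at least one specific matching feature."""
    reviewers = {w.reviewer_id for w in worksheets}
    if len(reviewers) < 2:
        return False
    return all(w.conclusion == "same" and bool(w.matching_features) for w in worksheets)
```

Because the worksheets are plain records, they can be stored in the case file as‑is and handed to the defense later.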

If the differences are obvious (as Reddit commenters noted when comparing Lipps to the surveillance still: nose, chin, overall shape), that should kill the lead or at least flag it as high‑risk.

The tradeoff: more paperwork, more training, more time.

The upside: when things go wrong, you can tell whether this was:

- A borderline machine call on a low‑quality frame, or

- A human who ignored obvious mismatches.

Right now, those two failure modes look the same in public: “AI error.”

3. Custody and extradition safeguards: stop the train when someone says “wrong person”

The most absurd part of the Lipps timeline isn’t the initial match. It’s how long the system kept going after she said, repeatedly, “I’ve never been to North Dakota.”

If you were designing this as a workflow:

- “Defendant claims total geographic impossibility” should be a blocking state, not a comment in the log.

- That state should require a specific “impossibility check” before the case can move from:

  - local holding → extradition approval, or

  - extradition → continued pretrial detention.

For out‑of‑state warrants, a simple checklist could be:

- Verify travel records (flights, buses) within ±48 hours of alleged offense.

- Check local presence evidence (employment logs, nearby purchases).

- Run a live interview where the person can point to concrete alibis (like Lipps’ bank withdrawals).

If this check isn’t done within, say, 7 days, the person gets released pending further investigation.
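
Treated as a tiny state machine, the idea might look like the sketch below. The status names, the `alibi_confirmed` field, and the `advance` function are all hypothetical; the 7‑day constant is just the illustrative figure from the paragraph above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import Optional

CHECK_DEADLINE = timedelta(days=7)  # the "say, 7 days" figure, not a legal standard


class Status(Enum):
    LOCAL_HOLDING = "local_holding"
    BLOCKED_IMPOSSIBILITY_CLAIM = "blocked_impossibility_claim"
    EXTRADITION_APPROVED = "extradition_approved"
    RELEASED_PENDING_INVESTIGATION = "released_pending_investigation"


@dataclass
class Case:
    status: Status = Status.LOCAL_HOLDING
    claim_date: Optional[date] = None
    alibi_confirmed: Optional[bool] = None  # None means the check has not been done


def claim_impossibility(case: Case, today: date) -> None:
    """'I've never been there' moves the case into a blocking state."""
    case.status = Status.BLOCKED_IMPOSSIBILITY_CLAIM
    case.claim_date = today


def advance(case: Case, today: date) -> Status:
    """Try to move the case forward; a pending claim blocks extradition."""
    if case.status is Status.BLOCKED_IMPOSSIBILITY_CLAIM:
        if case.alibi_confirmed is None:
            # Check not done: stay blocked, or release once the deadline passes.
            if today - case.claim_date > CHECK_DEADLINE:
                case.status = Status.RELEASED_PENDING_INVESTIGATION
        elif case.alibi_confirmed:
            case.status = Status.RELEASED_PENDING_INVESTIGATION
        else:
            case.status = Status.EXTRADITION_APPROVED
    elif case.status is Status.LOCAL_HOLDING:
        case.status = Status.EXTRADITION_APPROVED
    return case.status
```

The design choice that matters is the default: if nobody does the check, the clock runs out in the detainee's favor, not the paperwork's.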

The tradeoff: a small increase in risk that a real suspect avoids extradition for a while.

But stack that against six months of wrongful incarceration and permanent life damage. From a systems point of view, the current cost function is wildly mis‑tuned.

What policy and legal accountability must look like next

The core problem isn’t that face recognition exists.

The problem is that nobody with power is forced to care when it’s wrong.

Right now:

- Vendors hide behind “we just provide a tool.”

- Police say “the system identified her; we followed procedure.”

- Prosecutors say “we relied on law enforcement’s representations.”

- Judges point to overloaded dockets and boilerplate warrants.

To break accountability laundering, you need specific duties and specific consequences:

- Mandated disclosure.

  - Every defense attorney should automatically get: the vendor name, model version, confidence score, candidate list length, and all policy docs that governed its use.

  - Non‑disclosure? Evidence suppressed.

- Audit trails.

  - Every search is logged with who ran it, which images they used, and where it was used as evidence.

  - Periodic external audits (think: state AG or independent lab) compare usage against policy and error benchmarks.

- Civil liability with teeth.

  - If a wrongful arrest built on a facial recognition match happens because policies weren’t followed (no corroboration, no impossibility check), the department and city pay, not just “the insurance company.”

  - Vendors that market “frictionless identifications” for policing without proper warnings and policy templates should share that liability.

- Local veto power.

  - City councils can flat‑ban police facial recognition or require opt‑in with strict guardrails, as some cities did after ACLU‑documented abuses.

  - That’s where you come in.

If you want something concrete to do this month:

- Ask your city or county: Do our police use facial recognition? Which vendor? Under what written policy?

- Ask your public defender’s office whether they’re notified when a case rests partly on an algorithmic match.

- Ask your state rep what guardrails exist on AI in policing and whether wrongful arrests tied to an algorithmic match trigger mandatory reviews.

You don’t have to be a lawyer to ask. These are basic operational questions.

Key Takeaways

- A facial recognition wrongful arrest isn’t a sci‑fi edge case; this one just cost an innocent woman nearly six months of her life for crimes 1,200 miles away.

- “AI error” headlines hide the real story: detectives, prosecutors, and judges treating a face match as proof instead of a fallible lead.

- Three simple guardrails (evidentiary gates, real human verification, and impossibility checks before extradition) would likely have stopped this case.

- Technical model improvements help, but without legal duties, audit trails, and liability, accountability gets laundered into a fog of “the system failed.”

- You can push locally: demand transparency on police facial recognition, written safeguards, and consequences when they’re ignored.

Further Reading

- “AI error jails innocent grandmother for months in Fargo case” (InForum): original local reporting on the Lipps case, with timeline, documents, and interviews.

- “Face Recognition Vendor Test (FRVT) Part 3: Demographic Effects” (NIST): benchmark study showing performance gaps and error rates in commercial face recognition systems.

- “The Perpetual Line‑Up” (Georgetown Law): deep dive into police face recognition use and the regulatory vacuum it sits in.

- “Face Recognition Technology” (ACLU): overview of documented wrongful arrests and the ACLU’s policy recommendations.

- “AI accountability after the Iran strike”: how militaries use “the algorithm” to blur responsibility for lethal decisions.

The hard part here isn’t building a better classifier.

It’s building institutions that refuse to outsource judgment to a confidence score, and that pay a real price when they try.