The woman at the folding table has two résumés in her bag and ChatGPT open on her phone.

She was laid off from a mid-size marketing agency in January. Officially: “AI efficiency gains.” Unofficially: her manager hinted budgets were a mess and “everyone in the C‑suite wants an AI slide in the next board deck.” She scrolls past another headline about AI and unemployment and then, almost reflexively, past a different one about deepfake scams.

She doesn’t trust the thing that supposedly took her job.

She also doesn’t trust the people who say it did.

TL;DR

- A modest rise in unemployment is not the scary part of the AI and unemployment story; the scary part is that 60-77% of Americans say they distrust AI at the same time.

- That distrust turns even small, slow-moving labor shifts into political and institutional problems, because no one believes the story they’re being told about what’s happening.

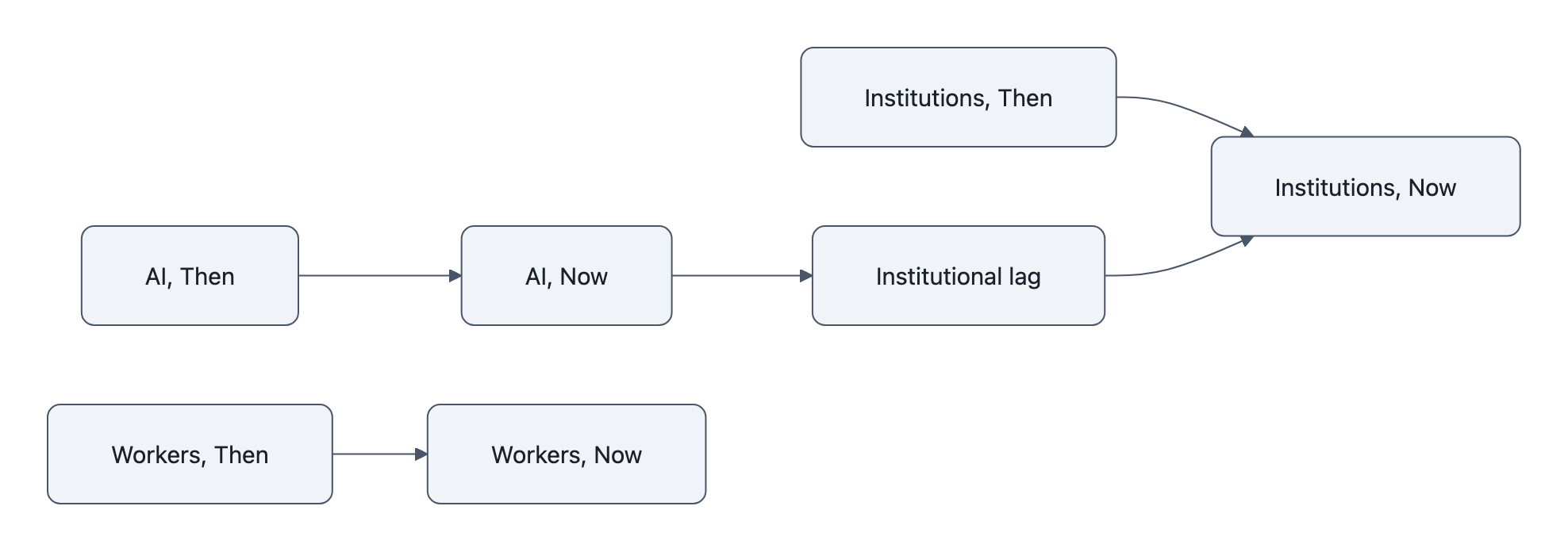

- The real risk is an “institutional lag”: firms, safety nets, and civic institutions are still built for factory-style shocks, while AI quietly rewires information work and decision‑making.

AI and Unemployment: Why Distrust Is the Real Early Warning

Two facts, compressed.

Official U.S. unemployment ticks up to around 4.4%. Surveys from Pew, Gallup, and others say roughly 60-77% of Americans feel uneasy or outright distrustful of AI.

Neither number alone screams crisis. Together, they describe a society where people expect technology to hurt them and don’t expect institutions to protect them.

That combination is the real early warning.

Because AI and unemployment interact less like cause and effect and more like accelerant and dry brush. If people already believe AI is a scam, a layoff “for AI reasons” doesn’t land as modernization. It lands as betrayal.

And betrayed people act differently than merely displaced people.

They resist retraining. They vote for punishment instead of adaptation. They uninstall the apps and sabotage the rollouts. (We just saw a version of that with the surge in ChatGPT uninstalls: not a technical failure, but a trust rejection.)

How AI Displaces Knowledge Work: Slow, Broad, Hard to Absorb

If you grew up with factory metaphors for automation, AI is a confusing villain.

Robots on a line are visible. One machine, one job gone, one negotiation with the union. Political systems know how to stage that fight.

AI is different. It seeps.

A customer support team loses 20% of its headcount because an assistant can answer the easiest tickets. A hospital billing department stops replacing retirees because the claims software “got smarter.” A law firm quietly sends first‑draft contracts through an LLM, then needs fewer junior associates three years later.

No mass closure. No singular event. Just a thousand tiny absences.

Our own earlier piece, “Can AI Already Replace 11.7% of the U.S. Workforce?”, made this clear in a different way: the exposed jobs weren’t just cashiers or drivers, but paralegals, bookkeepers, and marketing coordinators, the connective tissue of information work.

This matters for AI and unemployment because it changes how pain shows up:

- Not as a wave of layoffs in one town, but as hiring freezes scattered across many.

- Not as “your factory closed,” but “we just don’t need a full-time person for that anymore.”

- Not as a newsworthy catastrophe, but as a slow erosion of opportunity.

It’s the kind of shift that can keep unemployment averages looking “fine” while making daily life feel worse and more precarious for millions.

People don’t see a robot take their job.

They see an email citing “AI efficiency” and a macroeconomy that was already wobbly: pandemic over‑hiring, higher interest rates, and deflation concerns like Citi’s AI deflation warning.

Mix that with high distrust, and the most plausible story becomes: We are being lied to.

Institutions Built for Factories, Not Models: The Adaptation Gap

Here’s the deeper problem: our institutions still expect the factory story.

Labor law loves clear categories: employee or contractor, laid off or not, plant open or closed. Unemployment insurance assumes a discrete event you can point to. Retraining programs assume you can say, with some confidence, which occupations are dying and which are growing.

But AI doesn’t kill an occupation. It hollows it out.

McKinsey’s work on the future of jobs and skills keeps circling the same point: tasks, not whole jobs, are being automated. A marketing analyst still exists, but with a different mix of duties. A project manager still exists, but they’re suddenly also an AI prompt farmer.

Our systems barely know what to do with that.

So you get institutional lag: AI changes what work looks like, but firms, safety nets, and civic rules don’t adjust in time.

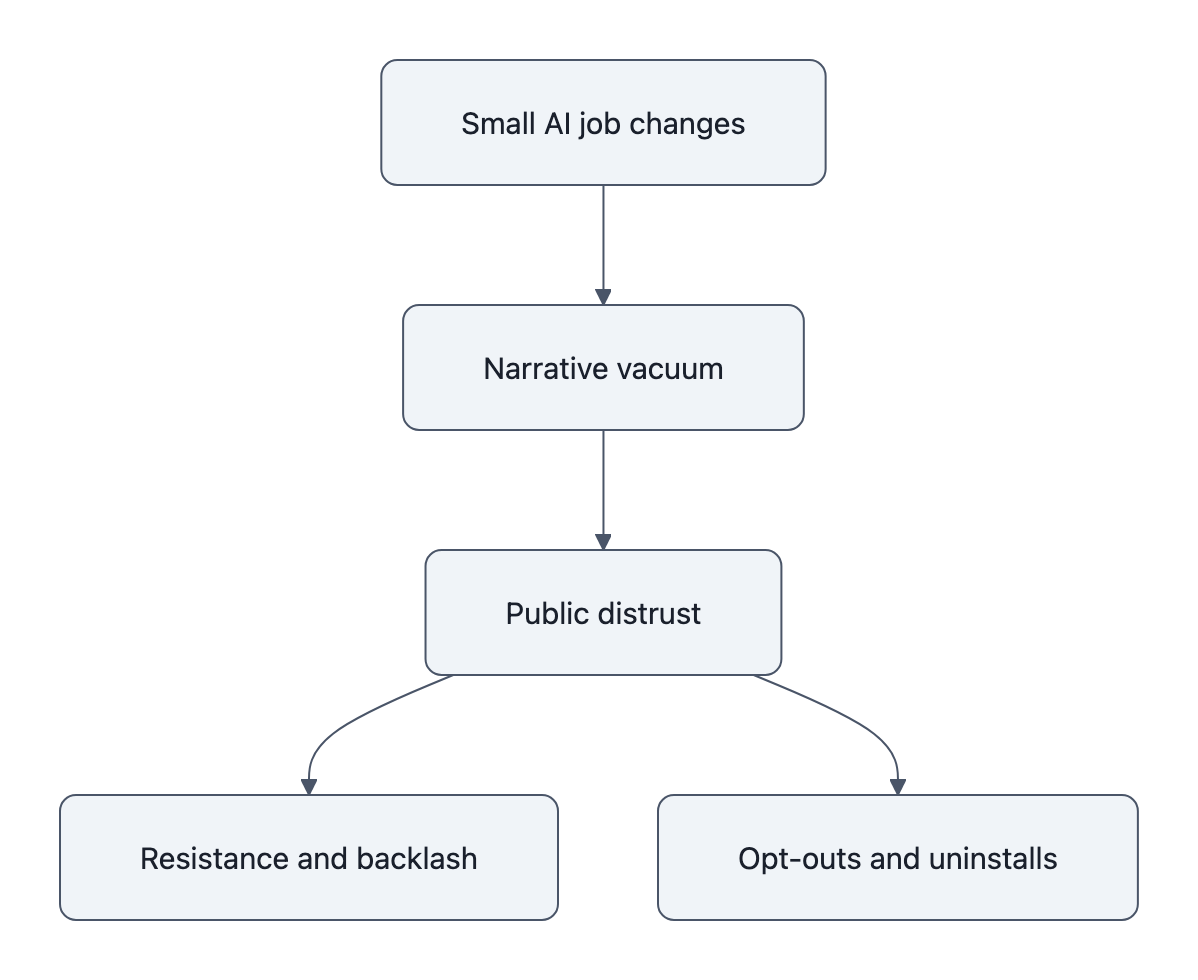

When that happens, every small change feels larger than it is, because it falls into a vacuum of explanation.

- A company trims 5% of staff and attributes it to AI. Maybe it’s half true, half macro slowdown. Without trusted intermediaries (unions, local press, public agencies) to dissect that story, the narrative hardens: They used AI as cover to dump us.

- A government pilot uses AI for benefits eligibility. Even if it slightly reduces errors, any visible mistake confirms the suspicion that “the algorithm” is rigged.

- A university pushes AI‑heavy curricula without articulating where the new jobs actually are. Students feel like guinea pigs, not beneficiaries.

The details matter less than the pattern: institutions are late and opaque, and distrust rushes in to fill the gap.

What This Means for Workers, Managers, and Civic Trust

For our woman at the job fair, the unemployment tick and the survey on AI attitudes collapse into one feeling: no one is steering this thing for my benefit.

Here’s where the argument turns a bit uncomfortable:

If you are a manager rolling out AI tools, or a policymaker saying “we’re monitoring AI and unemployment closely,” you are part of the institutional lag unless you treat distrust as data, not as noise.

Workers are not just asking, “Will I lose my job?”

They’re asking:

- “Who decides when a ‘pilot’ becomes my replacement?”

- “If AI makes the company richer, how does any of that reach me?”

- “When something goes wrong (a hallucinated report, a biased recommendation), who is accountable: the developer, my boss, or the ghost in the machine?”

These are governance questions, not UX bugs.

And they have very practical signals attached:

- Watch what executives blame in earnings calls. When “AI” becomes the go‑to euphemism for cost cutting, expect trust erosion to outpace real automation.

- Watch benefit systems and HR software. When AI is embedded there, errors become civic injuries, not just product annoyances.

- Watch opt‑out behavior: uninstalls, shadow bans on internal tools, quiet refusal to use “mandatory” assistants. That’s the smoke before the political fire.

For individuals, the adaptation play is paradoxical:

You should probably learn to work with AI, because the exposed tasks in your job will change, and at the same time ask much more pointed questions about how your firm counts and shares the gains.

For organizations, the real risk isn’t simply “adopting AI too fast.” It’s adopting it secretly and narrating it badly. A modest AI productivity bump is not worth a legitimacy hit that takes years to repair.

Distrust isn’t a PR problem. It’s the canary telling you your institutional story no longer matches lived experience.

Key Takeaways

- On AI and unemployment, the jobless numbers look mild, but pairing them with 60-77% public distrust of AI reveals a deeper institutional lag.

- AI displaces knowledge work through slow, task‑level erosion, which our factory‑era laws, benefits, and narratives are bad at recognizing.

- That mismatch makes even small shocks feel like scams, amplifying political anger, resistance, and disengagement from both tools and institutions.

- Managers and policymakers who dismiss distrust instead of treating it as an early warning will experience slower adoption and sharper backlash, even if actual automation is modest.

Further Reading

- “Can AI Already Replace 11.7% of the U.S. Workforce?”: A closer look at which jobs are most exposed to current AI capabilities.

- “ChatGPT Uninstalls Surge 295%: Why It Matters Now”: On consumer pushback and what distrust looks like in practice.

- “AI Deflation Risk: Why Citi’s Warning Matters for Policy”: How rapid AI adoption could ripple through prices, wages, and macro policy.

- “Employment Situation” (Bureau of Labor Statistics): Official monthly data on unemployment and labor force participation.

- “What the future of work will mean for jobs, skills, and wages”: Evidence-based scenarios for technology-driven labor shifts.

In a few months, our job‑seeker will sit at a different folding table, maybe with a role that asks her to be “AI‑augmented.” What will matter most then isn’t whether a model drafts half her emails; it’s whether she believes the humans around that model are still on her side.