She was babysitting four kids when the guns came out.

In July 2025, U.S. Marshals showed up at Angela Lipps’ home in north‑central Tennessee, pointed weapons, and took the 50‑year‑old grandmother away as a fugitive. By Christmas Eve, after 163 days in custody and a one‑way extradition to a state she’d never visited, North Dakota finally admitted they had the wrong woman, the product of a facial recognition misidentification.

To call this “an AI error” is technically true and emotionally evasive. What locked Lipps in a cell was not just a faulty match. It was what I’ll call evidence laundering: a blurry surveillance still gets run through a proprietary model and comes out the other side wearing the costume of hard proof.

TL;DR

- The Fargo case isn’t mainly about a buggy model; it’s about a false match being laundered into “evidence” no one dared question.

- Facial recognition misidentification is predictable, especially across demographics, but only becomes catastrophic when workflows and courts treat outputs as near‑conclusive.

- Fixing this means changing who gets to turn an algorithmic hunch into a warrant, not just tightening the code or nudging accuracy a few percentage points.

What happened in the Fargo case

Here’s the compressed version, because the details are awful, but the pattern is familiar.

Fargo detectives had bank‑fraud surveillance images. They ran them through facial recognition. The system spat out Angela Lipps’ name. A detective then looked at her Tennessee driver’s‑license photo and social‑media pictures and decided the match was good enough to write up charging documents for fraud and ID theft in a state she’d never seen.

Tennessee booked her as a fugitive. North Dakota took 108 days to come get her. She sat, without bail, while the machine’s hunch hardened into her new reality.

Only in December, when her North Dakota lawyer walked into a meeting with bank statements showing Lipps in Tennessee (making deposits, cigarette runs, and Uber Eats orders at the exact times of the Fargo withdrawals), did the case fall apart. Four days later she was free, without an apology, having lost her home, her car, and her dog.

That’s the timeline. The more interesting question is: how did a single algorithmic suggestion survive five months of human contact without disintegrating?

Facial recognition misidentification isn’t a plot twist; it’s the baseline

Start with the tech, briefly.

Face recognition systems don’t “see” a person. They convert an image into a vector, a long list of numbers representing facial features, and compare that vector to millions of others. If the distance between two vectors is small enough, you get a match.
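To make the vector comparison concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the three-number "embeddings", the names `person_a` through `person_c`, the choice of cosine distance, and both thresholds. Real systems use far longer vectors, and the vendor in the Fargo case has not published how its matching works.

```python
import math

def cosine_distance(a, b):
    """Return 1 - cosine similarity; 0.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def candidates(probe, gallery, threshold):
    """Return (name, distance) pairs at or under the threshold, closest first."""
    hits = [(name, cosine_distance(probe, vec)) for name, vec in gallery.items()]
    return sorted((h for h in hits if h[1] <= threshold), key=lambda h: h[1])

# Hypothetical toy gallery; none of these numbers come from a real system.
gallery = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.1, 0.8, 0.5],
    "person_c": [0.85, 0.2, 0.35],
}
probe = [0.88, 0.15, 0.3]  # stand-in for a vector made from a surveillance still

strict = candidates(probe, gallery, threshold=0.002)
loose = candidates(probe, gallery, threshold=0.05)
print("strict:", [name for name, _ in strict])
print("loose: ", [name for name, _ in loose])
```

With these toy numbers the strict cutoff returns one candidate and the loose cutoff returns two. Nothing in the code knows whether either candidate is the person in the image; it only knows which vectors sit close together.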

There are at least three ways this can go wrong:

- Bad input: grainy, angled, occluded surveillance footage. The model is being asked to identify a person from the kind of image you’d hesitate to show your own family.

- Threshold games: tighten the score cutoff and you miss real suspects; loosen it and you get more false hits. Many departments don’t actually understand these dials; they just rely on default vendor settings.

- Demographic disparities: the NIST FRVT Demographic Effects report ran dozens of algorithms and found higher false‑positive rates for certain groups, especially Black and Asian faces, and in some cases women more than men. Error isn’t evenly distributed; it clusters.

In other words, a false match in a real‑world police workflow is not surprising. It’s what you should expect when you combine low‑quality footage, unclear thresholds, and demographic skew.
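A back-of-the-envelope calculation shows why. The sketch below assumes each comparison against the gallery is independent and uses made-up figures for gallery size and per-comparison false match rate; neither number comes from NIST, the vendor, or the Fargo case.

```python
def expected_false_matches(gallery_size, false_match_rate):
    """Average number of wrong people returned by one search of the gallery."""
    return gallery_size * false_match_rate

def prob_at_least_one_false_match(gallery_size, false_match_rate):
    """Chance that a single search returns at least one wrong person,
    assuming every comparison is independent (a simplification)."""
    return 1.0 - (1.0 - false_match_rate) ** gallery_size

# Illustrative assumptions only.
gallery_size = 10_000_000   # faces searched, e.g. a large license-photo database
false_match_rate = 1e-6     # one wrong match per million comparisons

expected = expected_false_matches(gallery_size, false_match_rate)
p_any = prob_at_least_one_false_match(gallery_size, false_match_rate)
print(f"expected false matches per search: {expected:.1f}")
print(f"chance of at least one false match: {p_any:.4f}")
```

Under those assumptions, a rate that sounds excellent per comparison still yields about ten wrong candidates per search, and a near certainty of at least one. Poor image quality and demographic skew only push the real-world figure higher.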

And yet, in Fargo, that inherently noisy guess, the system’s “maybe her?”, traveled through the system as if it were a lab‑tested DNA hit.

That jump, from maybe to must be, is the part we don’t talk about enough.

How a false match becomes “evidence”: automation bias plus broken workflows

The InForum reporting mentions a curious contrast: Fargo saw the facial‑recognition ID and moved ahead with charges. A nearby department, West Fargo, reportedly saw the same algorithmic identification and chose not to forward charges, saying AI alone wasn’t enough.

Same output. Two opposite reactions.

What changed wasn’t the model. It was the institutional habit of mind around the model.

In Fargo’s case, you can see a chain of small capitulations:

- The detective runs the photo, gets a name. Already, the interface is likely nudging him: confidence scores, green checkmarks, neat dashboards that feel forensic.

- He “confirms” it visually, looking at a Tennessee license photo, maybe some Facebook selfies. We are famously good at convincing ourselves that two similar‑ish faces are “the same person” once a system has told us so. That’s automation bias in practice.

- The match becomes the spine of the charging documents. Procuring additional evidence is no longer an open‑ended investigation; it’s a confirmation exercise. Look for things that fit the story, ignore the rest.

Across jurisdictions, every subsequent actor treated the original machine output as if someone upstream had already done the hard epistemic work.

Tennessee jailers didn’t ask, “How strong is this evidence?” They saw “fugitive warrant from North Dakota.” North Dakota didn’t seem in a rush to ask, “What else do we have besides this match?” because, on paper, they already had an identified suspect.

I keep coming back to the 108 days North Dakota took to physically pick her up.

More than three months in which no one in the chain thought: “We should at least interview her, or check if she’s ever been to Fargo, before we drag her halfway across the country.”

That’s not an AI failure. That’s a workflow that’s been quietly rewritten around the assumption that if a machine has pointed at someone, the hard part is over.

If you want a comparison, look at another facial recognition wrongful arrest: Robert Williams in Detroit, misidentified off a grainy still. Different city, same laundering effect: a probability score turned into probable cause.

The systems didn’t just misrecognize. The institutions mis‑reasoned.

The black box you can’t cross‑examine

Once Lipps finally had a lawyer in the same state as the charges, the spell broke fast. A stack of bank statements did what months of supposed “investigation” had not.

But imagine trying to fight the match itself.

The facial recognition vendor’s system is proprietary. The weights, the training data, the exact pipeline: all secret. Police agencies often sign contracts that explicitly limit transparency, even in court. You can’t put the model on the stand and ask why it misidentified a 50‑year‑old Tennessee grandmother as a North Dakota fraudster.

So what happens instead is epistemic outsourcing.

Courts and cops borrow the authority of “AI” while relieving themselves of the responsibility to understand it. Defense attorneys are left arguing in the dark against a black box that, on paper, looks like a sober expert witness.

This is what The Perpetual Line‑Up, the report from Georgetown Law’s Center on Privacy & Technology, has been screaming about for years: an unregulated mesh of local departments and private vendors, where usage policies are patchy at best, and where NIST’s demographic warnings float politely in the background while cities sign more contracts.

And when the inevitable harm occurs (Lipps losing her home, her car, her dog), responsibility beads up and rolls off every surface:

- The vendor says the software is a tool; humans misused it.

- The police say they relied in good faith on a widely used technology.

- The jail says they just honored a warrant.

- The judge points to the evidence that was presented.

Functionally, the only person who experiences the system as a single, coherent actor is the defendant.

We talk about AI accountability in the context of drones and targeting systems. The Fargo case argues for a more mundane but equally sharp definition: accountability is the ability to point to someone in particular and say, “You don’t get to hide behind your tools.”

Evidence laundering is the real problem to solve

So what do you fix?

You can, and should, push vendors to reduce error rates, especially along the demographic lines NIST flagged. But if your workflow still treats any face recognition hit as a green light for an arrest warrant, then all you’ve really done is turn rare catastrophic harms into slightly rarer catastrophic harms.

The deeper fix is less glamorous and more uncomfortable:

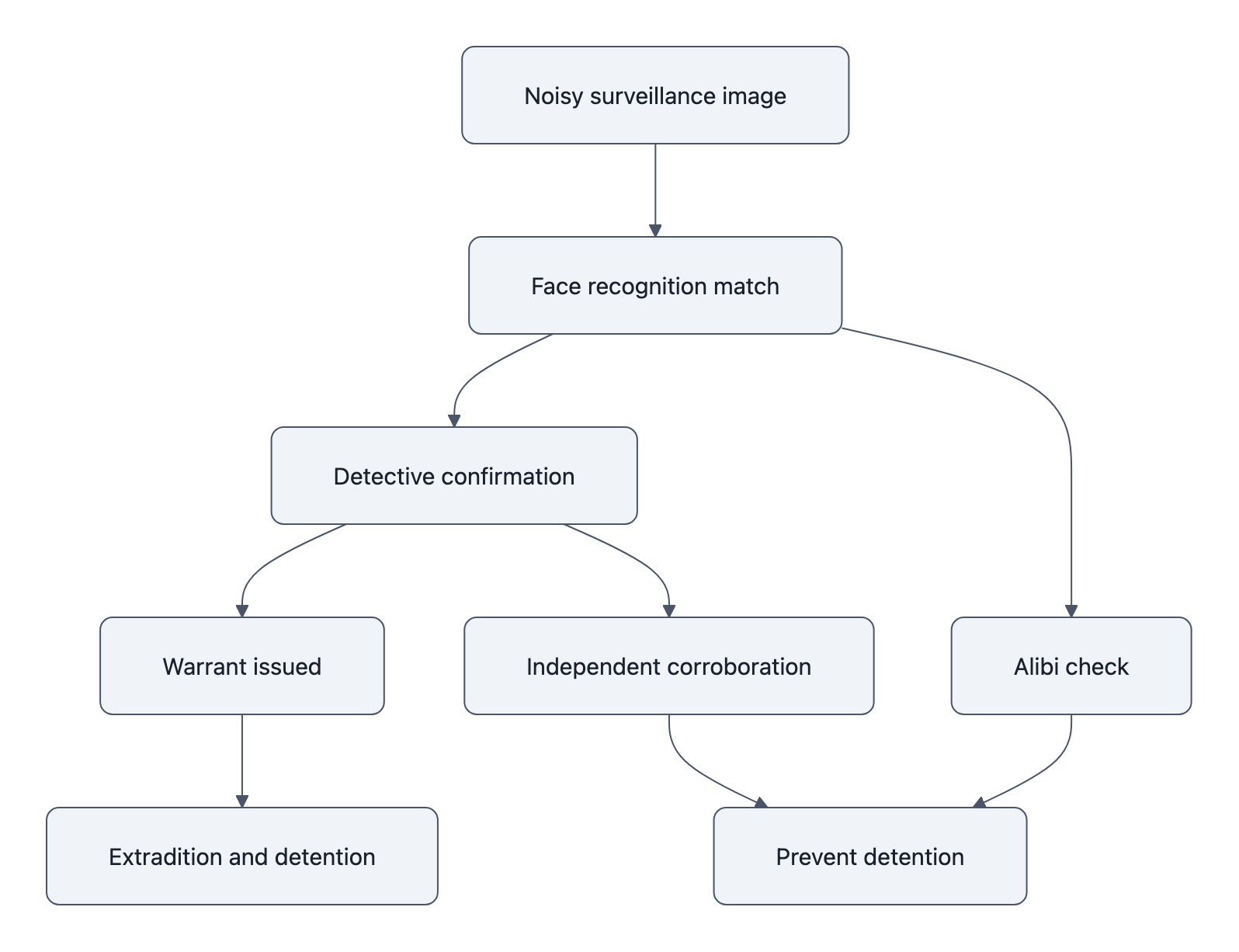

- Treat every algorithmic match as an investigative lead, never as stand‑alone evidence, and write that into policy with teeth.

- Make it procedurally impossible to file charges based solely on a proprietary model’s output. Require independent corroboration that can be explained to a jury without appealing to magic.

- Give defense teams visibility: if a facial recognition search played any role, that fact must be disclosed, along with logs, thresholds, and vendor documentation.

In other words, we stop laundering guesses into evidence.
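The three rules above can be sketched as a simple gate on a case file. This is a hypothetical illustration, not any department’s actual system: `CaseFile`, its fields, and `may_file_charges` are invented names, and real policy would need far more nuance than a boolean check.

```python
from dataclasses import dataclass, field

@dataclass
class CaseFile:
    """Hypothetical record of what an investigation has in hand."""
    face_match_used: bool
    # Evidence that does not depend on the face match (hypothetical free-text entries).
    corroborating_evidence: list = field(default_factory=list)
    # Search logs, thresholds, and vendor documentation ready for the defense.
    disclosure_package_ready: bool = False

def may_file_charges(case):
    """Return (allowed, reasons). A face match alone never clears the gate."""
    reasons = []
    if case.face_match_used and not case.corroborating_evidence:
        reasons.append("face match is a lead only; no independent corroboration")
    if case.face_match_used and not case.disclosure_package_ready:
        reasons.append("face recognition search not documented for disclosure")
    return (not reasons, reasons)

# A file built on the match alone is blocked.
match_only = CaseFile(face_match_used=True)
print(may_file_charges(match_only))

# A file with independent evidence and a disclosure package passes.
corroborated = CaseFile(
    face_match_used=True,
    corroborating_evidence=["hypothetical: records placing the suspect at the scene"],
    disclosure_package_ready=True,
)
print(may_file_charges(corroborated))
```

The point of the design is that the block happens before a warrant exists, so the burden of corroboration falls on the people requesting it rather than on the person arrested.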

Angela Lipps’ first words to reporters still echo: “I’ve never been to North Dakota. I don’t know anyone from North Dakota.” For nearly half a year, that simple, human sentence carried less weight than a line in a software log somewhere in a police file.

If we don’t change how institutions treat that line, who can generate it, who can use it, and how hard it is to challenge, we’ll keep improving the AI and still filling cells with people who were never there.

Key Takeaways

- The Fargo case shows facial recognition misidentification becomes devastating only after institutions treat an algorithmic guess as conclusive evidence.

- The technical causes of facial recognition error (noisy images, threshold settings, and the demographic bias documented in NIST FRVT) are well known and predictable.

- The real engine of harm is evidence laundering: automation bias and opaque workflows that convert a false match into a warrant and months in jail.

- Proprietary models create an accountability vacuum; you can’t cross‑examine a secret algorithm, so responsibility diffuses across vendors, police, and courts.

- Fixing this requires changing policies and incentives around how AI outputs are used, not just tuning the models that generate them.

Further Reading

- AI error jails innocent grandmother for months in Fargo fraud case, InForum. Local reporting that reconstructs Angela Lipps’ arrest, detention, and eventual exoneration from public records.

- North Dakota legal experts question reliability of AI facial recognition in court, InForum. Follow‑up analysis with legal experts and civil‑liberties advocates reacting to the case.

- Face Recognition Vendor Test (FRVT): Demographic Effects, NIST. Technical report documenting how false‑positive rates vary by race, gender, and algorithm.

- The Perpetual Line‑Up: Unregulated Police Face Recognition in America, Georgetown Law Center on Privacy & Technology. Overview of police use of facial recognition, opacity, and the risks of unregulated deployment.

- Wrongfully Accused by an Algorithm, The New York Times. Reporting on the Robert Williams case, another wrongful arrest driven by a face recognition false match.

In Fargo, the story ends with a volunteer driving Lipps back home, the long road south under winter sky. Somewhere in a police server, her name still exists as a past “match,” a ghost in the database. Until we redraw the line between lead and evidence, between guess and guilt, that ghost will keep coming back wearing different faces.