Changhan Kim didn’t ask his lawyers first.

He sat down, opened a browser, and typed a question into a chatbot: how do I get out of paying a $250 million earnout? Months later, a Delaware judge quoted that exchange back at him, described how he’d followed “most of ChatGPT’s recommendations,” and ordered the fired studio CEO reinstated with extended time to earn the bonus anyway.

TL;DR

- The danger of ChatGPT legal advice isn’t hallucinations; it’s that executives are generating pristine, timestamped evidence of bad-faith schemes.

- In Fortis v. Krafton, the court treated AI‑drafted strategy like any other corporate communication, using it to infer intent and pretext.

- Leaders don’t need AI bans; they need governance that assumes every prompt is tomorrow’s courtroom exhibit.

Why ChatGPT legal advice isn’t a legal strategy

If you’ve read the Delaware opinion, the weirdest thing isn’t that a CEO asked a bot for help.

It’s that the court describes him doing what the bot said. The AI suggested a “pressure and influence package,” a task force (“Project X”), and a roadmap to wrest control from the acquired studio. Internal documents show Krafton followed most of that script.

Legally, what matters is not that the advice came from an AI but that the exchange revealed motive.

The judge didn’t say “ChatGPT is bad, therefore you lose.” She said, in essence: your own records show you were afraid of a “pushover” contract, you sought a takeover plan, and your later “performance-related” reasons for firing the studio’s leaders were pretext to avoid the earnout. The chatbot episode is the Rosetta Stone that makes all the other emails and Slack messages legible.

That’s the first lesson: AI doesn’t shield you from judgment; it collapses the distance between your worst impulse and a written plan.

You don’t get to say, “We were just brainstorming.” The model turns the brainstorm into a memo.

If you’ve ever wondered whether ChatGPT legal advice is “safe,” this is the wrong question. The interesting question is: safe for whom, and in which direction? Because for courts, it’s wonderful. It’s searchable, quotable, and strangely candid.

The real legal risk: AI-created trail as evidence of intent

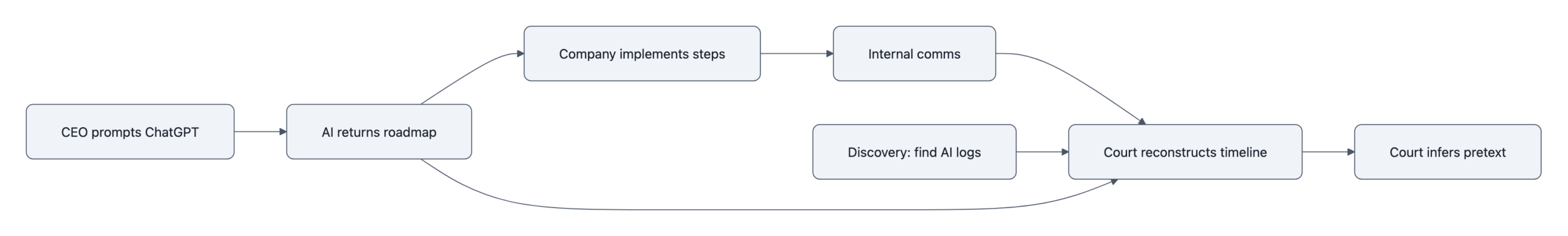

Imagine a future discovery request:

“Produce all emails, messages, and AI chatbot logs related to the earnout strategy.”

That’s not science fiction. In Fortis v. Krafton, plaintiffs already chased ChatGPT logs; the opinion recounts internal Slack and email where executives discussed what the AI said and how to act on it. Some logs were allegedly gone; the narrative survived anyway.

From a litigator’s perspective, AI usage is a dream:

- It timestamps the onset of the scheme. “On May 12, 10:42 p.m., you asked how to void the contract.”

- It captures the specific goal in the executive’s own words. No later spin needed.

- It often generates a bullet‑point roadmap that can be compared, step by step, with what the company did.

Courts routinely infer intent from much thinner scraps than this: half a sentence in an email, a scribbled note from a meeting. Now imagine facing a twelve‑paragraph “Response Strategy to a ‘No‑Deal’ Scenario” that reads like outside counsel’s memo but came from a stochastic parrot with no malpractice insurance.

The bad governance move isn’t using AI. It’s treating its output as if it were a private inner monologue instead of a discoverable document.

This is why the standard “AI hallucinations are dangerous” line misses the point here. The strategy didn’t fail because the model misread Delaware law. It failed because the CEO created contemporaneous evidence that he was trying to escape his obligations, and then lined up reality behind that script.

In other words: the legal risk is not that ChatGPT is wrong. It’s that you were.

How the court treated Krafton: AI as just another smoking gun

If you skim the opinion looking for a big doctrinal moment on AI, you’ll be disappointed.

Vice Chancellor Will doesn’t write a treatise on large language models. She does what Chancery judges do: reconstructs events from evidence, decides who’s credible, and asks whether contractual duties were honored.

On those questions, the chatbot is just one more piece of the puzzle:

- The CEO feared he’d agreed to a “pushover” contract and would be “dragged around.”

- He asked an AI chatbot how to avoid paying the earnout; the chatbot said it would be “difficult to cancel.”

- At ChatGPT’s suggestion, he formed “Project X,” an internal task force with an explicit takeover mandate.

- Krafton then fired key leaders and seized operational control under pretextual performance claims.

The court’s bottom line: Krafton breached the Equity Purchase Agreement, wrongfully terminated the “Key Employees,” and improperly grabbed operational control. Remedy: restore Ted Gill as CEO, and extend the earnout testing period by 258 days so the studio gets back the time lost to the wrongful coup.

None of this turns on the magical properties of AI. The judge doesn’t care that the advice came from ChatGPT rather than a golf buddy.

The opinion’s subtext, if you read between the lines, is simpler and more brutal: if you plan a bad‑faith strategy in writing, Delaware will happily use your own words against you, regardless of whether a human or a model wrote the first draft.

This is why the case matters far beyond game publishing. Every industry now has executives pinging bots for “quiet” ideas: how to structure layoffs, how to “rationalize” earnouts, how to weaken a cofounder’s voting power. They’re leaving AI‑formatted breadcrumbs for future plaintiffs’ lawyers.

What executives should do instead (and what this reveals about leadership)

The naive reaction is to ban AI for anything legal‑adjacent. No prompts, no transcripts, no problem.

But that’s like responding to email discovery by outlawing keyboards. The medium isn’t the issue. The issue is governance and character.

Executives asking “Is using ChatGPT for legal strategy allowed?” are already a step off. The better question is: Am I comfortable explaining this prompt to a judge, ten pages into an opinion about my credibility?

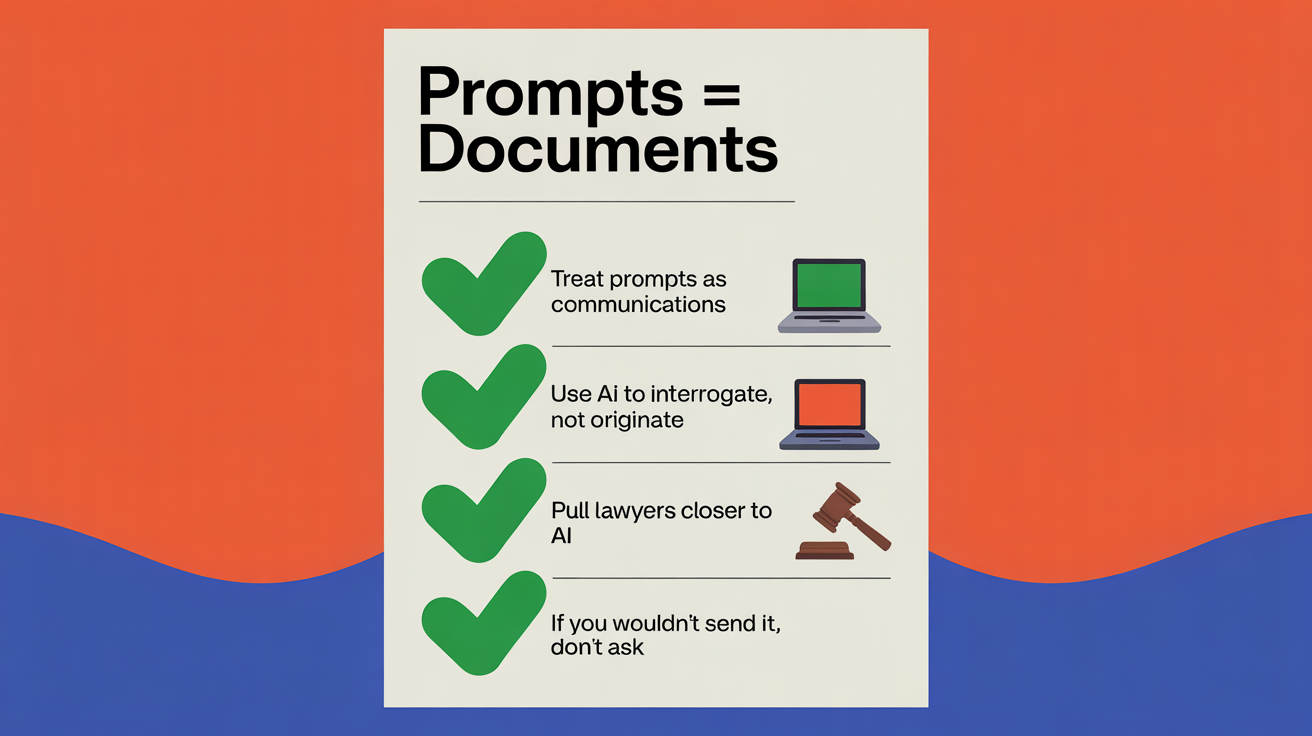

From that perspective, some practical shifts follow:

- Treat prompts as communications, not thoughts. If you wouldn’t send it to a junior associate, don’t send it to a model.

- Use AI to interrogate, not originate. “What arguments will the other side make about this contract?” is healthy paranoia. “How do we get out of paying them?” is an invitation to write your own bad‑faith script.

- Pull lawyers closer to AI, not away from it. In-house teams should own the guidelines for using ChatGPT responsibly: what can be drafted by AI, what must be human‑reviewed, and where the red lines are.

Underneath all this is a less comfortable truth: AI doesn’t corrupt neutral leaders; it amplifies whatever is already there.

A CEO inclined toward fair dealing will use models to clarify contracts, simulate worst‑case scenarios, and prepare better for board discussions. A CEO inclined toward gamesmanship will ask how to wriggle out of promises. The technology’s real “alignment problem” shows up in the judge’s findings, not the model’s weights.

The Krafton opinion reads, at times, like a character study written in corporate Slack. The AI cameo is memorable, but it’s not the villain. It’s the highlighter pen tracing the shape of a decision the court was always going to see.

Key Takeaways

- ChatGPT legal advice didn’t sink Krafton because it was wrong; it sank them because it documented a scheme to dodge obligations.

- Courts are treating AI‑drafted strategy as ordinary corporate evidence, using it to infer motive, pretext, and bad faith.

- The real corporate legal risk of AI is the discoverable trail of prompts and outputs, not just hallucinations or technical errors.

- Bans miss the point: leadership quality and governance norms determine whether AI becomes an asset or a liability.

Further Reading

- Fortis Advisors, LLC v. Krafton, Inc., Opinion (Del. Ch. Mar. 16, 2026): the full 92‑page Delaware Court of Chancery ruling detailing findings, remedies, and the CEO’s use of an AI chatbot.

- “CEO Asks ChatGPT How to Void $250 Million Contract, Ignores His Lawyers, Loses Terribly in Court,” 404 Media: narrative summary of the case and the court’s scathing view of the AI‑driven strategy.

- “Subnautica 2 Publisher Allegedly Asked ChatGPT To Find A Way Out Of Paying Cofounders,” Kotaku: reporting on pre‑trial allegations, discovery disputes over ChatGPT logs, and “Project X.”

- “Judge orders Krafton to restore fired Unknown Worlds CEO and extends earnout testing period,” PC Gamer: focus on the practical impact of reinstatement and the extended earnout window.

- Docket, Fortis Advisors v. Krafton: public filings, including the verified complaint and pre‑trial briefs that shaped the case.

Somewhere right now, another executive is opening a fresh chat window, fingers hovering over the keyboard, about to ask a model how to solve a “pesky” contractual problem. The real question isn’t whether the AI will hallucinate. It’s whether that first prompt is the opening line of their own future opinion.