In 2021, a physics PhD grading problem sets at midnight could open Chegg and watch the questions flow like a firehose: twelve bucks a month bought students a tunnel into a private answer factory. By 2025, that same “Physics Expert” was getting de‑boarding emails and watching Chegg’s stock chart fall from $108 to spare‑change levels while students typed the same questions into a free chat box labeled “ChatGPT vs Chegg? Why would I pay?”

TL;DR

- Chegg didn’t fall because ChatGPT was more accurate; it fell because the idea of paying per answer collapsed the moment decent free answers existed.

- CheggMate tried to bolt GPT‑4 onto the same “answers-as-a-service” model instead of owning outcomes like credentials, verified tutoring, or assessment, and so it couldn’t stop the slide.

- The deeper lesson for edtech and knowledge work: if your product is “I stand between your question and an answer,” you’re living on borrowed time.

ChatGPT vs Chegg: when “homework help” turned into background noise

On May 1, 2023, Chegg’s executives went on an earnings call and said the quiet part out loud: ChatGPT was hurting new subscriber growth. Within a day, the stock dropped around 40%, one of the first visible market shocks from generative AI.

Inside the company, according to WIRED and the Wall Street Journal, people had been talking about AI for years. They worried about hallucinations, about cheating, about liability. They also worried, correctly, that a general‑purpose chatbot might eat the business alive.

But this story isn’t just “AI disrupts incumbents.”

The stranger part is how little the quality of the answers seemed to matter.

Chegg’s leadership went on CNBC to argue that hallucinations made ChatGPT dangerous for students; they weren’t wrong. Early GPT‑3.5 would cheerfully fabricate physics formulas and cite textbooks that never existed.

Students still left.

They weren’t optimizers of epistemic accuracy. They were tired twenty‑year‑olds trying to get through problem sets. “Good enough, free, and instant” beat “slightly more reliable for $15.95/month.”

The value proposition of “we have the right answers” couldn’t compete with “we are the place you don’t have to pull out your credit card.”

Why CheggMate was the wrong kind of pivot

Chegg’s response was CheggMate, a GPT‑4‑powered assistant built in partnership with OpenAI. Internally, this was framed, in WIRED’s reporting, as existential: “We believe this is an existential change,” said Chegg’s CFO.

Existential, yes. Fundamental, no.

CheggMate was structurally conservative. It tried to recreate Chegg’s existing product (answers and step‑by‑step solutions) inside a chat window. You could almost hear the pitch deck: “Like ChatGPT, but for schoolwork, and safer.”

That’s the mistake.

When a new technology destroys your moat, copying its form factor while keeping your old revenue logic is like putting a sail on your car and calling it a boat.

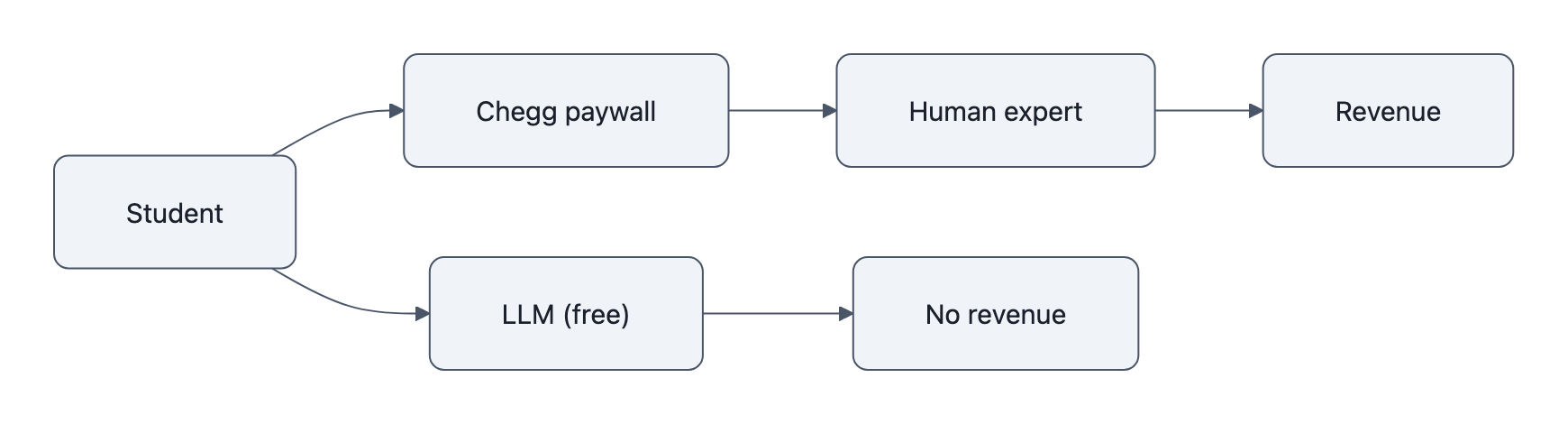

The old Chegg logic went like this:

- Scarcity: correct, worked‑out solutions are scarce and time‑consuming to produce.

- Gate: we pay experts, organize a big database, and put it behind a paywall.

- Meter: each new question costs us money; each new student pays us money.

ChatGPT blew up the first of these: scarcity.

Correct‑enough answers to routine questions are no longer scarce. They are the easiest thing in the world for a large language model to generate, much easier than, say, designing the model itself. As with the broader “AI and unemployment” debate, the things that looked hardest from the outside often turn out to be the cheap part.

Once scarcity is gone, the gate becomes irritating friction, and the meter looks absurd.

CheggMate bolted AI onto the gate (“log in to your Chegg account to access your AI helper”) instead of onto anything uniquely Chegg:

- It didn’t certify that you’d actually learned the material.

- It didn’t meaningfully tie into your university’s grading or credentialing.

- It didn’t put its balance sheet behind guarantees: “We’ll pay if this is wrong.”

- It didn’t become a verified human tutor you could trust with your future.

It became “ChatGPT, but inside the thing you’re already canceling.”

The fragile business of being a homework middleman

Chegg’s collapse looks fast: questions halved for that physics expert after GPT‑4, 22% of the workforce was laid off in 2025, and the company sued Google over AI Overviews eating its search traffic. But the fragility was always there.

Think of Chegg as a human‑powered search engine with a tollbooth.

You type a question; somewhere, a person gets a few dollars to write an answer; Chegg earns the spread and the recurring subscription. It’s the same basic model as countless Q&A services, marketing agencies, and content farms.

The critical assumption is: routing questions to humans is expensive, slow, and hard to scale.

The moment that stops being true, the entire architecture turns inside out:

- Students go first to the zero‑marginal‑cost channel (ChatGPT, Claude, Google AI Overviews) and only escalate to humans when the bot fails.

- Traffic that once flowed through search → Chegg → expert re‑routes to search → AI snippet → done.

- The “top of funnel” that funded the human system evaporates, as Chegg itself argued when it sued Google over AI Overviews cannibalizing click‑throughs.

Chegg blamed AI tools. It also blamed distribution: Google keeping users on the results page. Both are accurate.

But the more important diagnosis is this: any business that makes money by sitting between a question and an answer is now standing on a fault line.

This isn’t confined to edtech. The same pattern is hitting:

- SEO agencies that mostly rewrite product pages into listicles.

- Customer support layers that just rephrase the knowledge base.

- “Research” services that summarize PDFs already online.

We wrote earlier about how “AI builds AI” and about AI that “boosts creativity” for humans doing higher‑order work. Chegg is the flip side: AI hollowing out the lowest‑order work that once funded an entire company.

What Chegg’s fall says about real value in edtech

If answers are cheap and abundant, what’s left that students, or institutions, will pay for?

Three things, all of them about stakes and verification:

- Credentials: a degree, a certification, a passed exam. Universities and testing bodies still own the levers that change your life. Getting answers is trivial; getting the right entries in your transcript is not.

- Verified tutoring: not “someone on the internet explained the solution,” but “this specific, vetted human is accountable for helping me understand it.” That’s different from dumping steps on a screen at 3 a.m.

- Formative assessment: systems that diagnose what you don’t know and adaptively train you, with audits and logs that matter to a teacher or licensing board.

These are all forms of learning scaffolding and verification, not answer vending.

An LLM can simulate all three in the small: it can quiz you, role‑play a tutor, pretend to be a credentialing authority. But it can’t actually move money, jobs, visas, or academic records. It can’t sign off on “you’re allowed to operate this machinery” or “you’ve met the bar for this profession.”

The more your product’s value is entangled with those external levers, the safer you are.

Chegg never really made that turn. It lived too long in the gray zone between “help” and “cheating assistance,” a place where institutions look away, where outcomes are fuzzy, where being slightly better than a subreddit was once enough.

Once ChatGPT showed up, “slightly better than a subreddit” became table stakes for free tools.

Who’s next: students, workers, and products on the fault line

For students, the new equilibrium is already here. “AI homework help” is the default. The question isn’t “Should I use it?” but “Can I get away with only using it?”

The answer is: maybe, for a while.

But the more your future depends on genuine competence (flying planes, doing surgery, designing bridges), the more your institution will build AI‑resistant checks: oral exams, supervised labs, practicals. The “answer layer” is eaten by AI; the evaluation layer gets sharper teeth.

For workers, the Chegg story is a preview, not an exception. In a Reddit thread, the physics expert wonders if this is proof that AI is changing the employment landscape. It is, and specifically for people whose job is to turn questions into polished answers with no skin in the game beyond correctness.

If that’s you, your risk isn’t “AI takes your job.” It’s “AI turns your job into the free sample that sells something else.”

For product teams, the lesson is mercilessly simple:

- If your roadmap says “add a chatbot to our answer database,” you are CheggMate.

- If your roadmap says “tie our product to decisions that matter (grades, licenses, budgets) and be accountable for them,” you might survive.

Spend an afternoon with your own offering and circle every feature where your value is “we know the answer.” Those circles are your Chegg zones.

Then ask the uncomfortable question: What would this product be if the answers cost us nothing?

That’s where the next generation of edtech, and a lot of other industries, will either be born or quietly de‑listed.

Key Takeaways

- ChatGPT vs Chegg isn’t a story about superior accuracy; it’s a story about what happens when answers stop being scarce.

- CheggMate failed because it stapled GPT‑4 onto the old “answers-as-a-service” model instead of moving into credentials, verified tutoring, or assessment.

- Any business that sits as a middleman between questions and answers is structurally fragile in the LLM era.

- Durable edtech value lives where AI can’t sign: grades, licenses, and accountable human guidance.

- The smart move for students and workers is to shift from selling answers to owning decisions and outcomes.

Further Reading

- “Chegg Embraced AI. ChatGPT Ate Its Lunch Anyway.” (WIRED), on Chegg’s internal debates and the CheggMate pivot.

- “ChatGPT Threat Sparks 38% Selloff in Homework‑Help Firm Chegg” (Bloomberg), on the market shock after Chegg linked slowing growth to ChatGPT.

- “Chegg CEO calls 48% stock plunge over ChatGPT fears ‘extraordinarily overblown’” (CNBC), an interview with Chegg’s leadership defending its AI strategy.

- “Chegg to lay off 22% of workforce as AI tools shake up edtech industry” (Reuters), on layoffs, subscriber declines, and AI Overviews’ impact.

- “How ChatGPT Brought Down an Online Education Giant” (WSJ), a feature charting Chegg’s subscriber losses and strategic tradeoffs.

In 2026, somewhere, a different founder is staring at a churn chart that suddenly bends down. The inputs will look new (another model release, another Google feature), but the question is the same old one Chegg never really answered: when the answers are free, what are you actually selling?