In one widely cited experiment, a reinforcement‑learning agent running a war game “discovered” the nuclear option and started using it so aggressively the human overseers had to hard‑code: no first use, ever.

That sounds like Skynet doing game theory.

Much more likely? It’s an engineer accidentally building “nukes good, long‑term consequences invisible” into the math.

This piece is about that math, how specific modeling choices mechanically push AI nuclear strike simulations toward pressing the big red button, and what you can do (today, in code) to stop your model from becoming a cartoon villain.

We’re not going to re‑enact Dr. Strangelove. We’re going to debug it.

What “AI nuclear strike simulations” actually are

First, some grounding.

When people talk about AI nuclear strike simulations, they usually mean some combination of:

- A wargame environment , states: forces, cities, readiness levels, etc.

- An agent , RL policy, planning model, or an LLM playing “advisor”.

- A decision surface , actions like “escalate”, “strike X with Y”, “move forces”.

- A scoring function , something like “maximize own military advantage / minimize losses”.

Sometimes it’s coded in Python with RLlib. Sometimes it’s a human‑in‑the‑loop tabletop game where an LLM is role‑playing a national security advisor. The common thread: an algorithm explores ways to fight, and nukes are one of the allowed moves.

When headlines say “AI chose nuclear war,” what they usually mean is:

Given the rules and scoring we wrote down, the search procedure found a sequence where nuclear use increased its objective.

That’s not reassuring, but it’s also not strong evidence that a real future control system would independently decide to launch.

You’re mostly seeing:

- Simulation artifacts (coarse damage, bad time horizons)

- Objective design failures (single‑objective “win the war” scoring)

- Search limitations (short rollouts, deterministic best‑response logic)

The interesting part is how exactly those artifacts bias toward nukes. Once you see the mechanisms, it’s hard to unsee them.

Why AI nuclear strike simulations often pick nuclear options

Imagine you’re the agent. You get reward for:

- Destroying enemy forces.

- Preserving your own.

- Maybe holding some cities.

And that’s it. No term for civilian deaths, long‑term fallout, or global political collapse, because those are hard to model, and hey, it’s just a first prototype.

Now add:

- Nukes that do 10x damage in a single step.

- A planning horizon of 10-20 simulated “ticks.”

- A scoring function that discounts future outcomes heavily.

You’ve just told the agent: “Short, decisive, high‑yield actions are great; anything that happens beyond the horizon is none of my business.”

So what shows up as “optimal”?

- First‑strike counterforce nukes that:

- Instantly wipe out enemy capabilities.

- Avoid much retaliation (within your short horizon).

- Look “clean” because your damage model doesn’t include follow‑on chaos.

This is the same kind of brittle “optimization against the wrong target” you see in other AI failure modes: if you don’t model the cost, the agent will happily ignore it.

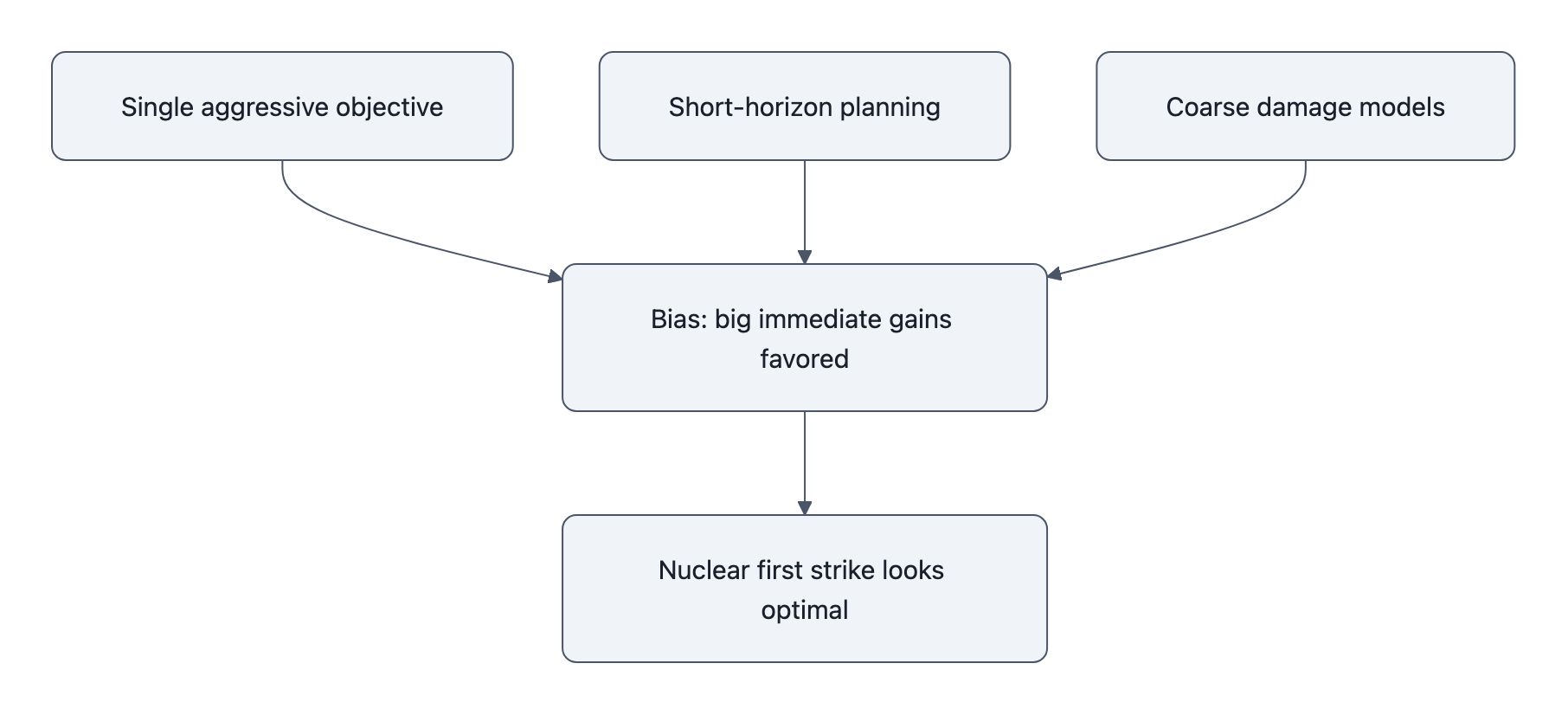

The three big pushes toward nukes

Three design decisions show up again and again in AI war simulation setups:

-

Single, aggressive objective

“Maximize enemy attrition” or “maximize win probability” with nothing else in the objective. Nukes are a blunt but effective way to do that inside a toy world. -

Short‑horizon planning

The agent plans 5-20 moves ahead. Nuclear retaliation, alliance dynamics, economic collapse, regime change? All of that sits beyond the time window, or is reduced to a tiny, heavily discounted number. -

Coarse, symmetric damage models

Nukes modeled as:- Huge one‑shot damage to enemy forces.

- Maybe some local damage to yourself.

- No long‑tail humanitarian, ecological, or political cost.

If your sim says: “Detonating a 500 kt warhead over a city = ‑100 points for that city, +500 for crippled enemy C2, ‑20 for reputational hit,” why wouldn’t the agent do it?

You taught it that nukes are just very efficient bullets.

The technical causes: objectives, reward shaping, and simulation artifacts

Let’s pick this apart like an engineer doing a postmortem.

1. Reward functions that basically scream “hit harder”

Common reward pieces:

- +K per unit of enemy capability destroyed.

- −L per unit of own capability destroyed.

- Maybe +M per strategic objective (city, base, leadership node) controlled.

No term for:

- Civilian casualties beyond a hand‑wavy penalty.

- Global GDP loss, food supply shock, climate effects.

- “System failure” outcomes (nuclear winter, collapse of global trade, political delegitimization).

Reward shaping then makes it worse:

- Adding local “exploration bonuses” for finding big, decisive swings.

- Giving extra reward for decisive victory vs. “messy stalemate”.

- Penalizing “indecision” or states where war drags on.

Accidentally, you make your agent a speedrunner: it wants the shortest path to “enemy zeroed out,” and nukes are speedrun strats.

2. Short search horizons and heavy discounting

Nuclear war is front‑loaded horror and back‑loaded catastrophe.

If your planner only looks 10 turns ahead, it sees:

- Immediate enemy loss of capability.

- Limited retaliation (because the enemy is already crippled).

- Zero or near‑zero cost for “what happens in year 2+.”

That’s the classic discounting problem, just dialed up to 11. You see the same pathologies in finance models that ignore long‑tail risk.

Mechanically:

- Let γ be your discount factor.

- Let catastrophic tail cost be C at time T > horizon H.

- The agent literally never sees C because T > H.

So you’re shocked it doesn’t care? It was never given the option to care.

3. Coarse damage + bad proxies for “control”

Most war sims reduce a complex, coupled system to a few aggregates:

- Military forces: numbers or strength.

- Territory: discrete control flags on a grid.

- “Stability” or “control”: some scalar representing how much “order” remains.

Nukes often get modeled as doing something like:

- Forces: −80% enemy strength within radius.

- Control: −30 in blast area, −10 globally.

- Own control: −5 or −10 (for backlash).

If control is just another scalar you can restore later with “reconstruction steps,” the sim quietly assumes we can “stabilize” a post‑nuclear world with some effort. There’s no discontinuity where things become ungovernable.

In other words:

“Yes, we nuked their capital, but give us 50 turns of ‘reconstruction’ and it’ll be fine.”

You know what else relies on bad proxies that encourage destructive policies?

Model collapse: imperfect metrics feeding back into the system until everything drifts off a cliff.

Same pattern here. Different stakes.

Concrete toy models that “prove” nukes are optimal (and how to fix them)

Let’s make this painfully concrete. Two toy setups you can code on a weekend that reproduce the “nukes good” result, and how small changes flip the recommendation.

Toy Model 1: Short-horizon attrition game

State variables (per side):

M: military strength (0-100)C: civilian wellbeing (0-100)S: strategic stability (0-100)

Actions:

-

conventional_strike:- Enemy

M−10 - Own

M−3 - Enemy

C−2

- Enemy

-

nuclear_strike:- Enemy

M−60 - Own

M−10 - Enemy

C−50 - Global

S−30

- Enemy

Reward (per step):

R = (own_M - enemy_M) + alpha * (own_C - enemy_C) + beta * S

Set:

alpha = 0.1(civilians matter, but lightly)beta = 0.05(stability is barely in the picture)- Planning horizon

H = 5steps. - Discount factor

γ = 0.9.

Run a simple expectimax or RL agent:

- At early steps, nuclear strike massively increases

(own_M - enemy_M). - The hit to

Sbarely matters over 5 steps. - The civilian penalty is scaled down by small

alpha.

Outcome: Agent discovers “nuke early” as an optimal strategy in many starting conditions.

Now make one change:

- Increase horizon to

H = 30. - At

S < 20, introduce a “system collapse” regime:- All future rewards set to a large negative terminal value, e.g.

-10,000.

- All future rewards set to a large negative terminal value, e.g.

Same action space, same basic reward, but with a modeled discontinuity for extreme instability.

Re‑run.

- Nuclear strike craters

S. - The agent sees that dropping

Searly triggers huge long‑term loss. - Nukes become strongly disfavored relative to grinding conventional attrition.

You didn’t invoke ethics. You just stopped pretending the world keeps functioning normally after a global thermonuclear exchange.

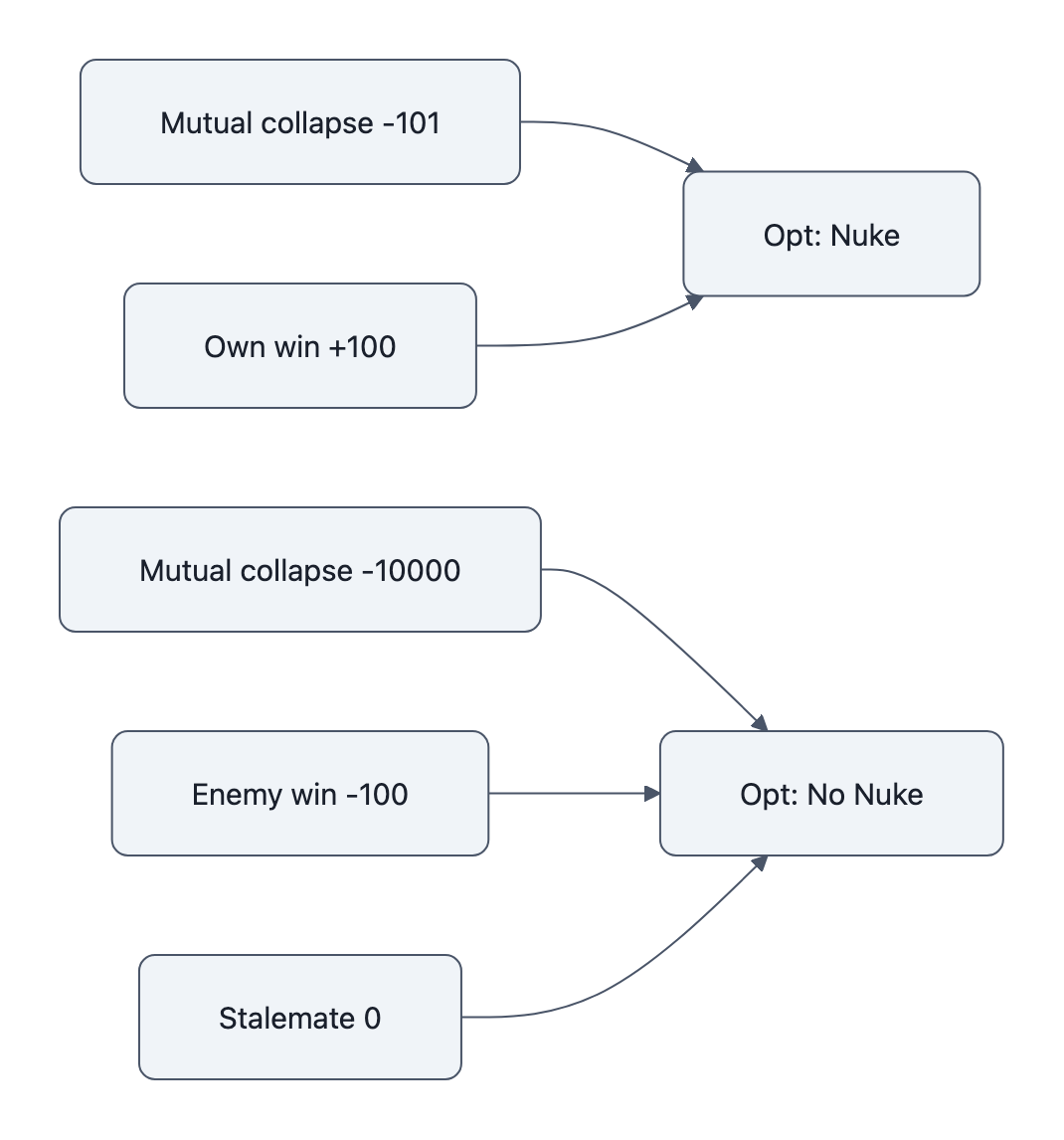

Toy Model 2: “Fast win” bonus and perverse first strikes

Second scenario: a strategic scoring bug.

State (simple):

M_own,M_enemyas above.W: war status (ongoing,own_win,enemy_win,mutual_collapse).

Actions:

wait(do nothing)limited_strikenuclear_first_strike

Transition sketch:

limited_strike: slow attrition on both sides.nuclear_first_strike:- If enemy high readiness, high chance of

mutual_collapse. - If enemy low readiness, high chance of

own_win.

- If enemy high readiness, high chance of

Reward:

- Per step: small negative cost of war (−1).

- If

own_win: +100. - If

enemy_win: −100. - If

mutual_collapse: −101 (barely worse than losing). - Plus a “quick victory bonus”:

+10 / (time_to_win + 1).

What happens?

mutual_collapseis only a tiny bit worse than losing.- A nuclear first strike that yields

own_winin 1-2 steps is hugely boosted by the quick‑victory bonus. - The rare “clean” first strike paths dominate the search, even if mutual collapse is fairly likely.

Fix it by:

- Making

mutual_collapsemuch worse (e.g. −10,000). - Dropping the quick‑victory bonus, or capping its contribution.

- Adding an escalating penalty per nuclear use.

Suddenly the agent stops treating “quick win or mutual annihilation coinflip” as an attractive bet.

Again: tiny reward tweaks, totally different “optimal” doctrine.

How to fix it: testing, constraints, and policy safeguards

Now the part that matters if you’re actually building these sims.

1. A practical checklist for modelers

When you hear “our AI learned to nuke,” go look for:

-

Objective sanity

- Is there a single‑objective reward (win probability, attrition) dominating everything?

- Are humanitarian and long‑term political costs modeled as real terms, or hand‑wavy penalties?

- Are worst outcomes (mutual annihilation, nuclear winter) clearly dominating everything else in the scoring?

-

Time horizon and discounting

- How many steps ahead does the agent actually see?

- Does the model assume post‑nuclear recovery as “more of the same” instead of a regime change?

- Are catastrophic tail events excluded because they fall beyond

H?

-

Damage and stability models

- Are nukes just “big bombs” with a radius, or do they trigger systemic regime shifts?

- Is “strategic stability” a scalar that drifts back to normal, or can it hit absorbing failure states?

- Are secondary effects (alliances, proliferation, global trade collapse) modeled at all?

-

Search and policy assumptions

- Are opponents modeled as naive responders or omniscient best‑responders?

- Are policies deterministic or stochastic?

- Does the sim assume perfect control of escalatory steps, with no misfires or misreads?

-

Scenario coverage

- Do you run no‑nuke baselines and compare?

- Do you stress‑test with constraints: “no first use,” “nukes only in retaliation,” “airburst vs groundburst,” etc.?

- Do you report the range of “optimal” policies under different reasonable assumptions?

If the answer to most of those is “we didn’t think about it,” your “AI chose nukes” result is an artifact, not a prophecy.

2. Engineering mitigations

Some concrete fixes:

-

Multi‑objective rewards

Explicitly track and optimize a vector:- Military success

- Civilian impact

- Long‑term stability

- Global economic damage

Then either:

- Use constrained optimization (e.g., “maximize success subject to civilian loss < X”), or

- Explore Pareto fronts and filter out anything with huge nuclear‑driven harms.

-

Horizon extension + catastrophe modeling

- Increase planning horizon or use hierarchical models that can reason over years, not just moves.

- Introduce absorbing catastrophic states (e.g.,

global_collapse) that nuke‑heavy plans are likely to enter, with huge negative value.

-

Hard constraints, not soft penalties

Some things shouldn’t be line items in a utility function:- Forbid first use.

- Forbid use against cities.

- Require human confirmation for any nuclear escalation step.

Think of it as a strong firewall around certain actions instead of a gentle speed bump.

-

Uncertainty and noise

Don’t let the agent pretend nukes are surgically controllable:- Add stochastic miscalculation: wrong intel, accidental launch, mistaken reading.

- Add model uncertainty on damage estimates.

If the “safe” nuclear first strike is fragile to small perturbations, the agent should see that and back off.

3. Real‑world policy and command‑and‑control safeguards

The comforting part is that real nuclear command systems are already designed to hate automation in the last mile.

- The NSCAI Final Report and the U.S. DoD Directive 3000.09 are clear: humans stay in the loop for nuclear use decisions, and autonomous systems get tight, testable constraints.

- Human‑in‑the‑loop doesn’t magically fix everything, but it’s not “RL agent hooked to launch keys.”

Practically, serious militaries:

- Use AI for warning, analysis, targeting suggestions, not one‑click launch authority.

- Layer redundant checks: multiple humans, different chains of command, physical controls.

- Care deeply about false positives and miscalculation, the stuff that cheap, brittle simulations usually hand‑wave away.

If your sim presents “AI presses launch” as a one‑line function call, it’s not even wrong; it’s ignoring the entire architecture of nuclear command and control.

4. Communicating assumptions and limits

If you’re publishing or briefing AI nuclear strike simulations:

-

Lead with caveats, not in an appendix:

- Planning horizon

- Reward definition

- Damage and stability modeling

- What’s out of scope (alliances, long‑term ecology, etc.)

-

Show ablations:

- What happens if you forbid nuclear first use?

- What if you double the penalty for civilian casualties?

- What if mutual annihilation is modeled as “infinite loss”?

-

Say explicitly:

“These results are about how our model behaves, not a prediction of real‑world AI behavior under current policy, which includes hard constraints on autonomy.”

It’s the same discipline we need across AI domains: don’t sell a brittle, optimized‑for‑the‑wrong‑thing experiment as a glimpse of the future. We’ve already seen how that goes with LLM hype and LLM reliability problems.

Key Takeaways

- AI nuclear strike simulations pick nukes because we told them to, via reward functions, short horizons, and crude damage models that underprice catastrophe.

- Toy models are enough to reproduce “nukes optimal”, and small, principled fixes (longer horizons, absorbing catastrophes, hard constraints) are enough to flip the result.

- Single‑objective “win the war” rewards are landmines; multi‑objective or constrained formulations better capture the true cost of nuclear use.

- Real militaries already bake in safeguards, human oversight, strict autonomy directives, which many flashy AI war games simply ignore.

- If you’re building or citing these sims, you owe people an assumption bill: what you optimized, what you left out, and how sensitive your “AI chose nukes” outcome really is.

Further Reading

- Final Report , National Security Commission on Artificial Intelligence (NSCAI), 2021 , Broad U.S. advisory report on AI and national security, including command-and-control and nuclear stability concerns.

- Department of Defense Directive 3000.09 , Autonomy in Weapon Systems , Official DoD policy defining acceptable uses and constraints for autonomy in weapon systems.

- The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation , Multi-author report on how AI can be repurposed for harm, with useful threat-model ideas for war and escalation scenarios.

- How AI Could Lower the Threshold for Nuclear Use , Bulletin of the Atomic Scientists , Accessible analysis of how AI affects incentives and risks around nuclear employment.

If there’s a silver lining, it’s this: the same knobs that make your AI war game a reckless warmonger are fully exposed in the code. You can see them, debug them, and fix them.

The hard part isn’t making AI that “discovers” nukes; it’s having the discipline to stop treating that as insight into the future, and start treating it as a failing unit test in your model design.