On paper, your office guest Wi‑Fi is safe. The SSID is separate, client isolation is turned on, and the checkbox in the controller GUI says “AP isolation: enabled.”

The AirSnitch Wi‑Fi vulnerability is the moment you find out that checkbox was basically a “feel better” button.

The UC Riverside team didn’t “break WPA3.” They showed something worse: client isolation was never a real security boundary, because the Wi‑Fi stack never actually tied identity, encryption, and routing together in the first place.

The argument of this piece is simple: if you’re still treating “client isolation” as a wall, you’ve already lost. You need to re‑architect where sensitive traffic flows now, and treat AirSnitch as a design‑level indictment, not a patch‑level glitch.

AirSnitch Wi‑Fi vulnerability: the real ‘so what’

Imagine you’re in a hotel on “Guest‑WiFi.” You can’t ping other clients; isolation is on. So you fire up your laptop, do some work over HTTPS, maybe hit your corporate VPN.

In the AirSnitch model, the attacker is also a guest, connected legitimately, and still manages to:

- Steer your packets through their machine (full Wi‑Fi MitM attack)

- Strip cookies, inject DNS replies, poison caches

- Do it all without ever “turning off” isolation on the AP

Lead author Xin’an Zhou told Ars Technica that AirSnitch “breaks worldwide Wi‑Fi encryption” in practice, even though, as co‑author Mathy Vanhoef points out, the crypto itself isn’t broken; it’s bypassed.

That’s the “so what”: every protection that quietly depended on “clients can’t talk to each other” is suddenly theater.

If your threat model assumed “other guests in the hotel can’t attack me because isolation,” throw it out and start over.

Why AirSnitch works: cross‑layer design failures, not broken crypto

The depressing part is how mundane the core flaw is.

Wi‑Fi never cryptographically tied three things together:

- Your MAC address (L2 identity)

- Your encryption keys (GTK/PTK)

- Your IP address (L3 identity)

Vendors then implemented client isolation at only one of those layers and called it a day. AirSnitch is what happens when someone methodically walks the gaps between them.

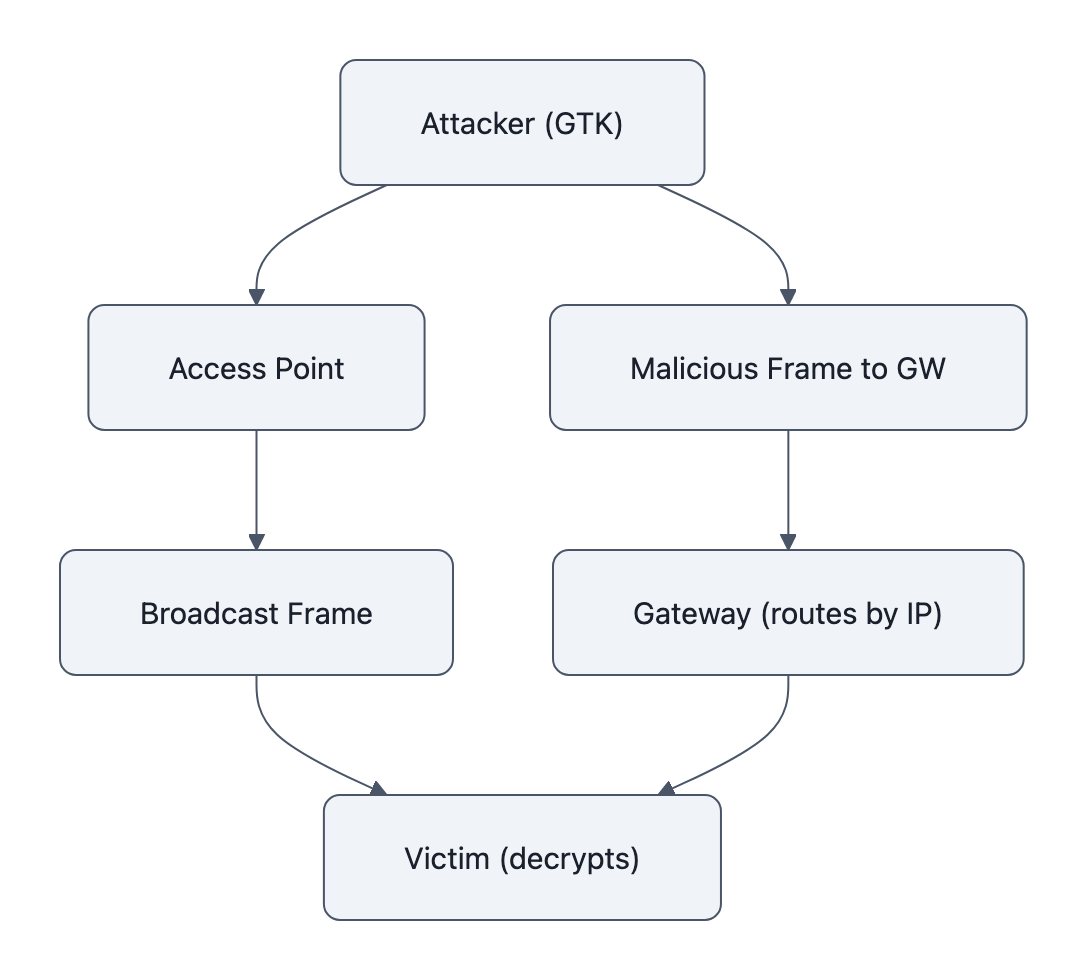

Concrete example: GTK abuse.

Most networks use a Group Temporal Key for broadcast/multicast. Every client connected to that SSID shares the same GTK. APs enforce “no client‑to‑client unicast,” but they must allow broadcast frames.

AirSnitch abuses that: it crafts a packet that’s encrypted as a broadcast (GTK) but whose payload is actually targeted at one victim. The AP happily forwards it because it’s broadcast, after all. The victim happily decrypts it for the same reason.

You just got client‑to‑client injection through a channel every implementation is forced to allow.
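A toy model makes the gap easier to see. The sketch below is not the paper’s tooling and touches no real radio; `Frame`, `ap_isolation_allows`, and `victim_processes` are hypothetical names, the MAC addresses are made up, and the forwarding rule is a deliberately simplified assumption that follows the description above.

```python
from dataclasses import dataclass

BROADCAST_MAC = "ff:ff:ff:ff:ff:ff"


@dataclass(frozen=True)
class Frame:
    src_mac: str       # link-layer sender
    dst_mac: str       # link-layer receiver (may be the broadcast address)
    key: str           # "PTK" for pairwise traffic, "GTK" for group traffic
    inner_dst_ip: str  # IP address the payload is actually aimed at


def ap_isolation_allows(frame: Frame, client_macs: set[str]) -> bool:
    """Assumed isolation rule: block client-to-client unicast, allow group frames."""
    if frame.dst_mac == BROADCAST_MAC:
        return True
    both_clients = frame.src_mac in client_macs and frame.dst_mac in client_macs
    return not both_clients


def victim_processes(frame: Frame, victim_ip: str) -> bool:
    """Every client holds the GTK, so a group frame decrypts fine;
    the payload is processed when the inner IP matches the victim."""
    return frame.key == "GTK" and frame.inner_dst_ip == victim_ip


# Made-up addresses: first MAC is the attacker, second is the victim.
clients = {"aa:aa:aa:aa:aa:01", "aa:aa:aa:aa:aa:02"}
direct = Frame("aa:aa:aa:aa:aa:01", "aa:aa:aa:aa:aa:02", "PTK", "10.0.0.42")
wrapped = Frame("aa:aa:aa:aa:aa:01", BROADCAST_MAC, "GTK", "10.0.0.42")

print(ap_isolation_allows(direct, clients))    # honest unicast: isolation applies
print(ap_isolation_allows(wrapped, clients))   # group-keyed frame: isolation never looks
print(victim_processes(wrapped, "10.0.0.42"))  # victim still acts on the payload
```

The isolation check only ever inspects the link-layer destination, so the same payload is rejected in one wrapper and accepted in the other.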

Now layer in Gateway Bouncing:

- The attacker sends a frame to the gateway’s MAC

- But the inner IP header says: this is for victim 10.0.0.42

- Many gateways trust the IP header more than the MAC destination

- They route it onwards to the victim

The AP thinks: “I’m just sending client → gateway. That’s allowed.”

The gateway thinks: “I’m just routing to 10.0.0.42. That’s my job.”

Nobody is enforcing “this originated from a forbidden client talking to another client.”
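The two “that’s my job” decisions above can be sketched as a pair of routing rules. This is a minimal illustration under assumptions: the `10.0.0.0/24` guest subnet, the attacker address `10.0.0.66`, and both function names are hypothetical, and a real gateway would express the hardened rule as a firewall policy rather than Python.

```python
import ipaddress

GUEST_NET = ipaddress.ip_network("10.0.0.0/24")  # assumed guest subnet


def naive_gateway_forwards(src_ip: str, dst_ip: str) -> bool:
    """Routes on the destination IP alone and never asks where the packet came from."""
    ipaddress.ip_address(dst_ip)  # only validates that the address parses
    return True


def hardened_gateway_forwards(src_ip: str, dst_ip: str) -> bool:
    """Refuses to hairpin traffic that both starts and ends inside the guest subnet."""
    src = ipaddress.ip_address(src_ip)
    dst = ipaddress.ip_address(dst_ip)
    return not (src in GUEST_NET and dst in GUEST_NET)


# Attacker 10.0.0.66 addresses the frame to the gateway's MAC,
# but the inner IP destination is the victim at 10.0.0.42.
print(naive_gateway_forwards("10.0.0.66", "10.0.0.42"))
print(hardened_gateway_forwards("10.0.0.66", "10.0.0.42"))
print(hardened_gateway_forwards("10.0.0.66", "93.184.216.34"))
```

The hardened rule still lets guests reach the internet; it only drops the guest-to-guest bounce that the AP believed it had already forbidden.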

Add MAC spoofing / port stealing on top and you start rewiring who gets which encrypted stream. Authenticate with the victim’s MAC, poison the mapping so downlink packets meant for them arrive at you instead. With enough of these primitives, you build a full bidirectional tunnel through features the standard encouraged and implementations normalized.
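Port stealing works because of how a plain learning switch keeps its forwarding table. The sketch below is a simplified model, assuming an unauthenticated “last sighting wins” table; `LearningSwitch` and the port numbers are hypothetical, and real switches add aging timers and optional port security.

```python
class LearningSwitch:
    """Toy MAC learning table: the most recent source sighting decides the port."""

    def __init__(self) -> None:
        self.mac_to_port: dict[str, int] = {}

    def learn(self, src_mac: str, port: int) -> None:
        # No authentication: whoever spoke last with this MAC owns the entry.
        self.mac_to_port[src_mac.lower()] = port

    def egress_port(self, dst_mac: str):
        # Returns None for unknown destinations (a real switch would flood).
        return self.mac_to_port.get(dst_mac.lower())


switch = LearningSwitch()
victim_mac = "aa:aa:aa:aa:aa:02"  # made-up address

switch.learn(victim_mac, port=1)        # victim talks from behind port 1
print(switch.egress_port(victim_mac))   # downlink goes to the victim

switch.learn(victim_mac, port=7)        # attacker spoofs the same MAC behind port 7
print(switch.egress_port(victim_mac))   # downlink now follows the attacker
```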

This isn’t one magic buffer overflow. It’s years of “seems fine” assumptions stacking into an architectural bug.

If that pattern sounds familiar, it should. We already saw the same mentality in network security failures driven by default passwords: security features deployed like decorations, not like walls.

How dangerous is AirSnitch in the wild? A realistic threat model

Let’s kill two bad takes immediately:

- “This only matters if someone already hacked your Wi‑Fi.”

- “This destroys all Wi‑Fi; abandon hope.”

Both are wrong.

Requirement #1: the attacker has to be able to join or co‑locate with your network.

This usually means:

- They connect to the same SSID (hotel, airport, campus, office guest Wi‑Fi)

- Or they join a different SSID on the same physical AP / distribution network

- Or they operate a rogue AP close enough to lure your devices

So no, this is not someone on the other side of the planet popping your home network without credentials.

But once they are in radio and infrastructure range, the bar drops dramatically:

- The NDSS team tested five consumer routers, two open‑source firmwares (DD‑WRT, OpenWrt), and real WPA2‑Enterprise university deployments.

- “Every tested router and network was vulnerable to at least one attack primitive.”

Enterprise is actually more interesting here. UC Riverside’s own press release flatly says:

“Enterprise systems usually protect their networks using the most advanced encryption. So that means enterprises are seemingly relying on a fake sense of security.”

The fake sense of security is exactly “we turned on WPA3 and client isolation, we’re good.”

The realistic threat pattern looks like this:

- Hotels / airports / conferences: Perfect hunting grounds. Attacker sits on guest Wi‑Fi, uses AirSnitch primitives to pivot into other guests’ sessions. Captive portals and “you’re isolated” marketing blurbs don’t save you.

- Universities / large offices: Many SSIDs (employee, guest, IoT) share the same controller and switching fabric. Isolation is set per‑SSID; AirSnitch works across SSIDs through shared GTK, MAC spoofing, and distribution‑switch tricks.

- Home and SOHO: Risk is lower, but not zero. If you use a single SSID for everything, once someone gets on, they have much richer lateral movement than your AP status page suggests.

If that last scenario sounds unlikely (“who’s on my home Wi‑Fi besides me?”), re‑read the Louvre piece about default passwords. The boring ways attackers get in are exactly the ones we systematically under‑secure.

The bottom line: this is not an internet‑worm situation, but anywhere strangers share RF space with your sensitive devices, “client isolation” just exited your threat model.

What to do now: immediate operational fixes and practical mitigations

You don’t fix an architectural illusion with a firmware checkbox. You fix it with layout.

The short version: move trust boundaries up to where you actually control them.

1. Stop treating one SSID + isolation as a security zone

If your design doc says “Guests on SSID ‘Guest’ can’t touch internal stuff because isolation,” rewrite it.

Do this instead:

- Put guests on their own VLAN, firewalled at L3 from everything you care about.

- Same for IoT, BYOD, and contractor devices: a VLAN per trust class is the minimum bar.

- Assume AirSnitch gives every client on that SSID a cheap MitM against every other client on that SSID and anything reachable past sloppy routing.
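One way to keep that design honest is to write the inter‑zone policy down as data and test it. The sketch below is an illustration only: the zone names, the subnets, and the `ALLOWED` pairs are assumptions, and the check models what an L3 firewall between VLANs would decide, not traffic that stays inside a single VLAN.

```python
import ipaddress

# Hypothetical trust zones, one VLAN/subnet each.
ZONES = {
    "guest": ipaddress.ip_network("10.10.0.0/24"),
    "iot": ipaddress.ip_network("10.20.0.0/24"),
    "corp": ipaddress.ip_network("10.30.0.0/24"),
}

# Default deny: only the (source zone, destination zone) pairs listed here pass.
ALLOWED = {
    ("guest", "internet"),
    ("iot", "internet"),
    ("corp", "internet"),
    ("corp", "iot"),
}


def zone_of(ip: str) -> str:
    """Map an address to its zone; anything outside the known subnets is 'internet'."""
    addr = ipaddress.ip_address(ip)
    for name, network in ZONES.items():
        if addr in network:
            return name
    return "internet"


def permitted(src_ip: str, dst_ip: str) -> bool:
    """True only when the zone pair is explicitly allowed."""
    return (zone_of(src_ip), zone_of(dst_ip)) in ALLOWED


print(permitted("10.10.0.5", "10.30.0.9"))  # guest -> corp
print(permitted("10.10.0.5", "1.1.1.1"))    # guest -> internet
print(permitted("10.10.0.5", "10.10.0.6"))  # guest -> guest via the gateway
```

Because guest‑to‑guest is not in the allow list, a packet bounced off the gateway is dropped even if the AP’s isolation check was fooled.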

This is boring legacy networking, and that’s why it actually works.

2. Use per‑client keys and controls where you can

Personal mode with a shared PSK is fundamentally at odds with strong isolation. You hand every device:

- The password

- The implicit right to share group keys

- A path to impersonate others at L2/L3

Where possible:

- Use WPA2/WPA3‑Enterprise with per‑user auth, not shared secrets.

- Prefer infrastructures that support per‑client group keys or equivalent, essentially making the “broadcast” space less shared.

- Harden your RADIUS and onboarding flows; AirSnitch demonstrated paths from MitM into brute‑forcing weak RADIUS secrets.

Is this more annoying than slapping the same password on the wall? Absolutely.

So was moving away from “admin/admin” on everything, and we still had to do it.

3. Monitor for weird link‑layer behavior

You can’t fix the standard today, but you can see when someone’s abusing it.

Look for:

- Duplicate MACs associating to multiple APs/BSSIDs

- Rapid MAC re‑associations from a single radio fingerprint

- Odd gateway traffic patterns (e.g., many client‑to‑gateway frames whose IP dests are other clients)

This is exactly the sort of low‑level weirdness that also shows up in application and tooling security issues like invisible Unicode attacks: nothing “crashes,” but the semantics have been quietly poisoned.
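Two of the checks listed above can be sketched against data a controller or packet capture would already give you. The input shapes here are assumptions, not any vendor’s log format: `associations` is a list of `(client_mac, bssid)` pairs and `flows` is a list of `(dst_mac, src_ip, dst_ip)` tuples. Legitimate roaming can briefly look like a duplicate MAC, so treat hits as leads rather than verdicts.

```python
import ipaddress
from collections import defaultdict


def duplicate_macs(associations):
    """Return client MACs that appear associated to more than one BSSID."""
    seen = defaultdict(set)
    for client_mac, bssid in associations:
        seen[client_mac.lower()].add(bssid.lower())
    return {mac for mac, bssids in seen.items() if len(bssids) > 1}


def bounced_flows(flows, gateway_mac, client_net):
    """Return (src_ip, dst_ip) pairs sent to the gateway's MAC whose
    source and destination IPs are both inside the client subnet."""
    network = ipaddress.ip_network(client_net)
    hits = []
    for dst_mac, src_ip, dst_ip in flows:
        to_gateway = dst_mac.lower() == gateway_mac.lower()
        src_inside = ipaddress.ip_address(src_ip) in network
        dst_inside = ipaddress.ip_address(dst_ip) in network
        if to_gateway and src_inside and dst_inside:
            hits.append((src_ip, dst_ip))
    return hits


# Made-up sample data.
associations = [
    ("aa:aa:aa:aa:aa:02", "02:00:00:00:00:10"),
    ("AA:AA:AA:AA:AA:02", "02:00:00:00:00:20"),  # same MAC on a second BSSID
    ("aa:aa:aa:aa:aa:03", "02:00:00:00:00:10"),
]
flows = [
    ("02:00:00:00:00:fe", "10.0.0.66", "10.0.0.42"),      # client -> gateway -> client
    ("02:00:00:00:00:fe", "10.0.0.66", "93.184.216.34"),  # ordinary internet traffic
]

print(duplicate_macs(associations))
print(bounced_flows(flows, "02:00:00:00:00:FE", "10.0.0.0/24"))
```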

Also:

- Update firmware. Some vendors will add partial mitigations (stricter L2+L3 checks, better spoofing detection).

- If you operate a campus or hotel network, test with the AirSnitch toolkit on GitHub, against networks you own or have permission to test.

4. Reduce shared RF where possible

One practical trick: physically and logically separate SSIDs by risk.

- Put sensitive internal Wi‑Fi on different bands/APs than big public guest SSIDs.

- Don’t backhaul everything through the same cheap switch stack if you can avoid it.

- In truly sensitive environments, use wired or MACsec‑protected links instead of “just another SSID on the same controller.”

It’s not glamorous. It’s how you survive design mistakes you don’t control.

Beyond patches: vendors and standards have to stop trusting ‘client isolation’

Here’s the uncomfortable part: no amount of “best practices” will fully fix this as long as the standard itself thinks shared keys + half‑enforced isolation is acceptable.

The AirSnitch paper’s mitigations read like a to‑do list for IEEE and vendors:

- Bind identities across layers. MAC, key, and IP should form a single cryptographic tuple; if any one doesn’t match, drop the frame.

- Per‑client group keys by design. Group traffic should not be a catch‑all backdoor that any client can abuse to sneak in unicast payloads.

- Link‑layer enforcement. Higher‑layer gateways shouldn’t be able to “re‑enable” forbidden flows simply by trusting IP headers.

- Spoofing detection. Multiple associations using the same MAC, or suspicious MAC flapping, should be treated as hostile, not “quirky.”
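To make “bind identities across layers” concrete, here is a minimal sketch of what such a check could look like. It is not part of 802.11 or of the paper’s proposals as written: `bind_tag`, `frame_is_consistent`, the session table layout, and the keys are all hypothetical, and the only assumption is that the AP recorded a session key and an assigned IP for each MAC at association time.

```python
import hashlib
import hmac


def bind_tag(session_key: bytes, mac: str, ip: str) -> bytes:
    """HMAC over the claimed MAC and IP, keyed with the client's session key."""
    message = f"{mac.lower()}|{ip}".encode()
    return hmac.new(session_key, message, hashlib.sha256).digest()


def frame_is_consistent(sessions: dict, mac: str, ip: str, tag: bytes) -> bool:
    """Accept only if MAC, key, and IP all match what was bound at association."""
    entry = sessions.get(mac.lower())
    if entry is None:
        return False
    session_key, bound_ip = entry
    if ip != bound_ip:
        return False
    return hmac.compare_digest(bind_tag(session_key, mac, ip), tag)


# Hypothetical session table: MAC -> (session key, IP assigned at association).
sessions = {
    "aa:aa:aa:aa:aa:01": (b"attacker-session-key", "10.0.0.66"),
    "aa:aa:aa:aa:aa:02": (b"victim-session-key", "10.0.0.42"),
}

# The attacker claims the victim's MAC and IP but can only sign with its own key.
forged = bind_tag(b"attacker-session-key", "aa:aa:aa:aa:aa:02", "10.0.0.42")
print(frame_is_consistent(sessions, "aa:aa:aa:aa:aa:02", "10.0.0.42", forged))
```

A frame that changes any one element of the tuple (MAC, key, or IP) fails the check, which is the property the first bullet asks for.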

The real takeaway for operators is harsh: if a feature isn’t enforced at the link where the attack happens, it is not a real security boundary.

Client isolation lives in marketing copy and controller GUIs, not in the primitives themselves. Until that changes, treat it like a speed bump, not a wall.

And be loud about it. Vendors respond to RFPs and angry customers quicker than they respond to NDSS papers.

Key Takeaways

- The AirSnitch Wi‑Fi vulnerability doesn’t crack WPA2/3 crypto; it shows how weak cross‑layer “client isolation” always was.

- Any network where untrusted users share SSIDs or infrastructure (hotels, campuses, offices) should assume Wi‑Fi MitM is cheap and practical.

- Immediate fix: stop trusting isolation checkboxes; use VLAN segmentation, per‑client keys where possible, and monitoring for spoofing/MAC weirdness.

- Long‑term fix: vendors and standards must cryptographically bind MAC, key, and IP, and stop treating shared GTK + partial isolation as acceptable.

- If your security design doc uses “client isolation” as a boundary, you don’t need a firmware patch; you need a new design.

Further Reading

- AirSnitch: Demystifying and Breaking Client Isolation in Wi‑Fi Networks (NDSS 2026): official paper summary with attack primitives, experiments, and mitigation ideas.

- AirSnitch full paper (PDF), Xin’an Zhou et al. (NDSS 2026): full technical details, tested device list, and cross‑layer analysis.

- zhouxinan/airsnitch (GitHub): proof‑of‑concept toolkit for validating AirSnitch attacks on authorized networks.

- New AirSnitch attack breaks Wi‑Fi encryption in homes, offices and enterprises (Ars Technica): accessible deep dive with quotes from Zhou and Vanhoef on impact and limits.

- UCR press release, Computer scientists reveal Wi‑Fi security flaws: institutional overview emphasizing enterprise risks and recommended hardening.

You don’t need to panic about the AirSnitch Wi‑Fi vulnerability; you need to stop pretending “client isolation” was ever more than a polite suggestion and start drawing your real boundaries where the math, and the packets, actually live.