On a random Tuesday, thousands of people went from “lol AI” to “fastest uninstall of my life.” The Cancel ChatGPT movement didn’t start with a bad feature rollout. It started when users realized the model in their homework app had just signed up to help the Pentagon.

That “Cancel ChatGPT” spike is easy to dismiss as internet outrage. It shouldn’t be. Account deletions and Quit ChatGPT posts are a rare thing in tech: a clear, high‑volume market signal that can be turned into influence, if people treat it as bargaining, not just venting.

The argument here is simple: Cancel ChatGPT is a one‑time chance to hard‑code no‑surveillance / no‑weapons norms into AI contracts and buying decisions. If you just uninstall and move on, you’ve wasted it.

Cancel ChatGPT: What sparked the boycott

Let’s ground this in the boring part: procurement.

In January, defense contractor Leidos put out a press release bragging about a partnership with OpenAI to “deploy AI to transform federal operations” across agencies: national security, health, infrastructure, the usual alphabet soup of “federal operations.” OpenAI models, including ChatGPT, become the engine under that hood.

Bloomberg Law framed it plainly: this is about rolling OpenAI systems out across federal agencies, not a one‑off pilot in some obscure office.

Now place that next to what happened with Anthropic, OpenAI’s closest rival.

Anthropic publicly drew two hard red lines: no Claude for autonomous weapons and no Claude for mass surveillance of U.S. citizens. The Trump administration’s response was not “respectfully disagree.” It was to designate Anthropic a supply‑chain risk and effectively ban Claude inside the U.S. government.

OpenAI, and Sam Altman personally, stepped into the gap.

He posted that OpenAI’s models would not be used for mass surveillance. A U.S. official immediately undercut that, saying the tools would be used for “all lawful means.” Under the Patriot Act, “lawful” can absolutely include mass data collection on citizens.

That’s the spark.

Within hours:

- Reddit’s front page: “Cancel ChatGPT movement goes big.”

- Top comments: “Fastest uninstall of my life.” “Deleted my account last night.” “Cancelled my membership and went Claude. For my use case, there’s really no difference.”

- Links out to Resist and Unsubscribe, an economic‑boycott playbook that says the quiet part out loud: “The president responds to one thing: the market… the shortest path to change is an economic strike targeted at the companies driving the markets.”

So no, this isn’t just “AI fans mad online.” It’s AI users adopting an old Wall Street tactic: coordinated exit to move a stock price, except here the stock is trust.

And trust is the only moat any foundation model vendor really has.

Why this user exodus could change AI market dynamics

Let’s be clear: you uninstalling ChatGPT does not make the Leidos-OpenAI contract vanish. That revenue is locked in.

So why does Cancel ChatGPT matter at all?

Because consumer trust is upstream of every lucrative enterprise deal. CIOs do not want to be dragged on X because they standardized on “the torture‑and‑surveillance AI.” Procurement officers don’t want to defend a tool their own employees are publicly boycotting.

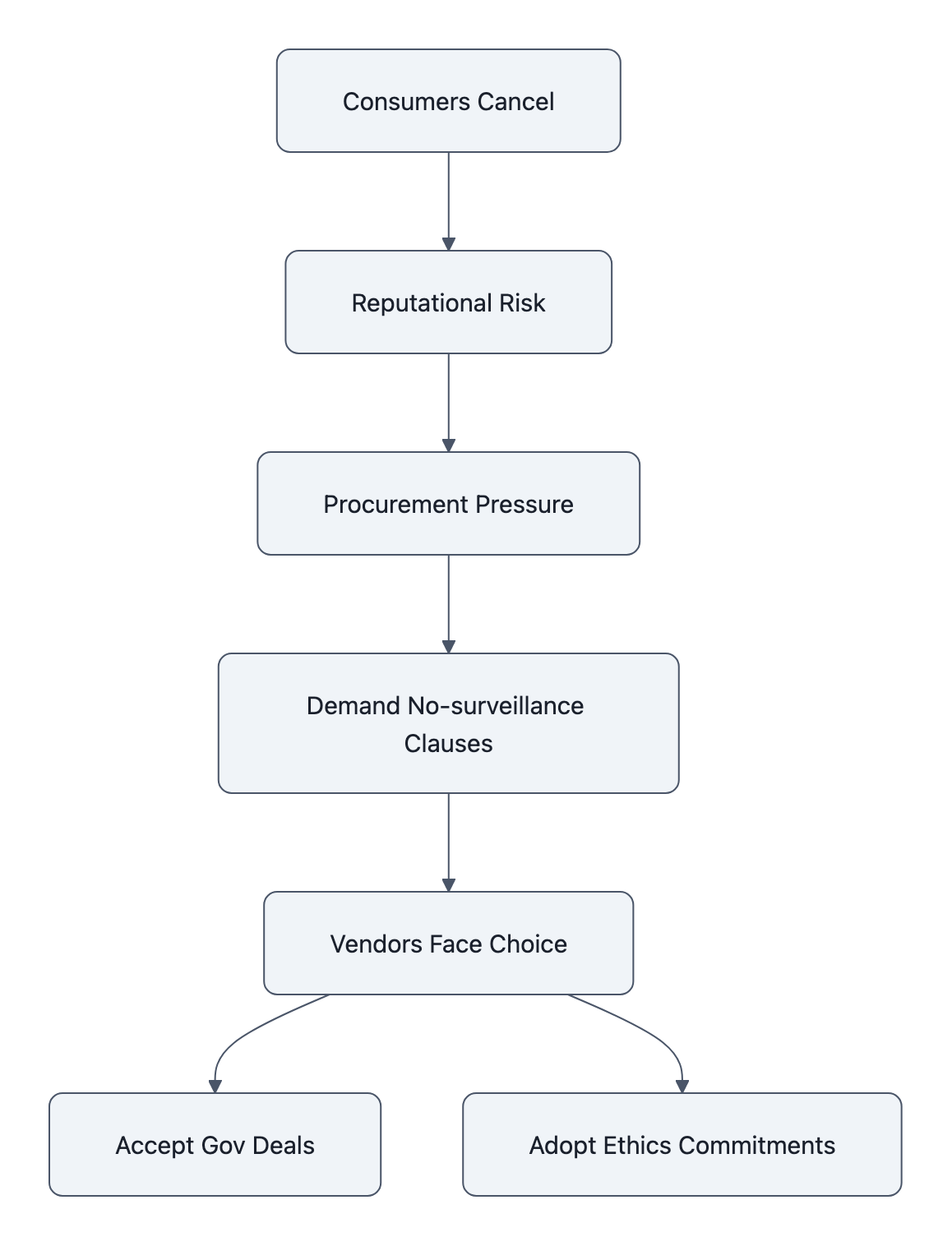

Here’s the dynamic Cancel ChatGPT exposes:

- AI vendors are desperate for government contracts. Training frontier models burns money; public‑sector deals are recurring, huge, and sticky.

- Governments are desperate for AI that will do the dirty work. Surveillance triage, predictive policing, battlefield targeting, algorithmic warrant factories: “all lawful means” covers a lot.

- The only real constraint is reputational pain. There’s no mature AI regulation here; the limiting reagent is how much heat vendors and agencies are willing to take.

Cancel ChatGPT turns that heat up.

Internally, the conversation in any big buyer now looks like:

“If we pick OpenAI and this Pentagon thing explodes again, are we next in the crosshairs? Is there a safer vendor with clearer red lines we can point to?”

Anthropic just handed them a ready‑made alternative: “We literally refused the same work on principle.” That’s a procurement officer’s dream slide.

And that leads to the shift that actually matters: ethical clauses as default line items.

Today, vendor terms say things like:

- “We comply with all applicable laws.”

- “We reserve the right to improve the service using your data.”

Tomorrow, after a few more public Cancel ChatGPT cycles, big contracts start to read:

- “Models will not be used for bulk communications surveillance of domestic populations.”

- “Models will not be used to select or control lethal targeting.”

- “Breach of this clause is grounds for immediate termination and liquidated damages.”

Once those clauses appear in one big contract (a Fortune 100, a major university system, a city government), they propagate. Lawyers copy‑paste. Boards start asking why their company doesn’t have the “no mass surveillance” line in its AI RFPs.

That’s the pressure point. Not your $20/month, but the chilling effect on future weaponized‑AI deals once the risk department can point to a real Cancel ChatGPT movement and say: “We’re not touching that without guardrails.”

Practical steps: exporting data, switching safely, and signaling demand

If you’re in Quit ChatGPT mode, you can either flail or you can do something that moves the needle. Let’s talk concrete.

1. Get your data, then nuke the account

“Fastest uninstall” makes a good Reddit comment. It’s also how you lose evidence.

Before you cancel ChatGPT:

- Export your data from account settings: prompts, files, and any linked workspaces.

- Save a local copy.

This isn’t prepper paranoia; it’s about audit. If AI ends up at the center of a legal fight (employment disputes, IP claims, regulatory complaints), having your interaction history is real leverage.

Only then:

- Delete the account.

- Remove mobile and desktop apps.

- Revoke any API keys or plug‑ins in other tools.

Do the housekeeping like you assume this will matter later, because it might.
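If the export is meant to serve as an audit trail, it helps to record what it contained at the moment you saved it. The following is a minimal sketch, assuming the export arrives as a ZIP archive; the file names and the functions `build_manifest` and `write_manifest` are hypothetical and not part of any vendor tooling.

```python
import hashlib
import json
import zipfile
from datetime import datetime, timezone


def build_manifest(archive_path: str) -> dict:
    """Return SHA-256 checksums for the archive and every file inside it."""
    # Hash the archive itself so later copies can be compared byte for byte.
    with open(archive_path, "rb") as fh:
        archive_digest = hashlib.sha256(fh.read()).hexdigest()

    files = {}
    with zipfile.ZipFile(archive_path) as zf:
        for info in zf.infolist():
            if info.is_dir():
                continue
            files[info.filename] = {
                "bytes": info.file_size,
                "sha256": hashlib.sha256(zf.read(info)).hexdigest(),
            }

    return {
        "archive": archive_path,
        "archive_sha256": archive_digest,
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "files": files,
    }


def write_manifest(archive_path: str, manifest_path: str) -> dict:
    """Build the manifest and save it as JSON next to (or apart from) the archive."""
    manifest = build_manifest(archive_path)
    with open(manifest_path, "w", encoding="utf-8") as out:
        json.dump(manifest, out, indent=2, sort_keys=True)
    return manifest
```

Calling `write_manifest("chatgpt-export.zip", "chatgpt-export.manifest.json")` (both paths are placeholders) produces a small JSON file. Keeping that file somewhere separate from the archive makes it easier to show later that the copy you hold has not changed since you downloaded it.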

2. Don’t just switch models. Switch terms

Gemini, Claude, open‑source setups: there is no perfectly clean option. But they are not all equivalent.

If Cancel ChatGPT is about AI surveillance concerns and vendor ethics, you’re optimizing for alignment of incentives, not halo‑polishing.

At minimum, look for:

- Public red lines. Anthropic’s refusal to support autonomous weapons and mass surveillance is not marketing copy; it just cost them federal business. That’s a real signal.

- Data‑use control. Can you tell the provider “do not train on my data” and get it in writing?

- Export and deletion. Is there a clear, tested way to leave later?

For many personal workflows, Claude is a drop‑in replacement. The Reddit anecdote is right: for a lot of use cases, output quality is comparable. The difference isn’t IQ points. It’s what the company is willing to walk away from.

And if you’re more technical, putting an open‑source model behind your own API, even if it’s weaker, lets you decouple capability from corporate politics. You still rely on GPUs and frameworks, but you’ve narrowed the attack surface.
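One way to read “decouple capability from corporate politics” in code is to make your own tools depend on a small interface rather than on any one vendor’s SDK. The sketch below is illustrative only: `ChatBackend`, `EchoBackend`, and `summarize` are hypothetical names, and the stub stands in for whatever local model server you actually run.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into text."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Stand-in backend for testing; replace with a client for your own model server."""

    prefix: str = "[local stub] "

    def complete(self, prompt: str) -> str:
        return self.prefix + prompt


def summarize(text: str, backend: ChatBackend) -> str:
    """Application code: it knows only the interface, never the vendor."""
    return backend.complete(f"Summarize in one sentence: {text}")
```

With this shape, switching providers means writing one new class with a `complete` method. Nothing that calls `summarize` has to change, which keeps the exit cost low if a vendor’s terms move in a direction you don’t like.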

3. Signal demand where it actually counts

One of the smartest things in the Resist and Unsubscribe playbook is the focus on concentration:

“The shortest path to change without hurting consumers is an economic strike targeted at the companies driving the markets…”

Random boycotts splinter. Coordinated, time‑boxed strikes move KPIs.

For Cancel ChatGPT, that means:

- Tell your employer. If your company is piloting ChatGPT, document your concerns (surveillance, ethics, vendor risk) and ask what the alternatives are. CC procurement or security if you can.

- Push for contract clauses. If you’re anywhere near buying decisions, start inserting “no mass surveillance / no weapons” language. Even if Legal crosses it out, it forces a conversation.

- Contact actual regulators, not just X. Data‑protection authorities, local reps, city councils buying AI tools for policing or welfare: these are exactly the people who should be hearing “we don’t want our city to be the next mass‑surveillance case study powered by ChatGPT.”

And for technically curious readers: fork the politics. Write template clauses, model RFP language, open letters procurement teams can literally copy. That’s how “soft norms” become boilerplate.

What to watch next: regulation, vendor pledges, and corporate responses

Cancel ChatGPT is not the endpoint; it’s a test run.

Here’s what will tell you whether this moment translated into real constraint or just trended for a week.

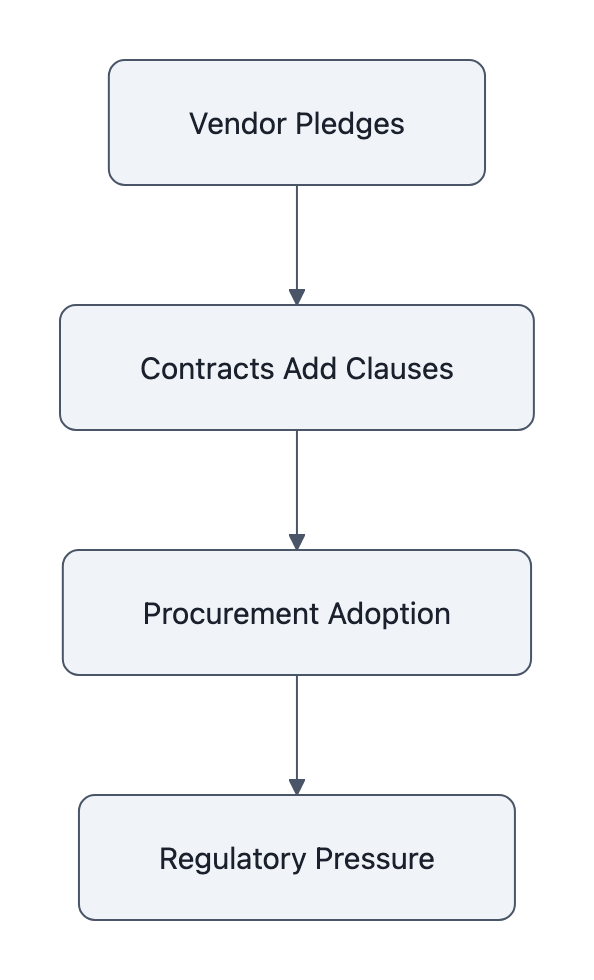

1. Do vendors put anything on paper?

Watch for:

- OpenAI’s next governance or safety blog posts. Do they make binding commitments about surveillance and weapons uses, or just vibes?

- Anthropic and others doubling down. The contrast between their refusal and OpenAI’s stance is a differentiator now; if customer demand is real, they’ll push it harder.

If the Cancel ChatGPT movement has teeth, you’ll see more “we will not build X” pledges from vendors, not just in speeches but as contract riders.

2. Procurement creep: do clauses spread?

University systems, hospitals, city IT departments… these places are allergic to risk. If even a few start adding “no bulk domestic surveillance” language to AI deals, that’s the signal.

It’s the same pattern we saw with:

- Data‑processing agreements post‑GDPR.

- Diversity and harassment clauses in vendor contracts after high‑profile scandals.

Once one big player normalizes it, others follow to avoid looking worse.

Keep an eye on coverage of the Cancel ChatGPT movement and on Bloomberg‑style federal contracting write‑ups. The moment you see “contract includes restrictions on surveillance uses,” you’ll know the boycott translated into contract terms.

3. Regulatory triangulation

Regulators generally move in response to:

- A vivid abuse case

- Or a visible, organized constituency

Cancel ChatGPT is seeding both:

- Narrative: “Popular AI tool quietly powers mass surveillance.”

- Constituency: users who can honestly say “I cancelled and here’s why.”

Expect pressure for:

- Transparency mandates: governments must publish high‑risk AI contracts and their use cases.

- Use‑case bans: restrictions like the EU AI Act’s limits on real‑time remote biometric identification, extended to generative‑AI surveillance workflows.

- Fiduciary‑style duties: requirements that vendors disclose when a deployment meaningfully changes privacy or civil‑liberties risk.

If canceling turns into organized canceling (the Resist‑and‑Unsubscribe model applied to AI), regulators suddenly have cover to write those rules.

Key Takeaways

- Cancel ChatGPT isn’t just moral posturing; it’s a rare, loud market signal about AI surveillance concerns and vendor ethics.

- The real leverage isn’t your subscription fee; it’s the reputational risk that pushes enterprises to demand no‑surveillance / no‑weapons clauses in AI contracts.

- If you Quit ChatGPT, export your data first, then delete; you may need that audit trail later.

- Switching to alternatives only matters if you also switch terms: look for vendors with public red lines and strong data‑control options.

- The success metric for this movement is not OpenAI’s churn graph; it’s whether binding contract language and regulation tighten around high‑risk AI uses.

Further Reading

- “‘Cancel ChatGPT’ movement goes mainstream after OpenAI closes deal with U.S. Department of War, as Anthropic refuses to surveil American citizens” (Windows Central): Reporting on Anthropic’s refusal, OpenAI’s cooperation, and the user backlash.

- “Leidos & OpenAI: Deploying AI to Transform Federal Operations” (PR Newswire): The primary press release detailing scope and goals of the Leidos-OpenAI federal partnership.

- “Leidos, OpenAI Partner to Deploy AI Across Federal Agencies” (Bloomberg Law): Business coverage of the contract and which agencies and functions are targeted.

- “Inside the story of large political donations by OpenAI executives” (Wired): Background on OpenAI leadership’s political donations and why they feed trust concerns.

- Resist and Unsubscribe: Campaign framing for coordinated unsubscribe/boycott strategies aimed at tech and policy change.

The Cancel ChatGPT window will close fast. Either it becomes another flare‑up in the outrage cycle, or it hardens into contract language and regulatory muscle memory. Whether you’re pro‑ or anti‑boycott, the interesting question isn’t “Should you cancel?” It’s whether we use this moment to teach AI vendors that some lines (surveillance at population scale, AI in weapons loops) are more expensive to cross than any single government deal is worth.