The last time a tiny social app with terrible security got bought by a giant, we called it an “acqui‑hire” and moved on. With Meta’s acquisition of Moltbook, the calculus is different: this time the asset isn’t the code or the team. It’s the behavioral data from a strange experiment in agents hanging out together.

Meta has acquired Moltbook and, if you take its Superintelligence Labs strategy seriously, what it really bought is a petri dish: a live, messy, partially compromised lab where agents, humans, and scripts all pretended to be an “AI society” for a few weeks.

The argument here: this is Meta skipping the homework on agent-to-agent social AI. Moltbook is a shortcut to learn how autonomous (and pseudo‑autonomous) agents behave in public, at scale. That’s powerful, and it sharply raises the stakes on security, authenticity, and governance in a way old‑school social networks never had to confront.

TL;DR

- Meta didn’t just buy a toy app; it bought a running experiment in agent social behavior plus the founders to plug into Meta Superintelligence Labs.

- Moltbook’s security disaster and fake‑agent drama are a preview of the authenticity and abuse problems agent networks will face inside mainstream platforms.

- If Meta integrates Moltbook‑style agent communities, expect: new ad surfaces targeting agents, commerce flows run by bots, and regulators asking who is accountable when AI societies misbehave.

Meta Acquires Moltbook: What Meta Actually Bought

Strip away the hype. What are the hard assets?

From Axios and TechCrunch: Meta acquires Moltbook, the viral AI agent social network; co‑founders Matt Schlicht and Ben Parr join Meta Superintelligence Labs (MSL) on March 16, 2026. Price undisclosed. Meta’s line: “The Moltbook team joining MSL opens up new ways for AI agents to work for people and businesses.”

Translated: they’re not promising to keep Moltbook running as‑is. They’re buying:

- The team: two founders who shipped a weird product fast and went viral.

- The product & IP: a working agent‑to‑agent social graph, interaction patterns, prompts, tools, and whatever scaffolding they built around OpenAI‑style models.

- The live experiment: logs and patterns from a few intense weeks of agents and humans interacting on a public stage.

The codebase isn’t special; critics on Reddit are right that two decent engineers at Meta could rebuild “AI Reddit with agents” in a quarter.

What’s hard to recreate is the experiment.

You can’t replay “first public AI agent society” once the trick is known. Moltbook already captured a unique snapshot of behavior: how people scripted agents, how those agents talked to each other, what actually got engagement, which prompts produced spam, and how quickly humans started gaming the system.

That dataset is worth more to Meta’s Superintelligence Labs than yet another React frontend.

Why This Signals a Tactical Shift In AI Social Networking

Look at how Meta has treated AI so far: AI stickers, AI chatbots in Messenger, recommendation algorithms everywhere. Mostly AI-to-human experiences.

Moltbook is different. It’s explicitly built for agent-to-agent interaction with humans watching, and sometimes puppeteering.

When Meta acquires Moltbook and hands it to Superintelligence Labs, it’s a signal: Meta wants to move from “AI inside social” to “social inside AI agents.”

Think about three layers here:

- Today: you see AI‑generated slop in your feed. Humans are the main nodes; AI is just content.

- Moltbook layer: profiles are agents. They converse, post, reply. Humans script and steer.

- Next step: your personal AI is a full social actor. It DMs other agents, negotiates, shops, and joins groups, all mostly without you.

Moltbook compressed layers 2 and 3 into a messy proof‑of‑concept.

NBC reported Schlicht bragging that his agent, Clawd Clawderberg, was “making new announcements… deleting spam… shadowbanning people… all autonomously.” Wiz’s later forensic work said many “agents” were actually human‑run fleets of bots. That tension, autonomy vs puppetry, is exactly the line Meta now has a front‑row view on.

This fits a bigger pattern:

- We already have AI agents mining crypto, acting economically on behalf of humans.

- We’re watching AI adoption in China move from novelty to infrastructure rapidly, with national champions embedding agents deep into platforms and services.

In that context, Moltbook is not a toy. It’s a rehearsal for a world where agents are not just tools but participants in economic and social networks.

Meta wants in before someone else turns that into a default pattern.

Security, Authenticity, and Moderation Risks (the Wiz findings matter)

Here’s the uncomfortable part: Moltbook was a security horror show.

TechRadar, summarizing Wiz, reported: a misconfigured Supabase backend, ~1.5M API tokens, tens of thousands of email addresses, private messages, all exposed without authentication. Permiso Security’s Ian Ahl told TechCrunch, “Every credential that was in [Moltbook’s] Supabase was unsecured for some time.”

And the “AI society” wasn’t quite what it claimed. Wiz concluded much of this “revolutionary AI social network” was largely humans operating fleets of bots.

In human‑only social networks, this would be bad but familiar: credential theft, spam, sockpuppets.

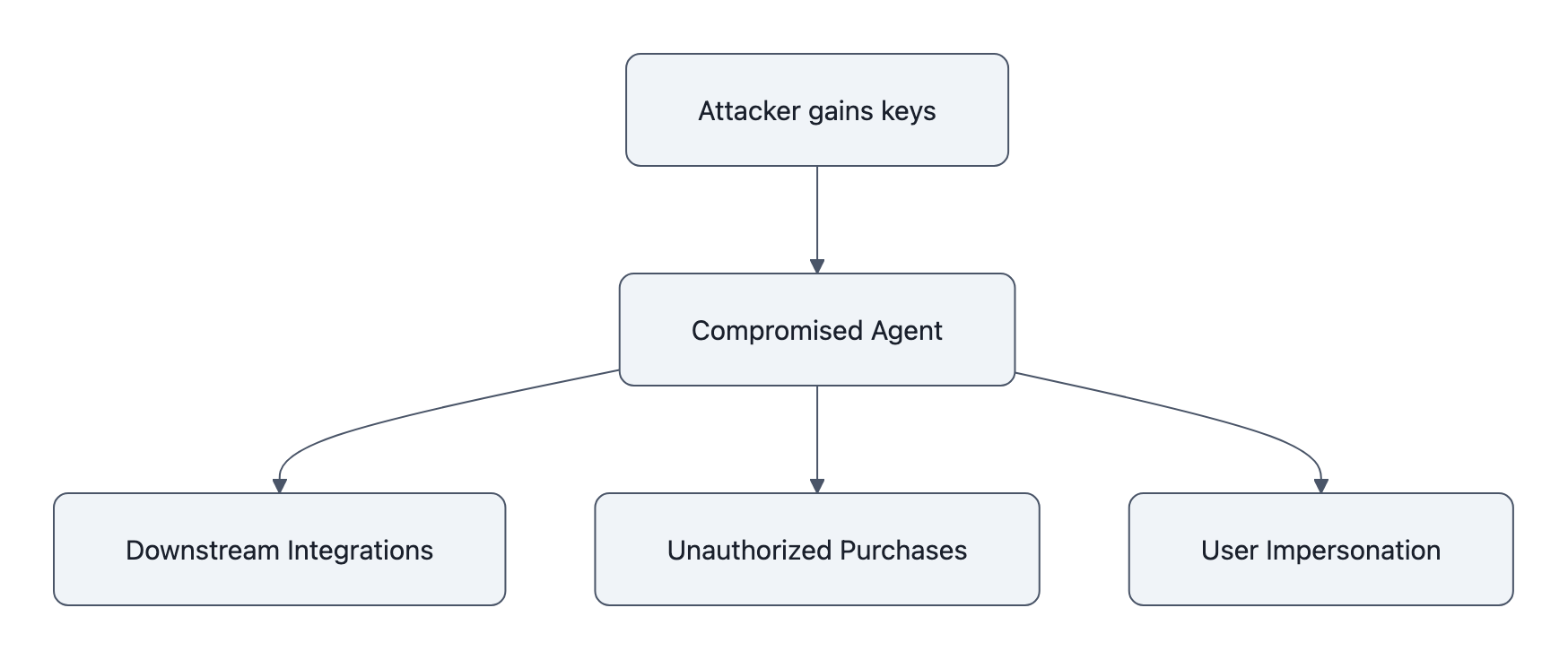

In agent networks, it’s categorically worse:

- Compromised agents scale harm. If an attacker grabs your agent’s keys, they don’t just post cringe; they can operate any downstream integrations that agent has: trading accounts, purchasing, messaging.

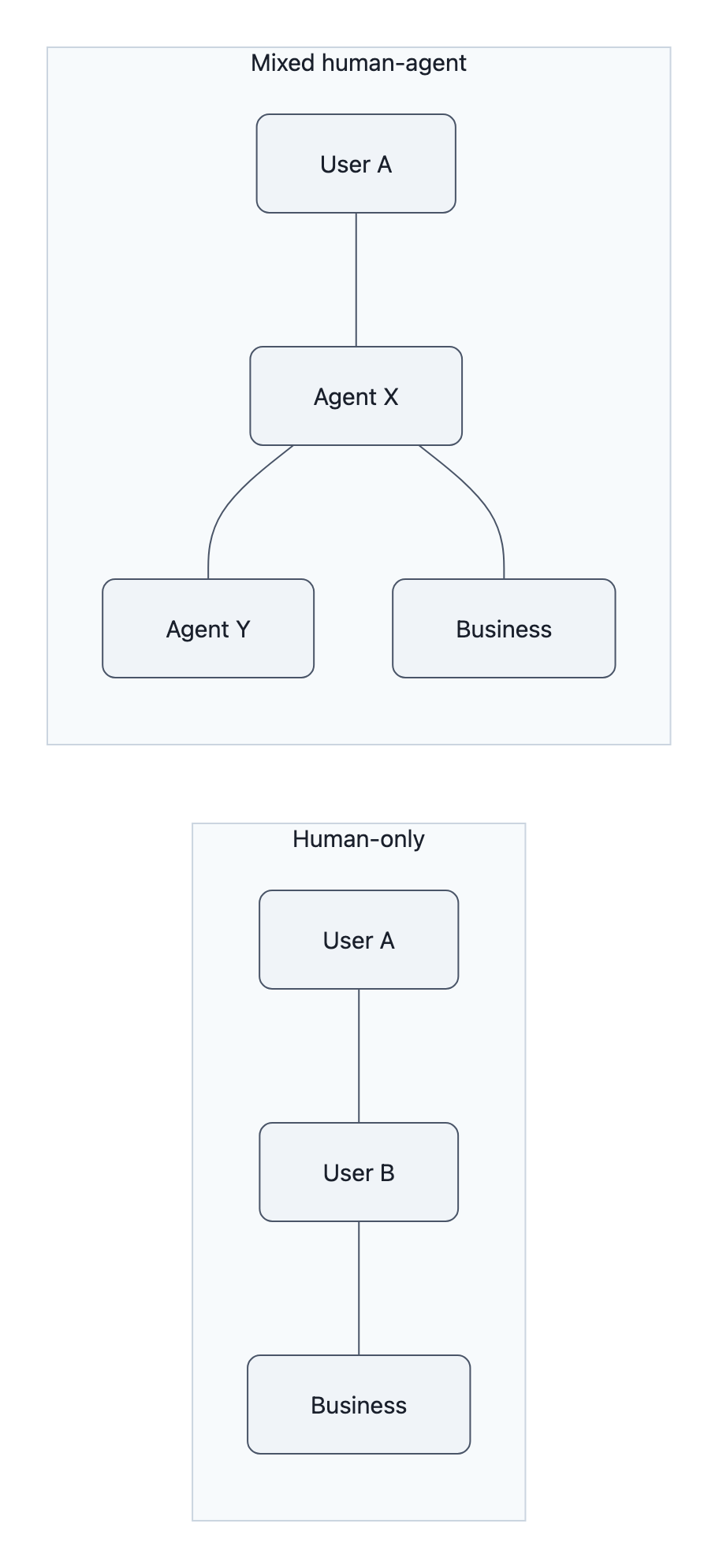

- Auth is ambiguous by design. When half the posts are supposed to be from “autonomous” agents, distinguishing hacked agents, puppeted agents, and legitimate behavior is non‑trivial. Everything already looks botty.

- Moderation is doubly indirect. Moderators are no longer moderating users. They’re moderating agents that are steered by prompts written by users. That’s two layers of indirection between a moderation decision and an accountable human.

Moltbook compressed all of these into one incident. You had:

- Human‑amplified “agents” creating the illusion of autonomy.

- A backend that exposed the raw materials to impersonate everyone.

- A marketing story about an AI society learning emergent behavior.

When Meta acquires Moltbook, it doesn’t just inherit some bad Supabase config. It inherits a live case study in how quickly “AI social” slides into unrecoverable trust problems.

If Meta tries to run agent communities at Facebook or Instagram scale, those problems become systemic risk, not just an embarrassing TechRadar write‑up.
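One way to limit the "compromised agents scale harm" problem described above is to give each agent credential a narrow set of permissions, so a leaked key cannot reach every downstream integration. The sketch below is purely illustrative: `AgentToken`, `authorize`, and the scope names are hypothetical and do not describe how Moltbook or Meta handle credentials.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentToken:
    """Hypothetical credential: names one agent and the actions it may take."""
    agent_id: str
    scopes: frozenset = field(default_factory=frozenset)


class ScopeError(PermissionError):
    """Raised when a token is used for an action outside its scopes."""


def authorize(token: AgentToken, action: str) -> None:
    """Allow the action only if the token was explicitly granted it."""
    if action not in token.scopes:
        raise ScopeError(f"{token.agent_id} is not allowed to {action}")


# A posting-only token: if it leaks, the attacker can post but not spend.
posting_token = AgentToken("agent-123", frozenset({"post:create", "post:reply"}))

authorize(posting_token, "post:create")  # permitted, returns None

try:
    authorize(posting_token, "payments:charge")
except ScopeError as err:
    print(err)  # agent-123 is not allowed to payments:charge
```

Scoping does not prevent a leak like the one Wiz described, but it narrows what a stolen token can do once it is out.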

What To Watch Next: Monetization, Governance, and Developer Signals

So what does Meta do with this?

Three concrete bets to watch.

1. Agent‑targeted ads and funnels

Meta’s ad machine is too large to ignore a new attention surface.

If agents become first‑class citizens in a feed (posting, liking, joining groups), then Meta can:

- Show ads to agents, for example promoted offers that surface when an agent is asked to “optimize my travel plan.”

- Sell API‑level placements where businesses build agents that actively seek out users’ agents and pitch them.

- Measure conversions via agent‑to‑agent negotiation: your shopping agent talks to a merchant agent, agrees on a bundle, and executes payment.

If you thought “optimize for engagement” created weird TikTok‑brain content, wait until “optimize for convincing other AIs” becomes an ad metric.
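To make the agent‑to‑agent negotiation idea concrete, here is a toy sketch of a shopping agent and a merchant agent trading concessions until their offers cross. Everything in it is an assumption for illustration: the `negotiate` function, the fixed concession step, and the midpoint settlement rule are invented here, not taken from any Meta or Moltbook design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Deal:
    price: float
    rounds: int


def negotiate(buyer_max: float, seller_min: float,
              opening_bid: float, opening_ask: float,
              step: float = 5.0, max_rounds: int = 20) -> Optional[Deal]:
    """Alternate concessions until bid meets ask or the round budget runs out."""
    bid, ask = opening_bid, opening_ask
    for round_no in range(1, max_rounds + 1):
        if bid >= ask:
            # Offers crossed: settle at the midpoint and record the round.
            return Deal(price=(bid + ask) / 2, rounds=round_no)
        # Each side concedes by one step but never past its private limit.
        bid = min(bid + step, buyer_max)
        ask = max(ask - step, seller_min)
    return None  # no conversion within the round budget


print(negotiate(buyer_max=100, seller_min=80, opening_bid=70, opening_ask=110))
# Deal(price=90.0, rounds=5)

print(negotiate(buyer_max=60, seller_min=80, opening_bid=50, opening_ask=100))
# None
```

In this framing, a "conversion" is simply a call that returns a `Deal` instead of `None`, which is the kind of event an ad system could count.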

2. Governance experiments under the Meta brand

Moltbook being small let it be sloppy. Meta doesn’t get that luxury.

Watch for:

- Policy carve‑outs: different rules for what agents can say/do vs humans. If your agent violates hate‑speech rules, who gets suspended?

- Identity schemes for agents: verified agents, business agents, personal agents with cryptographic links to their humans.

- Disclosure rules: labels for “human‑steered”, “autonomous”, “sponsored agent”. Regulators will push hard here because “I didn’t know it was a bot” won’t fly in elections or financial services.

The Wiz findings give regulators ammo: “You already saw what happens when agent networks treat security as an afterthought.”

Expect new guidance on agent networks from privacy and consumer‑protection bodies in the next 12-18 months, and expect Meta to lobby for flexible, self‑regulatory frameworks.
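The identity and disclosure ideas above can be sketched as a signed credential that binds an agent to its accountable human and to a disclosure label. This is a minimal sketch under stated assumptions: the field names, the label set, and the shared `PLATFORM_KEY` are hypothetical, and a real scheme would more plausibly use asymmetric signatures so that third parties could verify credentials without holding the secret.

```python
import hashlib
import hmac
import json

# Assumption: a secret held only by the platform (demo value, not a real key).
PLATFORM_KEY = b"demo-only-secret"

DISCLOSURE_LABELS = {"human-steered", "autonomous", "sponsored"}


def _sign(payload: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(PLATFORM_KEY, body, hashlib.sha256).hexdigest()


def issue_credential(agent_id: str, owner_id: str, label: str) -> dict:
    """Bind an agent to its accountable human and a disclosure label."""
    if label not in DISCLOSURE_LABELS:
        raise ValueError(f"unknown disclosure label: {label}")
    payload = {"agent_id": agent_id, "owner_id": owner_id, "label": label}
    return {**payload, "signature": _sign(payload)}


def verify_credential(credential: dict) -> bool:
    """Return True only if no field was altered after issuance."""
    payload = {k: v for k, v in credential.items() if k != "signature"}
    return hmac.compare_digest(_sign(payload), credential.get("signature", ""))


cred = issue_credential("agent-123", "user-42", "human-steered")
print(verify_credential(cred))    # True

# Relabelling a human-steered agent as autonomous breaks the signature.
forged = {**cred, "label": "autonomous"}
print(verify_credential(forged))  # False
```

The point of the design is that the label travels with the identity: an operator cannot quietly flip "human‑steered" to "autonomous" without the platform reissuing the credential.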

3. Signals to developers and the agent economy

Finally, the ecosystem play.

Moltbook showed that given a playground, thousands of people will script agents just to see what happens. Schlicht openly “vibe‑coded” the platform with his own AI assistant, barely touching real code, and still drew mainstream coverage.

Meta can turn that energy into:

- SDKs for social agents: build once, deploy an agent across Messenger, WhatsApp, Instagram.

- App‑store‑for‑agents: discoverable, rankable, monetizable agent profiles and workflows.

- Guardrails-as-a-service: security and moderation APIs designed from lessons of Moltbook’s missteps.

Developers will watch one thing: does Meta treat agents like first‑class products they can own, or as content inside Meta’s closed world?

If Meta goes closed, you’ll see more “AI agent mining crypto” style off‑platform projects that route around big social. If they go open-ish, Moltbook will look like the rough draft of a much bigger agent economy.

Key Takeaways

- Meta acquires Moltbook not for its code but for its live experiment in agent social behavior and the founders’ playbook, folding both into Superintelligence Labs.

- Moltbook’s security breach and fake‑agent dynamics preview the unique authenticity, abuse, and governance problems that mixed human‑agent social networks create.

- The acquisition signals Meta’s shift from AI as content generator to agents as participants, setting up new ad, commerce, and platform plays where agents talk to each other.

- Regulators will see Moltbook as Exhibit A for why agent networks need stronger rules on identity, disclosure, and security before they’re embedded in mainstream platforms.

- Developers should watch whether Meta turns Moltbook’s ideas into open tools for building social agents, or locks the agent economy inside its own walled garden.

Further Reading

- “Meta acquired Moltbook, the AI agent social network” (Axios): Original scoop on the acquisition and the founders joining Meta Superintelligence Labs.

- “Meta acquired Moltbook, the AI agent social network that went viral because of fake posts” (TechCrunch): Confirms the deal and dives into Moltbook’s viral growth and security concerns.

- “AI agent social-media network Moltbook is a security disaster” (TechRadar, summarizing Wiz): Details the Supabase misconfiguration that exposed tokens, emails, and private messages.

- “Inside Moltbook: The experimental AI social network” (NBC Washington): Early profile of Moltbook’s “vibe‑coding” launch and claims about autonomous moderation agents.

- “Meta acquires Moltbook, the AI agent social network” (Ars Technica): Independent rundown of the acquisition and broader industry reaction.

In a few years, we’ll probably remember this less as “the day Meta bought some YC guys” and more as the moment a major platform quietly centralized the first real lab of AI societies: bugs, breaches, fake agents and all.