The fake James Talarico smiles into the camera like he’s in on a joke only he can hear.

For 85 seconds he reads old tweets in a crisp blazer, reacts to them, praises them, almost like a YouTuber doing commentary on himself. Except he never recorded any of it.

He’s an AI puppet in a Senate Republican ad, the latest example of deepfakes in elections.

There’s a tiny “AI GENERATED” disclaimer ghosted in the corner. Blink and you miss it. The message is clear anyway: don’t worry, this is just another campaign video.

That’s the real story here.

Not that the deepfake is hyper‑realistic, though digital forensics expert Hany Farid told CNN most viewers wouldn’t notice the seams.

The story is how quickly synthetic people are being folded into the grammar of normal politics.

TL;DR

- Campaigns aren’t waiting for perfect photorealistic deepfakes; they’re treating AI personas and sliced-up clips as just another kind of B‑roll.

- Tiny on‑screen disclaimers and “dramatic reading” jokes aren’t safeguards; they’re legal armor that makes it easier to keep escalating.

- The danger is that voters learn to discount everything on screen, which hurts real accountability more than it hurts the people playing with AI.

Why “deepfakes in elections” matter now

The Talarico deepfake doesn’t appear out of nowhere.

Weeks earlier, the National Republican Senatorial Committee (NRSC) rolled out a press package: “James Talarico’s Week of Crazy Takes.” Clips, quotes, snippets, like a highlight reel cut for maximum outrage.

One six‑second video chopped his border analogy in half.

In the debate he’d said, “Our southern border should be like our front porch. There should be a giant welcome mat out front and a lock on the door.” The NRSC‑boosted clip stopped before the lock. AFP later labeled it “deceptively edited” and traced the viral version back to NRSC posts.

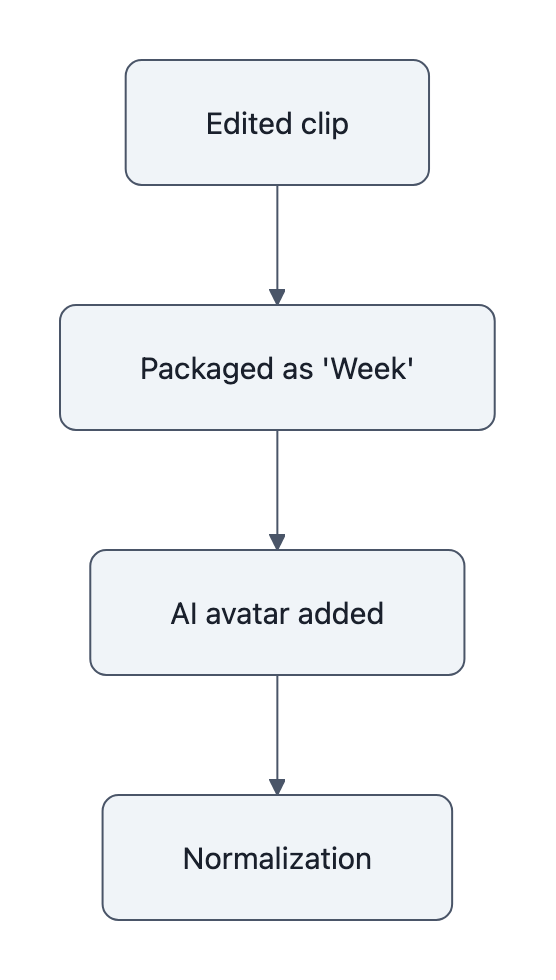

So the sequence goes like this:

- Start with standard attack‑ad editing: chop the sentence, change the meaning.

- Package the clips as a “crazy takes” week.

- Promote a full AI avatar to “visualize” his tweets, with added lines like “oh, this one is so touching” that he never said.

On paper, this is about deepfakes in elections.

In practice, it’s about continuity. Each step is only a small tweak from what came before.

That’s why it matters now: the line isn’t being crossed in one dramatic leap.

It’s being walked across in 5‑second increments.

If this feels familiar, it should.

The same NRSC experimented with an AI Chuck Schumer in 2025, complete with a quiet disclaimer, and other campaigns have tried Schumer‑style AI ads or Jon Ossoff deepfakes in local races. PBS has been documenting that march from novelty to routine ever since.

The point isn’t “AI shows up.”

The point is “AI shows up again, but slightly more normal.”

Normalization, not novelty: how campaigns are weaponizing AI

Listen closely to how the NRSC defends the Talarico video.

A source told CNN that AI is a “consistently effective” way to highlight a candidate’s statements. These are “Talarico’s real words,” the source said, and the committee simply chose to “visualize them for voters using a modern tool, within all legal and ethical parameters.”

That phrase, modern tool, does a lot of work.

It collapses an AI‑generated synthetic human into the same category as a slow zoom or ominous string music.

Just another knob on the console.

And the structure around the ad reinforces that story:

- The narrator calls it a “dramatic reading,” a joke that makes the deepfake feel like sketch comedy rather than evidence.

- The “AI GENERATED” tag lives in the corner, technically present, practically ignorable.

- The extra commentary (“oh, I love this one”) is never acknowledged as fabricated; it rides along under the umbrella of “his own words.”

This is a pattern, not a glitch.

CBS Atlanta noted a Georgia campaign ad using an Ossoff deepfake with a small AI disclaimer. The Guardian described the NRSC’s earlier Schumer deepfake the same way: artificially generated video, tiny warning, big rhetoric.

When you look at deepfakes in elections through this lens, the strategy is clear:

- Blend AI output with real material so the ad feels rooted in truth.

- Label the AI lightly enough to deflect future criticism but not enough to scare viewers.

- Laugh at the artifice so anyone who objects looks humorless or “afraid of technology.”

The novelty is gone by design.

What’s left is a new norm: candidates can be puppeted so long as the fine print says the strings are digital.

Why this will work: attention, editing, and platform design

If you’ve ever watched a campaign video in your feed, you know the form.

Muted autoplay. Captions. You glance for two seconds before scrolling.

In that environment, disclaimers are not truth signals. They’re legal shields.

Platforms encourage this. Labels are small, out of the way, often visually identical to “paid for by” notices you’ve learned to ignore. Regulators ask for disclosures; designers tuck them into corners that don’t interrupt the narrative.

Now layer in AI.

We already live in a world where deceptively edited clips drive the conversation.

AFP had to publish a whole explainer just to reattach the missing half of one Talarico sentence. That’s old‑fashioned editing, no AI required.

Deepfakes don’t replace those tactics; they supercharge them:

- An AI avatar means you never run out of footage. No need to wait for a real gaffe; you can animate any past tweet into a fresh “moment.”

- Synthetic reactions (“oh, I love this one”) convey emotion that never happened but feels like behind‑the‑scenes access.

- Because the words are technically “real,” fact‑checking becomes a semantic fight about context instead of a clean “this never happened.”

Voters respond to structure more than to labels.

If a video looks like a direct‑to‑camera confession, cuts like one, and circulates like one, the brain tags it as “something he said,” not “AI sketch.”

Deepfake detection tools won’t fix this.

Most people don’t run forensics on political ads before they hit share. And even if they did, the NRSC can point to the corner label and say: We told you it was AI. What more do you want?

The deeper shift is psychological: once synthetic speech is a normal part of the campaign diet, “I saw him say it” stops being the gold standard of political evidence.

That doesn’t just make political deepfakes more effective.

It makes genuine, recorded misconduct easier to deny.

Three signals that show the threat is escalating

If you’re trying to tell “harmless stunt” from “new baseline,” watch for these three signals.

1. The disclaimers are getting smaller and softer.

The Schumer and Ossoff deepfakes carried small “AI‑generated” labels.

The Talarico ad does too, but now it’s wrapped in a “dramatic reading” joke. The warning is shifting from “this is unusual” to “this is just the bit.”

When disclaimers migrate from bright red banners to faint corner text, that’s not graphic design. It’s a temperature check: how little friction can we add and still claim compliance?

2. The AI is moving from satire to surrogate.

We’re past the point of fake world leaders saying outrageous things in obviously distorted voices. The CNN piece quotes a digital forensics expert calling the Talarico face and voice “very good,” with only minor sync issues.

More important: the avatar isn’t doing something wild.

He’s calmly reinforcing a narrative: this guy is proud of the most controversial interpretations of his words.

When synthetic candidates become calm narrators of their own extremes, you’ve crossed from parody into character assassination.

3. The same committees keep showing up.

This isn’t a random meme‑war from anonymous accounts.

The NRSC is a national party committee with lawyers, pollsters, and long memories. It has now:

- Promoted deceptively edited clips, per AFP and AP.

- Packaged a week of “crazy takes” on its official site.

- Released multiple AI‑generated political ads with lean disclaimers, as documented by CNN and The Guardian.

Repeat behavior tells you this is a strategy, not a one‑off.

Each new ad doesn’t just persuade voters; it trains courts, platforms, and watchdogs to treat AI personas as “within all legal and ethical parameters.”

If you want a deeper systems view of this, we’ve written before about the AI content feedback loop that emerges when synthetic media feeds on its own engagement, and about how deepfakes in elections can blur the trail of responsibility after the fact.

Key Takeaways

- The most worrying deepfakes in elections today aren’t undetectable forgeries; they’re believable hybrids of real words and synthetic performance.

- Parties are using small disclaimers and humor to reframe AI puppets as “modern tools,” lowering the perceived ethical stakes.

- Old tactics such as deceptive editing and clipped sentences blend seamlessly with AI, making context disputes feel petty while the emotional impression sticks.

- The more often voters see synthetic candidates speaking, the less weight “I saw the video” will carry as political evidence.

- Watching who normalizes these tactics, and how often, tells you more about the threat than any single viral clip.

Further Reading

- Republicans release AI deepfake of James Talarico as phony videos proliferate in midterm races | CNN Politics: Details on the NRSC’s Talarico deepfake ad, expert analysis of its realism, and the committee’s defense of its tactics.

- Deceptively edited Talarico clip spreads after Texas Democratic primary win | AFP Fact Check: Shows how a border‑security quote was cut mid‑sentence and tracks the viral clip back to NRSC posts.

- Talarico became famous with viral videos. Can Republicans turn that against him? | AP: Explores how Republicans are mining Talarico’s viral moments to recast him as “too radical.”

- James Talarico’s ‘Week of Crazy Takes’ press materials | NRSC: The committee’s own package of clips and language framing Talarico as “radically out of touch.”

- Republican ad uses deepfake of Chuck Schumer | The Guardian: An earlier example of the NRSC using an AI Schumer, with small on‑screen disclaimers and strong rhetoric about “dystopian” tactics.

In a way, the Talarico avatar is a test audience member for the next few election cycles.

If he can stand there, half‑real and half‑invented, and we all shrug and keep scrolling, campaigns will learn the lesson: the future of persuasion isn’t about making perfect fakes. It’s about making fake things feel perfectly ordinary.