The headline story is simple: AI boosts creativity. Give people ChatGPT during a brainstorming or writing task, and their ideas score roughly 10-25% higher on novelty and usefulness than those of people working without it.

Except that’s not actually the interesting part.

The interesting part is what happens next: their skills often don’t improve, and everyone starts sounding the same.

TL;DR

- Experiments show AI boosts creativity for individuals but compresses idea diversity across groups and often leaves skills unchanged.

- The real split is emerging between people who use AI as a mirror on their own thinking and those who use it as an auto-complete button.

- If you don’t deliberately practice metacognition with AI (planning, monitoring, and revising your own moves), you’re not getting “more creative.” You’re just renting style.

AI boosts creativity, but in a very specific way

Let’s compress the evidence.

- In a Nature Human Behaviour study, people brainstorming with ChatGPT produced ideas that external judges rated as more creative than those from people without AI, across tasks like repurposing household objects.

- A Science Advances experiment with ~300 people writing micro‑stories found AI suggestions raised judged novelty and usefulness by around 10-25%, especially for weaker writers.

- In a large online design study (~800 people creating virtual cars), an AI that showed diverse example galleries led participants to explore more, spend longer, and design better‑rated cars.

So yes, AI boosts creativity in controlled settings. The numbers aren’t hype; they’re measured.

But look closely at how it does that.

The pattern across these studies is the same: AI doesn’t inject genius; it supplies scaffolding.

- It gives you starting points you wouldn’t have reached alone.

- It offers constraints and variations that push you off your first obvious idea.

- It provides instant feedback and alternatives so you can iterate instead of stall.

Think of it as handing every novice a strong first draft, plus a pile of variations.

That predictably lifts average quality. But it also quietly rewires what “being creative” even means.

The diversity trade‑off: AI as creativity’s central bank

Here’s the catch most “AI makes you more creative” takes skip over.

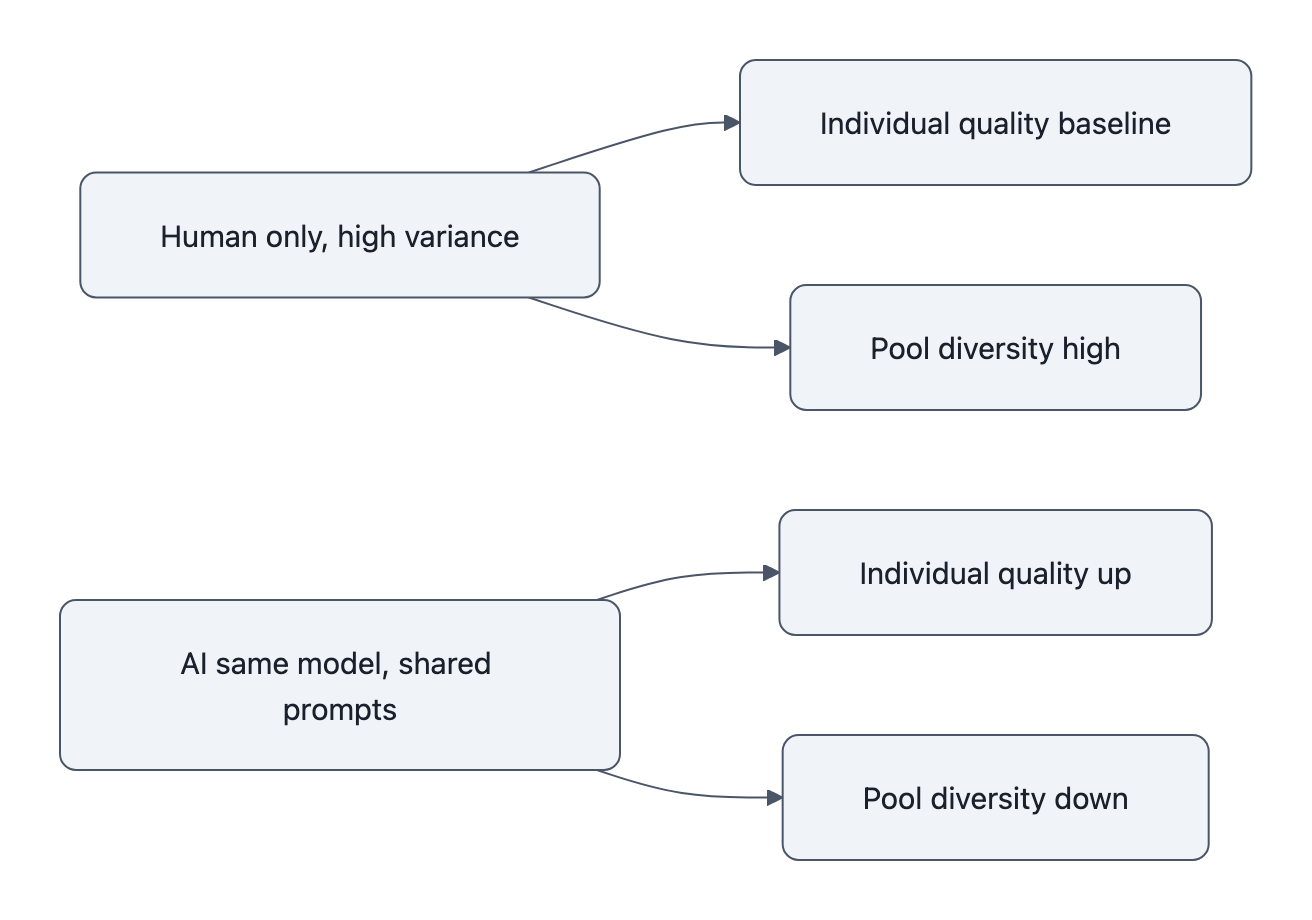

The same micro‑story study that saw individual novelty go up also found collective novelty went down. Participants with AI access wrote stories that were more similar to each other than the control group’s.

The Nature Human Behaviour team analyzing ChatGPT brainstorming found the same structural problem: as more people lean on the same model, the pool of ideas shrinks. You get more “pretty good” ideas, fewer strange ones.

This is not mysterious.

Large language models are trained to model what’s likely; you then ask them to be “creative” by sampling a bit away from the mean. If everyone hits the same model with similar prompts:

- Your personal ideas move toward the global training distribution.

- Everyone else’s ideas move there too.

- The group’s variance collapses, even if each individual steps up from their personal baseline.
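The collapse in group variance can be illustrated with a toy model. This is a minimal sketch under loud assumptions, not a reconstruction of any study's method: ideas are 2-D points, `model_point` is a hypothetical stand-in for the model's "typical" output, and `weight` is a made-up measure of how heavily each person leans on it.

```python
import itertools
import math
import random


def mean_pairwise_distance(ideas):
    """Average Euclidean distance over all pairs of idea vectors."""
    pairs = list(itertools.combinations(ideas, 2))
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


def blend_toward_model(idea, model_point, weight):
    """Move one idea a fraction `weight` of the way toward the shared model point."""
    return tuple((1 - weight) * x + weight * m for x, m in zip(idea, model_point))


rng = random.Random(0)  # fixed seed so the toy run is repeatable

# Assumption: 50 people whose unaided ideas scatter independently.
solo_ideas = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(50)]

# Assumption: everyone is pulled toward the same point by the same amount.
model_point = (0.5, 0.5)
weight = 0.6
assisted_ideas = [blend_toward_model(idea, model_point, weight) for idea in solo_ideas]

solo_spread = mean_pairwise_distance(solo_ideas)
assisted_spread = mean_pairwise_distance(assisted_ideas)
print(f"spread ratio (assisted / solo): {assisted_spread / solo_spread:.2f}")
```

In this toy setup the spread shrinks by exactly `1 - weight`, no matter where `model_point` sits. Whether each individual idea got better is a separate question the sketch does not model; it only shows why a shared pull narrows the pool.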

AI becomes a central bank for originality: it smooths out local spikes, stabilizes returns, and erases some of the weird outliers that used to exist when people free‑wheeled independently.

From a manager’s perspective, this is extremely appealing. The Harvard/Organization Science work on human‑AI collaboration in innovation challenges found AI‑assisted teams produced ideas that were more implementable and valuable on average than human‑only crowds, even if the human‑only group sometimes hit higher novelty peaks.

If you’re running a product roadmap, “fewer bizarre dead‑ends, more bankable ideas” sounds great.

If you’re worried about culture, research, or art converging on the same handful of tropes, less so.

The point is not that AI “kills originality.” It’s that AI centralizes the baseline. Like any central bank, it’s stabilizing, and that stability has a cost.

AI doesn’t teach you much unless you’re watching yourself think

There’s a second uncomfortable detail in the “AI boosts creativity” story.

The Rice University field experiment that followed employees in a real organization found that generative tools increased creativity only when people used metacognitive strategies: planning how to use the tool, monitoring what it did, and adjusting course.

When workers just asked for ideas and picked something, their output didn’t improve meaningfully.

This matches another pattern we’ve seen before: AI helps you write faster but teaches you less. If the model does the hard part and you don’t engage with the steps, your performance on this task goes up while your underlying skill graph barely moves.

Generative AI is very good at:

- Eliminating blank‑page panic.

- Filling in missing domain knowledge.

- Producing shippable work on your behalf.

It is very bad at:

- Forcing you to articulate your own reasoning.

- Making you inspect why something works or fails.

- Slowing you down enough to encode the skill.

If you treat AI as an autocomplete engine for creativity, you get performance without learning. Your work looks better, but you are just as dependent as you were on day one.

If you treat it as a metacognitive mirror (a way to externalize and interrogate your own thinking), you can turn the same tool into a tutor.

That’s the actual divide opening up: not “AI users vs. non‑users,” but prompt‑metacognitive users vs. button‑pressers.

It only becomes a skill if you practice with it

A useful analogy here is weight machines at a gym.

If you set the pin very light and let the machine guide your motion, you’ll move a lot of metal and feel productive. But you’ve offloaded balance, stabilization, and range awareness to the machine. You’re doing work, but you’re not learning the movement.

Most current AI use looks like that.

The Rice study’s metacognitive strategies (planning, monitoring, adjusting) are basically “mindful lifting”:

- Planning: What’s the creative move I’m trying to practice on this rep? Structure? Imagery? Constraint handling?

- Monitoring: Which part of this output came from my idea vs. the model’s interpolation? Where did I get lazy?

- Adjusting: How do I tweak the prompt, constraints, or my own sketch to push me into a slightly harder zone?

Without that loop, AI boosts creativity the same way your more talented group partner boosted your grade in school. You get the A. You don’t get their skills.

We’ve already written about the AI content feedback loop: models trained on their own output gradually homogenizing the web. There’s a parallel skill feedback loop on the human side:

- More AI dependence → less deliberate practice → shallower human skill → more pressure to rely on AI.

Breaking that loop is less about model alignment and more about user alignment with their own cognitive process.

How to use AI to grow creative skills (not outsource them)

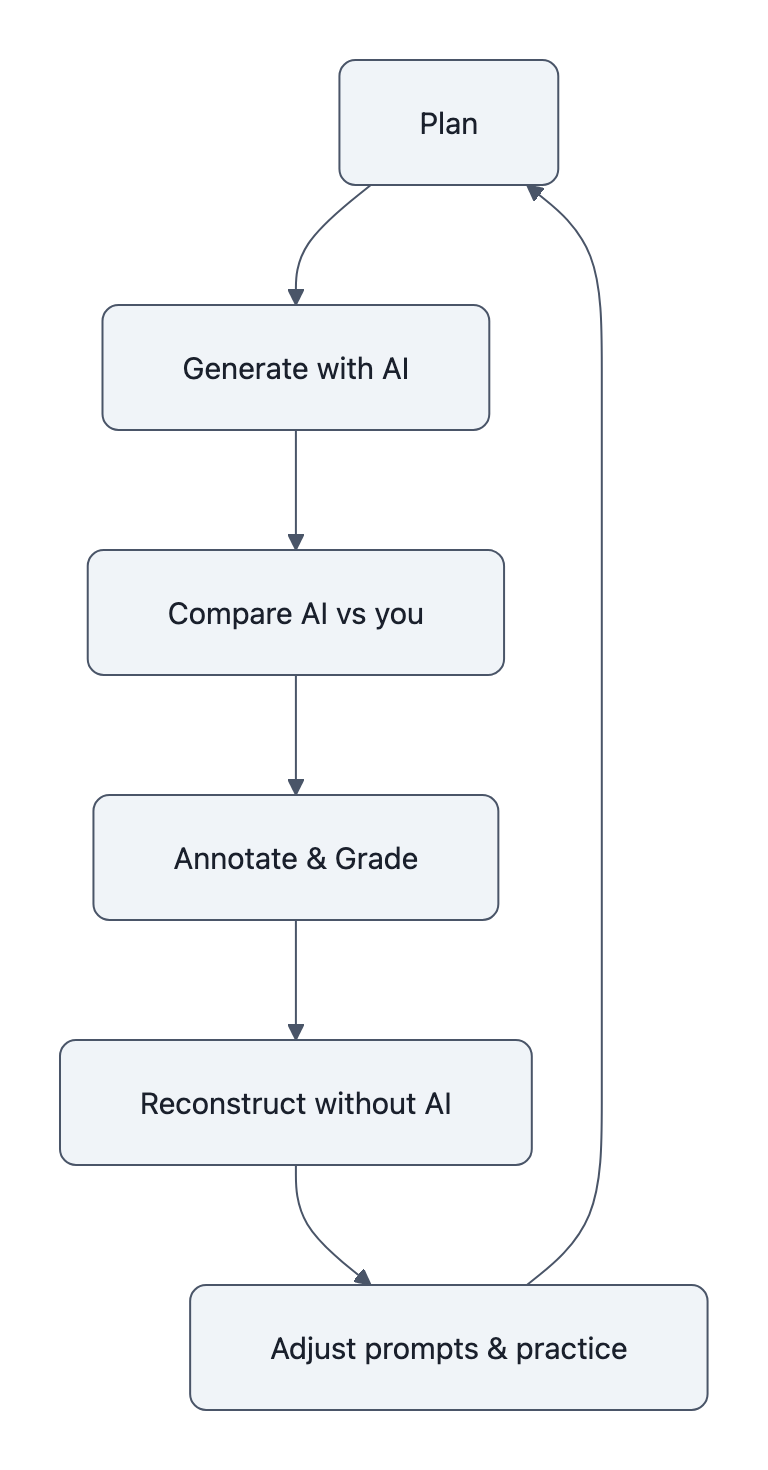

“Use AI as a tutor, not a typewriter” sounds nice. What does it look like in practice?

Three patterns, all metacognitive on purpose.

1. Reverse‑prompt your own drafts

Instead of asking the model for ideas, you write the first pass. Then:

- Ask the model: “Write the prompt you think would have produced this.”

- Compare its inferred prompt to what you thought you were trying to do.

- Now ask: “Generate three alternative outputs that push harder on [specific dimension: risk, humor, structure, constraint].”

You’re not just seeing alternatives; you’re seeing how your internal prompt differs from the external one. That gap is your skill map.
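If you want to repeat this routine on every draft, the two model-facing steps can be kept as small templates. This is a minimal sketch: the function name `reverse_prompt_steps` and the sample draft are hypothetical, and the code only builds prompt text. It does not call any model.

```python
def reverse_prompt_steps(draft: str, dimension: str) -> list[str]:
    """Return the two prompts of the reverse-prompting routine, in order."""
    # Step 1: ask the model to infer the prompt behind your own draft.
    infer_prompt = (
        "Write the prompt you think would have produced this.\n\n"
        f"---\n{draft}\n---"
    )
    # Step 2: ask for alternatives along one dimension you chose yourself.
    push_prompt = (
        "Generate three alternative outputs that push harder on "
        f"{dimension}."
    )
    return [infer_prompt, push_prompt]


# Hypothetical draft and dimension, for illustration only.
steps = reverse_prompt_steps(
    draft="The lighthouse keeper collected broken clocks.",
    dimension="risk",
)
for number, prompt in enumerate(steps, start=1):
    print(f"Step {number}:\n{prompt}\n")
```

The comparison between the inferred prompt and your own intent is deliberately left out of the code: that step is the practice, so it stays manual.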

2. Force diversity before refinement

To avoid the “central bank” effect on your own thinking, separate divergence from convergence.

- Divergence pass: “Give me 10 radically different angles on this problem. Make at least 3 that you expect most people will hate.”

- Only then do you pick 2-3 and ask for expansions or hybrids.

You’re using AI to widen your personal search space, not to jump straight to the default “pretty good” answer the model gives everyone else.
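One way to hold yourself to that separation is to make the selection step impossible to skip. The sketch below is an assumption-heavy illustration: `ask_model` and `pick` are hypothetical callables you would supply, and `fake_model` is a stub standing in for a real model so the example runs offline.

```python
from typing import Callable


def diverge_then_converge(
    problem: str,
    ask_model: Callable[[str], str],
    pick: Callable[[list[str]], list[str]],
) -> str:
    """Run a divergence pass, require a human pick of 2-3 angles, then refine."""
    divergence_prompt = (
        f"Give me 10 radically different angles on this problem: {problem}. "
        "Make at least 3 that you expect most people will hate."
    )
    reply = ask_model(divergence_prompt)
    angles = [line.strip() for line in reply.splitlines() if line.strip()]

    # The human choice happens here, between the two model calls.
    chosen = pick(angles)
    if not 2 <= len(chosen) <= 3:
        raise ValueError("pick 2-3 angles before refining")

    convergence_prompt = "Expand or hybridize these angles:\n" + "\n".join(chosen)
    return ask_model(convergence_prompt)


def fake_model(prompt: str) -> str:
    """Stub model: returns ten numbered angles, or echoes a refinement request."""
    if prompt.startswith("Give me 10"):
        return "\n".join(f"angle {n}" for n in range(1, 11))
    return "REFINED -> " + prompt


result = diverge_then_converge(
    problem="naming a neighborhood bakery",
    ask_model=fake_model,
    pick=lambda angles: angles[:2],  # stand-in for your own judgment
)
print(result)
```

The design choice worth noticing is that refinement cannot start until `pick` returns two or three angles, which mirrors the "only then" in the steps above.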

3. Annotate the model’s work like a teacher

When the model produces something that feels “better than you,” don’t ship it.

Grade it.

- Highlight each section and write: “What is this doing? Why does it work? Where would it fail?”

- Ask the model to critique its own output, then critique the critique.

- Finally, rewrite the piece from scratch without looking, using only your annotations as a guide.

Now the AI output has become a worked example. The learning happens in your annotations and reconstruction, not in the first draft it handed you.

These are not productivity tricks. They’re practice protocols.

Used this way, AI boosts creativity in the only sense that matters long‑term: it rewires how you approach problems even when the tool is gone.

Key Takeaways

- Lab and field experiments show AI boosts creativity for individuals, by roughly 10-25% on judged novelty and usefulness in the micro‑story experiment, but often at the cost of narrower idea diversity across groups.

- Generative tools behave like creative scaffolding: great at lifting baselines, mediocre at teaching underlying skills unless you use them deliberately.

- The meaningful divide isn’t AI vs. no AI, but between people who practice prompt‑metacognition and those who treat models as automatic idea dispensers.

- Treat AI outputs as objects to annotate, reverse‑engineer, and vary, not as final answers, and you convert short‑term performance gains into long‑term skill.

Further Reading

- ChatGPT decreases idea diversity in brainstorming | Nature Human Behaviour: Shows how AI raises individual creativity scores while shrinking the diversity of idea pools.

- Scientists discover AI can make humans more creative | ScienceDaily: Swansea University’s MAP‑Elites study on AI galleries, engagement and exploration in design tasks.

- AI found to boost individual creativity, at the expense of less varied content: Science Advances summary of the micro‑story experiment where AI lifts judged novelty but reduces aggregate uniqueness.

- The Creative Edge: How Human‑AI Collaboration is Reshaping Problem‑Solving: Harvard D^3 writeup on human‑AI teams beating human‑only crowds on value and implementability.

- AI tools aren’t a creativity machine on their own, Rice expert says: Field experiment showing AI only helps creativity when users adopt metacognitive strategies.

In a world where everyone can summon decent ideas on demand, the scarce resource is no longer output. It’s people who still know how their own ideas got there.