Upload a random street photo, get back GPS coordinates within tens of meters. That’s no longer a closed SaaS demo; it’s something a college student ships on GitHub and research labs publish alongside a reproducible benchmark called GeoBench.

Open-source image geolocation is not just another clever model release. It’s the moment a niche OSINT superpower becomes a normal software dependency for any newsroom, police department, or risk team with a Python stack.

TL;DR

- Open-source image geolocation makes “where was this photo taken?” a commodity API, not a specialist craft.

- Netryx and GeoVista show that precise, global photo geolocation can run locally and reproducibly, even if it still fails in messy real-world edge cases.

- The real shift isn’t accuracy; it’s absorption. Once this ability is open and cheap, institutions will normalize using it far faster than norms or oversight can catch up.

Why open-source image geolocation matters right now

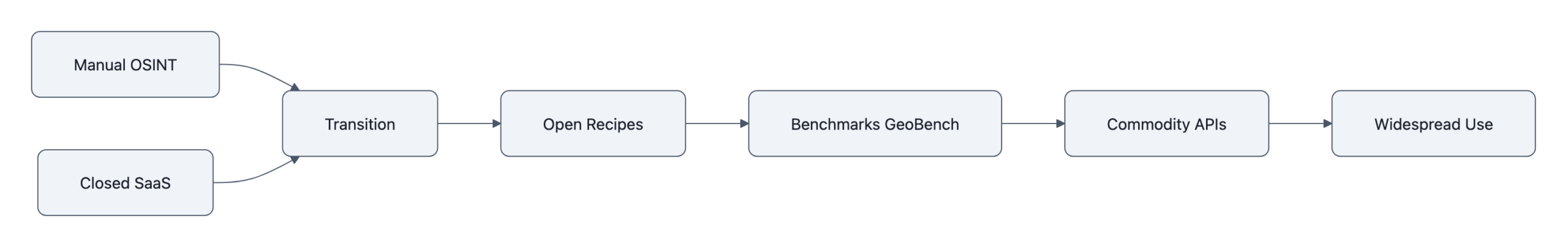

For a decade, precise image geolocation lived in two places:

- patient OSINT volunteers manually matching rooftops on Google Earth, and

- opaque commercial tools sold to governments and large enterprises.

Now we have two signals at once:

- Netryx: a local-first pipeline that matches any street-level photo against a prebuilt index of Street View panoramas, claiming sub‑50m accuracy on typical city scenes.

- GeoVista: a research model that treats geolocation as an agentic reasoning problem (zoom into details, call web search, iterate). It reports 72.7% city-level accuracy and 52.8% of predictions within 3 km on its GeoBench dataset, close to models like Gemini 2.5 on that benchmark.

Individually, those are impressive engineering results.

In combination, they mark a transition: geolocating a photo goes from a specialist skill to a standard capability, like spellcheck or face detection.

Once that happens, the question “can this be done?” stops mattering. The questions become “who is doing it by default?” and “what does that do to the people on the other side of the lens?”

How open-source image geolocation (Netryx & GeoVista) actually works

Both Netryx and GeoVista are useful to understand not because you’ll run their exact code, but because they show the pattern of how this capability industrializes.

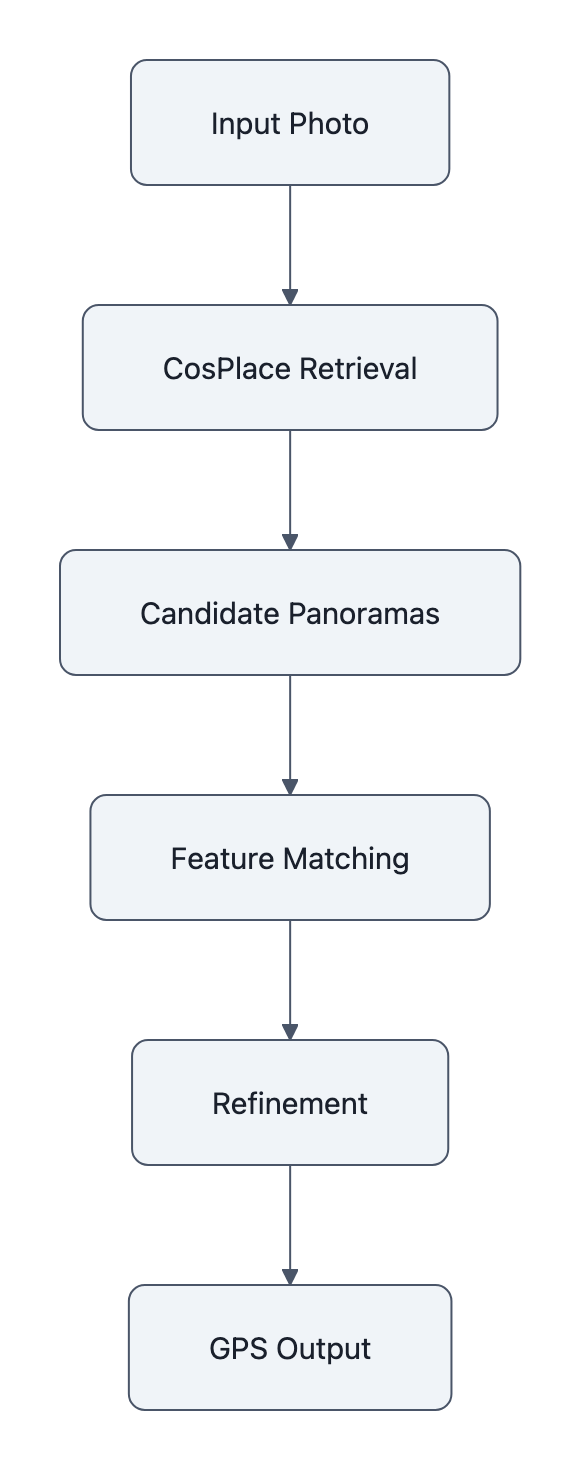

Netryx is almost aggressively practical. It chains three existing vision components:

- CosPlace: turns every Street View panorama into a 512‑dimensional “place fingerprint” and retrieves the top few hundred candidates that look like your image.

- ALIKED / DISK + LightGlue: zooms in and checks geometric consistency between your image and candidate panoramas, filtering until one location fits.

- A refinement stage nudges heading and field-of-view to tighten the match.

Underneath the buzzwords, this is classic information retrieval: coarse similarity search, then precise verification. The important detail is that the whole thing runs locally on your GPU and never needs to upload your query image to a third-party API.
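To make the coarse-then-precise pattern concrete, here is a minimal sketch in plain Python. It is not Netryx’s code: the descriptors are random vectors rather than CosPlace outputs, set overlap stands in for keypoint matching with a geometric check, and every name (`retrieve`, `verify`, the index fields) is hypothetical.

```python
import math
import random

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec, index, top_k):
    """Stage 1 (coarse): rank every indexed panorama by descriptor similarity."""
    scored = [(cosine(query_vec, entry["vec"]), entry) for entry in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [entry for _, entry in scored[:top_k]]

def verify(query_keypoints, candidates, min_matches):
    """Stage 2 (precise): keep the candidate sharing the most local features.

    Set overlap is a toy stand-in for real feature matching.
    """
    best, best_count = None, 0
    for entry in candidates:
        count = len(query_keypoints & entry["keypoints"])
        if count > best_count:
            best, best_count = entry, count
    return best if best_count >= min_matches else None

# Toy index: 200 fake panoramas with made-up descriptors and keypoint ids.
random.seed(0)
DIM = 32  # toy size; the pipeline described above uses 512 dimensions
index = [
    {
        "id": i,
        "vec": [random.gauss(0, 1) for _ in range(DIM)],
        "keypoints": set(random.sample(range(10_000), 50)),
    }
    for i in range(200)
]

# Simulated query: a noisy view of panorama 42 with partly different keypoints.
target = index[42]
query_vec = [v + random.gauss(0, 0.05) for v in target["vec"]]
query_keypoints = set(list(target["keypoints"])[:30]) | {10_001, 10_002}

shortlist = retrieve(query_vec, index, top_k=20)
match = verify(query_keypoints, shortlist, min_matches=15)
print(match["id"] if match else "no confident match")
```

The `min_matches` floor is the part worth noticing: the verification stage can return nothing at all, which is the only point in this sketch where the pipeline is allowed to say it has no answer.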

GeoVista takes the opposite approach. It wraps a vision-language backbone in tools (zooming into parts of the image, issuing web searches, reading back snippets) and trains that loop with reinforcement learning. On GeoBench (1,142 curated images across 66 countries and 108 cities), it can tell you “this is in Barcelona, near Sagrada Família” with competitive accuracy versus big proprietary models, at least under benchmark conditions.
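The shape of such a tool loop can be sketched in a few lines. This is an illustration of the general pattern only, not GeoVista’s implementation: the tool names, the canned tool outputs, and the scripted `policy` function are all assumptions, and the reinforcement learning that trains the real model is not shown.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class AgentState:
    image_id: str
    notes: list = field(default_factory=list)  # (action, argument, result) tuples
    guess: Optional[str] = None

def zoom(image_id, region):
    """Hypothetical tool: crop a region and report what is legible there."""
    canned = {"top-left": "street sign: Carrer de Mallorca"}  # made-up output
    return canned.get(region, "nothing legible")

def web_search(query):
    """Hypothetical tool: return a text snippet for a query."""
    canned = {"Carrer de Mallorca": "A street in Barcelona passing Sagrada Família."}
    return canned.get(query, "no results")

def policy(state):
    """Stand-in for the vision-language model choosing the next action.

    A trained model would decide from the image and notes; this is a fixed script.
    """
    step = len(state.notes)
    if step == 0:
        return ("zoom", "top-left")
    if step == 1:
        return ("web_search", "Carrer de Mallorca")
    return ("answer", "Barcelona, near Sagrada Família")

def run_agent(image_id, max_steps=5):
    """Alternate between choosing an action and recording the tool result."""
    state = AgentState(image_id)
    for _ in range(max_steps):
        action, arg = policy(state)
        if action == "answer":
            state.guess = arg
            break
        result = zoom(image_id, arg) if action == "zoom" else web_search(arg)
        state.notes.append((action, arg, result))
    return state

state = run_agent("photo-001")
print(state.guess)
for note in state.notes:
    print(note)
```

Note that nothing in this loop forces the policy to stop short of an answer; whether a real agent declines to guess depends entirely on how the model behind `policy` was trained.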

Two things matter here:

- The recipes are public. The GitHub repos show how to wire retrieval, feature matching, and agentic tool use into a working pipeline. This is “AI builds AI” in practice: once a few teams demonstrate the pattern, other developers can swap pieces, tune for their regions, or integrate with in-house data.

- Benchmarks exist. GeoBench gives everyone the same test set and metrics. That’s not just about progress; it’s about AI accountability. If a vendor claims city-level accuracy, you can ask “on GeoBench or on what, exactly?”

Once a task has an open recipe and an open benchmark, it tends to commoditize very quickly.

The real limits: where these tools fail or lie

If you read only the GitHub README or a headline like “near parity with top commercial models,” it’s easy to mentally round this to “AI can perfectly geolocate any photo.” That’s not what the data says.

GeoVista’s numbers are strong for the images it chose to evaluate:

- ~93% country, ~80% province/state, ~73% city accuracy

- ~53% of predictions within 3 km; median error ~2.35 km

But GeoBench explicitly filters out images that are either trivial (Eiffel Tower) or basically impossible to place. It’s evaluating “reasonably localizable” photos. Outside that curated set (suburban sameness, generic forests, indoor scenes), performance will drop.
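Distance-based numbers like “within 3 km” and “median error” are simple to compute yourself, which is what makes vendor claims checkable. The sketch below assumes great-circle (haversine) distance and a spherical Earth; whether GeoBench’s own tooling computes distance exactly this way is an assumption, and the coordinates are toy values, not benchmark data.

```python
import math
from statistics import median

EARTH_RADIUS_KM = 6371.0  # mean radius; a spherical approximation

def haversine_km(a, b):
    """Great-circle distance in km between two (lat, lon) pairs in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def summarize(predictions, truths, threshold_km=3.0):
    """Share of predictions within the threshold, plus the median error."""
    errors = [haversine_km(p, t) for p, t in zip(predictions, truths)]
    within = sum(e <= threshold_km for e in errors) / len(errors)
    return {"within_threshold": within, "median_error_km": median(errors)}

# Toy data: each prediction is offset north of the truth by a growing amount.
truths = [(41.40, 2.17), (48.85, 2.29), (51.50, -0.12), (40.69, -74.04)]
predictions = [(41.40, 2.17), (48.86, 2.29), (51.60, -0.12), (41.69, -74.04)]

report = summarize(predictions, truths)
print(report)
```

Two of the four toy predictions land within 3 km, yet one is off by more than 100 km. A single summary number hides that tail, which is why the curation of the test set matters as much as the headline score.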

Netryx has its own hard constraints:

- It only knows places covered by its Street View index. Some regions are missing or outdated; some countries limit or ban that coverage.

- It needs a radius or region to search efficiently; a truly global search at high resolution is computationally heavy.

- Perspective mismatches, occlusions, or weather/lighting can defeat the feature matching stage.
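The radius constraint is easy to appreciate with a toy calculation. The sketch below uses a simple latitude/longitude bounding box as a cheap prefilter; the index layout and function names are hypothetical, and the box ignores edge cases such as the poles and the antimeridian.

```python
import math

KM_PER_DEG_LAT = 111.19  # approximate length of one degree of latitude

def bounding_box(center, radius_km):
    """Lat/lon box that contains a circle of radius_km around center."""
    lat, lon = center
    dlat = radius_km / KM_PER_DEG_LAT
    # Degrees of longitude shrink with the cosine of the latitude.
    dlon = radius_km / (KM_PER_DEG_LAT * math.cos(math.radians(lat)))
    return (lat - dlat, lat + dlat, lon - dlon, lon + dlon)

def prefilter(index, center, radius_km):
    """Keep only index entries whose coordinates fall inside the box."""
    lat_min, lat_max, lon_min, lon_max = bounding_box(center, radius_km)
    return [
        e for e in index
        if lat_min <= e["lat"] <= lat_max and lon_min <= e["lon"] <= lon_max
    ]

# Toy index: a 100 x 100 grid of fake panoramas, 0.01 degrees apart.
index = [
    {"lat": 41.0 + i * 0.01, "lon": 2.0 + j * 0.01}
    for i in range(100)
    for j in range(100)
]

candidates = prefilter(index, center=(41.5, 2.5), radius_km=2.0)
print(len(index), "->", len(candidates))
```

A 2 km hint cuts this toy index from 10,000 entries to 15 before any descriptor is compared. Without such a hint, every query has to be scored against the whole index.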

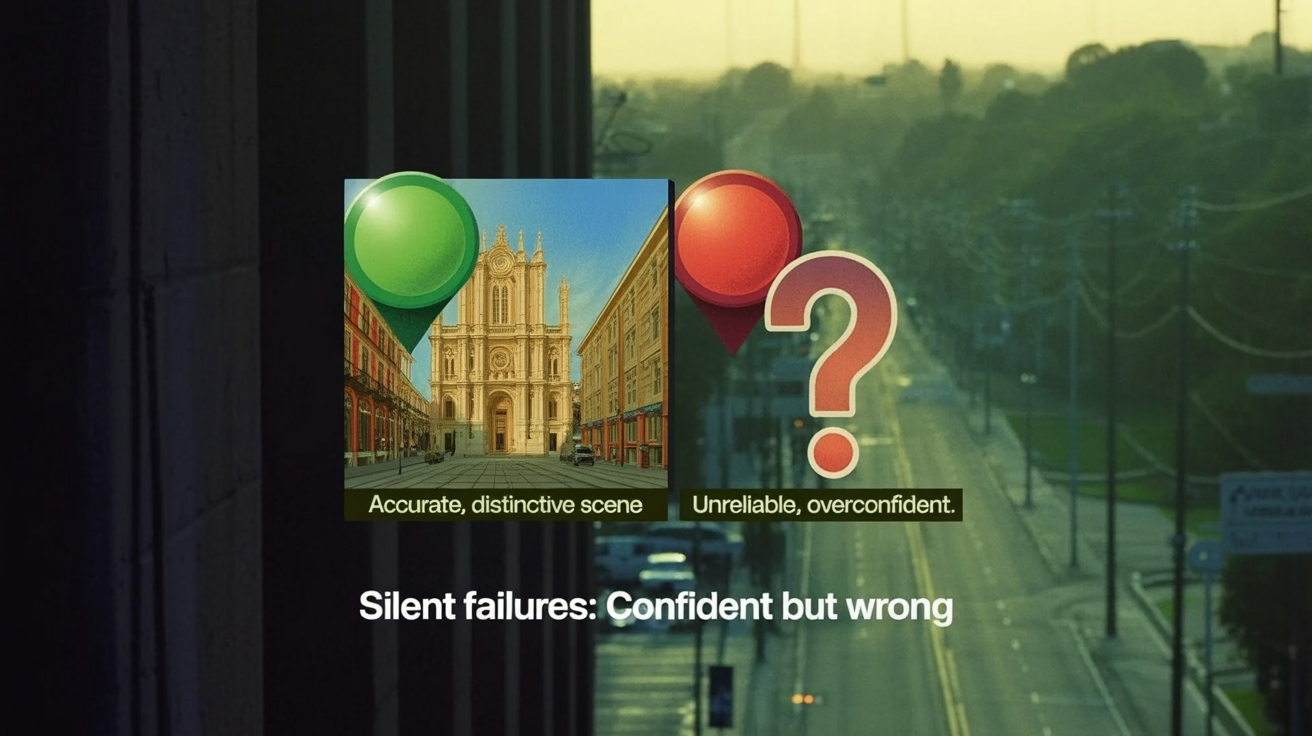

And then there is model behavior under uncertainty. Bellingcat’s tests of LLM geolocation tools show the problem cleanly: when models are unsure, they don’t say “I don’t know”; they guess, often confidently and incorrectly. All the evaluated models hallucinated at some point.

So we end up with a specific failure pattern:

The system is highly accurate when the scene is distinctive and within coverage, and completely unreliable in many other cases, but it rarely looks unreliable.

That is a very human-friendly error mode. It invites over-trust.

In a newsroom or police workflow, the risk is not “AI is wrong all the time.” The risk is “AI is right often enough that its wrong answers get believed.”

Why the shift to open source changes who’s at risk

The usual argument about openness here is straightforward: open code and benchmarks mean more scrutiny, reproducible evaluations, and a chance to audit how well photo geolocation actually works before power concentrates in a few closed vendors.

That’s all true. It’s also the less important shift.

The more consequential change is sociotechnical: who uses the capability by default.

When geolocation lived in:

- a few elite OSINT outfits, and

- closed SaaS with procurement hurdles and high per-seat pricing,

you got a very particular risk profile. Abuse was serious (stalkers, authoritarian states, corporate surveillance), but the number of actors and workflows that could casually plug “geolocate this” into their process was limited.

Open-source image geolocation changes that in three ways.

1. It lowers the organizational friction

Once Netryx or a GeoVista-style pipeline exists, any medium-sized institution can:

- Clone a repo

- Point it at their own imagery or Street View mirrors

- Wrap it behind a REST endpoint

No legal review of a vendor’s terms. No third-party data sharing to argue about. It’s just “another microservice.”
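How little glue that takes is worth seeing. The sketch below uses only Python’s standard library; `geolocate` is a hypothetical stub that returns a placeholder rather than calling any real model, and the `/geolocate` path and response fields are assumptions made for illustration.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

def geolocate(image_bytes):
    """Hypothetical stand-in for a local geolocation pipeline."""
    # Fixed placeholder so the sketch runs without any model installed.
    return {"lat": None, "lon": None, "confidence": 0.0, "note": "stub result"}

def handle_upload(body):
    """Request logic, kept separate from the server so it can be tested."""
    if not body:
        return 400, {"error": "empty request body"}
    return 200, geolocate(body)

class GeolocateHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/geolocate":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_upload(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)

def serve(port=8000):
    """Start the endpoint on localhost; blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), GeolocateHandler).serve_forever()
```

Calling `serve()` exposes the stub on localhost. Nothing in these few dozen lines records who asked, for which photo, or why, and that absence is the default unless someone decides to add it.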

The same dynamic has already played out with facial recognition and content moderation models. As soon as running the model locally becomes the default, the barrier to deploying it in bulk drops from “executive decision” to “a sprint ticket.”

2. It normalizes continuous use, not exceptional use

Geolocation used to be a case-by-case decision. A reporter or analyst would think: “We really need to know where this was; let’s invest the time.” That mental hurdle was a privacy guardrail.

Turn it into a one-line API and you get continuous use:

- Newsrooms auto-geolocating every UGC photo they ingest.

- Platforms pre-scoring images to detect protests, critical infrastructure, or “high-risk” locations.

- Corporate security teams linking employee or customer photos to precise office or home coordinates.

The technology didn’t get more malevolent. The default setting changed.

3. It shifts risk from “exotic threat” to “institutional ordinary”

For individuals, the headline risk moves from “some spy agency might find me” to “my employer, my insurer, my local government quietly runs this on every photo I post.”

This is the same pattern we saw with web tracking. Initially, cross-site tracking required serious engineering and bespoke deals. Once open-source trackers and default analytics scripts appeared, everyone had the capability, and the harm wasn’t a few bad actors; it was pervasive, normalized surveillance.

Open-source image geolocation is on track to follow that curve unless we consciously push back.

That’s why the accountability question shifts. It’s not just “can we verify GeoVista’s GeoBench scores?” It’s “what data protection rules, internal governance, or social norms will we impose on ourselves now that any developer can drop geolocation into their stack?”

And as with other AI systems, the infrastructure for that governance will lag. Institutions will adopt the capability long before they adopt robust AI accountability frameworks specific to location inference.

Key Takeaways

- Open-source image geolocation moves geolocation from a specialist OSINT craft and closed SaaS market into a commodity capability any developer can run locally.

- Netryx shows how far you can get by chaining existing vision models into a practical retrieval+verification pipeline; GeoVista demonstrates agentic, web-augmented reasoning that nears commercial performance on curated benchmarks.

- These tools are highly accurate on distinctive, well-covered scenes, but still fail silently and hallucinate under uncertainty, especially off-benchmark or outside Street View coverage.

- The biggest shift is sociotechnical: geolocation becomes a routine part of institutional workflows, in newsrooms, law enforcement, and corporations, rather than an exceptional tool used sparingly.

- The risk curve bends away from a few powerful vendors toward many ordinary organizations quietly inferring where your photos were taken.

Further Reading

- Netryx: Open-Source Next-Gen Street-Level Geolocation (GitHub). Repository and README detailing Netryx’s local-first pipeline using CosPlace, ALIKED/DISK and LightGlue.

- GeoVista: Web-Augmented Agentic Visual Reasoning for Geolocalization (arXiv). Research paper introducing GeoVista, its agentic loop, and the GeoBench benchmark and metrics.

- GeoVista (GitHub). Code, weights, and evaluation tooling to reproduce GeoVista experiments on GeoBench.

- GeoVista Brings Open-Source AI Geolocation to Near-Parity With Top Commercial Models (The Decoder). Technical write-up summarizing GeoVista’s performance and highlighting privacy and misuse concerns.

- Have LLMs Finally Mastered Geolocation? (Bellingcat). Independent testing showing both progress and persistent hallucinations in LLM-based geolocation.

Open-sourcing the recipes for geolocation doesn’t just tell us how well these systems work; it decides who gets to make “where was this taken?” a background question, and how quietly that answer gets folded into everyday decisions about our lives.