The screenshot is mundane: a VS Code sidebar, a drop‑down of models, and in one corner a tiny string that shouldn’t be there, kimi-2.5. A few Redditors zoom in, squint, and suddenly Cursor Composer 2 isn’t just “frontier‑level at coding” anymore; it’s “apparently just Kimi K2.5 with RL fine‑tuning.”

Cursor Composer 2 gets accused of being a reskinned Chinese model, Moonshot AI is dragged into the spotlight, and everyone starts asking: is this theft?

That’s the wrong primary question. The more interesting story is how easy it is, in 2026, to build a billion‑dollar product on top of opaque models, and how little anyone can actually prove about what’s running under the hood.

TL;DR

- There is no verified public evidence that Cursor Composer 2 is literally Kimi K2.5, only community forensics and screenshots.

- The incident is a stress test of the AI supply chain: convoluted distillation, weak provenance, and perverse incentives for “wrapper” products.

- The real harm isn’t one possible licensing violation, it’s eroding trust in developer tools and blurring where the product’s real value sits.

Cursor Composer 2: what the companies say

Cursor’s official story is crisp and tidy.

In their “Introducing Composer 2” post, they say the model’s leap comes from “our first continued pretraining run.” From that base, they train on “long‑horizon coding tasks through reinforcement learning.” They price it confidently: $0.50 per million input tokens, $2.50 per million output, frontier‑ish money for a “frontier‑level” coding model.

Moonshot’s Kimi K2.5 story is, on the surface, not that different.

Their Hugging Face card explains that Kimi K2.5 builds on a base model with continued pretraining, supervised fine‑tuning, and RL. InfoQ’s coverage emphasizes multi‑step reasoning and agentic behavior. Fireworks.ai happily offers Kimi K2.5 hosting and full‑parameter RL fine‑tuning for customers.

Same ingredients: continued pretraining, SFT, RL, coding tasks.

What you won’t find in any official blog: Cursor naming Kimi K2.5 as a base, or Moonshot acknowledging Composer 2 at all. And despite Anthropic’s separate allegation that Moonshot itself ran industrial‑scale distillation of Claude, no reputable outlet has published “Composer 2 = Kimi K2.5” as a confirmed fact.

So why does the Reddit theory feel so plausible?

Why the Kimi K2.5 allegation remains unverified

Reddit’s case rests on three kinds of evidence, all squishy:

- Client traces and metadata.

Users post screenshots where internal identifiers likekimi-2.5show up in Cursor contexts or logs. If accurate, this at least suggests routing through Kimi endpoints or weights at some point. - Output fingerprints.

People run the same prompts through Composer 2 and Kimi K2.5 and claim the responses are suspiciously similar, same weird variable names, same formatting tics, same failure modes on edge cases. - Deleted social posts.

Several commenters say Moonshot briefly posted (then deleted) statements implying Cursor used Kimi without proper licensing. These are second‑hand claims. The supposed originals are gone.

None of this would pass as evidence in a courtroom or a serious investigation. There’s no signed admission, no leaked contract, no public DMCA, no third‑party audit tying Composer 2’s weights to Kimi K2.5.

And yet, if you’ve followed the AI world for more than five minutes, you probably feel an uncomfortable intuition: this could totally be happening.

The allegation lands not because it’s proven, but because structurally it fits the way the AI supply chain works right now, open weights, distillation, and opaque hosted endpoints braided together with marketing.

That’s the real problem.

This isn’t just about copying, it’s about provenance and incentives

Imagine you’re Cursor.

You sell a coding environment, not a research lab. Your users aren’t reading model cards; they just know Composer 2 “went from meh to kind of amazing overnight.” Your investors want that graph. Your competitors ship every week.

Out in the world, the menu is overflowing:

- Open‑weight models like Kimi K2.5, DeepSeek, Llama, all improving fast.

- Hosted services that will fine‑tune those models for you, full‑parameter RL included.

- A licensing tangle where base models might themselves be distilled from proprietary systems, as Anthropic claims about Moonshot and others.

If you’re rational, and slightly cynical, you realize:

- Most users can’t tell models apart.

They experience “this feels like Claude” or “this is worse than GPT‑4o,” not “this is clearly K2.5‑derived.” That’s especially true in a narrow domain like code. - Auditing tools are weak.

There is no standardized “model VIN number.” Distilled models blur signatures. The best attribution techniques (like Anthropic’s) rely on private telemetry and deep forensics, not something a random developer can run on a weekend. - Marketing wants proprietary stories.

“We wrapped Kimi K2.5 nicely” doesn’t sell at $20/month. “We built Composer 2 from scratch with our secret sauce RL” does.

The incentive, for any “AI wrapper” product, is to push as much value as possible into the story and the UX, and to keep the lineage of the underlying weights as foggy as the lawyers will let you get away with.

This is exactly what we argued in The myth of AI wrappers and where value hides: the layers closest to the user capture the brand and the revenue, even when most of the technical lift lives beneath them.

So yes, maybe Composer 2 really is just Cursor’s own continued pretraining run. Maybe it’s Kimi with RL sprinkles. Maybe it’s something in between.

The point is: from the outside, you can’t tell. And that opacity is starting to rot trust in developer tools.

Why model provenance suddenly matters for dev tools

Two years ago, “what’s the base model?” was a trivia question.

Now it touches everything you care about as a developer:

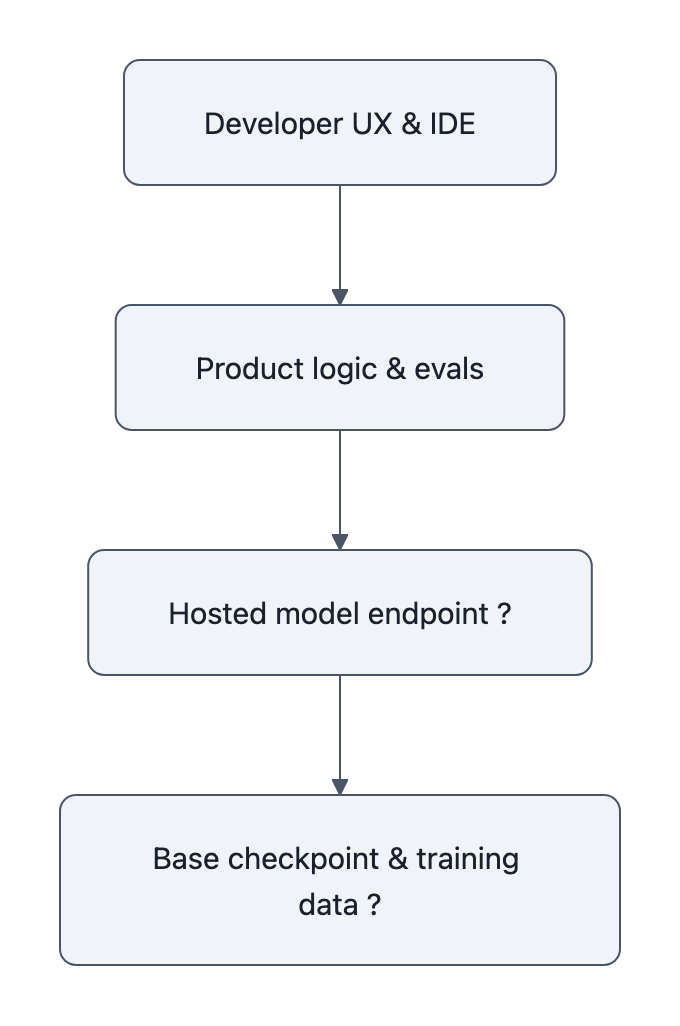

- Pricing reality.

If Composer 2 is secretly Kimi K2.5 routed through a host, you’re paying Cursor’s margin on top of model costs, and possibly on top of someone else’s proprietary lineage or licensing risk. That’s not necessarily wrong, but it is a different value proposition than “our own model.” - Reliability and regression.

If your tool swaps underlying checkpoints without telling you, your team’s finely‑tuned workflows can quietly drift. A refactor script that was safe last month starts hallucinating migrations this month. Was that “improvement,” or just a stealth model swap? - Ethics and legal risk.

Anthropic’s report on distillation attacks didn’t just call out Moonshot and peers for fun. It framed a future where downstream products may unknowingly depend on models built from unauthorized data or contractual violations. That risk propagates into your org whether you like it or not. - Attribution and debugging.

When something goes wrong, security bug, license‑violating snippet, subtle data leak, you need to know: did this come from Cursor’s RL setup? From Kimi’s pretraining? From Claude’s distillation? Without provenance, you’re stuck guessing.

In other words, model lineage has become part of the API contract between dev tools and developers.

We’re just pretending it’s optional.

What developers and users should watch (and do) next

The Composer 2 flap won’t be the last time a “proprietary” model turns out to be mostly someone else’s work. The practical question is: how do you live sanely in that world?

A few concrete habits help.

1. Ask for provenance like you’d ask for uptime

When a tool is central to your workflow, “what’s the base model?” should be as routine as “what’s your SLA?”

You don’t need every training detail. You do need:

- Which family (Kimi, Claude, Llama, etc.) they’re built on, if any.

- What they control vs. what a third‑party host controls.

- How often they change the underlying checkpoint, and how you’ll be notified.

If a vendor refuses to answer at that level of granularity, they’re telling you something about their relationship to their own stack.

2. Treat sudden leaps with productive suspicion

Overnight 2x quality jumps in AI are rare. When they happen, you should wonder:

Did they discover a new algorithm, or did they swap in a different base?

You can’t run Anthropic‑grade forensics, but you can:

- Run a stable benchmark suite across versions.

- Compare failure modes, does the model now fail in exactly the way another public model does?

- Watch for telltale quirks in style, formatting, or error messages.

This is the same mindset you’d bring to a cloud provider quietly changing hardware under your instances.

3. Separate “model value” from “product value”

Composer 2, even if it were pure Kimi under the hood, might still be worth paying for.

Why? Because product value often lives in:

- How the IDE integrates suggestions into your workflow.

- How tools orchestrate agents, tests, and refactors.

- The evaluation harness that decides when to not apply a change.

You can see this in Anthropic’s own work, Claude coding its future isn’t just about raw model power, but about how they wrap it with agents and guardrails.

But for that argument to land, vendors have to be honest about the split: “We’re standing on open weights, and the value you’re paying for is everything we’ve built on top.”

Not “we invented Composer 2 from the ground up, please don’t look too closely at the headers.”

4. Reward transparency long before regulation forces it

Regulators will eventually insist on something like model VIN numbers and provenance disclosures.

Until then, you have two levers:

- Choose vendors who publish model cards, changelogs, and lineage diagrams, even when they’re messy.

- Call out opacity in public channels, not with pile‑on outrage, but with calm, repeated questions: “What’s the base? How often does it change? What’s your policy on distillation?”

Markets don’t automatically favor the most ethical players. But engineers have more influence than they think when they make trustworthiness part of their buying criteria.

Key Takeaways

- There is no confirmed public proof that Cursor Composer 2 is literally Kimi K2.5; the allegation is driven by community screenshots and side‑by‑side testing, not verified audits.

- The episode exposes how opaque model provenance and weak auditing make it trivial for products to present repackaged or distilled models as proprietary breakthroughs.

- For developer tooling, model lineage is now part of the API contract, affecting pricing, reliability, and legal risk, not just trivia for researchers.

- Developers should demand basic provenance disclosures, benchmark model behavior across versions, and distinguish between the value of the base model and the value of the product wrapped around it.

- The real long‑term danger isn’t one act of alleged copying, it’s cumulative erosion of trust in the tools we’re increasingly letting rewrite our codebases.

Further Reading

- Introducing Composer 2, Cursor, Cursor’s official description of Composer 2’s training recipe and pricing.

- moonshotai / Kimi-K2.5, Hugging Face, Technical details, benchmarks, and licensing for Kimi K2.5.

- Moonshot releases Kimi K2.5, InfoQ, Overview of K2.5’s capabilities and architecture.

- Detecting and preventing distillation attacks, Anthropic, Anthropic’s account of large‑scale distillation campaigns and their attribution methods.

- Reddit discussion: “Cursor’s ‘Composer 2’ model is apparently just Kimi K2.5”, The origin of the Composer 2 = K2.5 allegation and community forensics (unverified).

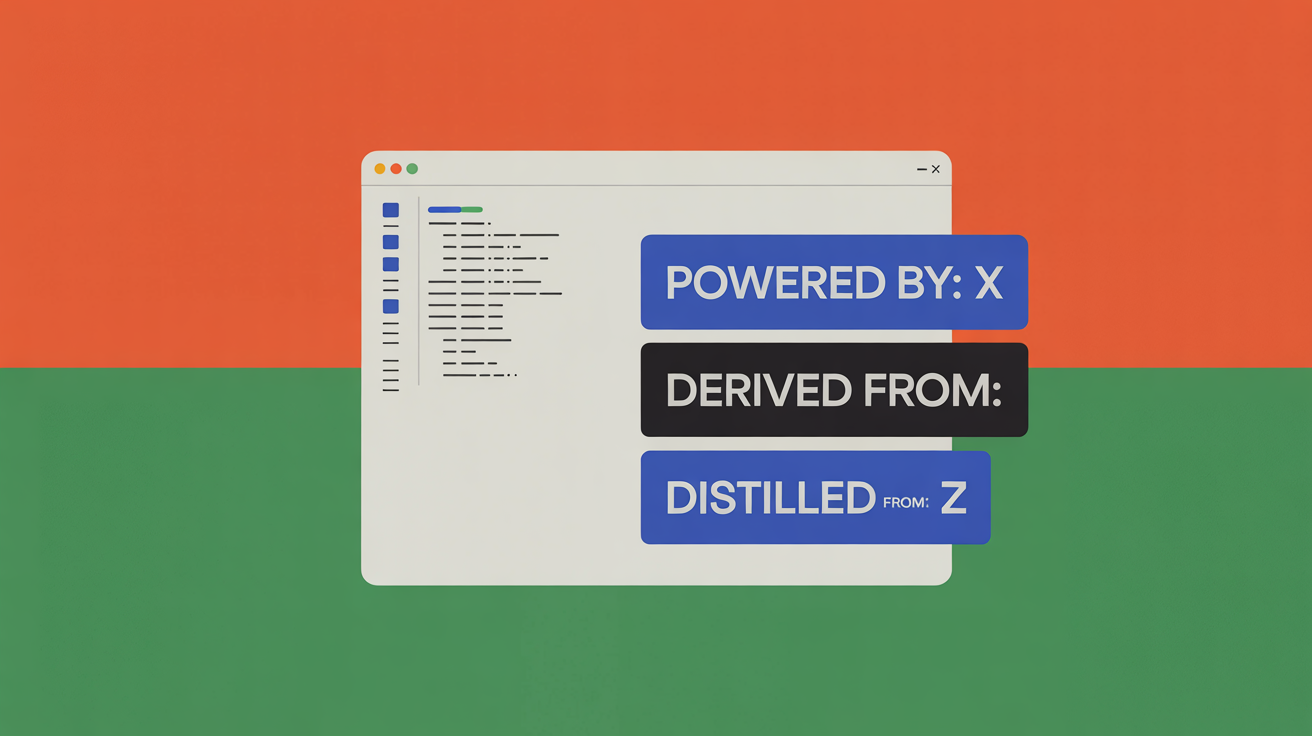

In a year or two, it will likely be normal to see a small badge in your editor: “Powered by: X, derived from: Y, distilled from: Z.” Until that future arrives, the Composer 2 story is a reminder that in AI, what you don’t see in the sidebar may matter more than the model name you do.