A red plastic robot rolls past a taco truck in Los Angeles, weaving around a couple arguing over parking. It pauses at the corner, camera pods swiveling, then glides straight through a cluster of pedestrians like it’s walked this block a hundred times.

It hasn’t.

The trick lives somewhere else entirely, inside Pokémon Go data, stitched together from millions of tiny, voluntary acts: a student circling a mural to get “a better scan,” a dad filming the playground PokéStop while his kid spins the disc, an Ingress diehard dutifully capturing every church door in town.

TL;DR

- Niantic didn’t “accidentally” train robots with Pokémon Go data; it deliberately built a pedestrian‑eye map and is now selling it to robotics companies.

- The interesting question isn’t whether players “knew”; it’s how a private game studio ended up authoring quasi‑public mapping infrastructure with almost no civic oversight.

- If robots and AR services are running on player‑contributed scans, we need infrastructure‑level transparency and consent, not just another checkbox in an app.

Pokémon Go data didn’t accidentally train robots; players built a pedestrian map

Niantic now describes a “Large Geospatial Model” (LGM, its own term) made of billions of geolocated images and “neural maps” that power its Visual Positioning System, or VPS.

In plainer language: it’s a street‑level memory palace, built from user phones, that lets machines recognize exactly where they are, down to the centimeter, in places GPS is fuzzy.

The crucial detail is where this data comes from.

Niantic says it has about 10 million scanned locations around the world, with over a million “activated” for VPS, and “about 1 million fresh scans each week” flowing in from Pokémon Go, Ingress, and scanning apps like Scaniverse. Those scans aren’t just pictures; they come with the phone’s pose, depth cues, and all the tiny visual anchors (bolts on a railing, cracks in the sidewalk) that you and I mostly ignore.

This is not Street View for cars. Niantic brags, correctly, that its data is taken from a pedestrian perspective and includes places cars never see: alleys, parks, plazas, garden paths, weird shortcuts behind supermarkets.

What looks to the player like a quick side quest (“scan this PokéStop for a bonus”) is, structurally, a request to go stand in exactly the gap in the map and patch the hole.

The map isn’t a byproduct. It’s the point.

What Niantic says it collected (and what those numbers actually mean)

Niantic is explicit about one thing: “This scanning feature is completely optional… Merely walking around playing our games does not train an AI model.” That’s from their own blog explaining the Large Geospatial Model.

Two important consequences fall out of that sentence.

First, the company is drawing a line between passive play and active contribution. To generate VPS data, you have to:

- Choose to scan a location

- Point your camera around it, often following an on‑screen grid

- Upload that video to Niantic’s servers

From a consent lawyer’s perspective, this is gold. There’s a clear action, explicit language that the scan is being sent to Niantic, and an opt‑in flow. Compared to, say, Google’s old habit of having Street View cars collect data from nearby Wi‑Fi networks on the sly, this looks almost quaint.

Second, the sheer volume (those 10 million locations and 1 million weekly scans) tells you that “optional” doesn’t mean “rare.” It means the incentives were tuned well. Stick enough XP and loot behind the “scan” button, and a slice of players will happily film every statue they walk past.

We should be honest about another thing too: these numbers are self‑reported. We don’t have an external audit of how many locations are scanned, what’s in those scans, or how long the data sticks around. Niantic has answered privacy questions before: a 2016 Senate inquiry pushed them to explain what Pokémon Go collected and how kids’ data was handled. But the LGM and VPS live in a newer, foggier category: not exactly “content,” not exactly “telemetry,” more like invisible infrastructure.

This is the same gray zone we wrote about with automated labeling bias: a quiet pipeline where individual clicks and scans turn into high‑stakes systems, without clear accountability.

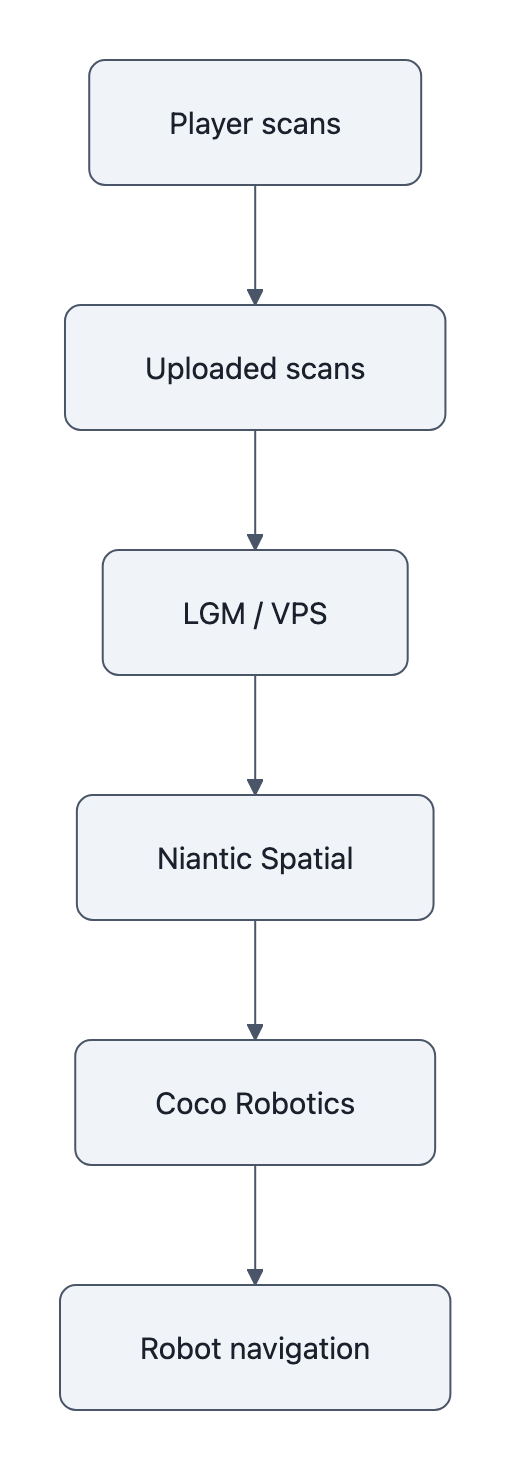

How those scans turn into centimeter-scale navigation for delivery robots

The jump from “PokéStop scan” to “taco‑delivery robot” is easier to see if you imagine how you navigate your own neighborhood.

You don’t look at a blue dot on a flat GPS map. You orient by visual anchors: the crooked tree, the faded mural, the trash can with the sticker that always makes you laugh.

Niantic’s VPS does the same thing.

The company’s geospatial blog describes a stack of “more than 50 million neural networks” and “over 150 trillion parameters” trained to match the camera’s live view against its library of scans. Feed the system a frame from your phone, or a robot’s camera, and it replies: you’re here, 1.3 meters from this bench, facing northeast. That’s centimeter‑scale localization.
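The matching step can be sketched in a few lines of Python. This is a toy illustration only, not Niantic's method: a real VPS relies on learned neural features and geometric pose estimation, whereas this sketch simply retrieves the stored scan whose feature vector is most similar to the query frame. The `Scan` class, the three-number descriptors, and the poses are all hypothetical values invented for the example.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Scan:
    """One stored scan: a made-up feature vector plus the pose it was captured at."""
    descriptor: List[float]
    x_m: float          # east offset in meters within a local frame (hypothetical)
    y_m: float          # north offset in meters (hypothetical)
    heading_deg: float  # compass heading: 0 = north, 90 = east


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(p * q for p, q in zip(a, b))
    norm = math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b))
    return dot / norm if norm else 0.0


def localize(query: List[float], library: List[Scan]) -> Scan:
    """Return the stored scan whose descriptor best matches the query frame."""
    return max(library, key=lambda s: cosine_similarity(query, s.descriptor))


# A tiny invented library of scans with known poses.
library = [
    Scan([0.9, 0.1, 0.0], x_m=0.0, y_m=0.0, heading_deg=45.0),     # near the bench
    Scan([0.1, 0.8, 0.3], x_m=12.0, y_m=3.5, heading_deg=180.0),   # by the mural
    Scan([0.0, 0.2, 0.9], x_m=25.0, y_m=-4.0, heading_deg=270.0),  # at the corner
]

# A "live frame" that looks most like the first scan.
best = localize([0.85, 0.2, 0.05], library)
print(best.x_m, best.y_m, best.heading_deg)
```

The design point is that the answer is only as good as the library: a frame from a street nobody scanned has nothing nearby to match against, which is why coverage matters so much in what follows.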

Now drop this into a robot.

Niantic Spatial, the spin‑out that sells this tech, has a partnership with Coco Robotics, whose bright robots are starting to deliver food in cities like LA and Chicago. Coco’s latest “Coco 2” model, Semafor reports, goes fully autonomous on certain routes and uses Niantic’s visual positioning to navigate dense city blocks where GPS jitters, skyscrapers block satellites, and Wi‑Fi signals are chaotic.

The point isn’t just “robots use cameras.” It’s that the map those cameras rely on comes from players, moving at human walking speed, looking at human points of interest, over human‑only ground.

The robot isn’t just on your sidewalk. It’s walking through the negative image of your community’s play.

The real issue isn’t secret training; it’s private companies authoring public space

Most of the online reaction landed on a familiar axis: did players “know” their Pokémon Go data would power AI? Were they “unpaid labor”?

That frame misses the weirder, more important thing.

What Niantic has built, and what Coco is now driving around on, looks a lot like civic infrastructure. It’s a high‑resolution, constantly updated, pedestrian‑scale map of public space.

If a city government commissioned this, we’d have committees, procurement rules, public comment periods, accessibility audits, maybe a heated town hall about whether the robot should be allowed on the bike path.

Instead, the map was authored by:

- A for‑profit company

- Through a game

- On top of spaces no single person owns, but everyone uses

And now that map is being productized, with potential customers ranging from delivery startups to, by a Niantic executive’s own admission to 404 Media, governments and militaries. (“Adding amplitude to war,” he said, is “definitely an issue,” which is the kind of sentence you only get when the possibility is real enough to worry about.)

We’re used to thinking about AI accountability in terms of outputs: a model mislabeled a satellite photo, a strike went wrong, someone has to answer for it. (See: the Iran strike case in our AI accountability piece.)

Here, the accountability question lives underneath the outputs, in the map itself. Who gets to decide:

- Which places are richly scanned and constantly updated?

- Which neighborhoods remain blank spots robots can’t reliably enter?

- What happens when a community doesn’t want its plazas and playgrounds turned into machine‑readable infrastructure at all?

Those are zoning‑board questions, not app‑settings questions. But they’re being answered in Terms of Service updates and B2B product brochures.

Why this matters: consent, civic infrastructure, and what to do next

So what should change?

Not “ban Pokémon Go scans” or “never train robots on public spaces.” That would be cosmetic: privacy theater aimed at individual players while the structural incentives remain untouched.

The more honest move is to treat geospatial AI maps as infrastructure, and expect infrastructure‑grade norms:

- Public registers of private maps. If a company operates a city‑scale VPS or LGM, there should be a public description of its coverage, update cadence, and major customers. You shouldn’t need to read a robotics press release to learn that your daily walk is now part of a commercial robot route.

- Civic influence over coverage. Cities negotiate where fiber lines go; they should be able to negotiate where dense, persistent scanning is appropriate. Maybe schools and shelters are low‑scan zones by default. Maybe historically underserved neighborhoods get mapping guarantees, so their streets aren’t algorithmic blind spots.

- Player‑level transparency that’s honest about downstream use. “Scan this PokéStop to help our AR map” undersells the story when that same scan may eventually guide an autonomous robot or a police drone. If the uses are open‑ended, the disclosure should say that, plainly.

That still leaves a big philosophical question hanging in the air: when you walk through public space with a camera, who owns the ghost of that walk, meaning the trail of pixels and pose vectors that make up a point in Niantic’s LGM?

Right now, the answer is “whoever wrote the app you liked enough to install.”

I keep thinking about that little red robot rolling past the taco truck. It doesn’t know anything about Pokémon, or gyms, or incense lures. But every smooth turn it makes is a faint echo of someone else’s footsteps, someone who spun a PokéStop, watched the scan grid dance on their screen, and thought they were just playing a game.

Key Takeaways

- Pokémon Go data wasn’t a side effect; Niantic explicitly used player-contributed scans to build a pedestrian‑scale geospatial model now sold as navigation infrastructure.

- Niantic’s own numbers (10 million scanned locations, roughly 1 million new scans each week) show “optional” scanning can still produce massive, dense coverage.

- Robots like Coco 2 use Niantic’s VPS for centimeter-level localization, meaning their street behavior is literally anchored to how and where players once held up their phones.

- The ethical problem is less “unpaid labor” and more that private platforms are authoring de facto public mapping infrastructure with minimal civic input.

- We need infrastructure‑style norms (public registries, civic influence over coverage, and blunt disclosure of downstream uses) for future geospatial AI, not just better app permissions.

Further Reading

- Building a Large Geospatial Model to Achieve Spatial Intelligence (Niantic): Niantic’s own description of its Large Geospatial Model, VPS, and player-contributed scanning.

- Niantic is building a ‘geospatial’ AI model based on Pokémon Go player data (The Verge): Context on how Pokémon Go data feeds Niantic’s spatial AI ambitions.

- Niantic uses Pokémon Go player data to build AI navigation system (Ars Technica): Reporting on the scope of Niantic’s pedestrian-perspective dataset and its uses.

- Coco Robotics partnership (Niantic Spatial blog): Company announcement detailing how VPS integrates into Coco’s delivery robots.

- Coco’s new delivery robot is hitting the streets (Semafor): On-the-ground look at Coco 2 and its deployment plans with Niantic’s tech.