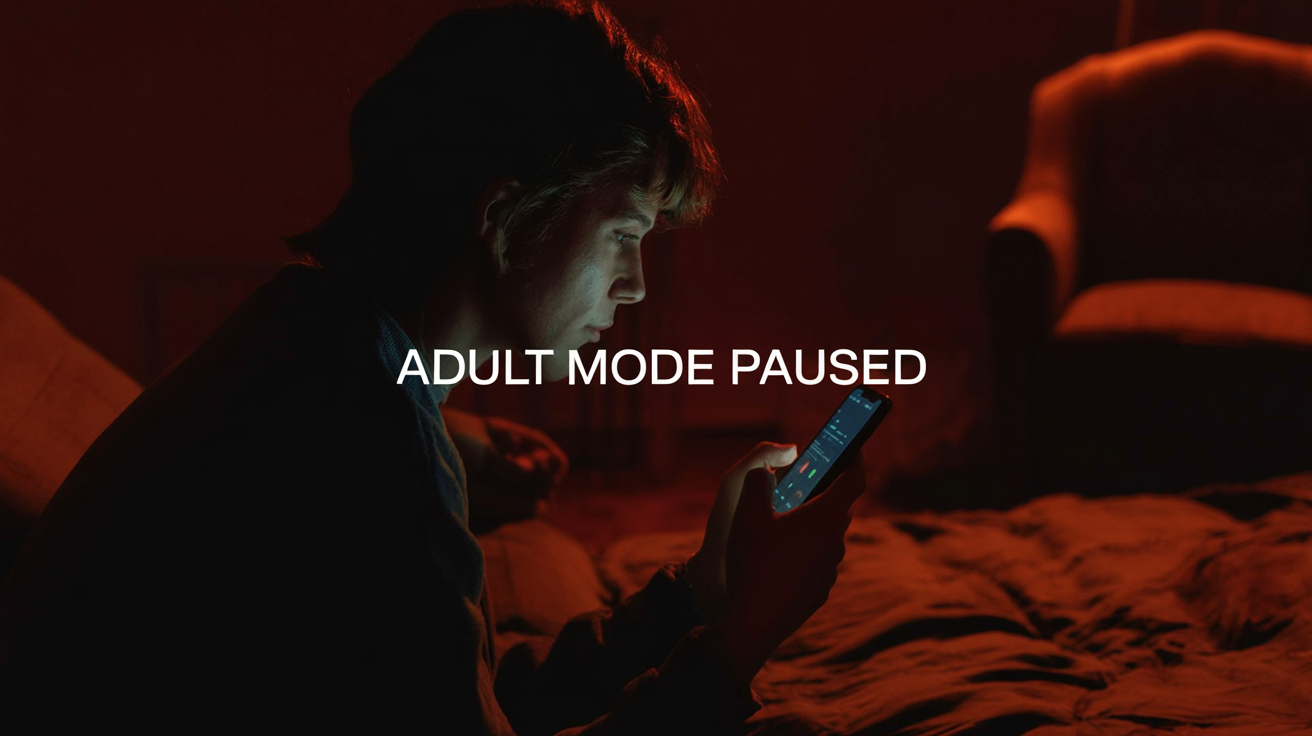

The imagined user for ChatGPT adult mode is not a headline, but a room: one person, one screen, the soft blue light of a tab they probably wouldn’t open at work.

Sam Altman promised those people “erotica for verified adults” back in October 2025, as part of a plan to “treat adult users like adults.” Now ChatGPT adult mode has been “pushed out” again, with no new date; OpenAI told Axios it needs to focus on other priorities and that “getting the experience right will take more time.”

This looks like a story about sex and prudishness. It isn’t. It’s a stress test that revealed something deeper: the current generation of big, general‑purpose AI platforms doesn’t actually know how to hand adults real autonomy without breaking its own safety, liability, and trust machinery.

TL;DR

- ChatGPT adult mode was supposed to allow explicit erotic text for “verified adults,” then quietly slipped from December 2025 to Q1 2026 to… sometime later.

- The pause isn’t really about erotic content; it exposes how badly large platforms handle age verification, moderation at scale, and emotional risk in intimate use cases.

- That gap is already being filled by more permissive players like Venice and xAI’s Grok, setting up a two‑tier AI world where responsibility clusters with big brands and risk migrates elsewhere.

What OpenAI Promised With ChatGPT Adult Mode

The original promise was crisp enough to fit in a keynote slide.

Altman told reporters that once age‑gating rolled out, ChatGPT would “allow even more, like erotica for verified adults.” The Guardian and TechCrunch described a text‑only “adult mode”: not AI porn videos, just words. Romantic stories, explicit roleplay, the sort of thing users had been coaxing out through loopholes anyway.

The idea sounded almost boringly reasonable. Enforce age checks, loosen the filter, keep everything consensual and legal. A grown‑up switch in a very grown‑up product.

But embedded in that neat phrase “verified adults” is the part where the story starts to wobble.

Who counts as verified in a world where your “user” is a browser tab and an email address anyone can make in under a minute?

Why OpenAI Pushed The Launch: Safety, Age‑Gating, and Liability

On paper, the delay is simple: OpenAI told Axios it’s “pushing out the launch of adult mode” to focus on higher‑priority work like intelligence and personalization. No date. Just “will take more time.”

Off paper, the reporting paints a thornier picture.

Accounts summarizing Wall Street Journal reporting say internal advisers worried about three things:

- Minors slipping through whatever age‑prediction or document‑check fences OpenAI erected.

- Users developing unhealthy emotional dependence on an erotic persona that never sleeps or says “no.”

- Moderation systems missing non‑consensual or illegal sexual content in a fast, multilingual firehose of prompts.

None of those are unique to sex. They’re all amplified versions of problems the company already has.

If you can’t reliably tell whether a user is 15 or 35, you can’t safely serve “adult mode.” But you also can’t safely serve gambling‑adjacent advice, or depression chats, or anything else where the law and ethics change with the birthdate.

If your moderation stack sometimes lets through jailbreaks about self‑harm or hate speech, it’s going to miss edge cases in erotica, too. Only now the stakes include law enforcement and regulators who do not find “oops, the model hallucinated” very funny.

And if your core product is already nudging people toward dependency (the “hooked on help” pattern we’ve written about before in our coverage of AI dependency and harms), erotic intimacy isn’t just another feature. It’s a force multiplier.

Adult mode didn’t introduce a new category of risk. It concentrated several existing ones into a single, very visible switch.

This Pause Is A Structural Moment: What Big AI Can’t Admit

The obvious narrative is: OpenAI chickened out. The less obvious one is: OpenAI finally ran into a product it couldn’t safety‑wrap with its usual tools.

Big AI platforms live on three pillars:

- Scale: the same model and the same app serving hundreds of millions of users.

- Brand safety: nothing in the core experience that scares advertisers, partners, or politicians.

- One‑size policy: a few global knobs for “family‑friendly” versus “research preview” versus “enterprise.”

Erotic chat breaks all three.

First, it demands hard segmentation. An AI that writes copy for a bank and sexts you in the next tab is not just a PR risk; it’s a discovery fight waiting to happen. One HR screenshot, one mis‑routed prompt, and you’ve got a front‑page story.

Second, it makes content classification binary in a system that prefers gradients. A joke about “hot takes” can be misread and a racy metaphor can be flagged; you either under‑block (risk) or over‑block (user rage). There is very little middle ground.

Third, it exposes the gap between “we treat adults like adults” and “we treat regulators like they might subpoena us.” The more OpenAI leans into being a general cognition layer (your therapist, tutor, assistant, friend), the harder it is to say “but this one persona is totally separate, don’t worry.”

In that sense, the ChatGPT adult mode delay rhymes with the broader user backlash over ChatGPT product changes. OpenAI keeps promising your‑life‑in‑a‑box, but every time the edges get weird or messy, it has to yank features back to preserve the illusion of one safe, clean, universal assistant.

The pause is not prudishness. It’s a confession: at this scale, with this architecture, OpenAI can’t convincingly be both Walmart and a sex shop in the same storefront.

Who Will Fill The Gap (And What That Means For Users)

Meanwhile, other builders didn’t get the memo about waiting.

Venice.ai advertises “Private AI for Unlimited Creative Freedom” and “Unrestricted Intelligence.” Its homepage promises that prompts are “100% private,” staying on your device. The model list includes options like “Venice Uncensored 1.1” alongside GPT‑5.2 and Grok. On Reddit, people asking where to find “sexy chat” are already being told to go to Grok or Venice, because “they don’t sell porn at Walmart.”

That’s the two‑tier system taking shape:

- Tier one: the Walmarts (OpenAI, Anthropic, maybe Google), all pushing family‑safe general assistants with thick legal padding.

- Tier two: the Venice/Grok world of narrower brands, “unrestricted” slogans, and looser safety nets, often draped in the language of privacy and freedom.

Responsibility and scrutiny cling to tier one. Experimental, intimate, and legally spicy use cases drift to tier two.

For users, the paradox is sharp:

If you stay with a company big enough to be hauled in front of Congress, you won’t get ChatGPT adult mode anytime soon. You might get oblique allusions and fade‑to‑black prose. You’ll also get logs, audits, and a moderation team whose risk tolerance is calibrated somewhere between “think of the children” and “our market cap.”

If you go to the “unrestricted” vendors, you may indeed find what you’re looking for: explicit roleplay, romantic companions, maybe even video. But you’re also betting on a very small company’s claims about privacy, security, and model behavior. If something goes wrong (harassment, leaks, blackmail), you don’t have much leverage beyond closing the tab.

In other words, the people seeking AI intimacy will not disappear because ChatGPT adult mode is delayed. They will simply walk into quieter, stranger rooms on the internet, where there are fewer adults in the legal sense, and even fewer in the product‑management sense.

Key Takeaways

- ChatGPT adult mode was never just about erotica; it collided with unresolved problems in age verification, moderation, and emotional risk.

- OpenAI’s delay shows that at massive scale, you can’t bolt on “adult” autonomy without re‑architecting how your platform handles identity and liability.

- The pause accelerates a split: big, sanitized assistants on one side; smaller, “unrestricted” AI vendors on the other, picking up the most intimate use cases.

- Users chasing erotic or companion‑style chat will keep going, but they’ll be pushed toward services with weaker oversight and murkier privacy guarantees.

- The real question isn’t whether AI should be sexy; it’s who we’re comfortable trusting with the most private conversations we’ve ever had with a machine.

Further Reading

- OpenAI delays ChatGPT ‘adult mode’ (Axios): on‑record OpenAI statement about pushing out adult mode to focus on other priorities.

- OpenAI delays ChatGPT’s ‘adult mode’ again (TechCrunch): timeline of Altman’s promise, the initial target, and subsequent delays.

- OpenAI will allow verified adults to use ChatGPT to generate erotic content (The Guardian): coverage of the original “treat adults like adults” announcement.

- AI erotica debates background (AP News): context on mainstream AI chatbots and explicit content policies.

- “Unrestricted Intelligence” (Venice.ai): example of a smaller vendor positioning itself as private and permissive, with uncensored models.

In that quiet room with one person and one screen, the desire for an AI that doesn’t flinch is not going away. The only real question is whether the most powerful systems we’re building will ever be allowed to sit there, or whether intimacy with machines is destined to live in the back alleys of the AI world, forever someone else’s problem.