A Tomahawk‑class missile hits next to a girls’ school in Minab. Open‑source sleuths geolocate the blast; U.S. officials quietly tell Reuters and CBS that American forces were “likely” responsible and that dated intelligence may have mis‑labeled the area as a military site. Within days, a local paper runs a piece suggesting an “AI error” and a Claude‑based system in the targeting stack.

If your first thought was “ah, so the AI did it,” you’ve already lost the plot on AI accountability.

TL;DR

- Blaming an “AI error” for Minab is politically convenient because it turns a systemic failure into a software bug.

- The likely failure points are boring: stale data, rushed integration, and opaque chains of human sign‑off, not a rogue model.

- Real AI accountability means enforceable decision provenance: immutable audit logs, timestamped intel lineage, and non‑waivable human sign‑off baked into procurement.

AI accountability: why “AI error” is a dodge

The emerging narrative is tidy: the Pentagon used Anthropic’s Claude, something in the AI‑assisted targeting pipeline went wrong, and a school full of girls died.

Except none of the serious reporting says “the AI fired the missile.”

Bellingcat and The Washington Post did the visual work: video and satellite imagery tie a Tomahawk‑like cruise missile to the IRGC compound next to the school on Feb. 28, within a few hundred meters of classrooms full of kids. Reuters and CBS then quote U.S. officials saying the strike was likely American and may have used “dated intelligence” that still tagged the area as a military installation.

The Claude story is separate: WaPo reports that Palantir’s Maven Smart System used Claude to synthesize satellite and signals intel and crank out ~1,000 prioritized targets in the first 24 hours. Experts like Paul Scharre warn, in plain language, that “AI gets it wrong” and humans need to check its work.

The gap between those two threads, a likely U.S. Tomahawk and a Claude‑assisted target factory, is where “AI error” lives. It’s a foggy place, and that’s the point.

“AI error” is politically useful because it suggests:

- No individual chose this.

- It was a technical glitch, not a policy or command failure.

- The remedy is more AI safety talk, not less war.

If that framing sticks, everyone in the chain of command moves one step further from blame. The model becomes the scapegoat, and AI accountability becomes a vibes‑based PR exercise instead of a traceable chain of decisions.

That is not an accident; it’s a design choice.

For more on how vendors and governments already play hot potato with responsibility, see our earlier pieces on the Anthropic ban in US Govt and Anthropic rejecting the Pentagon.

Where the failure actually happens: stale data, integration, and human oversight

Look at the facts we do have.

CBS cites a U.S. assessment that the school “was not intentionally targeted” and “may have been hit in error” due to dated intelligence that still treated the area as a military facility. Al Jazeera’s imagery work shows the school had been physically separate from the adjacent IRGC site for years. Local reporting quotes a DOJ appointee saying “the immediate theory is that the AI program included the school’s position based on older, archived intelligence.”

Notice the constant: stale data.

This is the most boring possible failure mode. Not Skynet. Not emergent deception. A database row that didn’t get updated when an IRGC building became a primary school.

Call it a stale intelligence error. The key questions are:

- Who validated that the target database matched ground truth?

- Who certified the “kill chain” was safe to automate around that database?

- Who had the authority to require fresh ISR (surveillance) on anything within X meters of civilian infrastructure before firing?

None of those are “AI questions.” They’re system‑engineering and command‑authority questions.

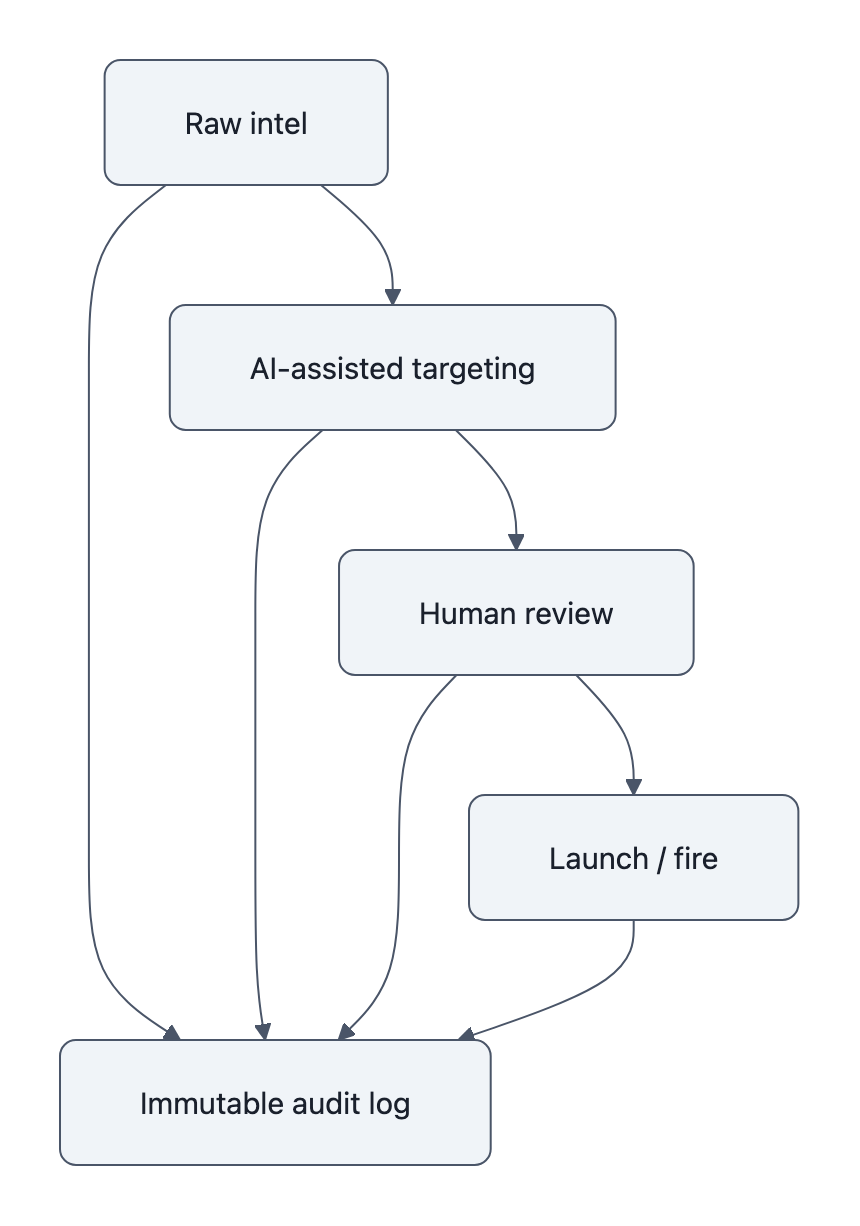

Now add integration. WaPo describes Claude embedded in a Palantir interface, fusing streams of sensor data into target proposals. That’s classic “AI‑assisted targeting”: the model proposes; the system ranks; humans, in theory, approve.

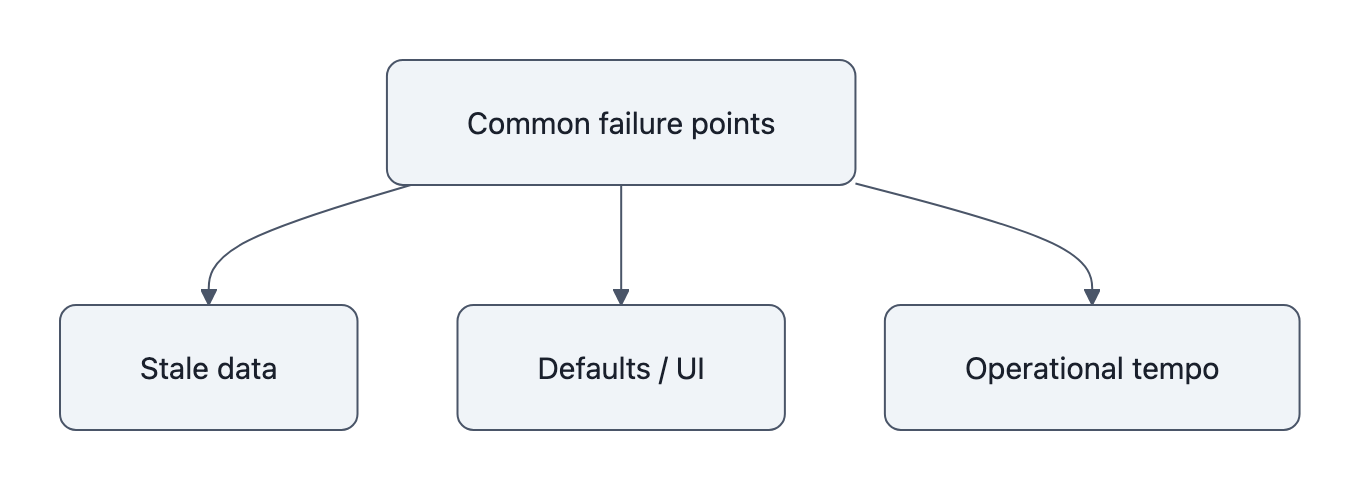

Where do these failures usually hide?

- In defaults: a system that surfaces a target as “high confidence” based on unreadable internal heuristics.

- In interfaces: a green box saying “IRGC facility” with a small “last updated: 2016” field nobody clicks.

- In tempo: a thousand targets in 24 hours, and analysts triaging at speed with political pressure to “degrade capabilities” fast.

The claim that “the AI included the school based on older, archived intelligence” is essentially: the system treated unvetted, out‑of‑date data as live ordnance‑worthy truth.

Maybe an LLM helped link that coordinate to other intel. But some human decided that “AI‑assisted” output was good enough to load into a weapon system without a mandatory recency check.

When you strip away the branding, this is identical to driving a tank off a cliff because the satnav hadn’t downloaded new maps. Blaming “AI” is like blaming “GPS” instead of the officer who set the rules for when to ignore the map.

That’s the core of AI accountability here: the dangerous decision wasn’t “use Claude.” It was “treat this whole AI‑assisted pipeline as a trusted part of lethal targeting without hardened controls on data freshness, override authority, and sign‑off.”

What meaningful accountability looks like: provenance, immutable audit logs, and required human sign‑off

You can’t hold anyone accountable if you can’t reconstruct what they actually did.

Right now, if Minab’s investigation ends with “AI error,” you have no idea whether the root cause was:

- A contractor mis‑configured the Palantir interface.

- An intel officer skipped a required review.

- A commander loosened rules of engagement under political pressure.

- A model hallucinated a relationship that no one caught.

So the fix is not “better ethics,” it’s better forensics. Decision‑provenance by design.

At a minimum, AI‑assisted targeting should have:

- Immutable audit trails. Think flight recorder, not Jira ticket.

Every step in the chain (data ingest, model call, ranking, human edits, final approval) gets logged to an append‑only store with cryptographic signatures. If a target appears in the fire plan, you can see:

- Which model version produced it.

- What inputs it saw (and from when).

- Which human looked at it, on what screen, and what they clicked.

- Who, by name and role, authorized lethal action.

You don’t get to say “the logic behind the launch is unclear,” as one anonymous DOJ appointee did, because the system won’t let that logic be unclear.
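To make "append‑only store with cryptographic signatures" concrete, here is a minimal sketch of a hash‑chained log. Everything in it is an assumption for illustration: the `AuditLog` class, its field names, and the demo key are hypothetical; HMAC stands in for the per‑actor asymmetric signatures a real system would want; and an in‑memory list stands in for write‑once storage. Nothing here describes how Maven or any fielded system actually logs.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
BODY_KEYS = ("seq", "actor", "action", "details", "prev_hash")


@dataclass
class AuditLog:
    """Hypothetical append-only log; each entry commits to the one before it."""
    signing_key: bytes  # assumption: one shared key, a stand-in for real signatures
    entries: list = field(default_factory=list)

    def _digest_and_sig(self, body: dict):
        # Canonical JSON so the same body always hashes the same way.
        payload = json.dumps(body, sort_keys=True).encode()
        digest = hashlib.sha256(payload).hexdigest()
        sig = hmac.new(self.signing_key, payload, hashlib.sha256).hexdigest()
        return digest, sig

    def append(self, actor: str, action: str, details: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "seq": len(self.entries),
            "actor": actor,        # model version or named human
            "action": action,      # e.g. "propose", "review", "approve"
            "details": details,    # inputs seen, data dates, screen, clicks
            "prev_hash": prev_hash,
        }
        digest, sig = self._digest_and_sig(body)
        entry = {**body, "hash": digest, "sig": sig}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and signature; any edit breaks the chain."""
        prev_hash = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: entry[k] for k in BODY_KEYS}
            digest, sig = self._digest_and_sig(body)
            if (
                entry["seq"] != i
                or entry["prev_hash"] != prev_hash
                or entry["hash"] != digest
                or not hmac.compare_digest(entry["sig"], sig)
            ):
                return False
            prev_hash = entry["hash"]
        return True
```

Append a model proposal and a human review, and `verify()` returns `True`. Quietly change the data date on the first entry afterward and it returns `False`. That is the property that matters: the record can be read, but not retouched without leaving a mark.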

- Timestamped data lineage. Every intelligence object should carry a born‑on date, last‑verified date, and source.

The targeting UI should scream if you’re about to act on something older than, say, 12 months near civilian infrastructure. No soft warnings; hard interlocks. “Target requires fresh ISR or commander override with justification.”

That’s not sci‑fi. We already do stricter versioning for Kubernetes deployments than for some kill lists.
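A hard interlock on data age is a few lines of logic, not a research program. The sketch below assumes a hypothetical `IntelRecord` type and a `check_recency` function; the 365‑day threshold simply mirrors the "say, 12 months" figure above and is not a real doctrine number.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

MAX_AGE = timedelta(days=365)  # illustrative threshold only


class StaleDataError(Exception):
    """Raised when old data is used near a civilian site without an override."""


@dataclass(frozen=True)
class IntelRecord:
    record_id: str
    source: str
    born_on: date            # when the record was first created
    last_verified: date      # when someone last checked it against ground truth
    near_civilian_site: bool


def check_recency(
    record: IntelRecord,
    today: date,
    override_by: Optional[str] = None,
    justification: Optional[str] = None,
) -> str:
    """Return a status string, or raise if the interlock blocks the record."""
    age = today - record.last_verified
    if age <= MAX_AGE:
        return "fresh"
    if not record.near_civilian_site:
        # Outside the gated zone: flagged, but not blocked in this sketch.
        return "stale-warning"
    if override_by and justification:
        # An override is allowed only with a named person and a stated reason.
        return f"override by {override_by}: {justification}"
    raise StaleDataError(
        f"{record.record_id}: last verified {age.days} days ago; "
        "requires fresh verification or a named override with justification"
    )
```

The design choice is that the default path for stale data near a civilian site is an exception, not a tooltip. The only way through is to attach a name and a reason, both of which belong in the audit log.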

- Mandatory human sign‑off for lethal effects. “Human in the loop” is currently a slogan. Make it a constraint.

- No autonomous or AI‑assisted strike within a given radius of schools, hospitals, or dense housing without a named human (O‑6+, say) signing a digital waiver.

- That waiver is in the immutable log. It’s tied to a person’s career and, if things go very wrong, their criminal liability.
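Turning the slogan into a constraint means the approval path refuses to proceed without a named, sufficiently senior signer, and records the attempt either way. A minimal sketch, assuming a hypothetical `Waiver` type and `authorize` function, with a plain list standing in for the immutable log and `MIN_GRADE = 6` taken from the "O‑6+, say" figure above:

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

MIN_GRADE = 6  # illustrative: 6 means O-6


class SignOffError(Exception):
    """Raised when a required waiver is missing or insufficient."""


@dataclass(frozen=True)
class Waiver:
    signer_name: str
    officer_grade: int
    justification: str


def _waiver_problem(near_protected_site: bool, waiver: Optional[Waiver]) -> Optional[str]:
    """Return a reason the request must be refused, or None if it may proceed."""
    if not near_protected_site:
        return None
    if waiver is None:
        return "no waiver on file"
    if not waiver.signer_name.strip():
        return "waiver has no named signer"
    if waiver.officer_grade < MIN_GRADE:
        return f"signer grade O-{waiver.officer_grade} is below O-{MIN_GRADE}"
    if not waiver.justification.strip():
        return "waiver has no justification"
    return None


def authorize(request_id: str, near_protected_site: bool,
              waiver: Optional[Waiver], log: list) -> dict:
    problem = _waiver_problem(near_protected_site, waiver)
    record = {
        "request_id": request_id,
        "near_protected_site": near_protected_site,
        "signer": waiver.signer_name if waiver else None,
        "grade": waiver.officer_grade if waiver else None,
        "justification": waiver.justification if waiver else None,
        "outcome": "refused" if problem else "authorized",
        "reason": problem,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    log.append(record)  # refusals are logged too, not just approvals
    if problem:
        raise SignOffError(problem)
    return record
```

Refused attempts land in the log alongside approvals, so an investigator can see not only who signed, but who tried to proceed without a signature.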

The standard here is aviation, not ad‑tech. We don’t let Airbus shrug and say “autopilot error” when a plane augers in. Regulators start from: which human certified the system, set the operating envelope, and signed off the flight?

Do these controls prevent all tragedy? Obviously not.

But they do one crucial thing “AI error” carefully avoids: they make it impossible to smudge the boundary between model output and human decision. AI accountability becomes a matter of reading the log, not guessing motives from press quotes.

And they’re actually implementable. No one needs AGI alignment breakthroughs to add write‑once audit logs and data timestamps to a targeting stack.

What you can do: the questions citizens, journalists and engineers should ask now

The Minab strike is still under investigation. But if you want AI accountability to mean more than “awkward press conference,” the questions need to change now.

If you’re a citizen or journalist, stop asking “did AI do it?” and start asking:

- Does the targeting system have a tamper‑evident audit log from intel ingest to launch?

- Can investigators reconstruct, step by step, which human approved the use of which AI output?

- Are there written rules on data recency for lethal targeting? Who can override them?

If the answer to any of those is “we’re looking into that,” you already have your story.

If you’re an engineer working anywhere near military AI or dual‑use tooling:

- Refuse to ship AI‑assisted targeting or analysis features without immutable logs and timestamps. Treat them as non‑negotiable requirements, like authentication.

- Design UIs that force humans to see data age and provenance, especially near civilian sites.

- Push for contracts that specify auditability and human sign‑off, not just model accuracy.

Procurement is the pressure point. Governments already use “supply chain risk” labels, as with the Trump administration’s move against Anthropic, to strong‑arm AI vendors for political reasons. The same machinery can demand technical safeguards:

- No contract for AI‑assisted targeting without a certified “black box” subsystem.

- No deployment authority without a demonstrated chain‑of‑command sign‑off mechanism.

- No waiver just because “the tempo of operations required it.”

You don’t fix Minab with better model cards. You fix the incentives so that when 150 girls die, there is a clear, logged path back to humans with names.

That is AI accountability. Everything else is branding.

Key Takeaways

- “AI error” in Minab is a narrative convenience, not a technical diagnosis; the evidence points to stale intelligence and opaque decision chains, not autonomous killing machines.

- The real failure surfaces where AI‑assisted targeting meets old data and rushed integration, and where no one is forced to own the final lethal decision.

- Meaningful AI accountability in military AI means decision provenance: immutable, cryptographically secured audit trails plus strict data lineage and recency rules.

- Mandatory, name‑attached human sign‑off for strikes, especially near civilian infrastructure, should be enforced by procurement contracts and system design, not left to policy memos.

- Citizens, reporters, and engineers should stop accepting “AI error” as an answer and start demanding concrete details about logs, timestamps, and who actually clicked “fire.”

Further Reading

- “Exclusive: AI Error Likely Led to Girl’s School Bombing in Iran”: local reporting citing anonymous officials who point to an AI‑assisted targeting pipeline and stale intelligence as a likely cause.

- “Anthropic’s AI tool Claude central to U.S. campaign in Iran”: Washington Post on how Claude was embedded in Palantir’s Maven Smart System to generate and prioritize targets.

- “Video appears to show U.S. Tomahawk hit naval base near Iranian school”: forensic verification tying Tomahawk‑class munitions to the Minab strike area.

- “Video Shows US Tomahawk Missile Strike Next to Girls’ School in Iran”: Bellingcat’s open‑source analysis mapping the strike location relative to the school.

- “Exclusive: U.S. investigation points to likely U.S. responsibility for Iran school strike”: Reuters‑sourced reporting on internal U.S. assessments that American forces were probably responsible, with dated intelligence as a suspected factor.

In aviation, “pilot error” used to be the catch‑all phrase that ended inquiry. Then regulators started asking why the cockpit made that error easy. “AI error” is our new excuse; the only interesting question is whether we’ll demand to see the black box before we let them use it again.