A senior Hill staffer boards a flight to London. The ticket, a room at the Marriott Grosvenor Square, and a curated tour of AI labs are all paid for by a nonprofit you’ve never heard of. A month later, the same staffer is revising draft language on federal preemption for AI laws.

Those are the AI lobbying trips Sludge dug up in House and Senate gift-travel disclosures. The interesting part isn’t that the trips exist; it’s that in a fast-moving field like AI, these “educational” junkets are basically a shortcut to regulatory capture.

TL;DR

- AI lobbying trips turn industry-funded tours into the default AI curriculum for Congress.

- Normal disclosure rules assume policy moves slowly; AI doesn’t, so capture happens earlier.

- Fixing this is boring and mechanical: ban privately funded policy trips, fund neutral briefings, and enforce gift-travel rules like they matter.

AI lobbying trips: what Sludge’s records actually show

If you tried to build an influence campaign around AI law from scratch, you’d probably start exactly where Sludge found the Innovative Future Collective (IFC): with the people who write the first draft.

Sludge reports that IFC, a nonprofit formed in December 2024 and stacked with lobbyists for OpenAI, Andreessen Horowitz, Microsoft, Coinbase, Stripe, and others, has paid for congressional staff travel to San Francisco, Los Angeles, New York, and London for AI company tours. The source isn’t rumor; it’s House and Senate gift-travel disclosures plus the House Clerk’s searchable database.

On paper, these are “AI education” trips. In practice, they’re multi-day briefings run almost entirely by companies that want weak federal rules and strong federal preemption so states can’t write their own.

The advisory committee numbers matter: Sludge says 12 of IFC’s 15 advisors are current or recent corporate lobbyists, including several directly tied to AI companies. That’s not “industry in the room.” That’s industry running the room.

If you’re a staffer, you now have:

- A free trip.

- Hours of one-on-one access to “experts” whose job is to shape your bills.

- Slide decks, talking points, and anecdotes you can reuse in memos.

That’s your AI curriculum.

Why privately funded staff travel is more than “education”

The defense of these AI lobbying trips is always the same: “Staff need to learn about AI somewhere. This is just educational.”

Educational… but with a syllabus written by the people being regulated.

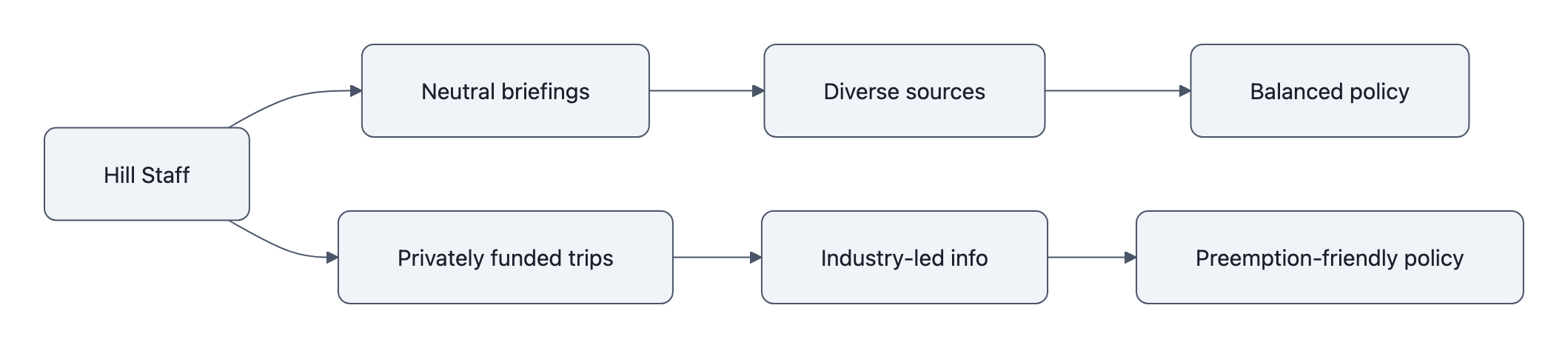

If you were designing an honest training pipeline for congressional staff, you’d want:

- Mixed instructors: industry, academics, civil society, state AGs, maybe EU regulators.

- Published agendas anyone can inspect.

- Some way to compare what you’re told against independent evidence.

Gift travel short-circuits that.

First, how the rules work in broad strokes:

- House and Senate staff can take privately funded trips if they’re “officially connected” to their work, approved in advance, and disclosed afterwards.

- Sponsors file a form describing the purpose, itinerary, and cost; staff list who paid, where they went, and for how long.

- In theory, this sunlight makes things safe.

In reality, the constraint isn’t whether the trip is visible. The constraint is cognitive bandwidth.

Most Hill staff:

- Are generalists.

- Do not have a deep technical background in AI.

- Have more issues on their plate than hours in the day.

So the trip isn’t just “a free flight.” It’s:

- The highest-bandwidth AI explainer they will get this year.

- Delivered in an environment where everyone around them agrees on what “reasonable” AI rules look like.

- Sourced from entities with a direct financial stake in, say, federal preemption that blocks state-level AI protections.

You don’t need a cartoon bribe for this to work. The tradeoff is subtler:

- Staff gain real knowledge about how foundation models, datacenters, and “AI safety” teams work, as told by the companies themselves.

- In exchange, independent expertise (safety researchers, labor groups, state regulators) gets excluded from the formative mental model staff use when they sit down to write law.

By the time a skeptic testifies in a hearing, the framework is already baked.

Why this matters now: AI’s speed makes capture urgent

We’ve seen this movie before.

Telecom in the 90s. Pharma in the 2000s. Industry-funded “education” created the scaffolding for rules that were formally complex but structurally friendly to incumbents. By the time independent experts were heard, the standards and enforcement playbooks were locked in.

AI makes that process worse in three ways:

- The tech moves faster than the rules

Public Citizen counts more than 3,500 lobbyists working on AI issues, about a quarter of all federal lobbyists. LegiStorm says privately financed AI-related trips jumped from a few dozen in 2022 to roughly 80 in 2023, and that spending on such trips reached nearly $500k in 2024.

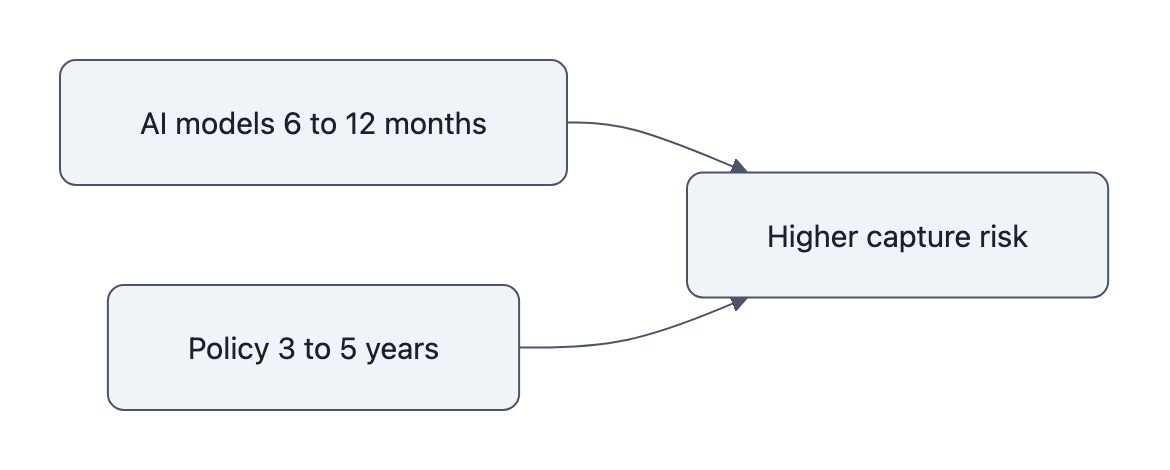

At the same time, AI models are iterating on 6-12 month cycles, while regulatory text moves on 3-5 year cycles. At those rates, a single statute can outlive roughly 3 to 10 model generations (3 years of 12-month cycles at the low end, 5 years of 6-month cycles at the high end).

So whoever shapes the first AI statute or preemption clause effectively sets the stage for multiple model generations. You don’t get to “fix it later” before the damage is done; you get to fight over enforcement details while the base law stays captured.

We’ve already written about what happens when AI accountability is bolted on after the fact (see the AI accountability after the Iran school strike piece). By the time you discover the failure mode, the doctrine is entrenched.

- Infrastructure lock‑in is massive

Microsoft alone is committing around $145 billion this year in capital expenditures, largely for AI infrastructure. Once that spend is sunk, the political argument becomes, “We can’t afford rules that threaten critical infrastructure.”

This is why so much lobbying energy focuses on federal preemption and weak liability standards. If you can preempt strong state rules today, those datacenters become facts on the ground tomorrow. After that, any tough regulation is framed as an attack on jobs, innovation, or national security.

Look at how quickly OpenAI’s revenue curve translated into political leverage (see OpenAI revenue 2026). Money printed by one policy regime funds the lobbying to protect the next.

- Agencies are already nervy about entanglement

Some agencies have started distancing themselves from particular AI vendors over trust and conduct concerns (see Anthropic ban: why U.S. agencies cut ties).

Now imagine those same agencies trying to implement AI rules drafted by staff whose main “education” came from company-branded tours. When the first major enforcement fight hits, guess which side can say, “But you wrote it this way”?

The AI speed issue isn’t just about models evolving quickly. It’s about how fast capture happens relative to independent expertise getting onboarded.

Practical fixes: transparency, public briefings, and policy safeguards

The nice thing about this problem is that the fix doesn’t require reinventing democracy. It’s basically a set of boring constraints you’d add if you were threat‑modeling an engineering system.

If you were designing a safer AI policy pipeline, you’d do three things:

1. Ban privately funded policymaking travel

Not all travel, not all lobbying. Just this:

- If your role involves drafting, negotiating, or advising on AI legislation or regulation, you cannot accept privately financed trips whose primary purpose is “education” on that subject.

- If it’s really that important, taxpayers can pay.

This does two things:

- Forces industry to make its case in venues where others can be present (hearings, public roundtables, on-the-record submissions).

- Removes the subtle reciprocity pressure that comes from a three-day junket with nice catering.

2. Fund publicly run, balanced AI briefings

Right now, industry is filling a capacity gap.

Fix the gap.

- Create a standing, publicly funded AI briefing program run by GAO, CRS, or a dedicated nonpartisan office.

- Require that for any major AI bill, staff attend at least one balanced briefing that includes technologists, civil-society advocates, labor, state regulators, and yes, industry.

- Publish the agendas and speakers.

If industry wants to sponsor content, submit slide decks and whitepapers to the same portal everyone else uses.

This doesn’t eliminate bias, but it breaks the monopoly on staff attention.

3. Tighten and enforce gift-travel reporting for AI

Gift-travel disclosures exist, but they’re treated like bureaucratic afterthoughts.

Make them bite:

- Add a simple “AI/advanced computing” checkbox to filings so AI lobbying trips are easy to track.

- Require disclosure of all corporate funders behind a nonprofit sponsor like IFC, not just the front entity.

- Impose meaningful penalties (loss of committee privileges, public reprimands) for inaccurate or late filings on AI-related travel.

Also: make the data actually usable. A raw PDF repository is not transparency. A filterable, machine-readable feed is.
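To make the difference between a PDF pile and a usable feed concrete, here is a minimal Python sketch of what a structured filing record could look like if the AI checkbox and full-funder disclosure proposed above were adopted. Everything in it is a hypothetical illustration: the `TravelFiling` fields, the helper functions, and the sample records are invented for this example and do not reflect the House Clerk’s actual schema or any real filing.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class TravelFiling:
    # Hypothetical schema for illustration only; not the House Clerk's real format.
    traveler: str
    sponsor: str                    # entity named on the filing (e.g., a nonprofit)
    destinations: List[str]
    total_cost_usd: float
    ai_related: bool                # the proposed "AI/advanced computing" checkbox
    underlying_funders: List[str] = field(default_factory=list)  # corporate backers behind the sponsor


def ai_trips_funded_by(filings: List[TravelFiling], funder: str) -> List[TravelFiling]:
    """Return AI-flagged trips where `funder` appears among the sponsor's backers."""
    return [f for f in filings if f.ai_related and funder in f.underlying_funders]


def total_ai_spend(filings: List[TravelFiling]) -> float:
    """Sum reported costs across AI-flagged trips only."""
    return sum(f.total_cost_usd for f in filings if f.ai_related)


def to_json_lines(filings: List[TravelFiling]) -> str:
    """Serialize filings as JSON Lines (one record per line) for a public feed."""
    return "\n".join(json.dumps(asdict(f)) for f in filings)


# Invented sample records: no real travelers, sponsors, or amounts.
filings = [
    TravelFiling("Staffer A", "Example Nonprofit", ["London"], 5000.0, True, ["Company X", "Company Y"]),
    TravelFiling("Staffer B", "Example Trade Group", ["Austin"], 2000.0, False, ["Company X"]),
    TravelFiling("Staffer C", "Example Nonprofit", ["San Francisco"], 3000.0, True, ["Company Y"]),
]

print([f.traveler for f in ai_trips_funded_by(filings, "Company X")])  # ['Staffer A']
print(total_ai_spend(filings))  # 8000.0
```

In this sketch, the two questions reporters currently answer by hand (which AI trips did a given company underwrite, and how much was spent in total) become one-line queries, because the checkbox and the funder list are structured fields rather than free text buried in a scanned form.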

What this means for builders and readers

If you build AI systems, this isn’t abstract politics. The rules written under this influence regime are the ones you’ll be shipping under for the next decade.

Expect:

- Soft requirements dressed up as “AI safety” that happen to map cleanly onto incumbent infrastructure.

- Liability shields that assume centralized, giant-model deployment is the only sane option.

- Preemption clauses that lock out state-level experimentation on safety and rights.

If you’re a citizen, this is one of the rare places where unglamorous process changes actually matter. The levers are extremely specific:

- Ask your representatives, on the record, whether their staff have taken any AI-focused privately funded trips, and whether they’ll support a ban on such travel for AI policymakers.

- Support the unglamorous reform groups (not just AI activists) that push for travel and lobbying disclosure upgrades.

- When you see “AI education” nonprofits pop up, look up their advisory boards and donors. If it’s 80% lobbyists, treat their whitepapers like advertorials, not neutral expertise.

The real test is simple: if AI policy education is important enough to shape the laws that govern billion‑dollar models and military deployments, it’s important enough to fund with public money and run in public.

Key Takeaways

- AI lobbying trips turn industry-sponsored junkets into the default AI education pipeline for congressional staff.

- Existing gift-travel rules expose who paid, but they don’t fix the knowledge asymmetry or timing problem.

- AI’s speed and infrastructure lock‑in mean early, industry-shaped statutes can dominate multiple generations of technology.

- Concrete fixes include banning privately funded AI policy trips, funding balanced public briefings, and tightening gift-travel enforcement.

- If AI rules are written in boardrooms and five-star hotel conference centers, public “oversight” will be mostly theater.

Further Reading

- Sludge, “AI Lobbyists Are Flying Congressional Staffers Around the Country on Luxury Trips”: investigation into IFC-sponsored travel and who’s behind it.

- House Clerk, “Gift Travel Filings”: official database of pre-approvals and disclosures for privately financed congressional travel.

- Public Citizen, “AI Lobbyists Descend on Washington, DC”: report on the explosion of AI-focused lobbying.

- LegiStorm, “Privately financed travel increasingly focused on AI”: data on the rise of AI-oriented junkets.

- Politico, “K Street and the AI Gold Rush”: a look at how lobbyists scrambled to capture AI policy work.

In software terms, privately funded AI junkets are an unsafe default. They’re not the whole exploit, but they’re an easy escalation path. Locking that down now is a configuration change, not a revolution, and it’ll be a lot harder once the system is in production.