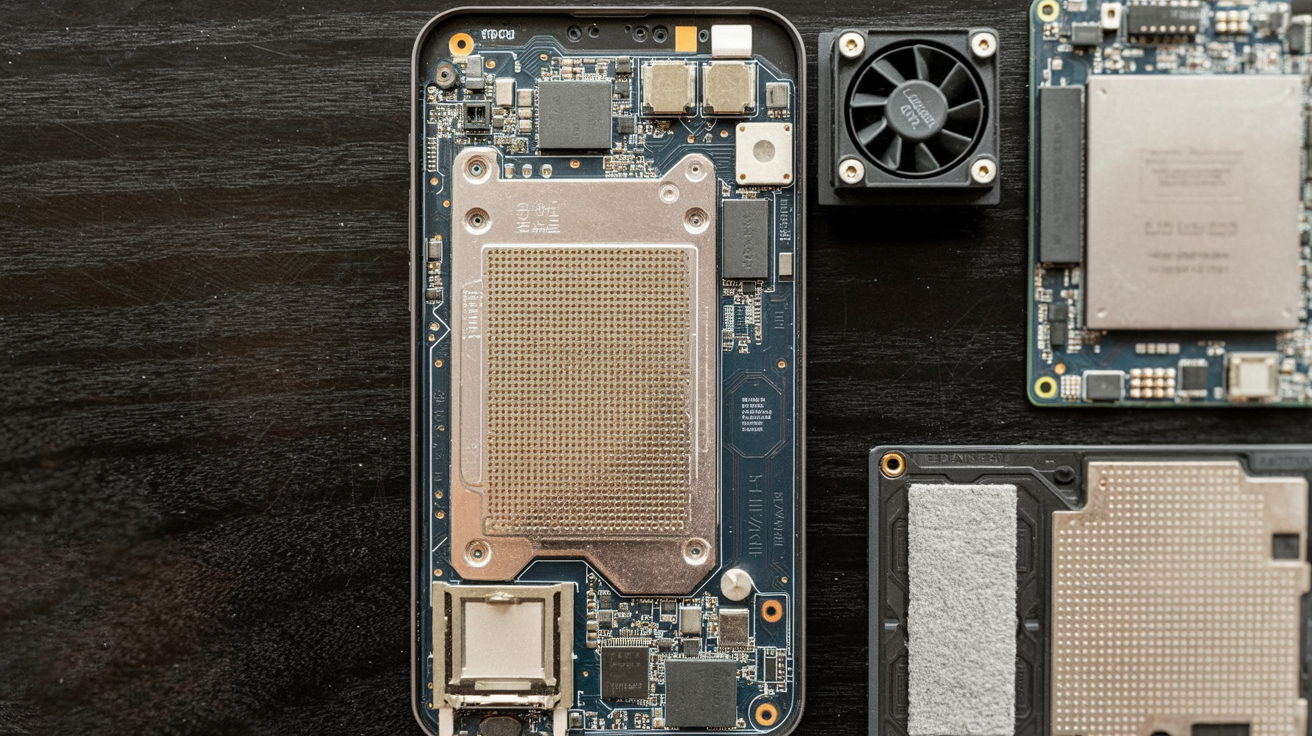

A local AI node is usually imagined as a tiny PC, a used workstation, or a Raspberry Pi that immediately runs out of steam. The more interesting build is a phone: one Reddit user claims they turned a Xiaomi 12 Pro into a 24/7, LAN-accessible local AI node serving Gemma through Ollama.

Read as a novelty, this is just “look, my phone runs AI.” Read as infrastructure, it is more interesting: a modern flagship can be stripped of the parts that make it a phone and repurposed into a small inference appliance. The gain is not one trick. It comes from changing the operating system, thermal behavior, charging pattern, and network stack until the device behaves less like consumer electronics and more like a server.

The evidence is mixed. Verified: the Xiaomi 12 Pro hardware exists as described, with a Snapdragon 8 Gen 1, a 4,600 mAh battery, and 120W charging support, according to Xiaomi and launch coverage. Plausible but not independently verified: the poster’s claimed setup details, including LineageOS, ~9GB of free RAM, a frozen Android framework, a custom thermal daemon, and an 80% charging cutoff. There are no published benchmarks, scripts, or third-party measurements yet.

Why a Xiaomi 12 Pro can become a local AI node

The hardware case is straightforward. Xiaomi’s product page confirms the Xiaomi 12 Pro ships with Qualcomm’s Snapdragon 8 Gen 1 and markets its 7th Gen AI Engine; launch coverage confirms the 4,600 mAh battery and 120W wired charging. That is not server hardware, but it is enough compute, RAM, and power density to make small-model inference plausible.

The strategic frame is simple: consumer hardware is overbuilt for bursty interaction and underused for steady compute. A flagship phone is designed to absorb short spikes (gaming, camera processing, AI features) while staying pocketable. If you stop caring about pocketability, screen-on use, and app UX, you can redirect that budget toward inference.

That matters because the bar for running useful models locally has dropped. Gemma is explicitly designed for local deployment, and Ollama has become the default packaging layer for serving local models without building the stack from scratch. We have already seen this dynamic on laptops and desktops with local LLM coding setups. The phone version is the same move, just pushed into a smaller thermal envelope.

What the setup actually changes under the hood

The Reddit post makes four concrete claims about the conversion.

First, OS optimization: the user says they flashed LineageOS and stripped away the usual Android UI and background load, leaving about 9GB of RAM available for model compute. That number is unverified, but the logic is sound. Android is not just an app launcher; it is a stack of services, UI processes, radios, notifications, and vendor software all competing for memory and thermal headroom.
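A number like “about 9GB free” is easy to check on your own device. One option, assuming shell access (for example via adb or Termux) and a Python interpreter on the phone, is to read `MemAvailable` from `/proc/meminfo`, which the Linux kernel under Android exposes. This is a minimal sketch, not the poster’s tooling, and the post does not say how its figure was measured.

```python
from pathlib import Path

def parse_meminfo(text: str) -> dict:
    """Parse /proc/meminfo-style text into {field: value in KiB}."""
    fields = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        key, rest = line.split(":", 1)
        parts = rest.split()
        if parts:  # the kernel labels these "kB" but they are KiB
            fields[key.strip()] = int(parts[0])
    return fields

def available_gib(text: str) -> float:
    """Kernel's estimate of memory available to new workloads, in GiB."""
    return parse_meminfo(text)["MemAvailable"] / (1024 * 1024)

def read_available_gib(path: str = "/proc/meminfo") -> float:
    """Read the live value on the device."""
    return available_gib(Path(path).read_text())
```

Running `read_available_gib()` on stock Android and again after the OS changes gives a before/after pair, which says far more than a single number without a baseline.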

Second, headless operation: the poster says the Android framework was frozen and networking was maintained with a manually compiled wpa_supplicant, effectively creating a headless Android server. This is the most technically interesting part because it changes the device’s role entirely. A phone normally assumes there is always a foreground user. A server assumes the opposite.

Third, thermal automation: a custom daemon reportedly monitors CPU temperatures and flips on an external active cooler via a Wi‑Fi smart plug at 45°C. That is plausible but unverified, and it tells you exactly what this project is really about. Not model serving. Thermal governance.
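The reported control loop is simple enough to sketch. This is an assumption-heavy illustration, not the poster’s daemon: it reads the hottest `/sys/class/thermal/thermal_zone*/temp` value (sysfs reports millidegrees Celsius), switches the cooler on at the post’s 45°C, and adds a hypothetical 40°C off-threshold so the plug does not flap around a single setpoint. `set_cooler()` is a placeholder, since the post does not name the smart plug or its API.

```python
import glob
import time

ON_C = 45.0   # trigger temperature reported in the Reddit post
OFF_C = 40.0  # hypothetical lower threshold so the cooler doesn't flap

def read_max_temp_c(pattern: str = "/sys/class/thermal/thermal_zone*/temp") -> float:
    """Hottest readable thermal zone in °C (sysfs reports millidegrees)."""
    temps = []
    for path in glob.glob(pattern):
        try:
            with open(path) as f:
                temps.append(int(f.read().strip()) / 1000.0)
        except (OSError, ValueError):
            continue  # some zones are offline or unreadable without root
    if not temps:
        raise RuntimeError("no readable thermal zones")
    return max(temps)

def next_cooler_state(temp_c: float, cooler_on: bool) -> bool:
    """On at ON_C or above, off below OFF_C, otherwise keep the current state."""
    if temp_c >= ON_C:
        return True
    if temp_c < OFF_C:
        return False
    return cooler_on

def set_cooler(on: bool) -> None:
    """Hypothetical placeholder for the smart-plug call."""
    print("cooler", "ON" if on else "OFF")

def run(poll_s: float = 5.0) -> None:
    """Poll forever, switching the plug only when the desired state changes."""
    cooler_on = False
    set_cooler(cooler_on)  # start from a known state
    while True:
        desired = next_cooler_state(read_max_temp_c(), cooler_on)
        if desired != cooler_on:
            set_cooler(desired)
            cooler_on = desired
        time.sleep(poll_s)
```

Taking the maximum over every zone is a conservative simplification. A closer match to the post’s “CPU temperatures” would filter zones by their `type` file.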

Fourth, battery discipline: the user says a power-delivery script cuts charging at 80% to reduce degradation during 24/7 operation. Again, no independent verification yet. But this is the right problem to solve. A phone left on charge indefinitely is not just a computer with a free UPS. It is a battery aging experiment.
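The charging cap is the same pattern applied to battery level. In this sketch, `/sys/class/power_supply/battery/capacity` is the percentage node commonly exposed on Android kernels. The writable switch that actually pauses charging differs by device and kernel, so `CHARGE_SWITCH` below is hypothetical, as is the 75% resume point. Only the 80% cutoff comes from the post.

```python
from pathlib import Path

CAPACITY = Path("/sys/class/power_supply/battery/capacity")
# Hypothetical: the node that pauses charging is device- and kernel-specific.
CHARGE_SWITCH = Path("/sys/class/power_supply/battery/charging_enabled")

STOP_AT = 80    # cutoff reported in the post
RESUME_AT = 75  # hypothetical resume point, so charging doesn't toggle constantly

def should_charge(capacity_pct: int, charging: bool) -> bool:
    """Stop at STOP_AT; resume only once the level falls to RESUME_AT."""
    if capacity_pct >= STOP_AT:
        return False
    if capacity_pct <= RESUME_AT:
        return True
    return charging  # between thresholds: keep the current state

def tick() -> None:
    """One control step; meant to run periodically as root."""
    level = int(CAPACITY.read_text().strip())
    charging = CHARGE_SWITCH.read_text().strip() == "1"
    desired = should_charge(level, charging)
    if desired != charging:
        CHARGE_SWITCH.write_text("1" if desired else "0")
```

The gap between the two thresholds trades a few percent of capacity for far fewer charge/stop cycles.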

What is notably missing is just as important. There are no token-per-second benchmarks, no comparison against stock Android, no logs showing idle power draw, and no demonstration of how much of the result comes from Ollama/Gemma versus the OS surgery. So the setup is credible as a systems design, but not yet measured enough to treat it as a repeatable performance recipe.
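Producing the missing numbers does not require special tooling. A non-streaming call to Ollama’s `/api/generate` returns `eval_count` (generated tokens) and `eval_duration` (nanoseconds) in its final JSON, and usually prompt-side equivalents as well, so throughput falls out directly. This is a minimal sketch. The host address and model tag in the usage comment are hypothetical, because the post names neither.

```python
import json
import urllib.request

NS_PER_S = 1_000_000_000  # Ollama reports durations in nanoseconds

def generate(host: str, model: str, prompt: str) -> dict:
    """One non-streaming call to Ollama's /api/generate; returns the final JSON."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(f"{host}/api/generate", data=body,
                                 headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)

def throughput(resp: dict) -> dict:
    """Decode (and, if reported, prefill) speed in tokens per second."""
    out = {"decode_tok_s": resp["eval_count"] / (resp["eval_duration"] / NS_PER_S)}
    # Prompt fields may be missing, e.g. when the prompt was served from cache.
    if resp.get("prompt_eval_count") and resp.get("prompt_eval_duration"):
        out["prefill_tok_s"] = resp["prompt_eval_count"] / (resp["prompt_eval_duration"] / NS_PER_S)
    return out

# Usage (hypothetical address and model tag):
# print(throughput(generate("http://192.168.1.50:11434", "gemma3:1b", "Explain DNS.")))
```

Running the same prompt several times, with the same model, on stock Android and on the stripped build would give exactly the comparison the post lacks.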

The real trade-offs: heat, battery, and throughput

The easy story is that stripping Android unlocked performance. The harder story is that stability is the actual product.

A phone can run a model for a demo. Running a local AI node all day is different. Heat accumulates. Batteries sit at elevated charge states. Wireless networking adds latency and variability. Thermal throttling can erase whatever theoretical gains you got from freeing RAM in the first place.

That is why the 45°C cooling trigger is the tell. The poster is not chasing peak benchmark numbers; they are trying to keep the device in a stable operating band. That is a server problem. The same goes for the 80% charging cap. It is less about today’s output and more about whether the device still works in six months.

There are three hard limits here:

| Constraint | What we know | What it means |
|---|---|---|
| Memory | User claims ~9GB RAM free after OS stripping; not independently verified | Small models may fit, larger ones quickly hit the wall |
| Thermals | Active cooling reportedly triggers at 45°C | Sustained inference, not burst demos, is the bottleneck |
| Battery longevity | 4,600 mAh battery and 80% charge cap claim | 24/7 operation without battery bypass remains a wear issue |
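The memory row responds well to back-of-envelope arithmetic. Quantized weights take roughly parameters × bits per weight ÷ 8 bytes, and the KV cache grows linearly with context length. This is a sketch under stated assumptions. The 0.5 GiB runtime overhead, the bits-per-weight figures, and the model shapes in the comments are illustrative, not measurements of any Gemma release, and the post’s ~9GB is treated as 9 GiB.

```python
GIB = 1024 ** 3

def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of quantized weights."""
    return params_billion * 1e9 * bits_per_weight / 8 / GIB

def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 n_ctx: int, bytes_per_elem: int = 2) -> float:
    """K and V caches: one head_dim vector per layer, KV head, and token (fp16 default)."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem / GIB

def fits(budget_gib: float, params_billion: float, bits_per_weight: float,
         n_layers: int, n_kv_heads: int, head_dim: int, n_ctx: int,
         overhead_gib: float = 0.5) -> bool:
    """True if weights + KV cache + a fixed runtime overhead fit in the budget."""
    need = (weights_gib(params_billion, bits_per_weight)
            + kv_cache_gib(n_layers, n_kv_heads, head_dim, n_ctx)
            + overhead_gib)
    return need <= budget_gib

# Illustrative shapes, not a specific Gemma release:
# fits(9.0, 4, 4.5, 34, 4, 256, 8192)  # → True  (~2.1 + 1.06 + 0.5 GiB)
# fits(9.0, 12, 8, 48, 8, 128, 8192)   # → False (weights alone ~11.2 GiB)
```

The context length is the lever people forget. Doubling `n_ctx` doubles the KV cache, so a model that “fits” at short prompts can fail on long ones.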

The networking choice matters too. The build is described as LAN-accessible, apparently over Wi‑Fi. A commenter suggested USB-C ethernet with power delivery instead. That is not a side note; it is the next obvious optimization. A phone repurposed as a local LLM server stops being “mobile hardware” the moment you anchor it to fixed power, cooling, and wired networking. At that point, you are really building a tiny appliance from subsidized components.
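Whether Wi‑Fi is actually the weak link can be measured. This minimal probe assumes a placeholder LAN address and Ollama’s default port, 11434. It times repeated small requests so you can compare the median and 95th percentile before and after switching to USB-C Ethernet. Tail latency, not the average, is where Wi‑Fi variability shows up.

```python
import math
import statistics
import time
import urllib.request

def percentile(samples: list, pct: float) -> float:
    """Nearest-rank percentile, pct in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def probe(url: str, n: int = 50) -> list:
    """Round-trip times in milliseconds for n small GET requests."""
    rtts = []
    for _ in range(n):
        start = time.perf_counter()
        with urllib.request.urlopen(url, timeout=5) as resp:
            resp.read()
        rtts.append((time.perf_counter() - start) * 1000.0)
    return rtts

def summarize(rtts: list) -> str:
    """One-line median / p95 summary."""
    return f"median {statistics.median(rtts):.1f} ms, p95 {percentile(rtts, 95):.1f} ms"

# Usage (hypothetical LAN address):
# print(summarize(probe("http://192.168.1.50:11434/api/version")))
```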

There is also a software trade-off. One commenter pushed llama.cpp on Android instead of Ollama. Another asked about Google’s LiteRT path. Those are meaningful alternatives, but they also miss the broader point: packaging matters less than operational overhead once the model already runs. If Ollama costs a bit of efficiency but makes the box easier to expose as an API, that may be the right trade. Infrastructure wins by being boring.
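One concrete payoff of Ollama’s packaging is that, besides its native API, it serves an OpenAI-compatible `/v1/chat/completions` endpoint, so existing clients on the LAN can simply point at the phone. Here is a stdlib-only sketch. The address and model tag are hypothetical. By default Ollama listens only on 127.0.0.1, so the node would need `OLLAMA_HOST=0.0.0.0` (or its LAN IP) set before `ollama serve` to be reachable.

```python
import json
import urllib.request

def chat_request(host: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST for Ollama's OpenAI-compatible chat endpoint."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{host}/v1/chat/completions",
        data=json.dumps(body).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask(host: str, model: str, prompt: str) -> str:
    """Send one chat turn and return the assistant's text."""
    with urllib.request.urlopen(chat_request(host, model, prompt), timeout=600) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]

# Usage (hypothetical address and model tag):
# print(ask("http://192.168.1.50:11434", "gemma3:1b", "Summarize this log line: ..."))
```

Swapping Ollama for llama.cpp’s server or another runtime would mostly mean changing the host. That portability is part of what “boring infrastructure” buys.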

Why this matters beyond one hacked phone

The default interpretation is that this is a clever hobby project. The better interpretation is that phones are drifting into the same category as used mini PCs: general-purpose compute that can be reclaimed from their original market.

That matters for two reasons.

First, the economics are weirdly favorable. A used flagship phone includes a high-density battery, premium SoC, RAM, storage, radios, enclosure, and power management in one mass-produced package. Assembling the equivalent from separate parts would cost more. The market subsidized the hardware for mobile consumers; tinkerers get to buy the leftovers.

Second, the software stack is finally catching up. Small local models are getting more capable, and model families like Gemma are designed to run outside the data center. If you have been following Gemma 4 native thinking, the important shift is not just quality. It is that more useful work now fits inside constrained hardware.

There is also an energy angle. Centralized inference is powerful, but not every workload needs a server rack or an API roundtrip. Some tasks benefit from living near the user or device, especially when privacy, latency, or intermittent connectivity matter. That does not make phones better than the cloud. It makes them part of a broader compute spectrum, one we have also seen in work on the energy efficiency of neuro-symbolic AI, where system design matters as much as raw model capability.

My prediction: within 12 months, a small but real tooling layer will emerge around turning old Android flagships into dedicated inference boxes. It will not be mainstream, but it will be standardized enough that “flash ROM, install server, add cooling, cap battery” becomes a repeatable recipe. The interesting companies will not be the ones bragging that a phone can run AI. They will be the ones packaging the phone as infrastructure.

Key Takeaways

- A Xiaomi 12 Pro is interesting as a local AI node not because it can run a model, but because it can be turned into a stable inference appliance.

- Verified: the hardware specs. Unverified: the claimed ~9GB free RAM, frozen framework, thermal daemon, and charging scripts.

- The biggest gains likely come from system-level changes (less background load, better thermal control, steadier charging), not from any single model-serving trick.

- The hard limits are sustained heat, battery wear, and memory ceiling, not whether a demo can produce tokens.

- The bigger story is economic: old flagship phones may become a cheap class of on-device AI infrastructure.

Further Reading

- Xiaomi 12 Pro official product page: primary specs for the Snapdragon 8 Gen 1, battery, and Xiaomi’s AI positioning.

- Engadget Xiaomi 12 series launch coverage: confirms launch-era battery and charging details.

- Gizchina Xiaomi 12 Pro launch coverage: secondary spec confirmation and launch context.

- Ollama official site: the local model-serving stack used in the claimed setup.

- Google Gemma documentation: official documentation for the Gemma model family and deployment context.

A phone running AI is a demo. A phone turned into a dependable appliance is a category. The moment these builds get repeatable benchmarks and a stable setup guide, the used-phone market stops looking like e-waste and starts looking like edge infrastructure.