The loudest fear about AI in healthcare is straightforward: software gets good enough, and doctors start getting replaced. The evidence we have points somewhere more immediate and more awkward. AI in healthcare is already changing what patients expect when they walk into the room, and a lot of clinicians and institutions do not look ready for that shift.

I started this expecting the real story to be autonomous diagnosis. That story exists, but mostly as a plausible near-term pressure, not a verified mass rollout. The more solid evidence is messier: patients are already using chatbots as informal triage, health systems are buying AI tools at scale, insurers and providers are fighting over AI scribes, and trust is getting thinner rather than thicker.

That matters because medicine still runs on an old social contract. Patients wait weeks, sometimes months, for brief visits, then are expected to accept rushed judgments from a system they barely get access to. Once patients can show up with better questions, alternative explanations, and printouts from a model that sounds confident, the old deference model starts to crack.

AI will not remove the need for human oversight in medicine anytime soon. But it is already raising the bar for what clinicians need to do in real time: explain uncertainty, justify decisions, and work with patients who no longer arrive empty-handed.

Why doctors feel AI is changing medicine now

Part of the anxiety is cultural. Part of it is that the workflow has already changed.

Verified reporting from STAT shows U.S. health organizations spent $1.4 billion on AI tools in 2025, nearly 3x the previous year. In a February 2025 study cited by STAT, 66% of respondents reported low trust in their healthcare system to use AI responsibly, and 58% reported low trust that their system would make sure AI would not harm them. That is a bad combination: rapid deployment and weak trust.

The institutional rollout is also real. STAT reports that UnitedHealth Group has 22,000 software engineers worldwide, and more than 80% are using AI to write code or build agents. The company is applying AI to claims processing, fraud detection, documentation, and billing-code selection. That is verified internal adoption at one of the most powerful actors in U.S. healthcare. Whatever doctors think about AI, the organizations around them are not waiting.

Then there are AI scribes. STAT’s April 8 reporting says insurers and providers broadly agree these tools are increasing coding intensity (visits billed at higher-complexity, higher-paying codes) and, with it, healthcare costs. Caroline Pearson, executive director of the Peterson Health Technology Institute (PHTI), said at a PHTI roundtable it was “quite clear” scribes are increasing coding intensity. That does not mean scribes are useless. It means a tool sold as administrative relief is also changing incentives, reimbursement patterns, and what happens to the clinical note.

So when some doctors say AI feels suddenly real, they are not imagining things. The pressure is not just “a bot might diagnose strep.” It is that the chart, the billing workflow, the patient message queue, and the institution above them are already being reshaped.

What patients are already using AI for

Patients are not waiting for formal permission. How widespread this is remains somewhere between plausible and common: it is supported by anecdotal evidence and by the surrounding access data, even though we do not yet have a clean national usage number.

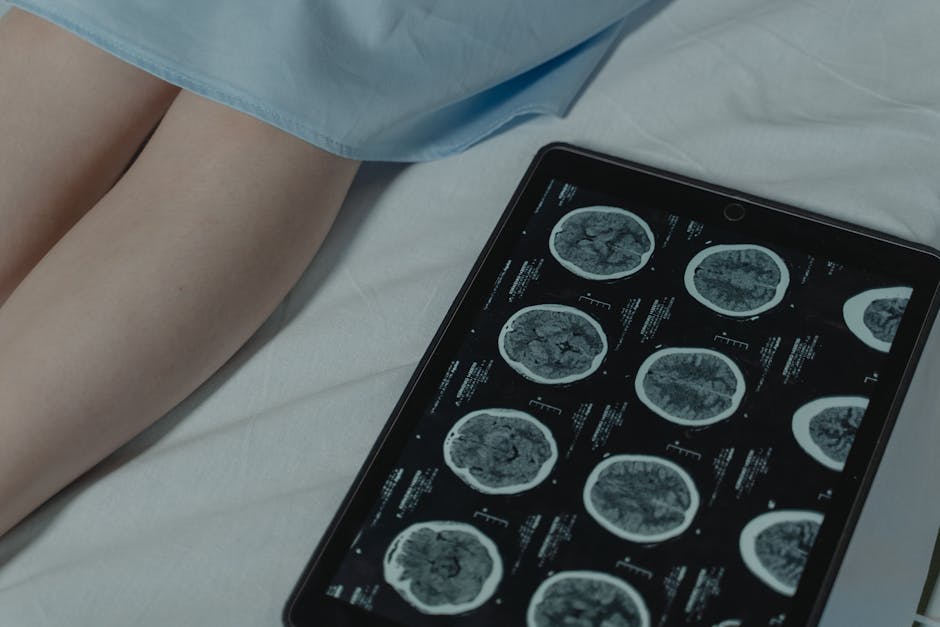

The Reddit thread that sparked this discussion is unverified as a primary source, but it captures a pattern that matches the reporting: patients use chatbots to interpret symptoms, prepare for appointments, review imaging reports, find treatment options, and decide whether a visit is urgent. Treat that thread as anecdotal, not evidence on its own.

The stronger evidence is the access crisis that makes this behavior rational. STAT reports the average wait for a new doctor appointment in the U.S. is now 31 days, up from 26 days in 2022 and 21 days in 2004. One cited example: 231 days to see an OB-GYN in Boston. Doctors also spend more than five hours on record-keeping for every eight hours with patients. If the system wanted patients to avoid AI triage, it picked a strange way to show it.

That is the “oh, that can’t be right” number here: five-plus hours of documentation for every eight clinical hours. At that point, patients turning to a chatbot is not some weird new pathology. It is what happens when access is scarce and the official channel is slow.

Some of this use is genuinely helpful. A patient can arrive with a cleaner symptom timeline, sharper questions, or a better understanding of side effects. Some of it is dangerous. Chatbots still hallucinate, flatten uncertainty, and can sound just as confident when they are wrong as when they are right. That is why the best current use case is preparation, not final authority.

This is also where our earlier piece, “Automated Labeling Bias Is Hiding Medical AI Harms,” matters. Medical AI can look better than it is when evaluation systems inherit bias from the humans and institutions they are supposed to check. Better patient-facing interfaces do not remove the need for careful validation.

The real pressure point: trust, triage, and access

The replacement story is overstated. The trust story is not.

STAT’s March 23 report argues AI is worsening existing distrust because patients often are not told when AI is used. That claim is verified by the reporting and survey data cited there. If a hospital quietly uses AI to draft messages, sort cases, or influence care pathways, patients do not experience that as efficiency. They experience it as one more opaque layer between them and a person who can answer for a decision.

The Doctronic and Project Glasswing reporting pushes this further. The Utah medical board reaction, as described by STAT, shows regulators are already confronting autonomous clinical AI pilots. That is verified reporting on a specific pilot and regulatory response. What is still unverified is any claim that these systems are ready to replace broad swaths of frontline medicine safely. We do not have that evidence here.

What we do have is a system where AI triage is already happening in practice, whether officially sanctioned or not. Patients ask a model first. A portal message may be summarized by software. An insurer may use automation in review and routing. A clinician may use a scribe during the visit. The patient sees one encounter. Underneath it is a growing chain of machine judgments.

That chain changes the burden of proof. If the patient already asked ChatGPT why symptom A might connect to medication B, “because that’s usually how we do it” stops working as an answer. And if access remains terrible, patients will trust the tool that is available over the professional who is booked out for a month.

There is also a liability problem lurking here. Once AI becomes part of routine triage or documentation, the question is not just whether a doctor made a call. It is who is responsible for the machine-shaped version of that call. NovaKnown’s piece on AI liability is useful background because healthcare is about to run straight into that question.

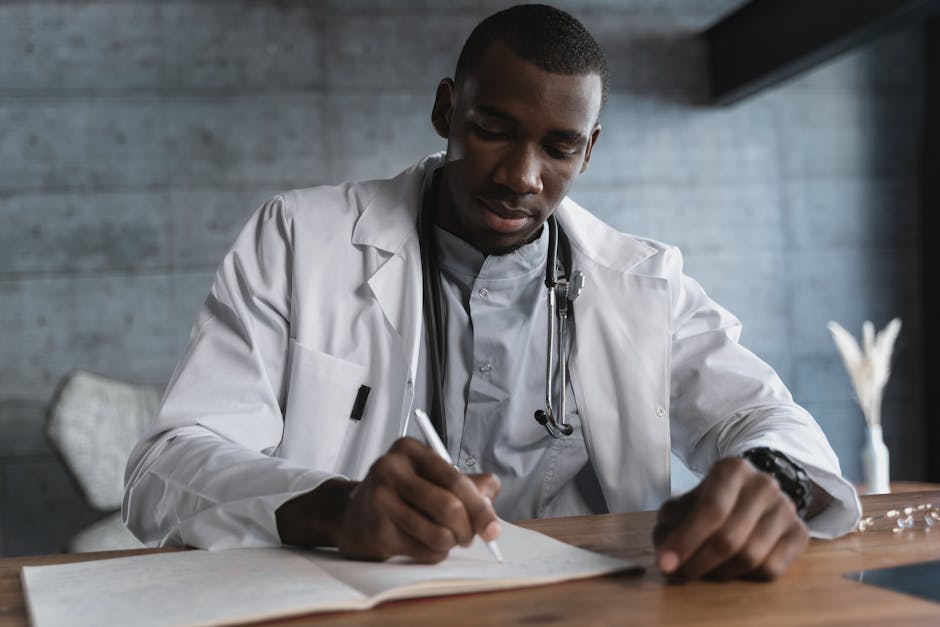

Why AI in healthcare raises the bar for clinicians

The clinicians most at risk are probably not the ones people think.

A good doctor does more than recall facts. They notice what does not fit, weigh messy tradeoffs, know when the textbook case is misleading, and can explain why a likely diagnosis is still uncertain. AI does not remove the value of that. It makes the absence of that value easier to spot.

That is why AI in healthcare raises the bar. Patients with chatbot help can now test whether a clinician can engage, not just pronounce. They can ask why one diagnosis was ruled out. They can ask about prevalence, side effects, or alternative treatments. They may be wrong a lot. They may also force a more explicit standard of reasoning.

That same pressure is showing up across knowledge work; our earlier piece on AI and unemployment made a similar point in a different domain. AI often does not replace the professional outright. It strips away the shelter around mediocre performance first.

The catch is that clinicians cannot carry this alone. If visits stay short, inboxes stay overloaded, and documentation keeps eating the day, then “collaborate better with informed patients” becomes one more demand piled onto a strained system. The friction is professional, yes. But it is also institutional. Patients are getting a faster interface to medical information at the exact moment the healthcare system has become worse at offering timely human explanation.

Key Takeaways

- The immediate disruption from AI in healthcare is a trust and expectations shift, not mass doctor replacement.

- Verified evidence shows AI is already inside institutions through scribes, coding, claims, and administrative workflows.

- Patients are turning to AI because access is bad: 31-day average waits and extreme specialist delays make unofficial AI triage predictable.

- Human oversight still matters because current medical AI can hallucinate, hide bias, and overstate certainty.

- The clinicians who do best will be the ones who can explain, justify, and collaborate in real time.

Further Reading

- STAT: “A $15 AI test, Project Glasswing, and how Doctronic pilot blindsided Utah medical board.” Recent reporting on an autonomous clinical AI pilot and the regulatory backlash.

- STAT: “Everyone agrees AI scribes are increasing health care costs. No one agrees what to do about it.” Concrete evidence that AI scribes are changing billing and cost incentives, not just note-taking.

- STAT: “What does UnitedHealth Group’s massive AI push mean for patients?” Documents large-scale AI deployment inside a major healthcare payer and operator.

- STAT: “Medical misinformation wins when patients can’t see their doctors.” Key access and workload numbers explaining why patients seek information elsewhere.

- STAT: “AI is exacerbating Americans’ distrust in health care.” Survey-backed reporting on distrust, disclosure, and rapid AI spending in medicine.

The cleanest way to say this is also the least futuristic one: AI is not mainly forcing medicine to choose between humans and machines. It is forcing medicine to explain itself to patients who no longer have to show up in the dark.